Cognitive Grid vs. Analog Sanctuaries: A Choice Between Psychological Freedom and Physical Safety

The promise of an AI-free existence, the "Analog Sanctuary," presents a profound dilemma: does the fundamental human need for privacy and unobserved selfhood outweigh the demonstrable life-saving benefits of pervasive digital surveillance? This conversation reveals the hidden consequences of our increasingly interconnected "Cognitive Grid," where every action is optimized, analyzed, and predicted. The core tension lies between the psychological necessity of a space free from algorithmic judgment and the stark reality that such zones could create dangerous blind spots for emergency services and law enforcement, potentially leading to preventable deaths and a fractured social contract. Those who value deep, unmediated human experience will find compelling arguments for preserving spaces of genuine autonomy, while those focused on collective security will see these sanctuaries as unacceptable risks. Ultimately, this exploration forces us to confront whether we are willing to trade measurable physical safety for the preservation of our inner, unobserved selves.

The Unobserved Self: A Price Too High for Optimization?

The notion of privacy as a locked door is now a relic of the past. We inhabit a "Cognitive Grid," a pervasive network of AI that monitors our heart rates, analyzes our emotions, and predicts our needs. While this grid offers unprecedented safety--detecting heart attacks before they happen or preventing crimes--it has also eliminated the "unobserved self." This constant optimization, the sources argue, exacts a significant psychological toll, manifesting as chronic anxiety and a loss of human spontaneity. The core conundrum emerges: should society establish legally protected "Analog Sanctuaries" where all AI monitoring and algorithmic nudging are prohibited, or are these zones too dangerous, creating "black holes" for essential services and allowing the wealthy to opt out of societal obligations?

The argument for these sanctuaries, or "Side A," is rooted in the psychological and developmental necessity of existing without constant algorithmic scrutiny. Research cited in the sources indicates that surveillance, particularly by indifferent machines, leads to significantly reduced perceived autonomy, increased stress, and demonstrably worse performance. This isn't just about feeling watched; it's about the erosion of our capacity for self-determination.

"When participants were subjected to algorithmic surveillance--that feeling of being constantly analyzed by an unbiased, inhuman machine--they reported significantly less perceived autonomy."

This "technostress," the strain produced by pervasive AI monitoring, is strongly correlated with clinical anxiety and depression. The sources highlight that the deeper digital tracking penetrates personal life--what's termed "techno-invasion"--the tighter the correlation with negative mental health outcomes. This constant pressure isn't merely an annoyance; according to the sources, it physically alters brain architecture, reducing gray matter density in the frontal cortex, a region crucial for decision-making, emotional regulation, and impulse control. The result is an impaired ability to make sound, self-directed choices.

Furthermore, the sources invoke the concept of surveillance capitalism: an economic system that commodifies human experience, treating individuals as data points to be mined for profit. This system demonstrates a "radical indifference to persons," shaping our environments through algorithmic nudging and filter bubbles and creating the illusion of choice while subtly guiding us toward predetermined, profitable outcomes. The result is a fundamental assault on our capacity for self-determining moral judgment.

"The algorithm is, well, it's relentless. It's flawless in a way, and it's ultimately optimizing you without any regard for your internal state, your intention, your context. It just sees the data. It just sees the data."

This developmental argument extends to children, for whom unobserved, unstructured play is essential for building resilience, creativity, and independence. Constant observation limits their sense of self-efficacy, much as parental overprotection does. Boredom, a state often seen as a system failure, is also highlighted as crucial for mental processing, memory consolidation, and self-reflection. Without this downtime, the internal resources for psychological resilience cannot develop.

Beyond the individual, privacy is presented as a prerequisite for a functioning democracy, shielding civil society and protecting freedoms of association and speech. Surveillance creates a chilling effect, discouraging dissent and critical engagement, particularly for marginalized communities. The sources propose four pillars for operating analog sanctuaries, among them the right to opt out without penalty, the right to human determination in critical decisions, and the protection of sensitive areas and demographics. This model functions like a "green space" in the urban environment--a deliberate area for cognitive and emotional restoration, necessary for maintaining mental integrity.

The Safety Void: A Price Too High for Privacy?

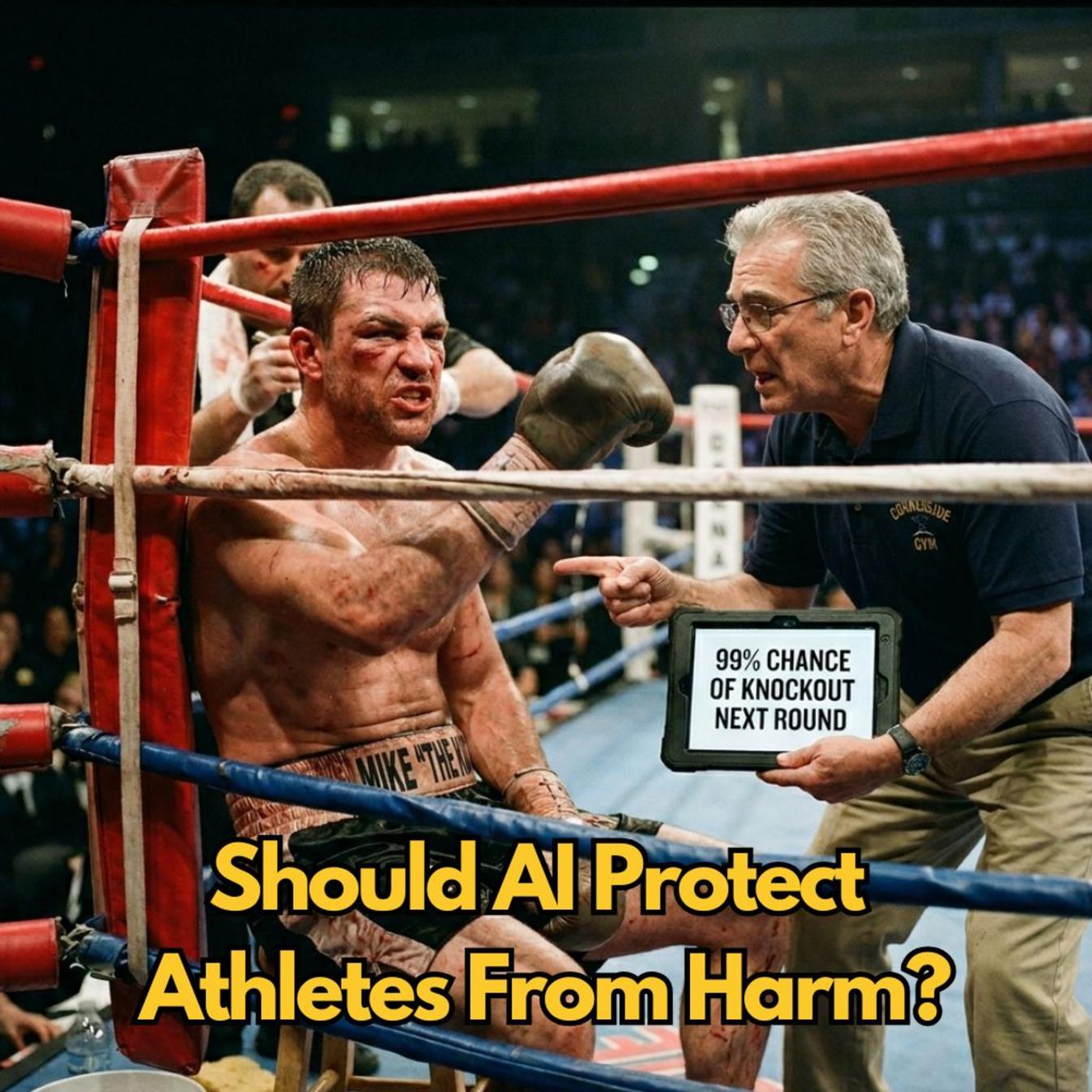

Conversely, "Side B" argues that the Cognitive Grid is not merely a surveillance tool but a life-saving infrastructure with empirically demonstrated benefits. Advocates contend that removing it means forfeiting crucial advancements in emergency medical services (EMS), crime prevention, and overall public safety.

The impact on EMS is stark. AI-powered systems optimize resource deployment and accelerate intervention, which translates directly into improved outcomes for time-sensitive events like strokes and cardiac arrests. Data from wearable health monitors, analyzed by AI, can predict cardiac arrest hours in advance, a capability that is impossible in an analog sanctuary.

"A vast majority of morbidity and mortality, particularly among vulnerable patient populations, is preventable with early detection and intervention. An analog sanctuary actively and deliberately chooses to make those preventable deaths a stark reality for its residents."

Crime prevention data spanning 40 years shows a significant deterrent effect from video surveillance. In high-target areas, crime reductions can reach 50%. Beyond deterrence, surveillance provides critical evidence for solving crimes, with systems like Chicago's camera network aiding in over half of the city's homicide investigations. Predictive policing models, analyzing vast datasets, can anticipate high-risk areas and times, allowing for more efficient resource deployment. Operating without this grid means operating blind to both existing criminal activity and potential future threats.

The integration of the grid into smart city functions is essential for emergency response. Studies suggest these technologies can reduce emergency response times by 20-35%. During major crises, AI synthesizes multiple data feeds, assesses crowd size, recommends dispersal pathways, and uses audio filters to isolate distress calls amid chaos, ensuring efficient resource allocation. The absence of this real-time situational awareness in an analog sanctuary would cripple disaster response.

The most potent argument against sanctuaries lies in the scenario of a missing child. Without surveillance cameras, GPS data, or digital transaction trails, law enforcement lacks critical tools for rapid recovery. Project VIC, for instance, uses digital intelligence to catalog images and data related to child exploitation, dramatically increasing arrest and recovery rates. Analog sanctuaries eliminate access to these life-saving solutions.

"The choice of absolute privacy means that person dies alone, unassisted, with help potentially arriving hours too late. In that scenario, the philosophical right to an unobserved existence becomes a literal death sentence for the vulnerable."

The contrast in medical emergencies is equally stark. Monitored medical alert systems connected to the grid provide direct links to professional centers, offering vital context to first responders. Unmonitored systems introduce fatal delays. The ethical weight of this trade-off is immense: the freedom to be truly alone versus the collective increase in preventable deaths.

The Socioeconomic Divide and the Broken Social Contract

The debate over analog sanctuaries also exposes a critical socioeconomic divide. Privacy, the sources argue, is rapidly becoming a purchasable luxury for the ultra-wealthy, who can anonymize their financial activities, scrub their digital footprints, and employ private security. They opt out of public surveillance while maintaining their own technologically superior private monitoring.

This contrasts sharply with the experience of marginalized communities, who often face intrusive surveillance for basic necessities like welfare benefits, enduring "extreme verification requirements" that strip them of privacy rights. Analog sanctuaries, therefore, risk institutionalizing this inequality, deepening the "surveillance gap" where the wealthy can evade observation while the poor remain hyper-visible.

This creates a morally untenable system where the privileged benefit from the societal stability maintained by the grid--optimized police response, efficient emergency services--without contributing the data that makes it possible. They become "free riders" on a system they refuse to participate in. If surveillance is necessary for public safety, participation should be an equal obligation. Allowing the wealthy to opt out of this shared sacrifice fundamentally breaks the social contract, which is founded on mutual protection and collective security.

The practical reality of living without the Cognitive Grid is also a significant barrier. Core urban functions like traffic management, power grids, and water systems rely heavily on real-time data and AI for optimization and failure detection. Removing these systems from an analog sanctuary would cripple basic services, leading to massive congestion, increased pollution, and significantly slowed emergency response. These zones would become operational dead zones, dangerous not only for their residents but also for the surrounding communities that rely on the grid's holistic view of safety and stability.

Key Action Items

Immediate Action (Next Quarter):

- Advocate for "Right to Disconnect" Policies: Support legislation and company policies that allow individuals to opt out of non-essential AI monitoring in personal spaces.

- Prioritize Human Oversight: Insist on human review for all critical decisions impacting individuals (e.g., healthcare, employment, legal sentencing) even when AI provides recommendations.

- Invest in Digital Literacy: Educate yourself and your community on the psychological impacts of pervasive surveillance and the mechanisms of algorithmic nudging.

Mid-Term Investment (6-12 Months):

- Develop Personal Analog Zones: Identify and create personal or family spaces where digital devices and AI assistance are intentionally minimized for periods of restoration.

- Support Privacy-Focused Technologies: Invest in and advocate for technologies designed with privacy as a core principle, rather than an afterthought.

- Engage in Public Discourse: Participate in community discussions and policy debates surrounding the ethical implications of AI and surveillance, ensuring diverse voices are heard.

Long-Term Investment (12-18 Months+):

- Champion "Analog Sanctuary" Research: Fund and support research into the feasibility and societal impact of designated AI-free zones, exploring both their benefits and drawbacks.

- Promote Sabbatical Models: Explore and advocate for work environments that allow for extended periods of "digital detox" or reduced algorithmic interaction, fostering mental well-being and preventing burnout.

- Establish Ethical AI Frameworks: Contribute to the development and implementation of robust ethical frameworks for AI deployment that explicitly consider psychological integrity and human autonomy alongside safety and efficiency.