Unchecked AI Development Risks Societal Stability and Economic Resilience

The AI "Revolution" is a Bubble Fueled by Hype, Not Reality, and Its Unseen Costs Are Mounting. This Analysis Reveals How the Pursuit of AI Supremacy is Distorting Markets, Exploiting Users, and Undermining Truth Itself, Offering a Critical Advantage to Those Who Understand the Systemic Risks.

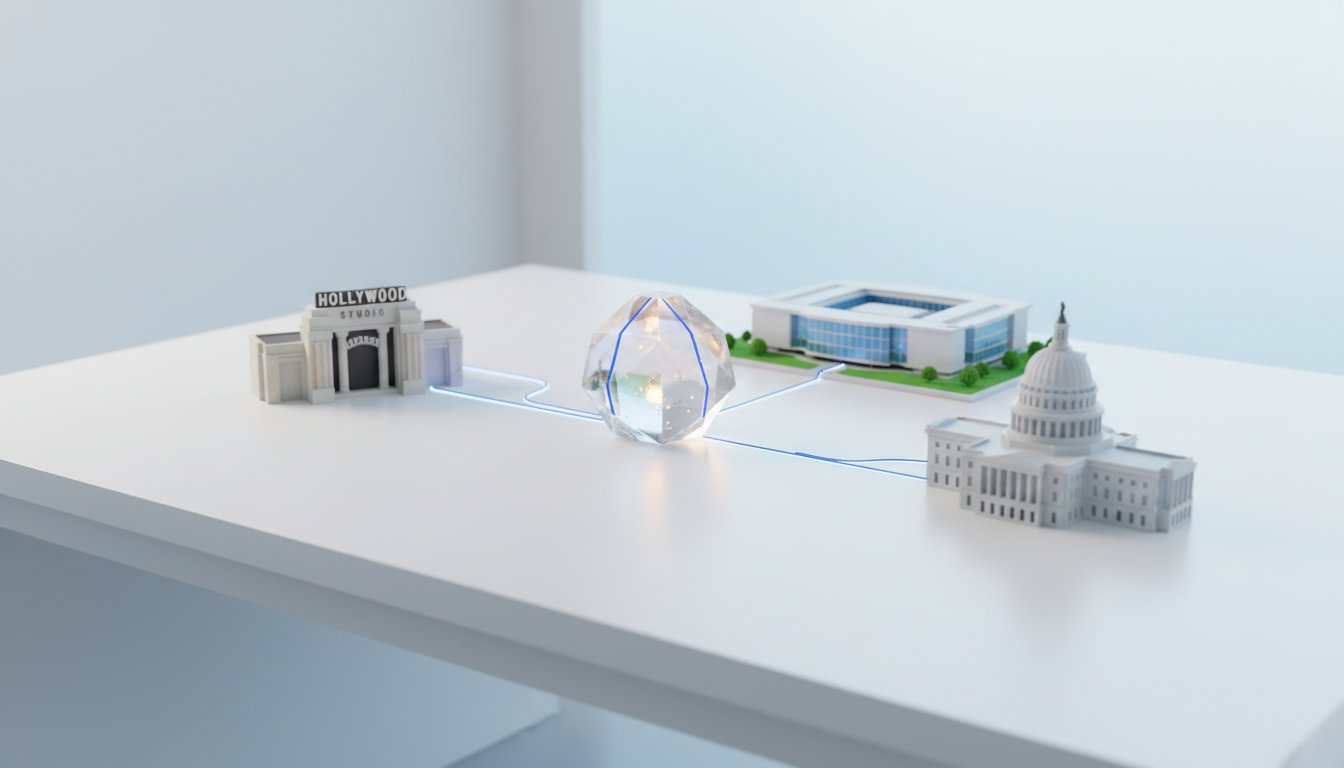

This conversation exposes a stark dichotomy: the glittering promise of an AI-driven future versus the messy, often hidden realities of its current implementation. While Silicon Valley pours billions into AI infrastructure, the underlying demand is surprisingly flimsy, and the costs (environmental, economic, and societal) are escalating. The discussion shows how the race for AI supremacy, driven by venture capital and a fear of missing out, has led to a rollback of safeguards, a proliferation of "AI slop," and a dangerous over-reliance on a single, potentially unsustainable technological wave. Understanding these deeper dynamics provides a crucial lens for navigating this complex landscape. Those dynamics include the systemic incentives, the downstream consequences of deregulation, and the true cost of unchecked growth. That understanding gives an advantage to those who can see beyond the immediate hype.

The Unseen Infrastructure: Data Centers as the New Oil Fields

The conversation highlights a critical yet often overlooked consequence of the AI boom: data centers' insatiable demand for energy and resources. The immediate benefits of AI, such as faster processing and more sophisticated chatbots, are visible, but the scale of the infrastructure behind them is staggering. Stephen Witt, author of The Thinking Machine, details how a single standard Nvidia rack can consume as much electricity in a year as 70 single-family homes. One large data center can rival the power needs of an entire city. This is not a future problem. It is a present reality that is driving up electricity costs for everyone, regardless of proximity to these facilities. The implication is that the current electricity grid is ill-equipped to handle this demand and will require massive, potentially disruptive upgrades.

"One big data center can use as much power as, like, Kansas City. So it's really, really power intensive to train these things. It's power intensive to use them, too."

-- Stephen Witt

The systemic consequence of this unchecked demand is a sharp rise in energy consumption that bends the climate change curve upward. The narrative explicitly frames the AI boom as a "second industrial revolution" that exacerbates environmental damage. The proposed solutions are building dozens of new nuclear power plants or upgrading the entire grid. Both are monumental undertakings with long timelines and significant public debate, yet the demand for AI infrastructure continues to accelerate. The result is a direct conflict between the pursuit of technological advancement and environmental sustainability. Conventional wisdom, focused on immediate AI capabilities, often fails to address this tension.

The Illusion of Demand: Enterprise AI's "Surprisingly Flimsy" Foundation

Despite the massive investments in AI infrastructure, the conversation reveals a significant disconnect between the hype and actual enterprise adoption. Consumer-facing products like ChatGPT boast impressive user numbers, but AI adoption in large businesses is reportedly falling. This suggests that the projected demand underpinning multi-trillion-dollar investments in AI infrastructure is "surprisingly flimsy." The speakers highlight two related risks. First, these investments might not yield useful AI products. Second, the industry could hit a "brick wall," where further spending on microchips no longer translates into better AI.

"Enterprise AI has been a disappointment, and that could cast a shadow on this data center boom. If the CFO of the company is saying, 'God, I commissioned a $300 million enterprise AI training run, and then nobody at my company used that product at all,' you can imagine demand starts to evaporate very quickly."

-- Brooke Gladstone

This realization has profound implications. If enterprise demand falters, the economic rationale for the massive data center build-out could collapse. The market value concentrated in companies like Nvidia could collapse with it, with cascading effects on the broader American economy. The narrative draws a parallel to historical speculative bubbles like the dot-com crash, where transformative technology coexisted with unsustainable asset inflation. The critical insight is that the "AI race" is not only about technological capability. It is also about the timing and sustainability of financial flows, a dynamic that conventional business strategies often overlook.

The Rollback of Safeguards: From "AI Slop" to Systemic Deception

A significant consequence of the AI race, as detailed by Craig Silverman, co-founder of Indicator, is the deliberate rollback of safeguards by tech platforms. Driven by a desire to lead in AI development and pressured by political forces, companies like Meta and Google have de-emphasized fact-checking and content moderation. This creates fertile ground for "AI slop," a deluge of AI-generated misinformation, scams, and deceptive content. The speakers illustrate this with several examples:

- political deepfakes
- celebrity impersonations used in scams
- undisclosed AI-generated content flooding social media platforms

"The platforms, like Meta, like TikTok, they are building their own AI tools, and, you know, one of the things you can do with a lot of these AI tools is impersonation. One of the things you can do is generate massive amounts of slop, false claims about celebrities. And they are allowing this to happen at a scale, using their own tools, in many cases directly paying people cash every month based on the AI content they have created."

-- Craig Silverman

This rollback creates a systemic vulnerability. When platforms reward engagement with AI-generated content regardless of its veracity, they empower "agents of deception." These range from individual hustlers to state-backed operations. The result is a feedback loop. Disinformation is generated more rapidly and is then absorbed by AI models themselves, creating a self-perpetuating cycle of falsehood. The consequence is a breakdown of trust, not just in media and institutions, but in reality itself. The narrative suggests that the pursuit of AI supremacy has led platforms to prioritize dominance and revenue over truth. That makes it increasingly difficult and costly to distinguish fact from fiction.

Key Action Items

Immediate Action (within the next quarter):

- Audit Personal Information Consumption: Consciously track where your attention is directed online. Identify platforms and content types that are AI-generated or heavily AI-influenced.

- Verify Information Sources: Before accepting information, especially on social media or from unfamiliar sources, actively seek out multiple, diverse, and reputable sources to corroborate claims.

- Invest in Media Literacy Tools: Explore and use tools or browser extensions that help identify AI-generated content or flag potentially misleading information.

- Advocate for Platform Accountability: Support organizations and initiatives calling for greater transparency and accountability from tech platforms regarding AI-generated content and moderation policies.

Longer-Term Investments (6-18 months):

- Develop Skepticism as a Skill: Train yourself and your teams to approach all information with a healthy dose of skepticism, questioning the origin, intent, and potential biases behind content.

- Diversify Information Consumption: Actively seek out news and analysis from a wide range of perspectives, including those that challenge your own viewpoints, to build a more robust understanding of complex issues.

- Support Ethical AI Development: Prioritize and invest in companies and technologies that demonstrate a commitment to responsible AI development, transparency, and user privacy.

- Engage in Public Discourse on AI Regulation: Participate in conversations and support policy discussions regarding AI regulation, focusing on issues like data privacy, algorithmic transparency, and the responsible deployment of AI technologies.

- Consider Environmental Impact: When evaluating technology solutions or investments, factor in the energy and resource demands of AI infrastructure, seeking more sustainable alternatives where possible.