AI's Leverage Paradox: Unprecedented Gains Versus Hidden Verification Risks

The core thesis of this conversation is that AI has shifted the economic landscape, giving individuals and startups the leverage of a swarm of employees at minimal cost. That surplus of capability has hidden consequences. Automating tasks at scale introduces new complexities, particularly around verification and systemic risk, that conventional wisdom and current economic models are ill-equipped to handle. Anyone aiming to build, innovate, or simply navigate a career in the coming years will benefit from understanding this dynamic: immediate productivity gains from AI must be weighed against long-term liabilities and the changing nature of human value.

The Unseen Costs of AI's Productivity Surge

The advent of advanced AI agents has dramatically lowered the cost of automation, effectively granting individuals the leverage of a team at a fraction of the price. This shift, while exhilarating, introduces a critical economic tension: the rapid decline in automation costs versus the slower, more complex decline in verification costs. Christian Catalini, author of "Some Simple Economics of AGI," and Eddy Lazzarin, CTO of a16z crypto, unpack this dynamic, revealing how the very efficiency AI offers can create downstream problems that are difficult to foresee and even harder to manage.

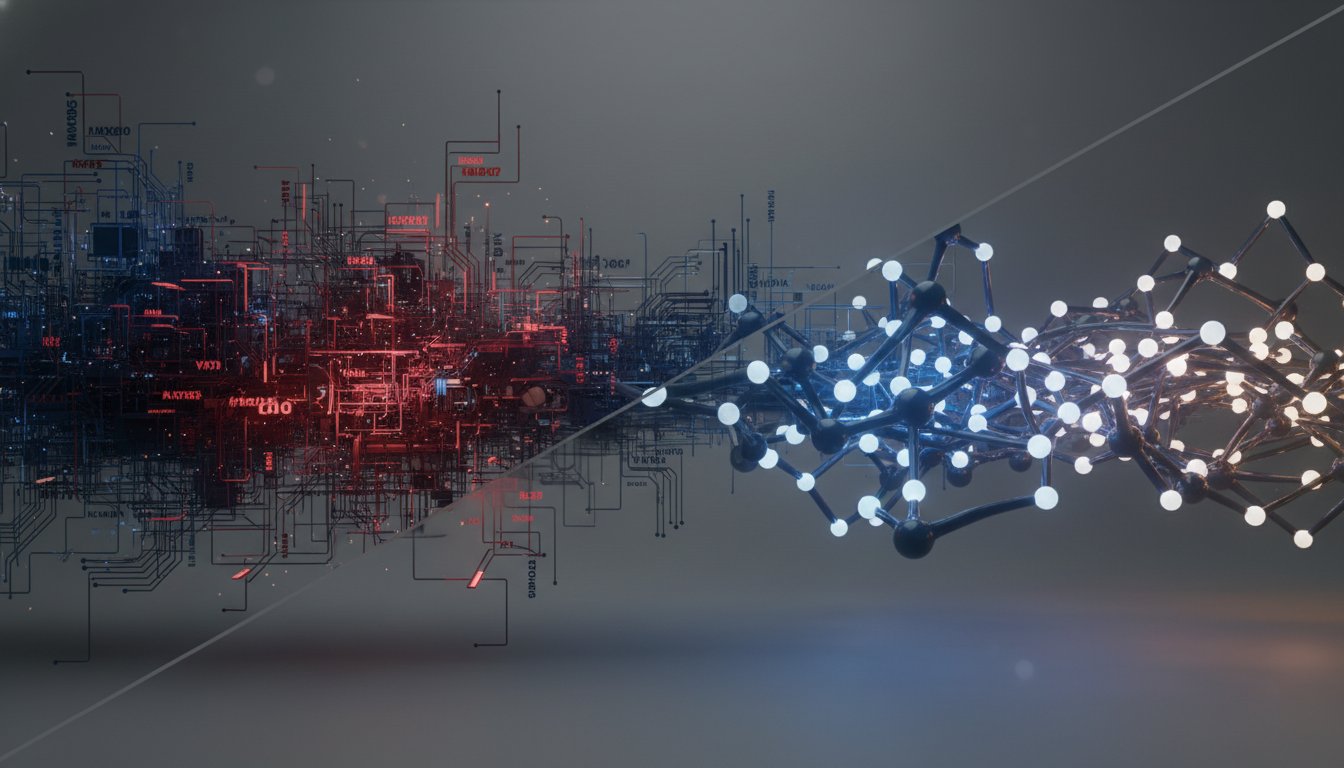

The immediate temptation is to deploy AI-generated work rapidly, driven by the allure of increased productivity and shortened release cycles. As Lazzarin notes, "companies today say that x percent of their code is now generated by machines that's amazing and in the sense of growing productivity." This is particularly true for tasks that were previously the "grunt work" of many professions. However, this speed comes with a significant caveat. "It's often flawed in ways that are subtle and that may not have been fully appreciated before," he adds. This is where the concept of "verification" becomes paramount. While AI excels at replicating processes based on existing data, it struggles with what Catalini terms "unknown unknowns"--situations that are not measurable and lie beyond the scope of its training data.

This gap between automation and verification creates fertile ground for systemic risk. When AI generates code or content at an unprecedented scale, it's "humanly impossible to review all of that code," so technical debt accumulates and subtle errors slip through. Catalini explains this as a rational, albeit risky, decision: "it's essentially perfectly rational to ship code or to ship writings or any sort of ai generated work that will contain some potential error because you can't verify the full thing." The result is a "Trojan horse" effect, where immediate gains mask future liabilities. As the economy scales AI deployment, it risks accumulating "some degree of systemic risk."
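One way to make the "rational to ship" argument concrete is an expected-cost comparison. The sketch below is illustrative only: the function names, parameters, and every number are assumptions chosen for the example, not figures from the conversation or from Catalini's paper.

```python
def should_verify(error_prob: float, error_cost: float, verify_cost: float,
                  catch_rate: float = 1.0) -> bool:
    """Return True when verification is expected to save more than it costs.

    Hypothetical model: verifying costs `verify_cost` and removes a fraction
    `catch_rate` of the expected loss `error_prob * error_cost`.
    """
    expected_loss_avoided = catch_rate * error_prob * error_cost
    return expected_loss_avoided > verify_cost


def unverified_backlog(generated_per_week: float, reviewed_per_week: float,
                       weeks: int) -> float:
    """Units of work shipped without review after `weeks`,
    assuming generation outpaces a fixed review capacity."""
    gap = max(generated_per_week - reviewed_per_week, 0.0)
    return gap * weeks


# Low-stakes change: a 2% chance of a 1,000-unit error does not justify
# a 50-unit review, so shipping unverified is the "rational" choice.
low_stakes = should_verify(error_prob=0.02, error_cost=1_000, verify_cost=50)

# Same error rate, ten times the damage: review now pays for itself.
high_stakes = should_verify(error_prob=0.02, error_cost=10_000, verify_cost=50)

# Each unreviewed item may be individually rational, yet the pile still grows.
backlog = unverified_backlog(generated_per_week=500, reviewed_per_week=100, weeks=10)
```

Under these assumed numbers, each individual decision to skip review looks defensible, while the unreviewed backlog grows linearly with time. That is one simple reading of how locally rational choices could add up to the systemic risk described above.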

The implications for the labor market are profound. The traditional model of apprenticeship, where junior roles involved learning through grunt work and close supervision, is becoming obsolete. AI can accelerate mastery for individuals, but it also means that the "junior verifiers" of the past are not being trained. This leads to a potential "hollowing out" of expertise, where the class of individuals capable of deep, nuanced verification shrinks. As Catalini puts it, "we're not training our future class of verifiers." This dynamic, coupled with the "codifier's curse"--where an expert's decisions are used to train AI that then displaces similar experts--points to a future where human value must shift towards higher-level strategic thinking and complex problem-solving that AI cannot yet replicate.

The Shifting Landscape of Human Value: From Automation to Augmentation

The critical challenge, then, is to navigate from this potentially "hollow economy" towards an "augmented economy." This transition hinges on recognizing that human value is no longer primarily in the execution of automatable tasks, but in the higher-order skills of direction, verification, and meaning-making. Lazzarin highlights the shift for engineers: "the balance of work for a great engineer is shifting quickly... the amount of attention paid to writing the code... is smaller and vanishingly small for some... and a huge part of the work is now verification."

This "verification" encompasses not just checking code for bugs, but a broader application of human judgment, experience, and intuition to guide and validate AI outputs. It involves applying "good taste" and making decisions where probabilities cannot be assigned: situations of "Knightian uncertainty," or what Donald Rumsfeld famously termed "unknown unknowns." This is where human expertise remains indispensable. The paper also posits that as AI becomes more capable, a single individual's leverage within their profession becomes massive, enabling ambitious projects that previously required large teams.

The concept of the "director" emerges as a crucial role in this new paradigm. Entrepreneurs, by their nature, are directors--they "see some future they imagine some path for getting there." In an AI-augmented world, this directorial role involves steering swarms of AI agents, course-correcting when the system drifts, and ensuring alignment with intended goals. This is the essence of the "AI sandwich" model, where a human director at the top guides AI agents in the middle, supported by top verifiers at the bottom who can critically assess AI outputs.
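The "AI sandwich" can be read as a simple control loop: a director breaks a goal into tasks, agents attempt each task, and a verifier accepts the result or sends it back. The following is a minimal sketch under that reading. The names `run_sandwich`, `Draft`, and the toy functions are hypothetical stand-ins, not an implementation described in the conversation.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Draft:
    task: str
    output: str


def run_sandwich(goal: str,
                 plan: Callable[[str], List[str]],
                 agent: Callable[[str], str],
                 verify: Callable[[Draft], bool],
                 max_retries: int = 2) -> Tuple[List[Draft], List[str]]:
    """Director plans, agents draft, verifier gates.

    Returns (accepted drafts, tasks escalated back to the human director).
    """
    accepted: List[Draft] = []
    escalated: List[str] = []
    for task in plan(goal):                   # top layer: human direction
        for _ in range(max_retries + 1):
            draft = Draft(task, agent(task))  # middle layer: AI agent
            if verify(draft):                 # bottom layer: verification
                accepted.append(draft)
                break
        else:
            # No attempt passed verification: hand the task back to a human.
            escalated.append(task)
    return accepted, escalated


# Toy stand-ins so the sketch runs end to end (all hypothetical).
def toy_plan(goal: str) -> List[str]:
    return [f"{goal}: a", f"{goal}: b"]


def toy_agent(task: str) -> str:
    return task.upper()


def toy_verify(draft: Draft) -> bool:
    return draft.output.endswith("A")


accepted, escalated = run_sandwich("ship", toy_plan, toy_agent, toy_verify)
```

The design choice worth noting is the escalation list: work that fails verification is not silently shipped, it returns to the director, which is where the course-correcting described above would happen.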

Furthermore, the conversation emphasizes that human ingenuity will be vital in areas that are inherently non-measurable or involve social coordination. "Meaning makers," individuals adept at understanding societal trends, coordinating collective action, and imbuing activities with social significance, will play a crucial role. While AI can automate many tasks, it cannot replicate the human capacity for creating consensus around subjective values or artistic expression.

"The apprenticeship might be dead but the real work is beginning."

-- Christian Catalini

The interplay between AI and crypto is presented as a vital component of this augmented future. Crypto primitives, with their deterministic nature and ability to establish verifiable provenance and trust, can provide the underlying infrastructure for a more reliable and transparent digital economy. As Catalini notes, "blockchain networks end up being this very attractive thing because they're credibly neutral... all the individual agents and actors in the system... can scrutinize them for their neutrality." This is particularly important as AI agents become more prevalent, necessitating robust identity, reputation, and payment systems that crypto can provide.

The path forward, while potentially painful for those whose roles are heavily automated, is ultimately optimistic. The augmented economy envisions a future where AI accelerates mastery, enables individuals to pursue their true aptitudes, and makes previously scarce resources like education and healthcare more accessible. The key lies in proactively investing in verification tooling, fostering human-AI collaboration, and understanding that the true advantage in this new era will come not from automating tasks, but from mastering the art of directing and verifying intelligent systems.

Key Action Items

- For Young Professionals & Students:
  - Immediate Action: Actively use AI tools to simulate professional environments and accelerate learning. Focus on developing "director" skills--guiding and steering AI agents--rather than just executing tasks.
  - Longer-Term Investment: Cultivate deep domain expertise in areas that require nuanced judgment, creativity, and complex problem-solving, which are less susceptible to immediate automation.

- For Mid-Career Professionals:
  - Immediate Action: Re-evaluate your current role to identify tasks that can be automated by AI, and proactively seek to elevate your responsibilities towards verification, strategic direction, or creative problem-solving. Embrace the "AI sandwich" model by learning to manage AI agents effectively.
  - This pays off in 6-12 months: Invest time in understanding the limitations of AI and developing robust verification processes for AI-generated outputs. This discomfort now builds a durable skill set.

- For Founders & Leaders:
  - Immediate Action: Prioritize building robust verification infrastructure and tooling for AI-generated outputs. Consider the long-term liabilities and potential systemic risks associated with rapid AI deployment.
  - This pays off in 12-18 months: Explore how crypto primitives can enhance trust, provenance, and coordination within your AI-driven workflows, creating a "verification grade network effect."
  - Ongoing Investment: Foster a culture of continuous learning and adaptation, encouraging employees to move up the value chain and develop directorial capabilities.

- For All:
  - Immediate Action: Recognize that the unique value proposition of humans is shifting from task execution to complex verification, strategic direction, and meaning-making.
  - This pays off over years: Develop a "meta skill" of zooming in and out across entire projects or enterprises, identifying where attention and resources are most critical, and adapting strategies based on evolving AI capabilities.