The Python landscape in 2025 is a testament to evolving paradigms, marked by the impending obsolescence of the GIL, the refinement of packaging tools, and the prolific growth of type checkers. This conversation, featuring a distinguished panel of Python core developers and community leaders--Barry Warsaw, Brett Cannon, Gregory Kapfhammer, Jodie Burchell, Reuven Lerner, and Thomas Wouters--delves into the nuanced realities behind these trends. It reveals that while the technology itself is transformative, its sustainable integration and the community's support structures face significant challenges. This analysis is crucial for developers, project managers, and community organizers seeking to navigate the future of Python development, offering insights into where true competitive advantage lies beyond the immediate hype.

The Unfolding AI Narrative: Beyond the Hype Cycle

The discourse around Artificial Intelligence, particularly Large Language Models (LLMs), dominated 2025, yet the year also marked a critical inflection point where the initial unchecked optimism began to confront reality. Jodie Burchell, with her background in NLP, observed a growing disillusionment as the promised exponential progress toward Artificial General Intelligence (AGI) faltered. The anticipated leap with GPT-5, for instance, proved to be a letdown, signaling that the scaling laws driving earlier advancements were not infinite. This suggests that the immense valuations in the AI space might be built on an unsustainable premise, potentially leading to an economic correction akin to the dot-com bubble.

The immediate benefit of LLMs is undeniable; they offer unprecedented capabilities for language tasks and code generation, acting as powerful tools when wielded effectively. However, as Thomas Wouters noted, the business models supporting these advancements are precarious. The sheer cost of development and the disconnect between investor promises and sustainable revenue streams create a palpable risk of a market correction. This doesn't negate the technology's inherent value--even current models far surpass previous autocomplete or Stack Overflow capabilities--but it highlights the potential for significant economic fallout.

"The technology even if it doesn't get any better even if we stay with what we have today I still think this is like one of the most amazing technologies I've ever seen it's not a god it's not a panacea but it's like a chainsaw that if you know how to use it it's really effective but in the hands of amateurs you can really get hurt."

-- Thomas Wouters

The consequence of this AI hype is a bifurcation: while some AI-driven businesses may collapse, the underlying technology, particularly agentic coding tools and advanced LLMs, is likely here to stay. The challenge for developers is to learn to use these tools pragmatically, understanding their limitations and specific use cases. Gregory Kapfhammer's mention of the "10k developer study" further grounds this discussion, revealing that while AI tools boost productivity, they often generate code that requires more refactoring and debugging, especially in complex or legacy systems. This implies that the true advantage lies not in blindly adopting AI, but in understanding its nuanced application, favoring greenfield projects, smaller codebases, and popular languages where training data is abundant. The immediate productivity gains must be weighed against the downstream costs of managing AI-generated code, a trade-off that conventional wisdom often overlooks.

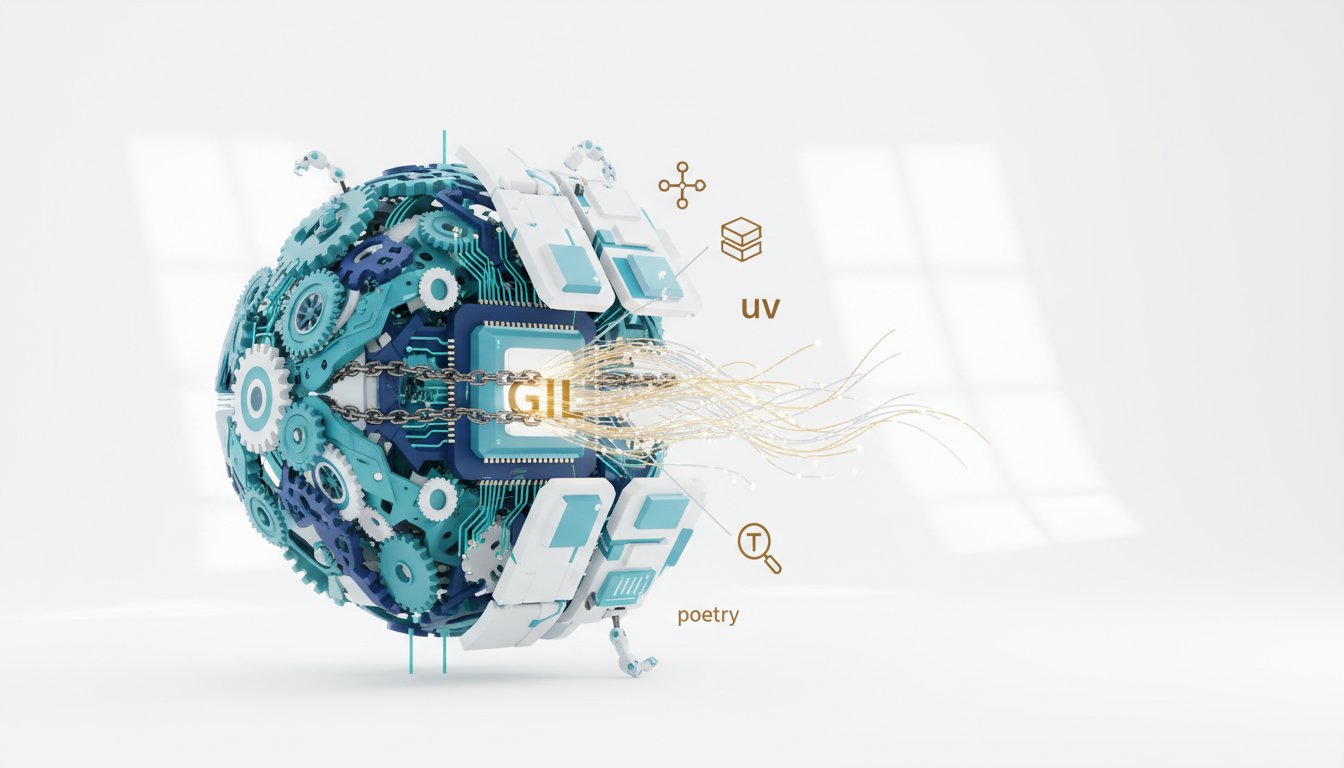

The Quiet Revolution: Packaging and Tooling as an Enabler

While AI captured headlines, a more foundational shift was occurring in Python's tooling and packaging ecosystem. Brett Cannon highlighted a growing comfort with tools that abstract away the complexities of interpreter management and dependency installation, effectively inverting the traditional setup process. Tools like uv, hatch, and pdm are not just managing environments; they are treating Python itself as an implementation detail, allowing developers to focus on their vision rather than the mechanics of execution.

This focus on user experience is a critical downstream effect. For newcomers, the traditional Python installation and environment setup could be a significant barrier, consuming valuable time and potentially leading to frustration. Tools like uv, particularly when combined with PEP 723's inline script metadata, streamline this process to an unprecedented degree. Gregory Kapfhammer shared his experience using uv in introductory classes, reducing setup time from a week to mere minutes. This immediate payoff--getting students productive faster--is a direct consequence of abstracting away the "magic" of Python's underlying infrastructure.

"The distance between vision of what i wanted and working code just really narrowed and i think that that as we are starting to think about tools and environments and how to bootstrap all this stuff we're also now taking all that stuff away because people honestly don't care."

-- Brett Cannon

However, this abstraction introduces a potential hidden cost: the "magic" can obscure fundamental concepts, making debugging and deeper understanding more challenging when things inevitably go wrong. The debate around how much to abstract versus how much to teach is a classic systems problem. While tools like uv have proven remarkably stable and have learned from past issues with package management, the risk of a single tool's disappearance or a fundamental shift in its underlying technology remains. This suggests that while the immediate advantage is speed and ease of use, the longer-term investment lies in ensuring the robustness and understandability of these foundational tools, perhaps through standardization and broader adoption of core components like the Python launcher.

The GIL's Demise: A Performance Paradigm Shift with Community Hurdles

The most significant technical transformation on the horizon for Python is the advent of free-threaded Python, effectively signaling the end of the Global Interpreter Lock (GIL) as a pervasive constraint. Thomas Wouters detailed the progress, noting that for Python 3.14, free-threading is officially supported, with performance impacts ranging from negligible to a slight slowdown depending on hardware and compiler. The immediate benefit is clear: the potential for true parallel execution on multi-core processors, unlocking significant performance gains for highly parallelizable problems. Experimental work has already shown speedups of 10x or more in certain scenarios.
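To make the distinction between a free-threaded build and a conventional one concrete, here is a minimal sketch of how a program might inspect its own interpreter. It assumes CPython 3.13 or newer for sys._is_gil_enabled (a private, underscore-prefixed function) and falls back to reporting the GIL as enabled on older versions; the function name describe_gil and the dictionary keys are illustrative.

```python
import sys
import sysconfig


def describe_gil() -> dict[str, bool]:
    """Report whether this interpreter was built for free-threading
    and whether the GIL is active right now."""
    # Py_GIL_DISABLED is 1 on free-threaded builds, 0 or None otherwise.
    free_threaded_build = bool(sysconfig.get_config_var("Py_GIL_DISABLED"))

    # sys._is_gil_enabled() exists on CPython 3.13+; older versions always
    # run with the GIL, so default to True when the function is missing.
    check = getattr(sys, "_is_gil_enabled", None)
    gil_enabled = check() if check is not None else True

    return {
        "free_threaded_build": free_threaded_build,
        "gil_enabled": gil_enabled,
    }


info = describe_gil()
print(info)
```

The two values can differ: a free-threaded build may still re-enable the GIL at runtime, for example when it loads an extension module that has not declared free-threading support.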

The primary challenge, however, is not technological but communal. The ecosystem's reliance on the GIL for internal thread safety has meant that many third-party extension modules and even core Python code require updates to fully embrace free-threading. This necessitates a massive educational and outreach effort to guide developers and package maintainers through the necessary API changes and the mindset shift required for writing thread-safe code. The GIL never guaranteed thread safety for Python code itself, but it created a psychological buffer. Removing it means developers must now actively consider thread safety, a task that many have avoided for years.

"The gil never gave you thread safety the gil gave cpython's internals thread safety it never really affected python code and it very rarely affected thread safety in extension modules as well so they already had to take care of of making sure that the global interpreter lock couldn't be released by something that they ended up calling indirectly."

-- Thomas Wouters
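The point that the GIL never made Python code thread-safe can be illustrated with a shared counter. An unguarded self._value += 1 is a read-modify-write sequence that threads can interleave with or without the GIL, so the sketch below protects it with an explicit lock. The class SafeCounter and the helper hammer are hypothetical names chosen for this example, not part of any library.

```python
import threading


class SafeCounter:
    """Counter whose read-modify-write step is guarded by a lock."""

    def __init__(self) -> None:
        self._value = 0
        self._lock = threading.Lock()

    def increment(self) -> None:
        # Without the lock, two threads could read the same old value
        # and one of the increments would be lost.
        with self._lock:
            self._value += 1

    @property
    def value(self) -> int:
        with self._lock:
            return self._value


def hammer(counter: SafeCounter, threads: int = 8, per_thread: int = 10_000) -> int:
    """Increment the counter from several threads and return the final value."""

    def work() -> None:
        for _ in range(per_thread):
            counter.increment()

    workers = [threading.Thread(target=work) for _ in range(threads)]
    for worker in workers:
        worker.start()
    for worker in workers:
        worker.join()
    return counter.value


total = hammer(SafeCounter())
print(total)  # 8 threads * 10_000 increments = 80000
```

The same code is correct on both GIL and free-threaded builds, which is the practical goal: explicit synchronization rather than reliance on interpreter internals.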

The consequence of this transition will be a performance leap for many applications, but the delayed payoff comes from the effort required for ecosystem-wide adoption. While libraries like concurrent.futures offer a unified interface, the decision of when to use threads versus sub-interpreters or multiprocessing still requires careful consideration. The ideal future, as suggested, involves higher-level abstractions that intelligently leverage these concurrency models, allowing developers to focus on their application logic rather than the intricacies of thread management. The immediate pain of updating code and learning new patterns will eventually yield significant performance advantages, creating a more scalable and efficient Python for a new generation of applications.
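The unified interface mentioned above can be sketched as follows: the workload is written once, and the concurrency model is chosen by passing a different executor class. The function names count_primes and run_parallel and the toy workload are assumptions made for illustration. Only ThreadPoolExecutor is exercised here; ProcessPoolExecutor (and, on Python 3.14, InterpreterPoolExecutor) could be passed instead, subject to their own constraints such as picklable arguments and a main-module guard.

```python
from concurrent.futures import Executor, ThreadPoolExecutor


def count_primes(limit: int) -> int:
    """CPU-bound toy workload: count primes below `limit` by trial division."""
    count = 0
    for n in range(2, limit):
        if all(n % d for d in range(2, int(n ** 0.5) + 1)):
            count += 1
    return count


def run_parallel(
    executor_cls: type[Executor], limits: list[int], workers: int = 4
) -> list[int]:
    """Run count_primes over `limits` using whichever executor is supplied."""
    with executor_cls(max_workers=workers) as pool:
        # map() preserves input order regardless of completion order.
        return list(pool.map(count_primes, limits))


results = run_parallel(ThreadPoolExecutor, [10, 20, 1_000])
print(results)
```

On a GIL build the threaded version of a CPU-bound workload like this gains little, while on a free-threaded build the same call can use multiple cores; keeping the executor as a parameter makes it cheap to measure both.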

Sustaining the Ecosystem: The Funding Conundrum

Underpinning all these technological advancements is the critical, yet often overlooked, issue of funding for Python's open-source infrastructure. Reuven Lerner articulated a growing concern: Python's success has outpaced its funding mechanisms. The Python Software Foundation (PSF), responsible for supporting conferences, workshops, and core infrastructure like PyPI, has faced significant financial strain, even pausing grant programs. This situation is exacerbated by a low level of community engagement in funding, with an infinitesimally small percentage of Python users actively contributing financially.

The consequence of this underfunding is a direct threat to the sustainability of the entire ecosystem. Companies that heavily rely on Python often fail to provide commensurate financial support, viewing their contributions to frameworks like PyTorch or TensorFlow as sufficient, rather than direct PSF sponsorship. This creates a "tragedy of the commons," where the collective benefit derived from Python's open-source nature is not matched by collective financial responsibility. The decline in conference sponsorship post-pandemic and the lack of engagement from newer entrants further compound the problem.

"We need more corporate sponsorships more than we need like i mean obviously a million people giving us a couple of bucks giving the psf let's be clear i'm not on the board anymore giving the psf a couple of bucks would be fantastic but i think the big players in the big where all the ai money is for instance having done basically no sponsorship of the psf is mind boggling."

-- Reuven Lerner

The immediate impact is the scaling back of essential services and support. The long-term consequence could be a stagnation or even decline in Python's development and infrastructure maintenance. While individual contributions and corporate sponsorships are vital, the current model is insufficient. The insight here is that the perceived "free" nature of open-source software masks the significant investment required to maintain it. Discovering alternative funding models, akin to NumFOCUS's larger budget, and fostering a culture where companies that profit from Python actively contribute to its foundation is paramount. This requires a shift in perspective, recognizing that investing in the PSF is not charity, but a strategic necessity for ensuring the continued vitality of a critical development tool.

- Immediate Action: Companies heavily reliant on Python should review their current sponsorship levels of the PSF and consider increasing contributions; even modest amounts can collectively make a significant impact.

- Longer-Term Investment: Develop and advocate for new, sustainable funding models for open-source projects, potentially through industry consortia or tiered corporate support structures that align with revenue generated from Python usage.

- Community Engagement: Individuals should actively participate in PSF elections and consider becoming members to voice their support and understand the foundation's needs.

- Discomfort for Advantage: Companies should consider that allocating even a small percentage of their R&D budget to open-source foundations like the PSF can secure the long-term health and innovation of the tools they depend on, creating a competitive advantage through ecosystem stability.

- Immediate Action: Developers should explore and adopt modern packaging tools like uv for new projects to streamline setup and dependency management, improving immediate productivity.

- Longer-Term Investment: Invest time in understanding the underlying principles of Python packaging and environment management, even when using high-level tools, to better troubleshoot and contribute to the ecosystem.

- Discomfort for Advantage: Embrace the learning curve associated with free-threaded Python by experimenting with it in non-critical applications now, preparing for future performance gains and contributing to ecosystem readiness.

- Immediate Action: Developers should actively engage with type checkers and language servers such as pyright, pyre-check, and ty to improve code quality and catch errors early.

- Longer-Term Investment: Contribute to the development and standardization of type checking and Language Server Protocol (LSP) tooling to foster interoperability and reduce fragmentation within the tooling ecosystem.

- Discomfort for Advantage: Embrace the complexity of modern concurrency models (asyncio, threading, multiprocessing) by seeking higher-level abstractions and understanding their trade-offs, rather than avoiding them, to build more performant and scalable applications.
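As a small illustration of the type-checker recommendation in the list above, the sketch below shows the kind of mistake static analysis tends to catch before the code runs. The Sponsor class, its fields, and total_pledged are hypothetical names invented for this example; the exact diagnostic wording varies by tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Sponsor:
    """Hypothetical record; annual_usd is None when no amount is pledged."""

    name: str
    annual_usd: Optional[int] = None


def total_pledged(sponsors: list[Sponsor]) -> int:
    """Sum pledged amounts, skipping sponsors without a pledge."""
    # Writing `sum(s.annual_usd for s in sponsors)` without the None check
    # is what a checker such as pyright would typically flag, because
    # Optional[int] values cannot be added safely.
    return sum(s.annual_usd for s in sponsors if s.annual_usd is not None)


sponsors = [Sponsor("Acme", 100), Sponsor("Globex"), Sponsor("Initech", 50)]
print(total_pledged(sponsors))  # 150
```

The annotations cost little to write, and the checker then reports the missing None guard at edit time instead of leaving it to surface as a TypeError in production.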