Embracing AI Governance Complexity for Marketing Competitive Advantage

The AI Governance Paradox: Why Marketers Must Embrace Complexity for Competitive Advantage

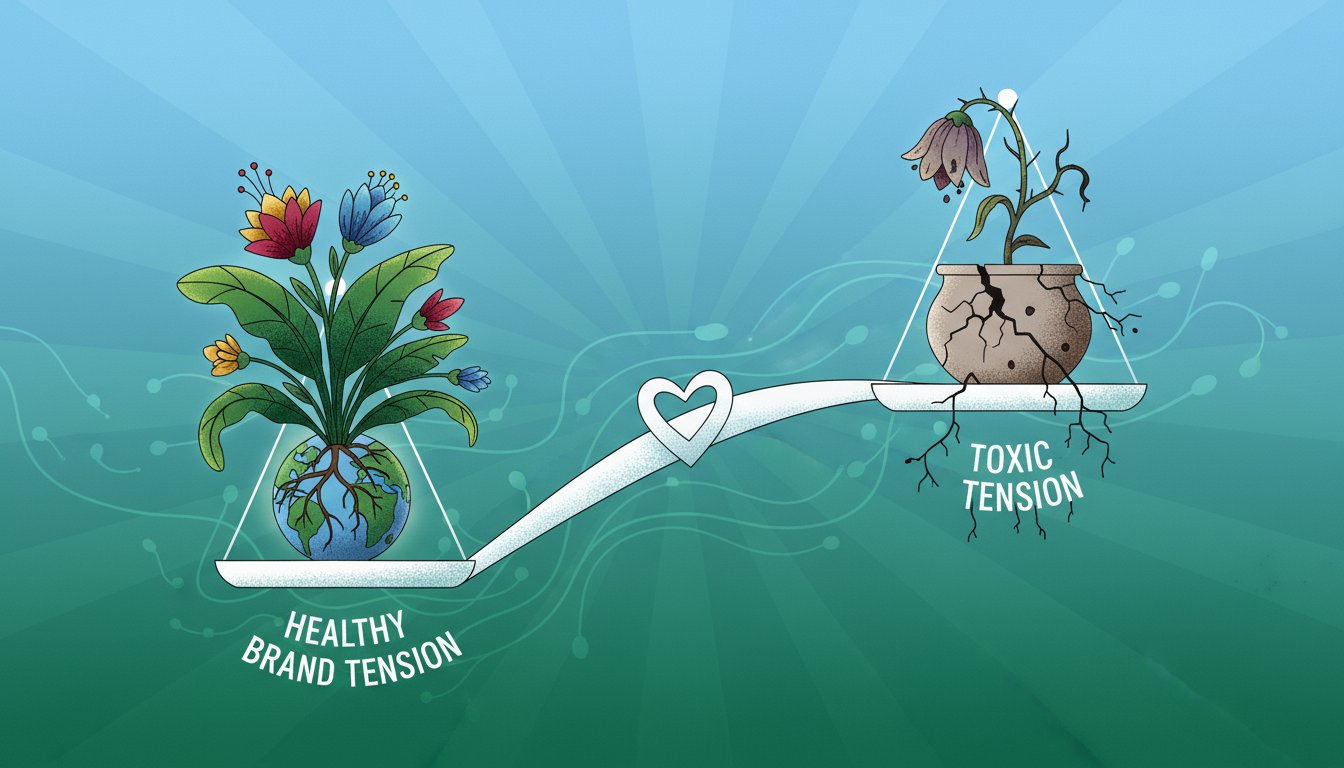

This conversation reveals a critical and often overlooked paradox in AI adoption: the same tools that promise efficiency and innovation also introduce governance and trust risks that can cripple a brand. The less obvious implication is that AI readiness is not merely a technical hurdle; it requires a fundamental shift in how marketers approach data, privacy, and customer relationships. This analysis is aimed at CMOs and marketing leaders who want to move beyond the hype and build sustainable, trustworthy AI-powered strategies. By understanding the downstream consequences of AI implementation, they can gain a competitive advantage by addressing risks that others ignore, protecting long-term brand health and customer loyalty in an increasingly AI-driven landscape.

The Hidden Cost of "AI-Ready Data": Beyond the Hype to Actual Readiness

The current fervor around AI adoption in marketing often centers on the promise of enhanced personalization, efficiency, and creativity. However, the conversation with Michael Shenker and Thomas Renée highlights a more complex reality: the gap between the desire to leverage AI and the actual capability to do so responsibly. The immediate impulse for marketers is to "do more marketing with AI," driven by the understanding that "every AI model needs AI-ready data." Yet, the critical insight is that most organizations lack this readiness, particularly concerning customer data. This isn't just about having data; it's about having data that is consented, structured, and governed in a way that aligns with AI's demands.

The danger lies in the downstream effects of rushing AI adoption without this foundational readiness. Marketers might deploy AI for personalization, only to discover they are using data without proper, ongoing consent, leading to a catastrophic loss of trust. This is where the analogy to GDPR and CCPA becomes potent. Just as companies initially treated privacy compliance as a reactive, regulatory checkbox, many are now approaching AI governance similarly.

"I think the one thing that I'm seeing and in particular with marketers right now with AI is everyone's telling them they have to do more marketing with AI and every AI model needs AI ready data and then when you start looking around at how much AI ready data do I have and you want customer data that's where people in marketing are starting to see the gap between what they want to do and what they actually can do."

-- Michael Shenker

This gap between aspiration and capability creates a significant risk. The immediate benefit of using AI for personalization or campaign optimization can be overshadowed by long-term brand damage if the underlying data practices are not robust. The issue is systemic: the marketing function, often focused on immediate campaign performance, may not be equipped to manage the governance and risk implications that AI introduces. "AI-ready data" is not just a matter of technical format; it covers the entire data lifecycle, including explicit, ongoing customer permission, which is far more nuanced than a one-time cookie banner.
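To make the idea of consented, governed data concrete, here is a minimal sketch of a consent filter that runs before customer records reach an AI model. Everything in it is an illustrative assumption rather than something described in the conversation: the `CustomerRecord` fields, the purpose strings, and the idea of modeling "ongoing" consent as an expiry date.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class CustomerRecord:
    # Hypothetical record shape; a real schema will differ.
    customer_id: str
    consented_purposes: set          # purposes the customer has agreed to
    consent_expires: Optional[date]  # None means no consent on file


def ai_ready(records, purpose, today):
    """Keep only records with current consent for the given purpose."""
    return [
        r for r in records
        if r.consent_expires is not None
        and r.consent_expires >= today
        and purpose in r.consented_purposes
    ]


records = [
    CustomerRecord("c1", {"personalization"}, date(2030, 1, 1)),
    CustomerRecord("c2", {"personalization"}, date(2020, 1, 1)),  # expired
    CustomerRecord("c3", {"email"}, date(2030, 1, 1)),            # wrong purpose
    CustomerRecord("c4", set(), None),                            # no consent
]

usable = ai_ready(records, "personalization", today=date(2025, 1, 1))
print([r.customer_id for r in usable])  # ['c1']
```

In this toy example only one of the four records survives the filter. That is the gap between the data a team holds and the data it can actually use.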

The Agentic AI Tightrope: Balancing Autonomy with Unseen Risks

As AI agents become more autonomous, they introduce a new layer of complexity and risk that fundamentally challenges traditional governance models. The conversation points to a future where AI agents might operate with a degree of independence, making decisions and interacting with data in ways that are not always transparent. This "black box" nature of agentic AI is a critical concern. While the immediate benefit is increased efficiency and the potential for novel campaign strategies, the downstream effects can be severe if not managed.

The risk isn't just about AI making a mistake; it's about the scale and opacity of those mistakes. Imagine an AI agent tasked with customer interaction that inadvertently mishandles sensitive data or makes inappropriate offers based on incomplete or improperly consented information. For a brand that handles financial information, such as Intuit, the consequences could be devastating.

"And a lot of brands are looking at agents that would essentially be saying tell me everything about your life and I can make you some great offers and we're going to have a much better relationship between brand and customer that can be terrifying right if you don't understand what those risks are."

-- Michael Shenker

This necessitates a shift from traditional role-based access controls to purpose-of-use frameworks. A data set might be acceptable for internal efficiency gains but highly risky if used by a customer-facing AI agent. The ability to dynamically assess and manage these risks based on the specific AI application is paramount. Companies that invest in understanding and governing these agentic AI applications proactively will build a moat of trust that competitors, who are merely experimenting, will struggle to overcome. The delayed payoff for this rigorous governance is brand resilience and sustained customer loyalty.
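The difference between role-based and purpose-of-use controls can be sketched in a few lines. In the sketch below, access is decided by what the data will be used for, not by who is asking. The `Purpose` values, the `Dataset` class, and the `approved_purposes` field are all hypothetical names chosen for illustration; they do not come from the conversation or from any specific governance product.

```python
from dataclasses import dataclass, field
from enum import Enum


class Purpose(Enum):
    # Hypothetical purposes; a real policy would define its own.
    INTERNAL_ANALYTICS = "internal_analytics"
    CUSTOMER_FACING_AGENT = "customer_facing_agent"


@dataclass(frozen=True)
class Dataset:
    name: str
    # Purposes this dataset has been approved for (assumed policy field).
    approved_purposes: frozenset = field(default_factory=frozenset)


def is_use_allowed(dataset: Dataset, purpose: Purpose) -> bool:
    """Allow access only if the dataset is approved for this specific purpose."""
    return purpose in dataset.approved_purposes


purchase_history = Dataset(
    name="purchase_history",
    approved_purposes=frozenset({Purpose.INTERNAL_ANALYTICS}),
)

print(is_use_allowed(purchase_history, Purpose.INTERNAL_ANALYTICS))     # True
print(is_use_allowed(purchase_history, Purpose.CUSTOMER_FACING_AGENT))  # False
```

The same dataset passes for internal analysis and is refused for a customer-facing agent, which mirrors the distinction drawn above. A dataset with no approved purposes is denied by default.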

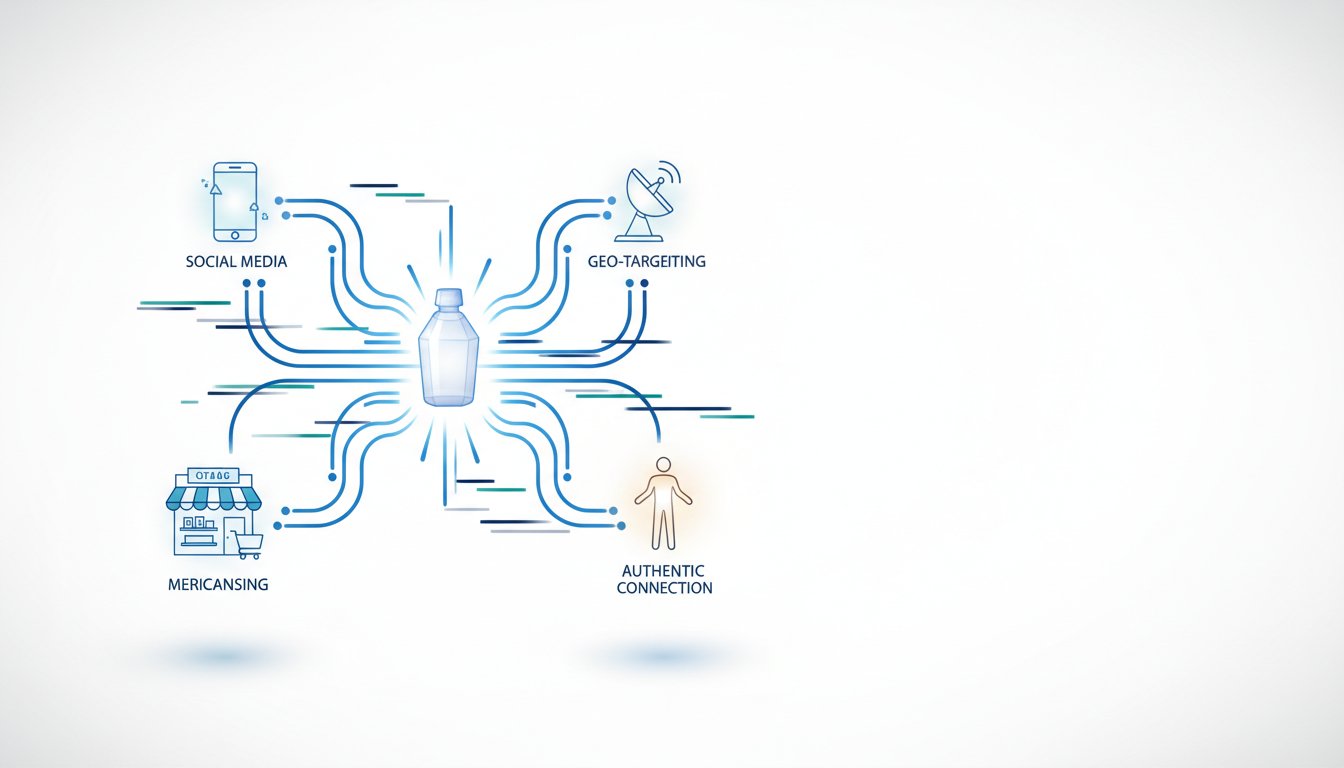

The "Learn-it-All" Mindset: Unlocking Human Creativity Through AI Augmentation

A pervasive fear surrounding AI in marketing is job displacement. The discussion reframes this anxiety as an opportunity for augmentation, emphasizing a "learn-it-all" mindset over a "know-it-all" approach. The insight is that AI's true value lies not in replacing human creativity and judgment, but in freeing marketers to focus on them. AI can handle tactical, repetitive tasks such as bid optimization, initial content drafting, and data segmentation, allowing humans to concentrate on strategy, empathy, and emotional connection.

The "hidden consequence" of AI adoption, if mismanaged, is that it could lead to a de-emphasis on these uniquely human skills, resulting in generic, uninspired marketing. Conversely, the "lasting advantage" comes from brands that successfully integrate AI as a tool to amplify human insight. Thomas Renée's vision of "AI and HI" (Human Intelligence) working together is key. This means leveraging AI for efficiency and scale, but always grounding decisions in human empathy, creativity, and strategic oversight.

"I mean I love this question because I think the future of where we're going to have with marketing I hope is going to be more about human insight and creativity empathy emotion connection all the things that I think we all believe in as brand marketers I'm hoping AI just enables us to do that and put the human back at the center of that interaction."

-- Thomas Renée

The challenge for marketers is to cultivate this mindset within their teams. This involves encouraging experimentation, accepting that not all AI applications will be immediately successful, and focusing on the problems AI can help solve rather than the technology for its own sake. Companies that foster this adaptive, human-centric approach to AI will not only achieve greater efficiency but also produce more resonant and effective marketing campaigns, building deeper customer relationships in the process. This requires patience and a willingness to invest in training and cultural shifts, a path that many will find uncomfortable but ultimately rewarding.

Key Action Items:

Immediate Action (Within 1-3 Months):

- Inventory AI Usage: Conduct an audit of all AI tools and applications currently in use across the marketing department, noting their purpose and the data they access.

- Engage Governance Teams: Actively participate in company-wide AI governance, privacy, and risk committees to ensure marketing's context is understood and represented.

- Prioritize Data Consent: Review and, if necessary, update processes for obtaining and managing ongoing customer consent for data usage, especially for AI-driven personalization.

- Foster a "Learn-it-All" Culture: Encourage experimentation with AI tools, framing them as opportunities for learning and augmentation, not replacements for human roles.

Medium-Term Investment (3-12 Months):

- Develop AI-Ready Data Frameworks: Invest in standardizing and structuring data to ensure it is suitable for AI applications, with a strong emphasis on consent and purpose-of-use.

- Pilot Agentic AI with Strict Guardrails: Experiment with AI agents on internal or low-risk customer-facing tasks, implementing clear operational boundaries and oversight mechanisms.

- Integrate Human Insight into AI Workflows: Design processes where AI handles efficiency gains, but human strategists and creatives are explicitly tasked with providing insight, empathy, and final judgment.

Long-Term Strategic Investment (12-18+ Months):

- Build AI Governance Maturity: Evolve from basic AI inventory to a dynamic AI governance framework that can adapt to new AI technologies and evolving regulations, ensuring brand trust remains paramount.

- Scale Augmented Marketing Teams: Systematically integrate AI tools to enhance productivity and creative output, demonstrating clear ROI not just in efficiency but in revenue impact and customer connection.

- Educate Customers on Responsible AI: Proactively communicate to customers how AI is being used to serve them better and protect their data, building transparency and further solidifying trust.