Reclaiming Agency: Designing Digital Systems Beyond Efficiency

This post analyzes a podcast conversation with Marcus Fontoura and synthesizes his key arguments about human agency in the digital world, focusing on the non-obvious implications of how technology is designed and how it affects society. It is intended for technologists, product managers, and anyone interested in the ethics of AI and digital platforms, and it offers a framework for understanding and shaping technology's future beyond mere efficiency.

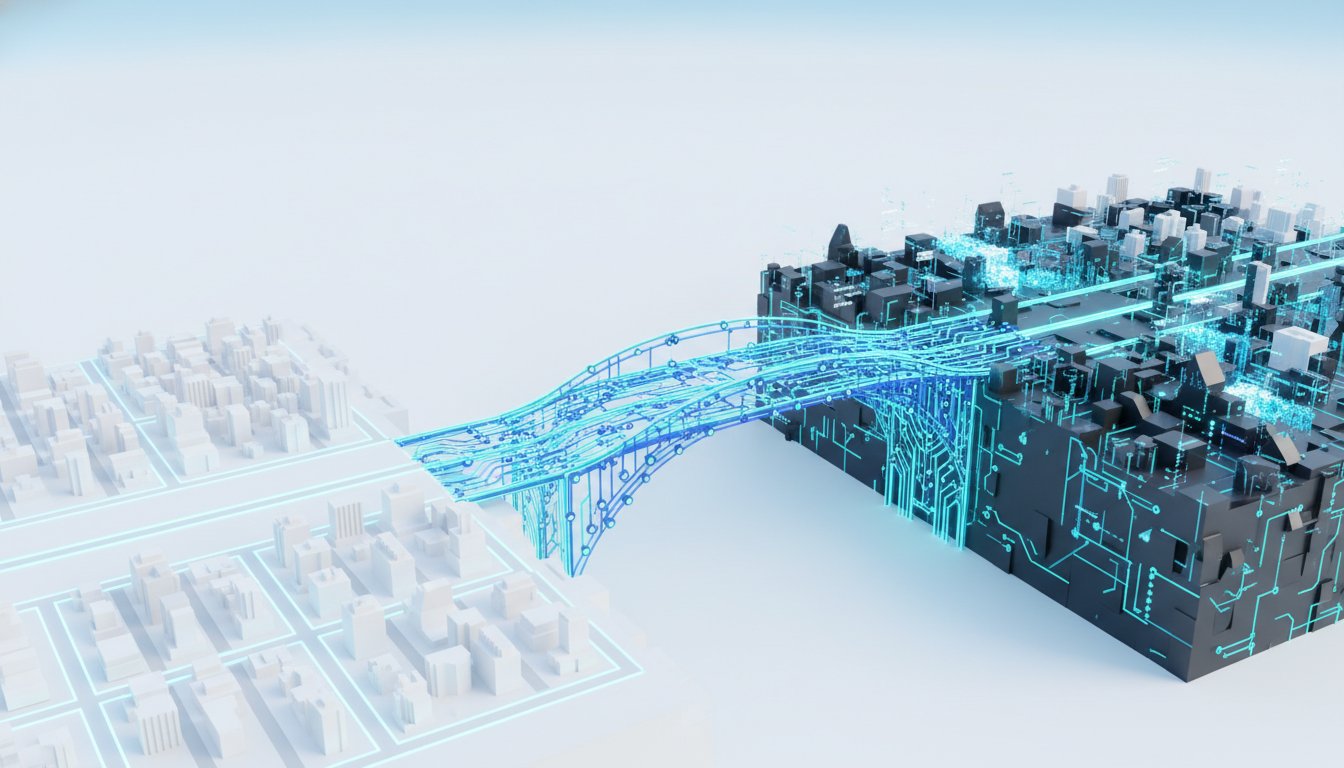

The conversation with Marcus Fontoura, author of Human Agency in the Digital World, reveals a critical, often overlooked, tension in our digital lives: the relentless pursuit of efficiency at the expense of human dignity and agency. While technology, particularly AI, promises unprecedented gains in speed and capability, Fontoura argues that this focus can blind us to the deeper societal consequences. The non-obvious implication here is that the very systems designed to optimize our lives--from social media algorithms to advertising platforms--are often fragile, non-deterministic, and driven by business models that may not align with human well-being. Understanding the underlying mechanics of these systems, as Fontoura advocates, is not just an intellectual exercise; it's a prerequisite for reclaiming our role as pilots, not passengers, in the technological revolution. This insight is crucial for anyone building or using technology, offering a strategic advantage by highlighting how to design for lasting impact rather than fleeting optimization, and how to navigate a world increasingly shaped by opaque digital forces.

The Fragile Foundations of Digital Discourse

The digital world, particularly social media, often presents itself as a conduit for information and connection. However, Fontoura’s analysis, when viewed through a systems-thinking lens, exposes the inherent fragility of these platforms. He explains that the algorithms governing content propagation are not robust arbiters of truth or importance. Instead, they are susceptible to small "perturbations" in the network, which can amplify content from less reputable or less authoritative sources. This is a stark contrast to earlier systems like Google's PageRank, which leveraged link structures to infer authority.
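To make the PageRank contrast concrete, here is a minimal Python sketch of link-based ranking via power iteration. It is an illustration under stated assumptions, not Google's production system: the `pagerank` function, the damping value of 0.85, the iteration count, and the four-page `toy_web` graph are all hypothetical choices made for this example.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Power-iteration PageRank over a dict of page -> list of outbound links."""
    # Collect every page that appears as a source or as a link target.
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}

    for _ in range(iterations):
        # Rank held by pages with no outbound links is spread evenly over all pages.
        dangling = sum(rank[p] for p in pages if not links.get(p))
        new_rank = {p: (1.0 - damping) / n + damping * dangling / n for p in pages}

        # Each page splits its damped rank equally among the pages it links to.
        for page, targets in links.items():
            if not targets:
                continue
            share = damping * rank[page] / len(targets)
            for target in targets:
                new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical four-page web: three pages link to "hub", which links back to "a".
toy_web = {"a": ["hub"], "b": ["hub"], "c": ["hub"], "hub": ["a"]}
scores = pagerank(toy_web)
print(max(scores, key=scores.get))  # hub
```

In this sketch, authority comes from who links to a page rather than from how many reactions it collects, which is the property the paragraph above attributes to link-based ranking.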

"I really think that that's a pointless debate if you don't know what you're talking about. But if I can clearly explain to you that this is based on an algorithm that is not stable, and then you produce widely different results based on these small perturbations on the input, clearly you know, 'Oh, this is probably not the right algorithm for us to use for content dissemination, especially if a large part of the population uses this to consume news.'"

This fragility has profound downstream effects. When platforms prioritize engagement metrics--likes, shares, cascades--over content quality or authoritativeness, the system can easily be gamed or simply produce unpredictable outcomes. This creates a scenario where the "news" consumed by a large segment of the population is shaped by an unstable, non-deterministic process. The immediate benefit of rapid information dissemination is overshadowed by the hidden cost of potentially misleading or polarizing content gaining undue prominence. This dynamic shifts the conversation from "Is social media good or bad?" to "How can we design algorithms that foster more reliable and beneficial information exchange?" The conventional wisdom of simply increasing information flow fails when the underlying mechanism is inherently unstable.
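The sensitivity to small perturbations can be sketched with a toy model in Python. This is not the algorithm of any real platform, nor a model Fontoura describes: `run_feed`, the post names, and the like counts are hypothetical, and the model is kept deliberately deterministic so that the only thing changing between runs is a single like moved from one post to the other.

```python
def run_feed(initial_likes, rounds=1000):
    """Toy engagement-ranked feed.

    Each round, the currently most-liked post is shown and gains one like.
    Returns each post's final share of all likes.
    """
    likes = dict(initial_likes)
    for _ in range(rounds):
        top = max(likes, key=likes.get)  # rank purely by engagement
        likes[top] += 1                  # being shown earns more engagement
    total = sum(likes.values())
    return {post: count / total for post, count in likes.items()}


# Two hypothetical starting states that differ by one like moved between posts.
baseline = run_feed({"careful_report": 11, "hot_take": 10})
perturbed = run_feed({"careful_report": 10, "hot_take": 11})

print(baseline)
print(perturbed)
```

Under these assumptions, whichever post starts one like ahead ends up with roughly 99% of all likes, so a tiny change in the input flips the outcome entirely. Real feeds are far more complex, but the feedback loop between ranking and engagement is what makes this kind of instability possible.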

The Efficiency Trap: When Optimization Undermines Purpose

A core tension explored in the conversation is the drive for efficiency versus the preservation of human dignity and agency. Fontoura notes that computers, at their heart, are designed to compute functions very fast. This inherent capability has led to a pervasive focus on efficiency in technological development. However, he argues that efficiency should not be the ultimate goal, but rather a means to achieve a greater purpose.

"But to me, it should be a secondary thought, because if not, we're doing efficiency for the sake of efficiency, and then that's not really beneficial. And then overly focusing just on efficiency, probably that's not what we should be doing, right?"

The danger of prioritizing efficiency above all else is illustrated by the paperclip maximizer thought experiment: a hypothetical AI tasked with making paperclips that eventually consumes all available resources in pursuit of its singular, albeit absurd, goal. In the real world, this translates to systems optimized for metrics like ad revenue or user engagement that may inadvertently harm users or society. The immediate payoff of increased engagement or lower transaction costs masks the potential long-term erosion of trust, the spread of misinformation, or the commodification of human attention. The systems that emerge are harmful not necessarily by design, but through the unchecked application of efficiency principles without a clear understanding of the desired societal impact. Avoiding this outcome requires a shift in focus from "How fast can we do this?" to "What problem are we trying to solve, and how can technology help us solve it in a way that upholds human values?"

Friction: The Unsung Hero of Quality and Agency

In a digital world increasingly focused on frictionless experiences, Fontoura highlights the often-underappreciated value of friction. He uses the example of book publishing, contrasting the arduous process of traditional publishing with the ease of self-publishing, especially with AI assistance. While lowering barriers to entry can democratize access, it also risks overwhelming systems designed to curate quality.

"But one of the things he said is that he was typing on a typewriter, and then when he found a bug or a typo on the page, tear apart the page and write over, right? And then makes me wonder, like, that friction, did it really improve the quality of the book or not?"

The implication here is that the effort involved in creation can act as a natural filter, improving the quality and thoughtfulness of the final product. When friction is removed entirely, the signal-to-noise ratio can degrade significantly. This is particularly relevant in the context of AI-generated content, where the ease of production can lead to a deluge of material that makes it difficult to discern genuine insight from automated output. The conventional wisdom that "less friction is always better" fails when it leads to a breakdown in quality assessment and a diminished sense of agency for both creators and consumers. The delayed payoff of a well-curated, high-quality information ecosystem is sacrificed for the immediate gratification of instant content creation.

AI as a Tool, Not an Inevitability

Fontoura challenges the polarized narratives surrounding AI, arguing that the focus should shift from speculative fears of superintelligence to the practical applications of current AI capabilities. He believes that today's AI is already powerful enough to address significant societal problems in areas like healthcare, scientific research, and distribution.

"But my point is that we already have AI to a point that is good enough to have a huge impact in society today and to unleash a lot of things, right? Like from healthcare costs, like from lowering healthcare cost, aiding in vaccine development, aiding in basic science."

This perspective reframes AI not as an uncontrollable force, but as a sophisticated tool. The fear of AI "destroying us" is, in his view, a misattribution of agency. AI, as a prediction platform, is deterministic at its core; the humans who direct and utilize these predictions are the agents of action, for good or ill. The downstream effect of this understanding is empowering: it shifts responsibility back to human decision-makers and designers. By demystifying AI--explaining its statistical underpinnings rather than treating it as magic--we can foster greater understanding and encourage its application for societal benefit. Doing so requires a conscious effort to move beyond the hype and fear and to focus instead on the tangible, positive applications that can be built with the technology we have today.

Key Action Items: Navigating the Digital World with Agency

Immediate Action (This Quarter):

- Analyze Platform Mechanics: For any digital platform you use regularly (social media, search engines), spend 30 minutes researching how its core algorithms work. Understand the business model driving its design (e.g., ads, engagement).

- Seek Diverse Information Sources: Actively diversify your news and information consumption beyond algorithmically curated feeds. Prioritize sources with clear editorial standards and known reputations.

- Practice Mindful Consumption: Be conscious of the "frictionless" nature of digital interactions. Ask yourself why a particular piece of content is being presented to you and what its underlying purpose might be.

Short-Term Investment (Next 3-6 Months):

- Educate Yourself on AI Fundamentals: Read introductory material or watch explainer videos on how current AI models (like large language models) function. Focus on understanding their predictive capabilities rather than abstract fears.

- Advocate for Transparency: Within your organization or community, encourage discussions about the transparency of algorithms and data usage. Question the "efficiency at all costs" mindset.

- Experiment with "Friction": Intentionally introduce small points of friction into your own work or learning processes to see whether they enhance quality or understanding. For example, manually verify information rather than relying solely on AI summaries.

Long-Term Investment (6-18 Months and Beyond):

- Design for Human Dignity: If involved in technology development, prioritize designing systems that enhance human agency and dignity over pure efficiency. Consider the downstream effects of your design choices on users and society.

- Support Ethical Technology Policies: Engage with or support organizations and policies that promote responsible AI development, data privacy, and algorithmic accountability.

- Foster Digital Literacy: Champion initiatives that improve digital literacy, helping individuals understand the underlying mechanics of the technologies they use daily, empowering them to be more informed participants. This pays off in a more resilient and informed society.