Balancing Speed and Stability: Mitigating Tech Debt from Software Development Shortcuts

The Hidden Costs of Speed: Why Shortcuts Are the Longest Route to Failure

In this conversation with Tom Totenberg, Head of Release Automation at LaunchDarkly, we uncover a critical paradox in software development: the relentless pursuit of speed, often driven by business pressure and amplified by AI, is leading teams down a treacherous path of shortcuts. These aren't mere inefficiencies; they are fundamental design flaws that, while offering immediate relief, sow the seeds of future instability, operational nightmares, and ultimately, a slower, more brittle development process. The non-obvious implication is that the very tools and pressures designed to accelerate delivery are, in fact, creating systemic drag. This analysis is crucial for engineering leaders, architects, and developers who aim to build sustainable, high-performing systems, providing them with a framework to identify and mitigate the insidious long-term consequences of seemingly expedient decisions, thereby gaining a competitive advantage through engineered resilience.

The Siren Song of Speed: How Shortcuts Undermine System Integrity

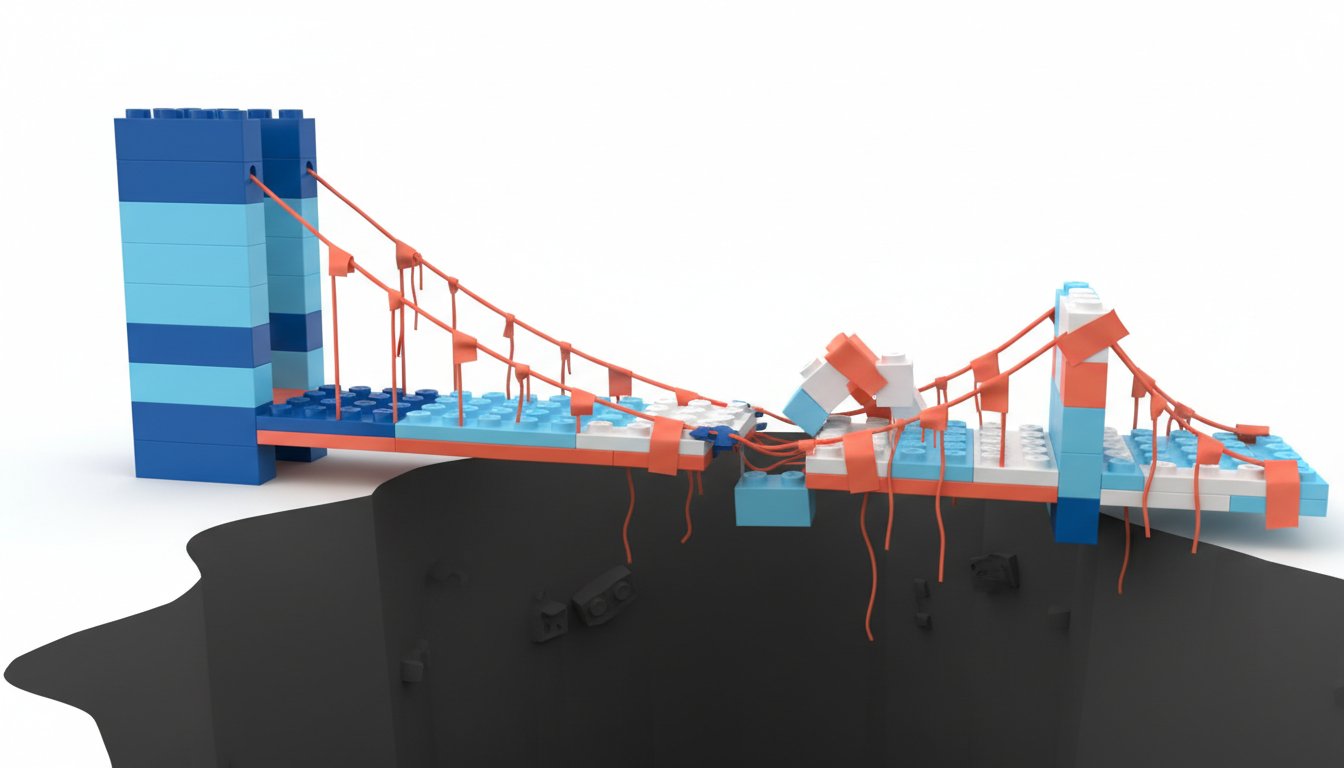

The drive to deliver faster is a constant in the software world. As Tom Totenberg points out, the pressure from business to release more frequently--shifting from annual releases to bi-weekly cadences or even continuous deployment--forces technical teams to find ways to accelerate. This pressure, however, creates fertile ground for shortcuts. Totenberg describes engineers as "fundamentally lazy" in the sense that they will always seek the path of least resistance, especially when metrics appear healthy and they aren't being bothered. This isn't necessarily a negative trait; a judicious shortcut can save significant time and effort in the long run. The danger arises when this tendency is pushed too far, leading to what Totenberg calls "duct tape connectors"--home-grown tooling or ad-hoc solutions that weren't designed for scale or robustness but become critical dependencies over time.

"My perspective on this comes from digging deep into a lot of different organizations change management processes which is where my whole technical background is from and then also helping them improve that process so overall there are a lot of themes that we'll be getting into but one of the consistent themes that i always think about is some of the best engineers out there are fundamentally lazy in that they will take the path of least resistance you know if you are measuring something they will try to gamefy that measurement and as long as the reports look good as long as it's easy and they're not getting bothered they are going to take whatever shortcut they want"

-- Tom Totenberg

A prime example of this is configuration management. Many organizations recognize the need to decouple deployment (getting code out) from release (exposing features to users). However, Totenberg has seen numerous "cobbled together arcane strange configuration utilities" that allow for dark deployments but become incredibly difficult to navigate during incidents. When something goes wrong, tracing the root cause through these custom solutions is a nightmare, leading to extended outages and significant pain. This illustrates a core systems thinking principle: an immediate solution, however convenient, can create a complex, brittle system that is hard to manage and debug. The ease of deployment offered by these tools is a first-order benefit, but the downstream consequence is an increased blast radius and MTTR (Mean Time To Recovery) when incidents occur.
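As a rough illustration of decoupling deployment from release, the sketch below gates a deployed code path behind a percentage rollout. It is a minimal example under stated assumptions, not a description of any particular product: the `FLAGS` store, the `new-checkout` flag, and the function names are all hypothetical, and a real system would load flag state from a managed service so it can change without a redeploy.

```python
import hashlib

# Hypothetical in-memory flag store (an assumption for this sketch).
FLAGS = {
    "new-checkout": {"enabled": True, "rollout_percent": 10},
}


def bucket(flag_key: str, user_id: str) -> int:
    """Map a user to a stable bucket in [0, 100) for a given flag."""
    digest = hashlib.sha256(f"{flag_key}:{user_id}".encode()).hexdigest()
    return int(digest, 16) % 100


def is_released(flag_key: str, user_id: str) -> bool:
    """Return True if the feature is exposed to this user."""
    flag = FLAGS.get(flag_key)
    if flag is None or not flag["enabled"]:
        return False  # unknown or disabled flags fail closed
    return bucket(flag_key, user_id) < flag["rollout_percent"]


def checkout(user_id: str) -> str:
    # Both code paths are deployed; the flag decides which one is released.
    if is_released("new-checkout", user_id):
        return "new checkout flow"
    return "existing checkout flow"
```

Because the bucket is derived from a hash of the flag key and user ID, a given user sees the same behavior on every request, which makes it easier to trace who was exposed to a change when an incident occurs.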

The rise of AI code generation tools further exacerbates this issue. While AI can lower the barrier to entry for creating new functionality, it also opens new avenues for shortcuts. Totenberg highlights a concerning practice in which AI-generated code effectively bypasses traditional human review. If a developer asks an AI to generate code and then hands it to another developer who does not fully grasp the AI's output, the review becomes a rubber stamp rather than deep scrutiny. This is particularly problematic because AI can generate vast amounts of code, making it easy for subtle bugs or security vulnerabilities to hide. The shortcut here is skipping rigorous, multi-human code review, which, while time-consuming, is a critical safeguard against introducing complex, AI-generated errors into production. Skipping it sidesteps established SDLC (Software Development Lifecycle) governance and creates a new category of risk.

The Compounding Debt of Expediency

The distinction between a shortcut and technical debt is crucial. Totenberg clarifies that technical debt is often the result of shortcuts. Taking shortcuts--whether it's wrapping code in debugging features without proper planning, implementing features without considering future extensibility, or bypassing established processes--directly contributes to technical debt. This debt manifests as increased work down the line to refactor, fix, or re-architect systems that were built with immediate expediency in mind rather than long-term sustainability. The MVP (Minimum Viable Product) approach, when taken to an extreme without considering the underlying platform or future growth, is a classic example. The initial speed gained by shipping an MVP quickly is offset by the much harder work of refactoring it later, compared with building with extensibility in mind from the outset.

"Tech debt i think is a result of shortcuts right shortcuts can absolutely lead to tech debt and tech debt comes in multiple forms right whether this is something like wrapping code in some extra debugging observability wrapping some code in feature flag or tech debt can also absolutely be the concept of someone trying to get the mvp out the door without thinking about the underlying fundamental platform that maybe should support that feature"

-- Tom Totenberg

This dynamic often clashes with business demands for speed. Totenberg suggests that while agile methodologies rightly emphasize lead time and automated testing, they can sometimes de-emphasize the critical planning phase. He advocates for a return to more intentional, comprehensive planning, not necessarily full waterfall, but enough to understand where a new feature fits into the broader system, avoid duplicating functionality, and clearly define success and failure criteria. This upfront investment in planning, though it might feel slower in the short term, prevents the costly rework that arises from poorly integrated or ill-defined features. The "slow as fast" approach, as he frames it, is about investing time in understanding the system and defining clear metrics, which ultimately accelerates sustainable delivery.

The challenge of defining "good" or success metrics is often linked to the industry's shift towards small, nimble, and autonomous teams. While this fosters ownership, it can also lead to "shipping your organizational chart"--small teams delivering small, potentially uncoordinated solutions that create more tech debt through duplication or conflicting approaches. Totenberg argues for a balance, suggesting that clear, top-down direction, even if it means sacrificing a bit of individual team autonomy, is necessary to provide a "north star" and ensure better coordination. This centralized direction, he implies, is what truly enables continuous fast movement, preventing the eventual slowdown caused by duplicated efforts and architectural inconsistencies.

Building Sustainable Advantage: The Power of Deliberate Design

The conversation highlights several strategies for resisting the temptation of shortcuts and building durable systems. One key approach is establishing a "golden path"--a supported set of tools, techniques, and concepts--often championed by a central Center of Excellence (CoE). This is particularly effective in large organizations with thousands of applications, where standardization acts as a force multiplier, providing common metrics and best practices. This approach allows for customization within a well-defined framework, ensuring that even as teams innovate, they do so within a structure that promotes long-term stability and interoperability.

"We are going to have a golden path a supported set of tools and techniques and concepts and this is a a central coe a center of excellence that that will actually be able to support this right and then you can take it and configure it or customize it based on this golden path but we've got some standard metrics that we're going to measure you on to just to make sure everybody's performing well"

-- Tom Totenberg

Jeff Bezos's famous mandate that "everything needs to be a platform" with clearly defined inputs and outputs serves as a powerful example of this principle. This focus on interoperability and clear interfaces, even for a seemingly simple online bookstore, eventually led to AWS, demonstrating the profound long-term benefits of building for scale and interchangeability. Similarly, the adoption of open standards like OpenTelemetry for observability allows organizations to build internal platforms that can easily integrate with various vendors and adapt to new methods, preventing lock-in and enabling nimbleness. This deliberate engineering work, which doesn't serve the immediate MVP, is precisely what builds lasting competitive advantage.
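One way to picture the "clearly defined inputs and outputs" idea applied to observability is a thin internal interface that application code calls, with the vendor-specific part isolated behind it. The sketch below is an illustration only: `MetricsBackend`, `InMemoryBackend`, and `Telemetry` are hypothetical names, and it deliberately avoids calling any real vendor or OpenTelemetry API. In practice the backend would be an adapter around whichever SDK the organization has chosen.

```python
from typing import Dict, List, Optional, Protocol, Tuple


class MetricsBackend(Protocol):
    """The only surface a vendor adapter has to implement (hypothetical)."""

    def record(self, name: str, value: float, tags: Dict[str, str]) -> None: ...


class InMemoryBackend:
    """Stand-in backend for local runs and tests; stores points in a list."""

    def __init__(self) -> None:
        self.points: List[Tuple[str, float, Dict[str, str]]] = []

    def record(self, name: str, value: float, tags: Dict[str, str]) -> None:
        self.points.append((name, value, dict(tags)))


class Telemetry:
    """Thin wrapper that application code depends on instead of a vendor SDK."""

    def __init__(self, backend: MetricsBackend, service: str) -> None:
        self._backend = backend
        self._service = service

    def count(self, name: str, tags: Optional[Dict[str, str]] = None) -> None:
        # Attach the standard "service" tag so every team reports consistently.
        merged = {"service": self._service, **(tags or {})}
        self._backend.record(name, 1.0, merged)


backend = InMemoryBackend()
telemetry = Telemetry(backend, service="checkout")
telemetry.count("release.exposed", {"flag": "new-checkout"})
```

Swapping vendors then means writing one new class that satisfies `MetricsBackend`, while call sites such as `telemetry.count(...)` stay unchanged.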

To counter the "wrecking ball" approach of new leadership or sudden business pivots, Totenberg suggests grounding arguments in business metrics. If a current process, though less flashy, is demonstrably maintaining uptime, reducing MTTR, and enabling smooth releases according to industry benchmarks, this data can form a defensible position. Protecting customers and end-users is always a compelling argument. This requires a broader understanding of value stream management--where every role, from floor sweeping to HR, understands how their work contributes to the ultimate value delivered to the end customer. This holistic view empowers teams to have leadership-level conversations about the potential negative impacts of disruptive changes.

Ultimately, Totenberg advocates for a conceptual approach to release automation and observability. Rather than focusing on specific tools, which change rapidly, he emphasizes understanding the underlying principles: control and measurement. Automation should determine the release path based on the change's risk profile--cosmetic updates might go fast, while data schema changes or PII-related features require slower, more controlled exposure, perhaps to a small beta group. This involves correlating who is exposed to a change with its impact, using reusable metrics to confirm quality and validate that the change is performing as expected without introducing regressions. This deliberate, measured approach, while requiring upfront setup, creates "paved paths" with built-in guardrails, ensuring that speed doesn't come at the cost of system integrity.
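The control-and-measurement idea can be sketched as two small pieces: choosing a release path from a change's risk profile, and checking a reusable metric before widening exposure. Everything here is an assumption made for illustration, including the risk categories, the rollout percentages in `RELEASE_PATHS`, and the error-rate tolerance. None of it comes from the conversation or from any specific tool.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Risk(Enum):
    COSMETIC = "cosmetic"
    STANDARD = "standard"
    SENSITIVE = "sensitive"  # e.g. data schema changes or PII-related features


@dataclass(frozen=True)
class Change:
    description: str
    touches_schema: bool = False
    touches_pii: bool = False
    cosmetic_only: bool = False


# Hypothetical rollout stages: percent of users exposed at each step.
RELEASE_PATHS = {
    Risk.COSMETIC: [100],
    Risk.STANDARD: [10, 50, 100],
    Risk.SENSITIVE: [1, 5, 25, 100],
}


def classify(change: Change) -> Risk:
    """Pick the most cautious category that applies to the change."""
    if change.touches_schema or change.touches_pii:
        return Risk.SENSITIVE
    if change.cosmetic_only:
        return Risk.COSMETIC
    return Risk.STANDARD


def release_path(change: Change) -> List[int]:
    """Return the exposure stages the automation should walk through."""
    return RELEASE_PATHS[classify(change)]


def should_advance(exposed_error_rate: float,
                   control_error_rate: float,
                   tolerance: float = 0.005) -> bool:
    """Advance only if exposed users are not measurably worse off
    than users who have not yet received the change."""
    return exposed_error_rate <= control_error_rate + tolerance
```

For example, `release_path(Change("add column", touches_schema=True))` yields the slow four-stage path, and the automation would call `should_advance` with the error rates of the exposed and unexposed groups before moving from one stage to the next.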

Key Action Items

- Embrace "Slow as Fast" Planning: Dedicate time during the planning phase to understand where new features fit into the overall system architecture, define clear success and failure criteria, and identify potential risks. This pays off in 6-18 months by reducing rework and integration issues.

- Establish a "Golden Path" for Tooling: For larger organizations, create a supported set of tools and techniques, potentially through a Center of Excellence, to guide development and deployment. This provides immediate leverage for teams and pays off in 3-6 months through increased consistency.

- Insist on Rigorous Human Review for AI-Generated Code: Implement policies requiring at least two human reviewers for any AI-generated code entering production, regardless of existing peer review processes. This immediate action prevents subtle, hard-to-detect bugs and security vulnerabilities.

- Map Your Value Stream: Understand and articulate how every role and process contributes to delivering value to the end customer. Use these business metrics to defend stable, well-functioning processes against disruptive changes. This requires ongoing effort but builds long-term organizational resilience.

- Decouple Deployment from Release: Continue to invest in robust feature flagging and configuration management systems that allow for deploying code independently of releasing features to users. This investment pays off continuously, enabling safer, more controlled rollouts.

- Build Observability Wrappers: When adopting new observability tools or strategies, consider building a thin internal wrapper. This allows for easier migration to different vendors or methods in the future. This requires upfront engineering effort but provides flexibility and avoids vendor lock-in over 1-3 years.

- Prioritize Systemic Understanding Over Tool Fixes: Focus on understanding the conceptual principles of control and measurement in release automation and observability, rather than getting lost in the specifics of any single tool. This conceptual clarity provides lasting advantage as technologies evolve.