AI Strategy Shifts: Google's Open AlphaFold, Meta's Ecosystem, Open Source Commoditization

This conversation on The Daily AI Show reveals the accelerating pace of AI development, moving beyond theoretical advancements to practical, often surprising, applications. The participants highlight how established tech giants like Google and Meta are leveraging vast datasets and resources to push the boundaries of AI, while also underscoring the growing influence and potential of open-source models. The non-obvious implication is that the competitive landscape is rapidly shifting, not just in terms of raw model performance, but in the strategic deployment of AI to solve specific problems and create distinct advantages. This discussion is crucial for anyone involved in technology strategy, product development, or investment, offering a glimpse into the forces shaping the future of AI and its impact across industries.

The Unseen Architect: Google's Autonomy and AlphaFold's Ripple Effect

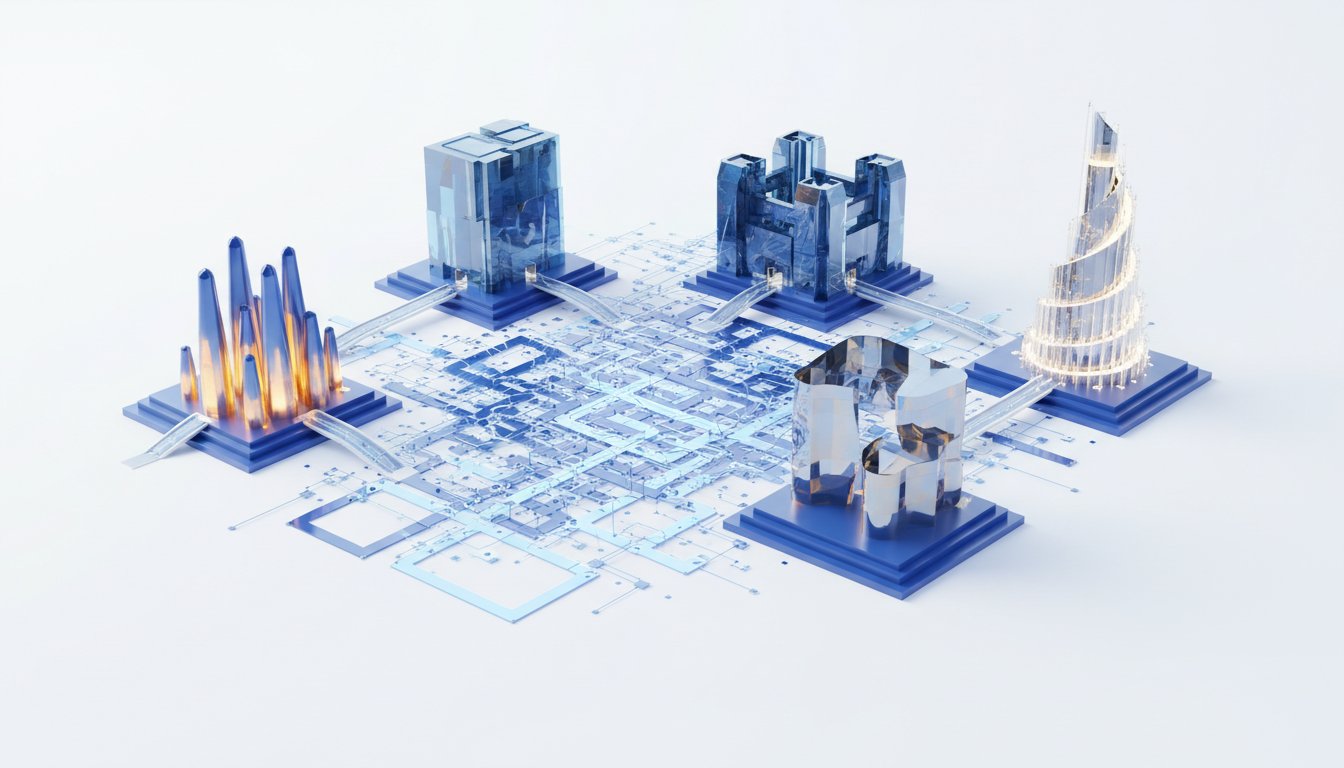

The conversation opens with a deep dive into the operational autonomy Google has granted DeepMind, an arrangement that has clearly paid dividends. Brian Maucere describes his awe at an interview with Demis Hassabis, CEO of Google DeepMind, in which Hassabis explains how that freedom has fostered groundbreaking innovation. The most striking example is AlphaFold, a project that, driven by Hassabis's vision, tackled the monumental task of predicting the 3D structures of proteins. Rather than pursue incremental progress, the team decided to solve all known protein structures, a move that required significant infrastructure but promised a revolutionary, free resource for the scientific community. The goal was not just to solve a problem but to fundamentally change the landscape of biological research. The implication is that true innovation often comes not from optimizing existing processes but from a willingness to take on massive, seemingly intractable problems with a long-term vision of open, freely shared results.

"Why don't we just solve all of them? It would take us a year, considering the infrastructure we have. We can do one about every 10 seconds. There's 230 million of them. Let's just solve all the proteins."

-- Demis Hassabis
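The numbers in the quote can be sanity-checked with simple arithmetic. This is a rough sketch that assumes "one every 10 seconds" means one prediction at a time on a single worker (the quote does not say this) and a 365-day year:

$$
T_{\text{serial}} = 230 \times 10^{6} \times 10\ \text{s} = 2.3 \times 10^{9}\ \text{s},
\qquad
\frac{T_{\text{serial}}}{365 \times 86400\ \text{s/yr}} \approx 73\ \text{yr}
$$

Under that assumption, finishing in roughly a year implies running on the order of 73 predictions in parallel, which is plausibly what "considering the infrastructure we have" refers to.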

This approach, investing heavily now for a massive, delayed payoff that benefits the entire ecosystem, is a powerful lesson in strategic thinking. The immediate output is a dataset; the downstream effect is faster progress on countless scientific questions, including the search for disease treatments, a payoff that will likely be realized over years, not months. This contrasts sharply with corporate strategies built around quarterly results. The conversation suggests that this "solve it all and give it away" model is a key driver of Google's AI dominance, positioning the company as a foundational provider rather than just a product seller.

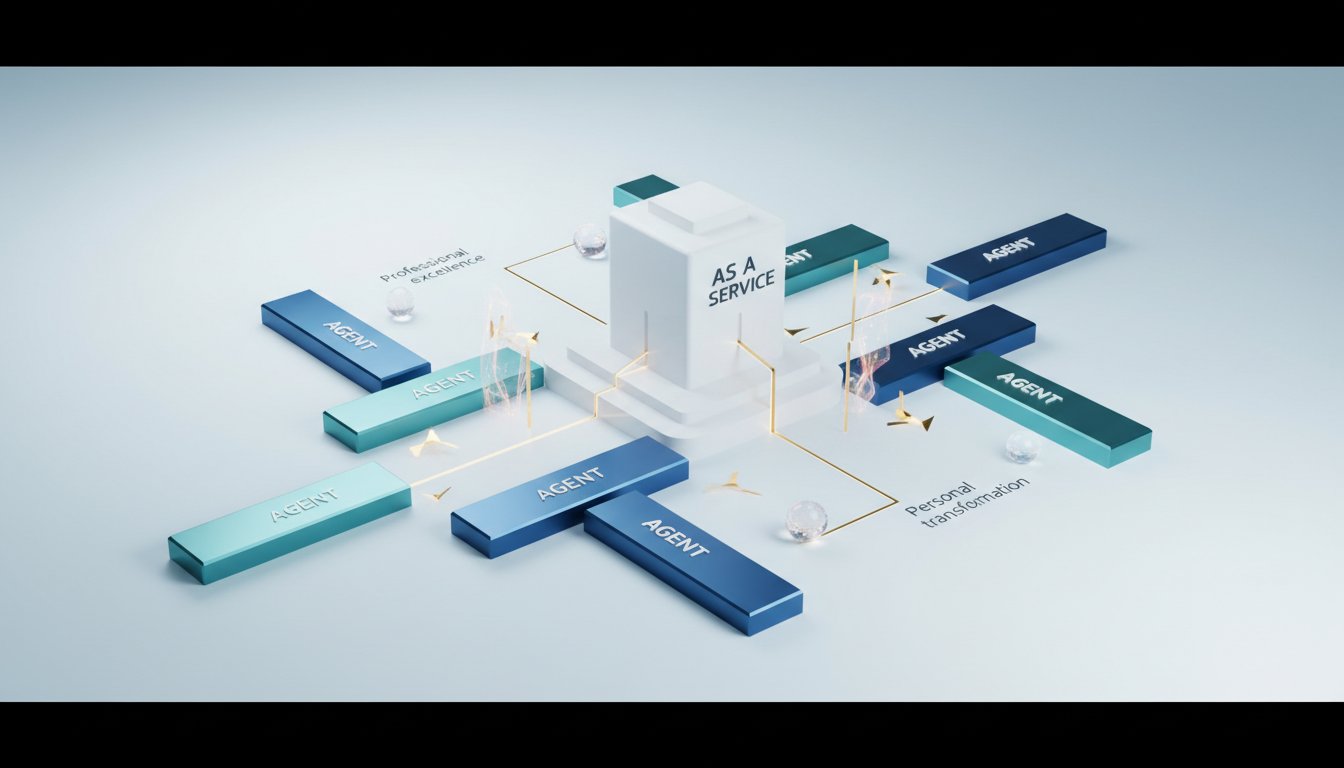

Meta's Strategic Pivot: Beyond Frontier Models to Ecosystem Integration

The discussion then shifts to Meta and its MuseSpark model, which reflects a different, yet equally potent, strategic play. MuseSpark may not top every benchmark; its significance lies in its integration within Meta's vast ecosystem. Andy Halliday and Carl both emphasize that Meta's strategy is less about winning the "frontier model" race and more about enhancing the user experience across platforms like WhatsApp and Instagram. Carl points out that in many regions and for many specific use cases, models that are "good enough" and readily available, especially open-source options, are becoming increasingly valuable because of cost and data sovereignty concerns.

"They have a really deep distribution and they're not trying to win. And I like, okay, I don't know if I believe that. But I will say, I agree with you. Like, you know, they went from 18th, you know, on the leaderboards, right? They went from 18th to fourth in a year after basically, you know, bringing in what's his name to rerun everything, tearing everything down and starting again."

-- Andy Halliday

This strategic move by Meta is a masterclass in leveraging existing distribution channels. By developing models that integrate seamlessly with its existing user base, Meta creates a moat that performance benchmarks alone cannot capture. The delayed payoff here is not a scientific breakthrough but sustained user engagement and a stronger network effect, making its platforms stickier and more indispensable. Meta's approach challenges the conventional wisdom that a company must chase the absolute best model: its success hinges instead on making AI accessible and useful within its established domain.

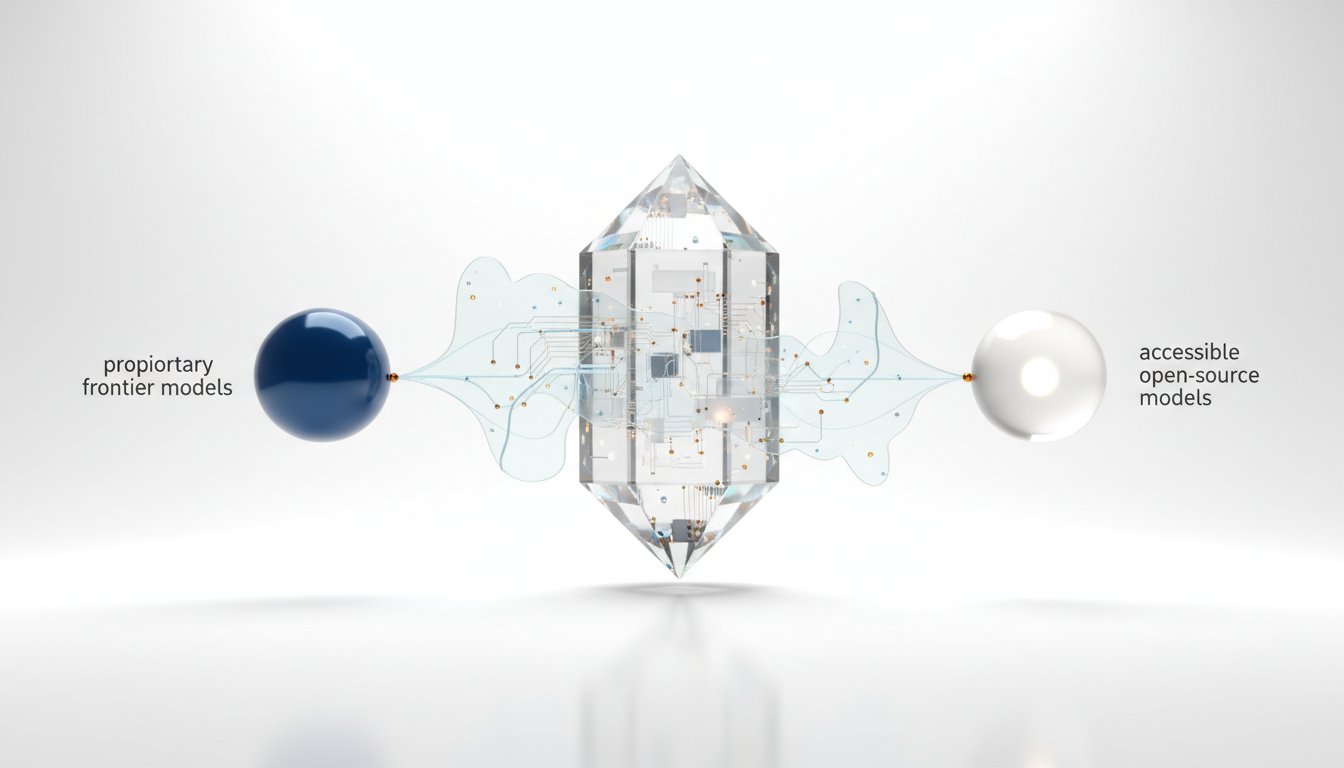

The Open Source Gambit: Democratization and Strategic Commoditization

A significant portion of the conversation covers the rise and strategic implications of open-source AI models. The emergence of models like Gemma 4 and GLM-5.1, the possibility that Meta will open-source parts of MuseSpark, and efforts like Reflection AI's push toward frontier-level open-weight models together paint a picture of a rapidly democratizing field. Brian Maucere articulates a critical insight: open-sourcing isn't purely philanthropic; it is a strategic play to drive down competitors' pricing and establish a foundational technology that others build upon.

"It is a classic Silicon Valley move, and there's a lot of reasons why you would do that. It's not necessarily just because like, oh, we're opening, we're open source, we're being, it's, you know, we want the world to use this. Well, a couple of things. Number one, it drives price down, right? So, it becomes, if that model becomes a commodity, you're directly affecting what your, your competitors can charge because they have to be able to show a moat. They have to be able to show a gap between what you just released as open source."

-- Brian Maucere

This strategy creates a long-term advantage by commoditizing core AI capabilities. While frontier models might offer a temporary edge, the open-source movement ensures that the underlying technology becomes more accessible and affordable. The delayed payoff for companies like Google and Meta, in this context, is a market where their competitors are forced to compete on value-added services and unique integrations, rather than on the cost of raw AI processing power. This also forces developers, like Carl, to become adept at evaluating and integrating various models, a skill that becomes increasingly valuable as the AI landscape diversifies.

The Friction of Progress: Rate Limiting and the CSA Model

The conversation also touches on the practical challenges of scaling AI services, exemplified by user frustration with Perplexity's rate limiting. Beth Lyons explains that these limits are often a consequence of the immense inference costs and capacity constraints AI providers face. This leads to a speculative discussion of a "Community Supported Agriculture" (CSA) model for AI services, in which users pay up front for access to a resource whose exact yield is unpredictable, much as CSA members pay a farm in advance for a share of a harvest whose size they cannot know beforehand. This highlights a fundamental tension: the desire for unlimited access to cutting-edge AI versus the reality of its operational costs.

The implication is that current infrastructure and cost models cannot sustain unlimited, high-volume AI usage without significant price increases. The "discomfort now" comes from reduced access or higher costs; the "advantage later" is the continued development and availability of these powerful tools, funded by those willing to bear the cost or adapt to the limits. This also fuels the appeal of open-source models, which can be run on infrastructure of the user's choosing and so sidestep any single provider's limits, offering an alternative path for those who find frontier model access too restrictive or expensive.

Actionable Insights for Navigating the AI Frontier

- Embrace the "Solve It All" Mentality (Long-Term Investment): For organizations with the resources, consider tackling large, foundational problems with a long-term vision, potentially releasing solutions as open resources. This builds significant goodwill and establishes industry leadership, with payoffs realized over years.

- Integrate AI Deeply into Your Ecosystem (Strategic Deployment): Focus on how AI can enhance existing products and user experiences rather than solely chasing benchmark supremacy. This builds customer loyalty and network effects, offering a durable competitive advantage.

- Leverage Open Source Strategically (Cost and Accessibility): Actively explore and integrate open-source models. Understand that their release is often a strategic move to commoditize AI and push down what competitors can charge, creating market dynamics that benefit early adopters.

- Prepare for Shifting Access Models (Adaptability): Anticipate that access to frontier AI models may become more constrained or expensive due to inference costs. Develop strategies to manage this, including exploring open-source alternatives or optimizing usage patterns.

- Develop Model Evaluation Expertise (Skill Investment): As the number of AI models proliferates, cultivate internal expertise in evaluating and integrating different models for specific use cases. This allows for more informed decision-making and avoids being locked into a single provider.

- Consider "Digital CSA" Subscription Models (Consumer Awareness): Understand that AI services may adopt models where access is variable based on demand and provider capacity. Budget accordingly and be prepared to adapt usage patterns.

- Explore AI-Powered Automation for Internal Workflows (Immediate Action): Leverage tools like Claude Managed Agents or Gemini in Google Slides/Notebooks to automate repetitive tasks and enhance productivity. This offers immediate efficiency gains and frees up resources for more strategic work.