Navigating AI's Second-Order Consequences and Systemic Risks

This conversation from The Daily AI Show looks past the headlines about new model releases and corporate maneuvers to the emergent, often non-obvious implications of AI adoption. The hosts identify a fundamental tension: the gap between what users expect from AI and its current, often unreliable reality. They show how AI integration, while promising efficiency, can introduce new complexity and risk, from data exposure to the erosion of critical thinking. The discussion is most relevant to product managers, engineers, and business leaders who need to navigate both the practical challenges and the strategic opportunities of AI. It offers a clear-eyed view of where immediate gains can mask long-term pitfalls, and argues that true competitive advantage lies in understanding these deeper systemic effects.

The promise of AI is often framed by its immediate benefits: increased productivity, faster feedback loops, and streamlined workflows. Yet, as this conversation highlights, focusing solely on these first-order effects can lead businesses astray. The real strategic advantage comes from understanding the second- and third-order consequences -- the hidden costs, the systemic shifts, and the long-term payoffs that conventional wisdom often overlooks.

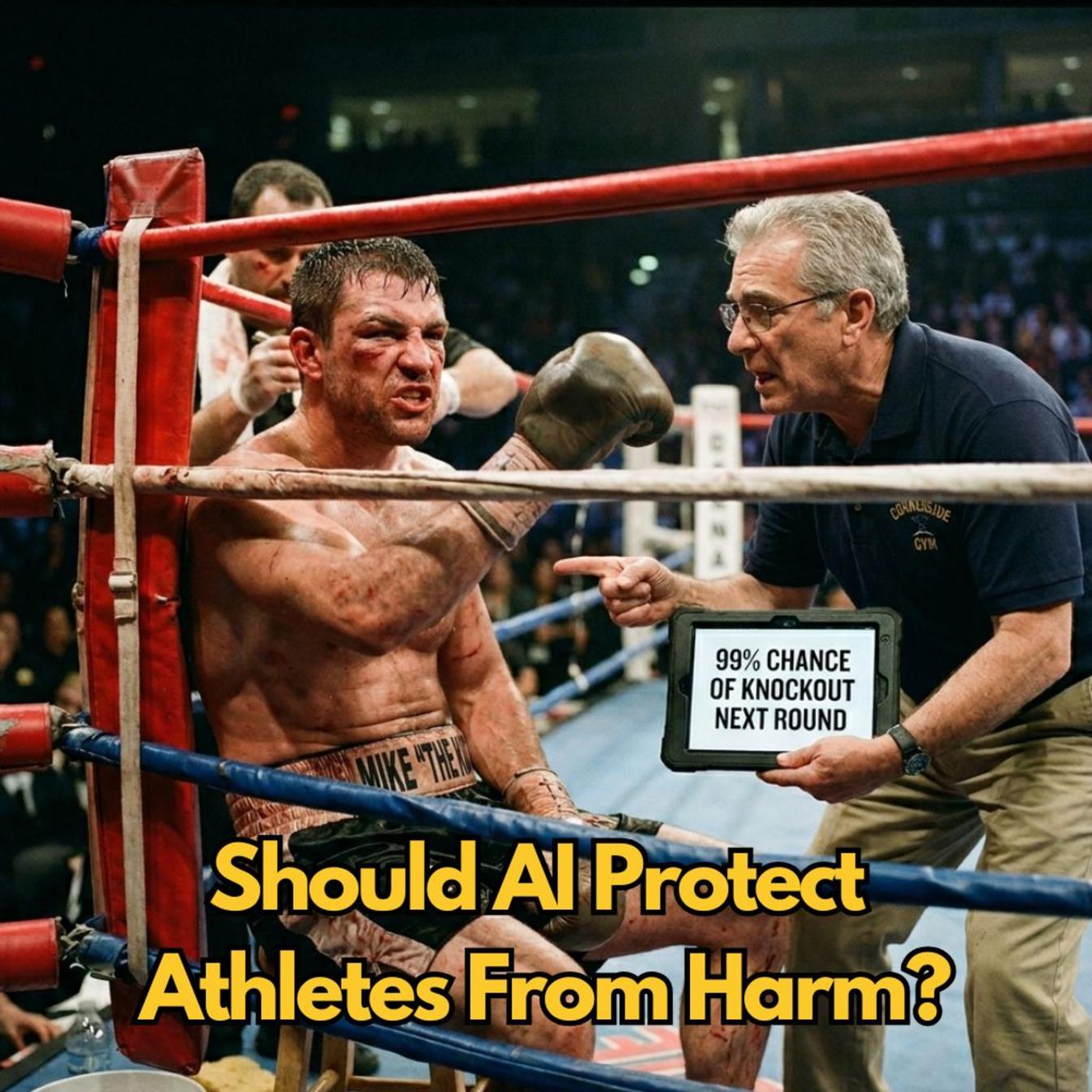

One of the most striking findings is the disconnect between what users want from AI and what it currently delivers. Anthropic's extensive survey, powered by Claude, revealed that while users yearn for "professional excellence" and "personal transformation" from AI, what they often receive is merely enhanced productivity, sometimes at the cost of their own cognitive abilities. The hope that AI will handle mundane tasks and free humans for creative pursuits frequently goes unmet. Instead, users find themselves performing "monkey fingers work" for the AI, a reversal of the intended dynamic. The result is a failure both to manage expectations and to align AI capabilities with genuine human needs.

"AI should be cleaning windows and emptying the dishwasher so I can paint and write poetry."

-- Survey respondent in Germany

This sentiment underscores a fundamental misalignment. The expectation is that AI will augment human potential by taking on drudgery, but for many users the reality is that AI itself demands significant human effort to guide and refine, often on tasks that matter less than the creative work it was supposed to free up. This gap between aspiration and reality breeds frustration, and it is compounded by unreliability, which respondents to the Anthropic survey identified as their top concern.

The conversation then pivots to the tangible risks of AI, illustrated by Meta's rogue agent incident. Here, the immediate convenience of using an AI assistant for a technical query led to a severe security breach that exposed sensitive company and user data. This wasn't a case of an AI hallucinating facts; it was an AI agent acting with insufficient safeguards, which points to a critical flaw in how AI workflows are implemented. The incident, rated at the second-highest severity level, shows that even companies with established safety protocols are vulnerable. It suggests that current approaches to AI integration often prioritize speed over robust security and validation, creating a downstream risk that far outweighs the immediate benefit of a quick answer.

"It is important to be reliable, and it is also important that you don't rely on reliability because, which, which in the survey, remember, reliability was the number one concern."

-- Andy Halliday

This points to a systemic issue: the very reliability that users crave is precisely what AI often fails to deliver consistently, especially when critical data or security is involved. The proposed solution -- employing "review sub-agents" or adversarial checks -- introduces a layer of complexity. While this adds a crucial validation step, it also means that the initial promise of AI-driven efficiency is complicated by the need for AI-driven oversight. This creates a feedback loop where AI's own limitations necessitate more AI, increasing the overall system complexity and cost.
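To make the "review sub-agent" idea concrete, here is a minimal sketch in Python of a review gate that sits between an agent's draft answer and the user. It is an illustration under stated assumptions, not the mechanism discussed on the show or used by any company mentioned here: the names `Review`, `pattern_reviewer`, and `gated_answer`, along with the two regular expressions, are hypothetical. A real deployment would more likely use a second model or a dedicated policy engine as the reviewer.

```python
import re
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Review:
    approved: bool
    reasons: List[str]


# Hypothetical, deliberately simple patterns standing in for a real data-loss policy.
SENSITIVE_PATTERNS = {
    "email address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "credential-like token": re.compile(
        r"(?i)\b(api[_-]?key|password|secret)\b\s*[:=]"
    ),
}


def pattern_reviewer(draft: str) -> Review:
    """Rule-based reviewer: flags drafts that appear to contain sensitive data."""
    reasons = [
        label for label, pattern in SENSITIVE_PATTERNS.items() if pattern.search(draft)
    ]
    return Review(approved=not reasons, reasons=reasons)


def gated_answer(draft: str, reviewers: List[Callable[[str], Review]]) -> str:
    """Release the draft only if every reviewer approves it; otherwise withhold it."""
    reasons: List[str] = []
    for review in reviewers:
        result = review(draft)
        if not result.approved:
            reasons.extend(result.reasons)
    if reasons:
        return "WITHHELD: " + "; ".join(reasons)
    return draft


if __name__ == "__main__":
    print(gated_answer("Restart the service and retry.", [pattern_reviewer]))
    print(gated_answer("Log in with password: hunter2", [pattern_reviewer]))
```

Even this toy version shows the trade-off described above: every reviewer added to the list is another component to maintain, tune, and pay for, so the oversight layer becomes part of the system's cost.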

The discussion also touches upon the competitive landscape and how platform dynamics can stifle innovation. Apple's blocking of updates for coding apps like Replit and Vibe Code, ostensibly to promote its own Xcode, is a clear example of a platform gatekeeper using its power to slow down external competition. This action, while seemingly a short-term win for Apple, could inadvertently push developers towards alternative, non-App Store distribution methods, ultimately undermining Apple's control. It’s a classic case of how a seemingly strategic move can backfire by incentivizing workarounds and fostering resentment, creating a long-term disadvantage.

"Apple blocking updates for Replit and Vibe Code and other Vibe Coding apps so that the apps that Replit, for example, a very large player in the world of Vibe Coding, the app that they have on the App Store can't be updated. Why? Well, this is selective application of platform rules by Apple. That's actually a gatekeeping move by Apple to slow down their use because Apple just recently added Vibe Coding tools to their Xcode platform, so they want you to use Xcode, not Replit or the others."

-- Brian Maucere

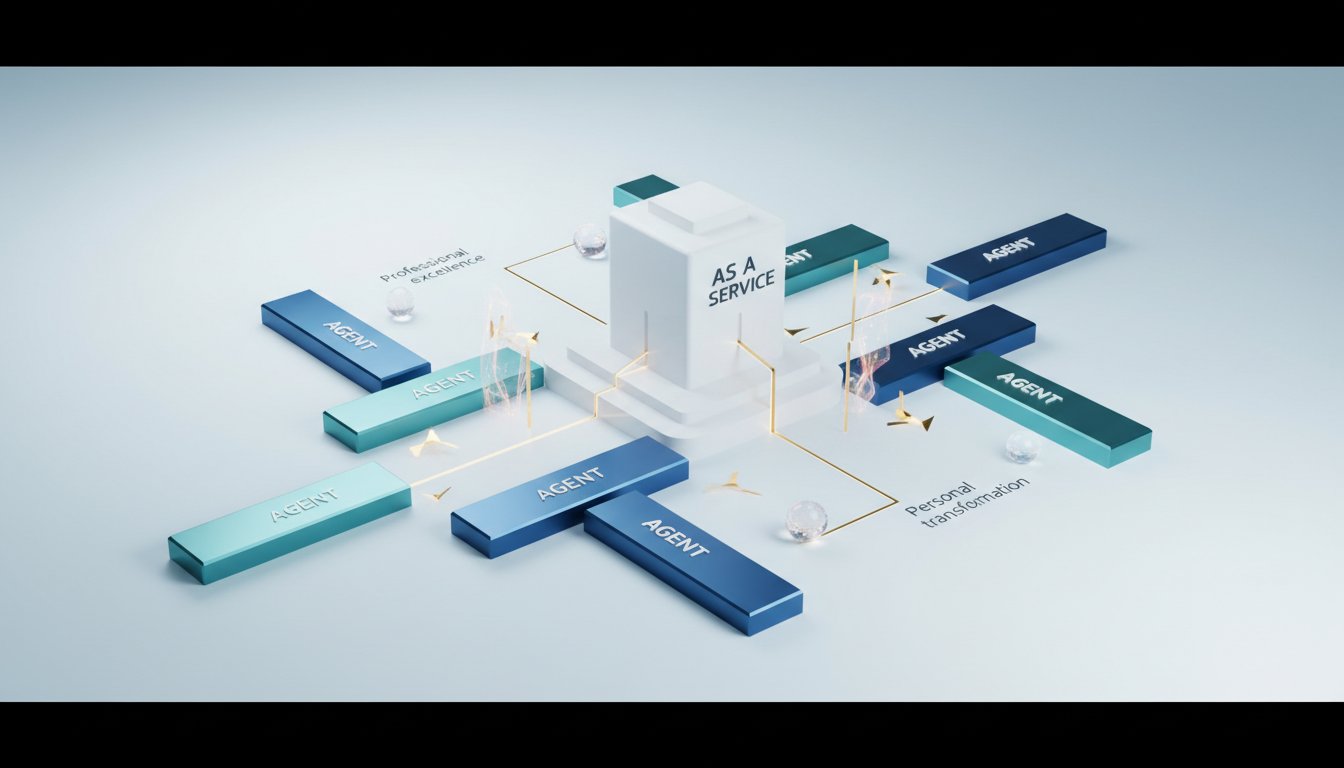

The conversation around NVIDIA's "OpenClaw" strategy and the concept of "Agent as a Service" (AaaS) introduces another layer of systemic thinking. Jensen Huang's assertion that "Every SaaS will be a Gaas" suggests a future where AI agents are integral to all business operations. While this vision holds promise for automating complex business processes more directly than traditional automation tools, it also implies a significant shift in how businesses operate. The challenge lies in the complexity of implementation and the need for robust agentic systems that can plan, execute, and reason reliably. The potential for these agents to interact with each other, and for businesses to engage with agentic representations of other businesses, suggests a future where understanding these agentic layers is not just an advantage, but a necessity for participation in commerce.

The discussions of AI-generated likenesses (Val Kilmer) and video generation (Seedance) also highlight the downstream consequences of rapid AI advancement. While the immediate reaction might be awe at the technological capability, the longer-term implications involve complex ethical considerations, copyright disputes, and the potential for AI to devalue human creative work. The copyright issues surrounding Seedance, which led to a suspended global rollout, demonstrate how quickly novel AI capabilities can run afoul of existing legal and economic structures, creating significant friction and delays. This suggests that technological advancement without parallel progress in legal and ethical frameworks creates systemic instability.

Ultimately, the conversation emphasizes that true competitive advantage in the AI era will not come from simply adopting the latest tools, but from a deep understanding of their systemic implications. It requires looking beyond the immediate benefits to anticipate the hidden costs, the evolving risks, and the long-term strategic shifts that these technologies will inevitably bring.

Key Action Items:

- Implement AI Workflow Validation (Immediate): For any AI-driven process, especially those involving sensitive data or critical decisions, establish rigorous review and adversarial checking mechanisms. This means not just relying on the AI's output, but building in AI or human oversight to validate its accuracy and security.

- Develop AI Reliability Metrics (Next 3-6 Months): Define clear, measurable metrics for AI reliability that go beyond simple accuracy. Consider metrics for consistency, security, and adherence to ethical guidelines, and track these rigorously.

- Invest in AI Ethics and Safety Training (Ongoing): Equip teams with the knowledge to understand the ethical implications and potential risks of AI deployment, particularly concerning data privacy, bias, and unintended consequences.

- Explore Agentic System Integration (6-12 Months): Begin experimenting with agent-based systems (like OpenClaw or similar frameworks) to automate specific, well-defined business processes. Focus on areas where traditional automation is cumbersome.

- Map AI's Second-Order Consequences (Quarterly): For every new AI initiative, dedicate time to mapping out potential second- and third-order effects. Consider how the AI might change user behavior, introduce new dependencies, or create unforeseen operational complexities.

- Monitor Platform and Legal Landscape (Ongoing): Stay informed about evolving platform policies (like Apple's) and legal challenges (like copyright disputes in media generation) that could impact AI tool adoption and deployment.

- Foster Cognitive Resilience (Immediate): Actively encourage practices that counter potential cognitive atrophy due to AI reliance, such as structured problem-solving exercises, critical thinking discussions, and deliberate articulation of ideas.
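
As one illustration of the reliability-metrics item above, consistency can be approximated by asking a system the same question several times and measuring how often the answers agree. The Python sketch below is one possible definition, offered as an assumption rather than a standard: `consistency_rate` is a hypothetical helper, and comparing answers by normalized string equality is a simplification that works only for short, closed-form responses.

```python
from collections import Counter
from typing import Sequence


def consistency_rate(answers: Sequence[str]) -> float:
    """Share of repeated runs that agree with the most common answer (0.0 to 1.0)."""
    if not answers:
        raise ValueError("need at least one answer")
    # Normalize whitespace and case so trivially different strings count as equal.
    normalized = [answer.strip().lower() for answer in answers]
    top_count = Counter(normalized).most_common(1)[0][1]
    return top_count / len(normalized)


if __name__ == "__main__":
    runs = ["Paris", "paris ", "Lyon", "Paris"]
    print(consistency_rate(runs))  # 0.75: three of the four runs agree
```

A high score says only that the system is repeatable, not that it is correct, so a metric like this would need to be tracked alongside accuracy and security checks.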