AI Agents: Beyond Convenience to Deep Research and Skill Atrophy

The AI agent landscape is rapidly evolving, moving beyond simple chatbots to sophisticated systems capable of complex reasoning and action. While many new tools promise to revolutionize productivity, their practical applications and potential downstream consequences remain unclear. This conversation highlights a critical tension: the allure of immediate convenience versus the risk of eroding fundamental human skills and creating unforeseen dependencies. The core implication is that the true value of AI agents lies not in automating existing tasks but in enabling entirely new forms of personal research, creative expression, and even relationship mediation, provided we navigate the inherent complexities and potential skill atrophy with intentionality.

This analysis moves beyond the hype to examine what AI agents are practically good for and the subtle but significant downstream effects they can have. We explore how tools like Perplexity's "Computer" and NVIDIA's Nemo Claw are reshaping our interaction with information and automation, and how this shift might affect human capabilities. The conversation reveals that the most compelling use cases are often deeply personal and research-oriented, while superficially impressive demos can mask a lack of genuine innovation. AI mediation of human relationships may offer a lifeline in conflict, but it also raises concerns about weakening our own communication and critical thinking skills. These questions matter to anyone building, adopting, or simply trying to understand AI agents, because anticipating the resulting shifts in capability and competitive positioning is itself a strategic advantage.

The Underwhelming Promise of General-Purpose AI Agents

The initial discussion around Perplexity's "Computer" feature reveals a common pitfall in AI development: a disconnect between technical capability and user value. While the technology can perform complex tasks, the suggested use cases, such as generating stock charts or rebuilding historical software, feel redundant. The insight is that an obvious solution to an already easily solved problem does not make a compelling AI agent. Brian Maucere articulates this frustration, stating, "I can't come up with like one thing that I think would be valuable to me that I would want to do in Computer." The immediate consequence is that users are neither engaged nor inspired, which stalls adoption.

However, the conversation pivots to more impactful applications when personal research and memory projects are considered. Andy Halliday shares a deeply satisfying experience using "Computer" to meticulously document his succession of skis, complete with historical context. This demonstrates a powerful, albeit niche, application: AI agents excel when tasked with deep, iterative research that aggregates disparate information into a coherent narrative. Halliday's experience suggests that the true power of these agents lies not in replicating existing web searches but in acting as dedicated research partners for complex, long-term personal projects. The payoff is delayed, but the resulting personal archive is unique in a way that quick, superficial information retrieval is not.

"I asked Computer to do that for me. And so it entered into a dialogue with me, said, 'Okay, I'm going to, I will, we'll find the photos if we can. I'll look for those for you, and then we'll, you know, as we get each in the succession of skis, I'll store that photo for you on your drive, and then we'll move on to the next one.'"

-- Andy Halliday

The contrast between the generic stock ticker examples and Halliday's personal research project underscores a key systemic dynamic: conventional wisdom often leads to solutions that are technically impressive but practically underwhelming because they fail to address genuine, unmet needs. The immediate benefit of a stock chart is minimal when easily accessible elsewhere. The benefit of a curated personal history, however, is profound and enduring, a testament to the power of AI in facilitating deep personal engagement with information.

Navigating the Agentic Frontier: From Browser to Command Line

The introduction of NVIDIA's Nemo Claw and the broader concept of "claws" (autonomous agents) brings a new layer of complexity, specifically around the distinction between browser-based agents and those with command-line interface (CLI) access. Brian Maucere points out the fuzziness: "I'm still fuzzy in the distinction between a persistent, long-running agentic system like Manus or GenSpark or Perplexity Computer and Open Claw. Like, what really distinguishes among those different variants?" This highlights the challenge of understanding the architectural differences and their implications for security and capability.

The critical distinction, as Beth Lyons suggests, lies in the autonomous, recursive nature of these agents and their potential for CLI access. This allows them to move beyond simple task execution to more complex, goal-oriented operations. Lyons explains, "I do think it's the autonomous recursive thing that is what people are talking about... this is the piece that moves AI out of something that needs to be monitored and initiated." This shift represents a significant step towards more independent AI systems.

However, this increased capability comes with amplified risk. Anne Murphy's personal anecdote of accidentally deleting crucial files while experimenting with AI tools serves as a stark warning: "I, for real, for real, somehow a whole bunch of my gems. I downloaded stuff from Claude, from Google Drive down to my hard drive, and then I worried about how much space it had taken up, so I just started deleting things as one does." In this case the deletion was done by hand, but it illustrates a dangerous downstream effect: an agent given the same deep system access could make the same mistake faster and at larger scale, even with good intentions, creating a significant risk of unintended data loss or corruption. The immediate convenience of AI-driven file management or code execution can lead to catastrophic consequences if not handled with extreme caution.

"I think people feel safer using Open Claw than they should, right? I mean, there's like, if you're like, 'Oh, let's just, let's, now there are stories that have come out, but in the hands of people who are very sophisticated, yes, you know, high-level executives in, in the world of AI, you know, getting, getting scratched by the claw, right?"

-- Anne Murphy
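One common way to limit the kind of data loss described above is to never hand an agent a true delete operation. The Python sketch below is a hypothetical guard, not part of Open Claw, Nemo Claw, or any other tool named here. The function name `guarded_delete`, the sandbox directory, and the quarantine folder are all assumptions made for illustration. It refuses paths outside an allowed directory, defaults to a dry run, and moves files to a quarantine folder instead of removing them.

```python
import shutil
from pathlib import Path


def guarded_delete(target: Path, allowed_root: Path, quarantine: Path,
                   dry_run: bool = True) -> str:
    """Move `target` into `quarantine` instead of deleting it permanently.

    Hypothetical helper: refuses anything that is not strictly inside
    `allowed_root`, and changes nothing while `dry_run` is True.
    """
    target = target.resolve()
    allowed_root = allowed_root.resolve()

    # Refuse the sandbox root itself and anything outside it.
    if target == allowed_root or not target.is_relative_to(allowed_root):
        return f"REFUSED: {target} is not inside {allowed_root}"

    if not target.exists():
        return f"SKIPPED: {target} does not exist"

    # Default mode only reports what would happen.
    if dry_run:
        return f"DRY RUN: would quarantine {target}"

    quarantine.mkdir(parents=True, exist_ok=True)
    destination = quarantine / target.name

    # Never overwrite something that was quarantined earlier.
    if destination.exists():
        return f"REFUSED: {destination} already exists in quarantine"

    shutil.move(str(target), str(destination))
    return f"QUARANTINED: {target} -> {destination}"
```

A person can review the quarantine folder before anything is permanently removed, which keeps a human in the loop for the one step that cannot be undone.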

The concept of "local-first" architecture, as described by Andy Halliday, offers a potential mitigation strategy. By prioritizing local processing, these agents can reduce cloud token costs and enhance privacy. This approach suggests a future where AI agents operate more autonomously on personal devices, reducing reliance on external servers and the associated risks. The delayed payoff here is enhanced security and cost-efficiency, built upon a foundation of decentralized AI processing.
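To make the local-first idea concrete, here is a minimal Python sketch of a routing policy. It is an illustration under stated assumptions, not how Nemo Claw or any specific product works: the `Task` type, the `route` and `run` functions, and the character-count threshold are all hypothetical. The policy keeps private prompts on the device unconditionally, defaults everything else to a local model, and escalates to a cloud model only when a prompt exceeds an assumed local limit.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Task:
    prompt: str
    private: bool = False  # True if the prompt must never leave the device


def route(task: Task, local_limit_chars: int = 2000) -> str:
    """Return "local" or "cloud" for a task under a local-first policy."""
    # Private data always stays on the device, regardless of size.
    if task.private:
        return "local"
    # Default to local; escalate only past the assumed local limit.
    if len(task.prompt) <= local_limit_chars:
        return "local"
    return "cloud"


def run(task: Task,
        local_model: Callable[[str], str],
        cloud_model: Callable[[str], str],
        local_limit_chars: int = 2000) -> tuple[str, str]:
    """Send the prompt to the chosen backend and report which one was used."""
    backend = route(task, local_limit_chars)
    model = local_model if backend == "local" else cloud_model
    return backend, model(task.prompt)
```

The models are passed in as plain callables so the policy can be tested without any real model. A prompt-length threshold is a crude stand-in for task difficulty; a real system would likely need a better signal.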

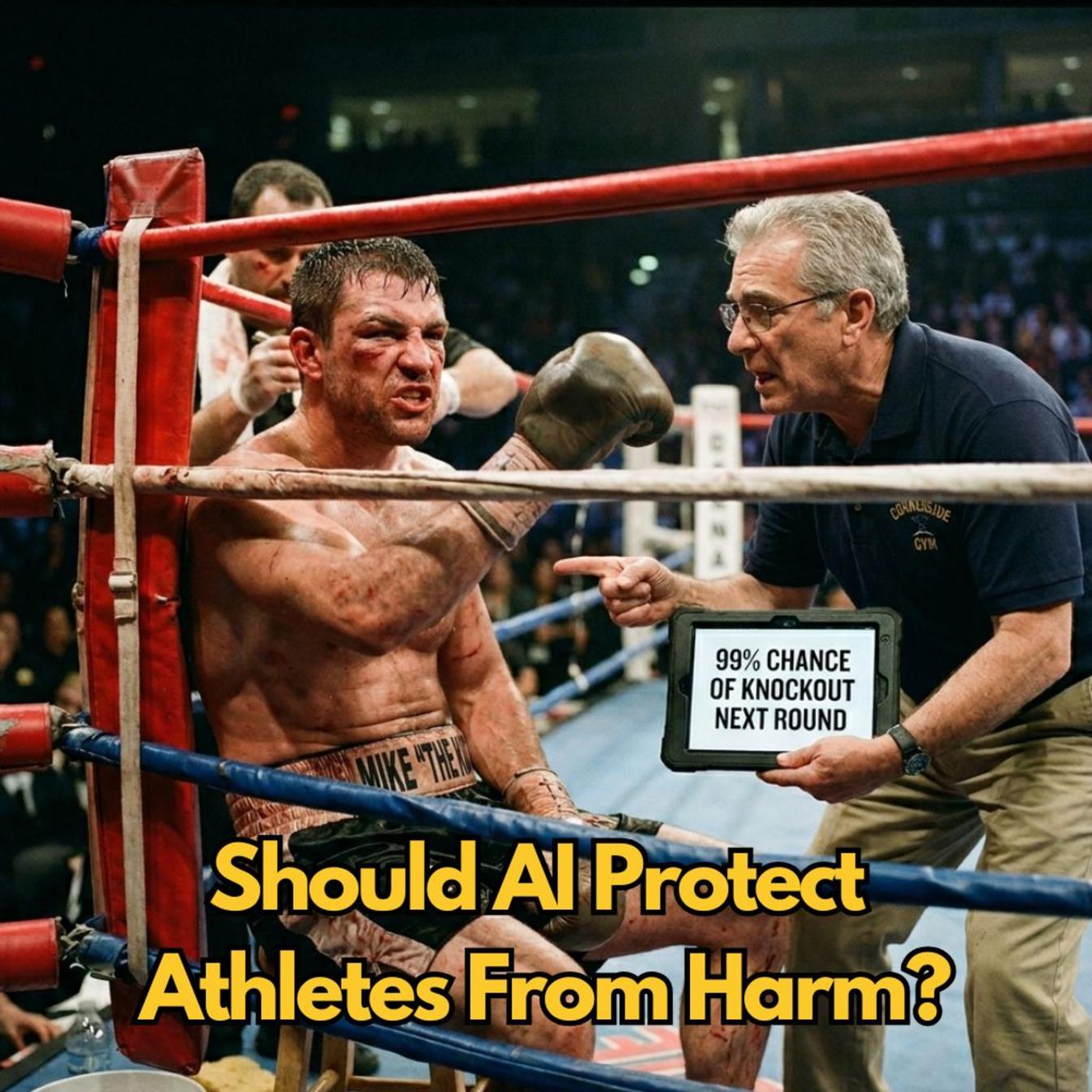

AI as Mediator: A Double-Edged Sword for Human Connection

The conversation takes a profound turn when discussing AI as a mediator in human relationships and conflict. Beth Lyons introduces the idea: "AI as a mediator in difficult relationships and workplace conflict." This presents an intriguing possibility: AI agents could act as neutral third parties, helping to de-escalate tensions and facilitate communication. The immediate benefit is the potential to preserve relationships and achieve shared goals by creating a buffer between individuals.

However, this immediately raises concerns about the potential erosion of human communication skills. Brian Maucere voices this apprehension: "Wouldn't this exacerbate the entire situation? Because now you're like, 'Okay, well, you know what? I'm going to tell my Open Claw to respond to any type of conflict I'm having. I'm going to let my agent do it instead of me learning how to do it.'" The danger is that by outsourcing difficult conversations to AI, individuals may fail to develop the essential skills of empathy, de-escalation, and conflict resolution, leading to a long-term dependency that weakens interpersonal capabilities.

"The joke is, okay, so you're going to have whatever you use, whatever Claw used to answer your WhatsApp. Like, so I saw the GenSpark video this morning about like, 'Oh, we got employees loving GenSpark Claw.' I'm like, 'Okay, so I watched the video, I'm like, all that person did was have GenSpark Claw respond to their family WhatsApp because she doesn't have time to.'"

-- Brian Maucere

Anne Murphy offers a counterpoint, highlighting scenarios where AI mediation could be crucial for individuals facing systemic challenges, such as microaggressions in the workplace. She argues, "AIs don't have an amygdala... Having an intermediary like an AI to kind of temper the reactions that are generated by an amygdala in a human can be useful." This suggests that in situations where human emotional responses are detrimental, an AI's lack of such a response can be a distinct advantage, giving individuals the buffer they need to maintain their professional standing or personal well-being. The delayed payoff here is the preservation of professional or personal stability in hostile environments, achieved by deliberately deploying AI as an intermediary.

The Erosion of Core Skills: AI Fluency vs. Foundational Competence

The final segment of the discussion touches upon a critical concern: the potential for AI to undermine fundamental human cognitive abilities. Michael Clune, a literature professor at Ohio State University, observes that his students are becoming "incapable of reading and analyzing, synthesizing data and all kinds of other skills" due to their reliance on AI. This highlights a significant consequence: over-reliance on AI for analytical tasks can stunt the development of critical thinking, reading comprehension, and synthesis skills. The immediate ease of AI-generated summaries or analyses bypasses the cognitive effort required for genuine learning and skill development.

The university's push for "AI fluency" across all majors is met with skepticism by Clune, who argues that the meaning of this fluency is unclear and that, in his field, these tools actively work against educational goals. This points to a systemic misalignment: the drive for AI adoption can inadvertently undermine the very foundational skills that education aims to cultivate. The conventional wisdom of embracing new technology can, in this context, lead to a degradation of core competencies.

The implication is that while AI offers powerful tools for augmentation, its uncritical adoption risks creating a generation of individuals who are proficient in using AI but deficient in the underlying skills that AI is meant to enhance. The long-term consequence is a potential decline in deep analytical thinking and creative problem-solving, skills that are difficult to replicate and essential for innovation. The true advantage lies not in simply using AI, but in understanding its limitations and ensuring it complements, rather than replaces, the development of fundamental human cognitive abilities.

Key Action Items:

Immediate Actions (Next 1-3 Months):

- Experiment with Perplexity "Computer" for personal research projects: Dedicate time to exploring deep, iterative research tasks that go beyond simple information retrieval. Identify one personal project where an AI agent could act as a dedicated research partner.

- Evaluate "local-first" AI agent architectures: Investigate and pilot any emerging tools or frameworks that prioritize local processing for enhanced privacy and reduced cloud costs.

- Practice mindful AI use in communication: Consciously choose to handle personal or sensitive communications directly, rather than immediately delegating to an AI agent, to maintain and strengthen personal communication skills.

- Document AI-induced errors: Keep a log of any mistakes or unintended consequences encountered when using AI tools, especially those with system access, to build awareness of potential risks.

Medium-Term Investments (3-12 Months):

- Develop clear guidelines for AI agent deployment: For teams or individuals using AI agents with system access (e.g., CLIs), establish strict protocols for usage, oversight, and data handling to prevent accidental data loss or security breaches.

- Explore AI as a conflict support tool, not a replacement: If considering AI for mediation, focus on using it as a supplementary tool to help individuals prepare for difficult conversations or to temper immediate emotional reactions, rather than as a full replacement for direct human interaction.

- Integrate foundational skill development alongside AI adoption: In educational or professional development contexts, ensure that the use of AI tools is paired with explicit training and practice in core skills like critical analysis, synthesis, and direct communication.

Longer-Term Strategic Investments (12-18+ Months):

- Build personal knowledge archives with AI assistance: Leverage AI agents to systematically build and curate personal or professional knowledge bases, focusing on projects that offer unique insights or historical context not readily available elsewhere. This creates a durable competitive advantage through unique data aggregation.

- Foster environments that reward direct human engagement: Actively promote and reward direct communication, problem-solving, and critical thinking within teams, ensuring that AI augmentation enhances these skills rather than diminishing them.

- Evaluate the long-term impact of AI on core competencies: Periodically assess whether the use of AI tools is leading to atrophy of essential skills and adjust strategies accordingly to ensure sustained human capability.