AI Code Review Pricing Sparks Developer Identity Crisis

The $25 Code Review: Unpacking Anthropic's Pricing Controversy and the Looming AI Revolution in Software Development

Anthropic's recent launch of an AI-powered code review feature, priced at $15-$25 per pull request, has ignited a firestorm. The sticker shock is immediate and understandable, but the controversy is about more than cost. The tool confronts developers with an existential anxiety: AI agents are beginning to dismantle long-held rituals like human code review. The debate also exposes a fundamental tension: should AI tools be priced like software subscriptions, or like the labor they ultimately replace? This analysis unpacks the hidden consequences of this pricing, the systemic shifts it signals, and why understanding this moment matters for anyone navigating the accelerating AI landscape.

The Inevitable Obsolescence of Human Code Review

The emergence of AI-driven code review tools, exemplified by Anthropic's Claude Code Review and Cognition's Devin Review, signals a profound shift in the software development lifecycle. This isn't merely an efficiency play; it represents the potential obsolescence of a deeply ingrained human ritual. The traditional code review process, once a cornerstone of software quality and knowledge sharing, is increasingly becoming a bottleneck in an era of AI-generated code. As entrepreneur Ankit Jain argues in his essay "How to Kill the Code Review," the sheer volume and speed of AI-generated code make manual review impractical, if not impossible.

"Humans already couldn't keep up with code reviews when humans wrote code at human speed. Every engineering org I've talked to has the same dirty secret: PRs sitting for days, rubber stamp approvals, and reviewers skimming 500-line diffs because they have their own work to do. We tell ourselves it is a quality gate, but teams have shipped without line-by-line review for decades."

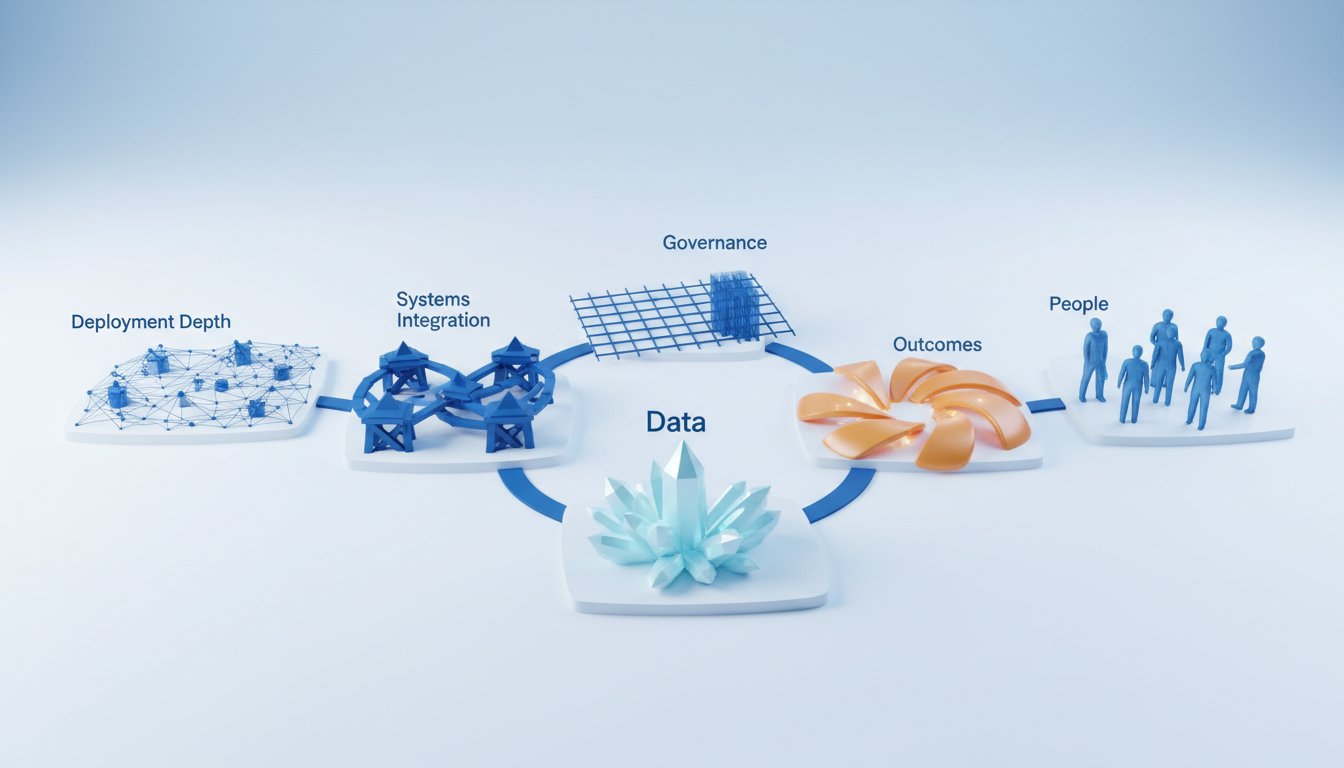

The data supports this contention: teams with high AI adoption merge significantly more pull requests, but their review times also increase dramatically. This suggests that the traditional workflow, designed for human-paced development, cannot scale. Boris Tane, in his piece "The Software Development Lifecycle is Dead," articulates this collapse: the discrete stages of the SDLC--requirements, design, implementation, testing, review, deployment--are merging into a continuous, agent-driven iteration loop.

"The agent doesn't know what step it's on because there are no steps. There's just intent, context, and iteration."

In this new paradigm, the pull request, a symbol of human gatekeeping, becomes a "fake bottleneck." Forcing a human ritual onto a machine workflow is not rigor; it's an identity crisis for developers who have built careers around these processes. This shift implies that the skills and workflows that defined software engineering for years are becoming outdated, creating a palpable sense of existential dread among practitioners.

The Sticker Shock and the Shifting Economics of AI

The pricing of Anthropic's Claude Code Review feature, $15 to $25 per pull request, has understandably triggered significant backlash. Extrapolated across a team's daily activity, this price point appears exorbitant compared to traditional software subscriptions or even the perceived cost of human review. Developers are quick to point out that other tools, like Devin Review, offer similar functionality for free, or that unlimited token plans on other platforms could be used for code review at a much lower cost. The sticker shock reflects a common misunderstanding: AI inference costs are beginning to resemble labor costs more than software licensing fees.

"The appetite for tokens is insatiable. C-level FOMO is off the charts, and every spare dollar is going into Claude Code, Cursor, Amp, etc. Tens or even hundreds of millions of dollars in engineering organizations that cost billions in salaries seems reasonable. But if CTOs can't deliver headcount savings, we're going to see some real whiplash on token budgets in the next two to four quarters."

This observation from Sourcegraph CEO Dan Adler points to a looming reckoning. The current era of "subsidized inference" is likely unsustainable. As AI agents become more deeply integrated into workflows, particularly in high-volume areas like code generation and review, the cost profile will become a significant factor. Companies that fail to achieve commensurate cost savings through headcount reduction or efficiency gains elsewhere may face severe budget whiplash. This shift implies that the value proposition of AI tools will increasingly be measured not just by their capabilities, but by their ability to deliver tangible economic benefits that offset their inference costs.
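The extrapolation behind the sticker shock can be sketched as simple arithmetic. Only the $15-$25 per-PR range comes from the pricing discussed above; the team size, PR volume, and loaded hourly rate below are hypothetical inputs chosen for illustration, and the function names are made up for this sketch.

```python
# Only the $15-$25 per-PR range comes from the article; everything else is assumed.
PRICE_LOW_USD = 15
PRICE_HIGH_USD = 25


def monthly_review_cost(developers, prs_per_dev_per_week, price_per_pr, weeks_per_month=4):
    """Estimated monthly spend if every pull request is reviewed at a flat per-PR price."""
    return developers * prs_per_dev_per_week * weeks_per_month * price_per_pr


def break_even_review_minutes(price_per_pr, loaded_hourly_rate_usd):
    """Minutes of human reviewer time that cost the same as one AI review."""
    return price_per_pr * 60 / loaded_hourly_rate_usd


# Hypothetical team: 20 developers, 5 PRs each per week.
low = monthly_review_cost(20, 5, PRICE_LOW_USD)
high = monthly_review_cost(20, 5, PRICE_HIGH_USD)
print(f"Estimated monthly spend: ${low:,} to ${high:,}")

# Hypothetical loaded cost of a reviewer: $100 per hour.
for price in (PRICE_LOW_USD, PRICE_HIGH_USD):
    minutes = break_even_review_minutes(price, 100)
    print(f"${price} per PR equals {minutes:.0f} minutes of reviewer time")
```

Under these assumed inputs the monthly bill lands between $6,000 and $10,000, while each review costs the equivalent of 9 to 15 minutes of a reviewer's time. Which of those two framings a team adopts is essentially the subscription-versus-labor question.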

The Identity Crisis and the Future of Knowledge Work

Beyond the financial implications, the controversy surrounding AI code review touches on a deeper existential anxiety. For many developers, reviewing code is not just a task; it's a core part of their professional identity. The idea that an AI can perform this function, and do so more efficiently, challenges fundamental assumptions about their role and value. This sentiment is captured in the viral video of a developer lamenting, "I was a 10x engineer, now I'm useless." Nor is this feeling of obsolescence unique to developers; their experience is a bellwether for other knowledge-work professions.

The way developers grapple with this disruption--their personal and professional adaptations, and how organizations restructure around these new capabilities--will likely serve as a blueprint for how other industries navigate the AI revolution. The current moment is a liminal one, forcing a redefinition of what constitutes valuable human contribution in an increasingly automated world. The resistance to AI code review, while seemingly focused on price, is also a defense of established professional identities and a struggle to find meaning in work that is rapidly being redefined. This tension between the efficiency gains offered by AI and the preservation of human roles and identities is a central challenge that will shape the future of work across all sectors.

Key Action Items

- Immediate Action (Next Quarter):

  - Audit AI Tooling Costs: Begin tracking token usage and AI inference costs for all AI-powered development tools. Identify which tools offer the clearest ROI in terms of productivity gains versus direct cost.

  - Pilot AI-Assisted Code Review: Implement a small-scale pilot of AI code review tools (e.g., Anthropic's Claude Code Review, Cognition's Devin Review) on non-critical projects. Focus on evaluating accuracy, efficiency gains, and the actual time saved for human reviewers.

  - Reframe Developer Roles: Initiate conversations within development teams about how AI tools are changing roles. Focus on upskilling developers for tasks that complement AI, such as prompt engineering, AI system oversight, and complex problem-solving that AI cannot yet handle.

- Medium-Term Investment (6-12 Months):

  - Develop AI Integration Strategy: Create a strategic plan for integrating AI agents into the broader software development lifecycle, moving beyond individual tools to a cohesive workflow. This includes defining how AI will handle tasks traditionally performed by humans.

  - Invest in AI Observability and Security: Prioritize tools and processes for monitoring AI agent behavior, ensuring security, and maintaining compliance, especially as AI takes on more critical functions. Consider solutions like Promptfoo (now part of OpenAI) or AIUC-1 certification.

  - Explore Agentic Workflow Redesign: Actively redesign workflows to leverage AI agents fully, rather than simply augmenting existing human processes. This may involve eliminating traditional bottlenecks like manual code review entirely.

- Long-Term Investment (12-18 Months):

  - Quantify Headcount Savings: Establish clear metrics for measuring headcount savings or reallocation driven by AI adoption. This is crucial for justifying ongoing AI investment and managing token budget expectations.

  - Foster a Culture of Continuous Adaptation: Cultivate an organizational culture that embraces continuous learning and adaptation to AI advancements. This includes providing ongoing training and encouraging experimentation with new AI capabilities.

  - Strategic Partnership Evaluation: Continuously evaluate partnerships with AI providers, considering their long-term viability, pricing models, and potential for platform lock-in or competitive product development.
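As a starting point for the "Audit AI Tooling Costs" item, a team could aggregate its own usage log per tool and compare spend against the estimated value of reviewer time saved. The sketch below is illustrative only: the `UsageRecord` fields, the tool labels, and the sample figures are hypothetical, and the time-saved estimates would have to come from the team's own pilot measurements.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class UsageRecord:
    tool: str             # hypothetical tool label
    cost_usd: float       # inference or per-PR charge billed for this record
    minutes_saved: float  # reviewer time the team estimates was saved


def roi_by_tool(records, loaded_hourly_rate_usd):
    """Return {tool: (total_cost, value_of_time_saved, value_to_cost_ratio)}."""
    cost = defaultdict(float)
    minutes = defaultdict(float)
    for record in records:
        cost[record.tool] += record.cost_usd
        minutes[record.tool] += record.minutes_saved

    report = {}
    for tool, total_cost in cost.items():
        value = minutes[tool] * loaded_hourly_rate_usd / 60
        # A tool with zero recorded cost gets an infinite ratio rather than an error.
        ratio = value / total_cost if total_cost else float("inf")
        report[tool] = (total_cost, value, ratio)
    return report


# Made-up sample data for illustration.
records = [
    UsageRecord("tool-a", 20.0, 30.0),
    UsageRecord("tool-a", 20.0, 30.0),
    UsageRecord("tool-b", 5.0, 3.0),
]
report = roi_by_tool(records, loaded_hourly_rate_usd=100)
for tool, (total_cost, value, ratio) in sorted(report.items()):
    print(f"{tool}: cost ${total_cost:.2f}, time saved worth ${value:.2f}, ratio {ratio:.2f}")
```

A ratio above 1 means the estimated time saved is worth more than the tool costs; a ratio at or below 1 marks a tool whose spend is hard to justify without savings elsewhere.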