AI Maturity Maps Reveal Misleading Adoption Metrics

The AI Daily Brief podcast episode "Introducing Maturity Maps -- A New Way to Measure AI Adoption" reveals a critical gap in how organizations are measuring their progress with AI and agentic systems. The core thesis is that traditional benchmarks are obsolete, leading companies to overestimate their AI adoption while falling behind competitors. This conversation highlights the hidden consequences of this measurement deficit: a misallocation of resources, a widening gap between leadership perception and employee reality, and a failure to build the foundational systems needed for true AI value. Leaders in technology, strategy, and operations should read this to understand the non-obvious dynamics of AI readiness and gain a competitive advantage by focusing on systemic maturity rather than superficial adoption metrics.

The Illusion of Progress: Why AI Adoption Metrics Are Misleading

The rapid pace of AI adoption has created a dangerous illusion. As companies scramble to integrate new tools and workflows, they often lack the metrics needed to gauge their progress accurately. This episode of The AI Daily Brief, which introduces "Maturity Maps," argues that conventional benchmarks are not just inadequate; they are actively harmful, leading organizations to believe they are further along than they actually are. This disconnect has profound downstream effects on resource allocation, employee morale, and ultimately competitive positioning.

One of the most pervasive findings across recent AI research is what the episode terms the "adoption embedding gap." While a high percentage of teams claim to be using AI, their use of these tools is often shallow. The question is not whether people have access to tools like ChatGPT; it is how deeply AI capabilities are woven into core workflows and autonomous systems. The consequence is that a company might celebrate a 30% increase in content output, only to discover that competitors have achieved 50% growth, which means its apparent progress is actually a decline relative to those competitors.
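To make the arithmetic behind the 30% versus 50% example concrete, here is a minimal Python sketch. It assumes, purely for illustration, that both companies started from the same baseline output; the function name `relative_position` is hypothetical and does not come from the episode.

```python
def relative_position(own_growth: float, competitor_growth: float) -> float:
    """Return the fractional change in output relative to a competitor.

    Both arguments are fractional growth rates (0.30 means +30%).
    Assumes both parties started from the same baseline.
    """
    return (1 + own_growth) / (1 + competitor_growth) - 1


# Figures from the example in the text: +30% for us, +50% for the competitor.
change = relative_position(0.30, 0.50)
print(f"Change relative to competitor: {change:.1%}")
```

Under that equal-baseline assumption, growing 30% while a competitor grows 50% leaves the company roughly 13% behind where it stood relative to that competitor.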

"When we don't know how we're doing relative to peers and competitors, it makes it really hard for us to judge what we need to change, what we need to shift, and what we need to do next."

This gap is starkly illustrated by the divergence between worker-level data and leader-level perceptions. A significant majority of leaders report adequate AI training, while a much smaller percentage of employees agree. Similarly, leaders may prioritize AI as a strategic initiative, yet frontline staff feel their organizations are not proactively upskilling them. This disconnect creates a bottleneck, as the human element--often neglected in favor of infrastructure--becomes the primary constraint on realizing AI value. The episode points to Deloitte's finding that a staggering 93% of AI spend goes to infrastructure, with only 7% allocated to people-related initiatives. This misallocation is a direct consequence of focusing on tool adoption rather than holistic maturity.

The episode also underscores the critical role of data, not just as one dimension of maturity but as the foundation on which all other AI capabilities are built. Without robust, well-managed access to proprietary data--customer history, codebases, financial records--AI applications are relegated to basic assisted usage. This "data as a ceiling" phenomenon means that weak data access caps what even advanced AI models can deliver, because they falter without the right context. This is precisely why traditional benchmarks, which often focus on platform vendors rather than systemic integration, are becoming obsolete.

"Anyone who's felt the sting of the capability overhang -- in other words, the gap between what AI can do and what we're actually using it for -- knows that raw capability isn't really the question. It's the systems we put around it to get value from it."

Furthermore, the rush to adopt has led to a widespread challenge in outcome measurement. The pressure to demonstrate progress quickly has meant that organizations haven't paused to establish robust ROI tracking. This creates a situation where adoption is celebrated, but the actual business value remains elusive, making it difficult to justify further investment or course-correct. The episode suggests that this will be an area of significant focus and improvement in the coming quarters, as organizations realize the need to move beyond simply deploying tools to demonstrating tangible results.

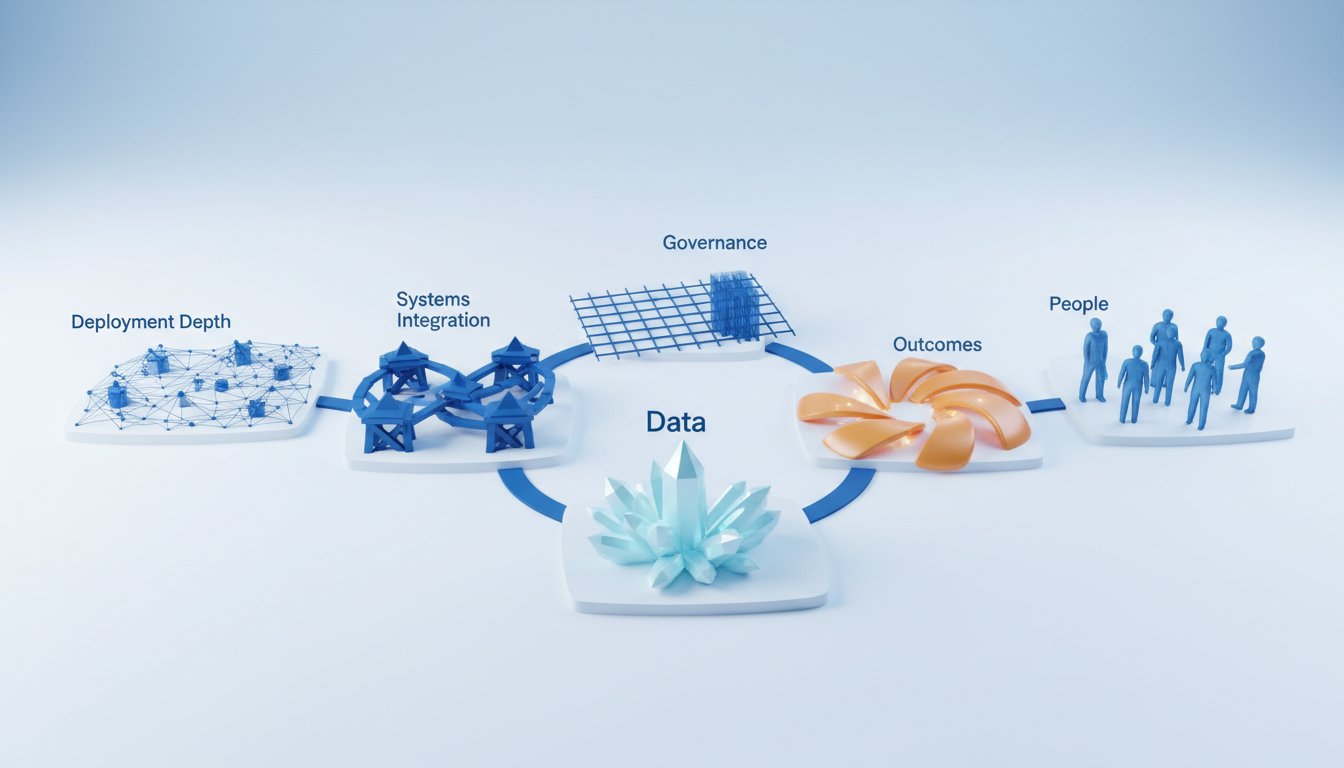

The introduction of "AI Maturity Maps" offers a new framework to combat these issues. By assessing AI readiness across six dimensions--Deployment Depth, Systems Integration, Data, Outcomes, People, and Governance--these maps provide a more nuanced view of an organization's true AI maturity. The maps highlight that most functions are not "on track," revealing a significant "capability overhang" where potential outstrips actual implementation. This is not merely an academic exercise; it's a strategic imperative. Organizations that understand and address these gaps--particularly in people and data--will build a more sustainable and defensible competitive advantage, one that pays off not in immediate buzz, but in long-term, impactful value.
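One way to picture a maturity map is as a per-dimension comparison against an "on track" benchmark. The sketch below is illustrative only: the six dimension names come from the episode, but the 1-5 scale, the scores, the benchmark values, and the `maturity_gaps` helper are all hypothetical assumptions, not the episode's actual scoring method.

```python
# The six dimensions named in the episode.
DIMENSIONS = [
    "Deployment Depth",
    "Systems Integration",
    "Data",
    "Outcomes",
    "People",
    "Governance",
]


def maturity_gaps(scores: dict[str, int], on_track: dict[str, int]) -> list[tuple[str, int]]:
    """Return (dimension, shortfall) pairs for dimensions below benchmark.

    Results are ordered by largest shortfall first; ties keep the
    order in which the dimensions are listed above.
    """
    shortfalls = [
        (dim, on_track[dim] - scores[dim])
        for dim in DIMENSIONS
        if scores[dim] < on_track[dim]
    ]
    return sorted(shortfalls, key=lambda item: item[1], reverse=True)


# Hypothetical self-assessment on an assumed 1-5 scale.
example_scores = {
    "Deployment Depth": 3,
    "Systems Integration": 2,
    "Data": 2,
    "Outcomes": 1,
    "People": 1,
    "Governance": 3,
}
# Hypothetical "on track" benchmark: 3 on every dimension.
example_on_track = {dim: 3 for dim in DIMENSIONS}

for dim, gap in maturity_gaps(example_scores, example_on_track):
    print(f"{dim}: {gap} point(s) below the assumed benchmark")
```

Ranking dimensions by shortfall, rather than averaging them into one score, keeps the weakest areas visible, which matches the article's emphasis on people and data as the gaps most worth addressing.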

Key Action Items

- Immediate Action (This Quarter):

  - Take the AI Maturity Map quiz at bsuper.ai/quiz to benchmark your organization's current AI readiness against industry averages and "on track" benchmarks.

  - Review your organization's AI spend. If it heavily favors infrastructure over people (upskilling, training, change management), re-evaluate resource allocation.

  - Initiate a cross-functional working group to assess data accessibility and quality for AI initiatives, identifying immediate steps to improve data pipelines.

- Short-Term Investment (Next 1-2 Quarters):

  - Develop and implement targeted upskilling programs for employees, focusing on both AI tool usage and the critical thinking skills needed to manage AI-augmented workflows.

  - Establish clear, measurable KPIs for AI initiatives that go beyond simple adoption rates, focusing on demonstrable outcomes and ROI.

  - Begin formalizing AI governance frameworks, particularly in areas like security and data privacy, even if current adoption is limited.

- Long-Term Investment (6-18 Months):

  - Integrate AI capabilities deeply into core revenue-generating workflows, moving beyond standalone tool usage to systemic automation and agentic systems.

  - Build a robust, scalable data strategy that ensures AI has access to high-quality, contextual information for advanced applications.

  - Continuously refine AI maturity benchmarks, considering organizational size and industry, to ensure ongoing relevance and strategic alignment.