The AI Daily Brief: AI's Great Divergence

In this conversation, NLW of The AI Daily Brief dissects recent reports from Stanford and PwC to reveal a widening chasm in how artificial intelligence is impacting society and business. The core thesis is that AI is not a monolithic force; instead, it's creating distinct divides: between those who understand and leverage its potential (experts, leading companies) and those who don't (the public, laggard companies). This divergence has profound, often hidden, consequences, from job market stratification to the concentration of economic gains. Leaders, technologists, and strategists should read this to understand the non-obvious implications of AI adoption and gain a crucial advantage by anticipating these widening gaps.

The Widening Chasm: Experts vs. The Public

The most striking divergence highlighted by Stanford's AI Index Report is the difference in optimism between AI experts and the general public. While 73% of experts anticipate a positive impact of AI on jobs, a mere 23% of the public shares this view. The gap extends across other domains: the economy (69% vs. 21%), medical care (84% vs. 44%), and K-12 education (61% vs. 24%). This isn't just a difference in outlook; it suggests a fundamental disconnect in understanding AI's capabilities and potential benefits. The public's perception, often shaped by sensationalized headlines or a lack of direct experience, leans towards apprehension, particularly regarding job displacement: almost two-thirds of US adults believe AI will lead to fewer jobs. This disparity creates fertile ground for misinformed policy decisions and public anxiety, while experts continue to build and deploy the technology, potentially widening the gap in practical application and economic benefit.

"And other parts of the study show pessimism in more acute ways. When asked whether AI will create or eliminate jobs, almost a full two-thirds of US adults believe that it will lead to fewer jobs, although perhaps surprisingly, 39% of AI experts also think that it will lead to fewer jobs."

This divergence in perception is not merely academic; it has tangible downstream effects. If the public and policymakers largely view AI with suspicion or fear, it can stifle innovation through overly restrictive regulations or a lack of public buy-in for AI deployment. Conversely, the experts, armed with deeper knowledge, are more likely to be the ones capturing the economic gains. The report also notes that AI skills are largely being acquired informally, outside of structured educational settings, further stratifying those who can effectively leverage these tools.

The Enterprise Divide: Leaders vs. Laggards

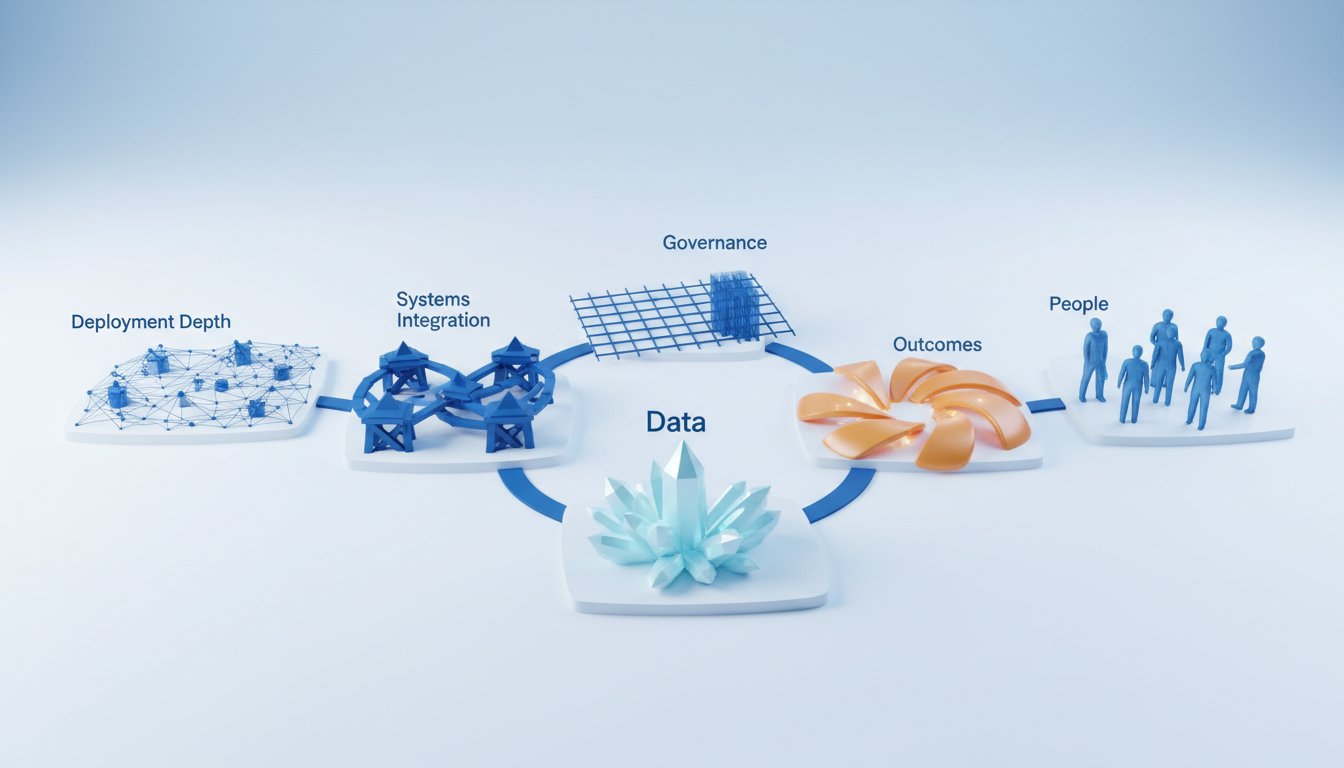

PwC's annual AI Performance Study provides a stark view of this divergence within the corporate world. The headline figure, that 75% of AI's economic gains are captured by the top fifth of companies, is a powerful indicator of a widening gap between AI leaders and laggards. This isn't about simply adopting more AI tools; it's about a fundamental difference in approach. Leading organizations are twice as likely to redesign workflows to incorporate AI, rather than just bolt on new tools. They are also two to three times more likely to use AI for growth opportunities and business model reinvention. This is the distinction between "efficiency AI" (doing the same with less) and "opportunity AI" (doing more, or doing new things).
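To put the headline figure in per-company terms, here is a minimal back-of-the-envelope sketch. It assumes, purely for illustration, that gains are spread evenly within each group (the top fifth and the remaining four-fifths); the study summary above does not say this, and the function name `per_company_ratio` is hypothetical.

```python
def per_company_ratio(top_share_of_gains: float, top_share_of_companies: float) -> float:
    """Average gain per leading company divided by average gain per other company.

    Assumption (not from the study): gains are evenly distributed within each group.
    """
    # Gain captured per unit of company population in the leading group
    leaders = top_share_of_gains / top_share_of_companies
    # Same quantity for everyone else
    others = (1 - top_share_of_gains) / (1 - top_share_of_companies)
    return leaders / others


# 75% of gains captured by the top 20% of companies
ratio = per_company_ratio(0.75, 0.20)
print(round(ratio, 1))
```

Under that simplifying assumption, the average leader captures roughly twelve times the gains of the average company outside the top fifth, which is one way to read how lopsided a 75/20 split is.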

"The research shows that these top-performing companies are not simply deploying more AI tools. Instead, they are using AI as a catalyst for growth and business reinvention, particularly by pursuing new revenue opportunities created as industries converge while building strong foundations around data governance and trust."

The consequence of this approach is significant. Leaders are not only more likely to see financial benefits but are also building more robust and trustworthy AI systems. They are more likely to implement responsible AI frameworks and cross-functional governance boards, and their employees trust AI outputs more than those in laggard companies. This creates a virtuous cycle for leaders: better AI integration leads to higher trust, which leads to more effective use, driving greater financial performance. Laggards, focused on incremental efficiency gains or simply adopting off-the-shelf tools without strategic integration, risk falling further behind, unable to capitalize on AI's transformative potential. The payoff for leaders takes longer to arrive because it depends on strategic redesign and governance, but it creates a durable competitive advantage that is difficult for others to replicate.

The Jagged Frontier: Skill Stratification and Job Market Shifts

The concept of AI's "jagged frontier" (massively good in some areas, pathetically awful in others) also contributes to a divergence in skill requirements and employment. Stanford's report points to a concerning trend: productivity gains from AI are appearing in fields where entry-level employment is declining. For example, in software development, where AI has shown clear productivity gains (14% to 26%), US developers aged 22-25 saw employment fall nearly 20% from 2023 to 2024. Meanwhile, employment for older developers continued to grow.

This isn't to say AI is eliminating jobs wholesale, but it is fundamentally changing the nature of work and the demand for certain skills. The implication is that AI is automating tasks previously handled by junior roles, while augmenting the capabilities of more experienced professionals. This creates a stratification in the job market: those who can leverage AI to enhance their expertise are in demand, while those whose roles are primarily composed of tasks that AI can now perform are at risk. The conventional wisdom might suggest AI will simply replace jobs, but the reality is more nuanced: it's reshaping roles, demanding new skills, and potentially creating a bifurcated workforce where experience augmented by AI commands a premium, while rote tasks are increasingly automated.

The Geopolitical Chessboard: Divergence in International AI Dynamics

Beyond the societal and corporate divides, the conversation touches on geopolitical divergence, particularly between the US and China. Jensen Huang's comments on the need for dialogue highlight a split in strategy: cooperation versus strict export controls. Huang argues that China will inevitably develop advanced AI capabilities, and that the question isn't if but how they will use them. He suggests that viewing China solely as an adversary and victimizing them is counterproductive. Instead, he advocates for dialogue to agree on what not to use AI for, drawing a parallel to nuclear deterrence.

"If you're worried about them, what is the best way to create a safe world? Victimizing them, turning them into an enemy, likely isn't the best answer."

This perspective suggests that a purely adversarial stance might lead to a less safe world by fostering an arms race rather than mutual understanding. The consequence of continued divergence and open hostility could be an escalation of AI capabilities without shared safety protocols. Conversely, dialogue, even between adversaries, could lead to a more stable outcome. This highlights how geopolitical decisions, driven by perceptions of divergence and threat, can have long-term global consequences, impacting the trajectory of AI development and its ultimate impact on humanity.

Actionable Takeaways for Navigating the Divergence

- Embrace Opportunity AI: Shift focus from merely optimizing existing processes with AI (efficiency AI) to using AI as a catalyst for new products, services, and business models (opportunity AI). This requires strategic redesign, not just tool adoption.

- Invest in AI Literacy: For individuals and organizations, actively pursue AI education and training. Understand the "jagged frontier" of AI capabilities to identify where it can augment, not just replace, human work.

- Develop Governance Frameworks: Leading companies are implementing responsible AI frameworks and governance boards. Prioritize data governance, trust, and ethical considerations to build robust and reliable AI systems. This is a longer-term investment that builds crucial trust.

- Anticipate Skill Shifts: Recognize that AI is reshaping the job market. Focus on developing skills that complement AI, such as critical thinking, complex problem-solving, and strategic decision-making, especially for entry-level roles where automation is more prevalent.

- Foster Dialogue (Where Possible): While competition exists, explore avenues for dialogue on AI safety and ethical use, particularly in geopolitical contexts. This is a long-term play for global stability.

- Redesign Workflows, Don't Just Add Tools: Instead of simply integrating AI tools into existing processes, fundamentally rethink workflows to leverage AI's full potential. This is a more challenging but ultimately more rewarding path.

- Accept Immediate Discomfort for Long-Term Gain: The strategic redesign and governance required for true AI leadership involve upfront effort and potential discomfort. This is precisely where durable competitive advantage is built, as most organizations opt for the easier, less impactful path. The payoff arrives in 12-18 months and beyond.