The AI Daily Brief episode "All of AI's New Models and Tools" points to a subtle but critical shift in the AI landscape: a growing emphasis on agentic capabilities and on the infrastructure required to support them. Headlines tend to focus on the power of new frontier models; this conversation highlights the less visible consequences of deploying them. The non-obvious implication is that the real race is not only to build more capable models, but to build the systems that can use them reliably and at scale. That matters for product managers, AI engineers, and business leaders who need to understand the downstream effects of adopting these technologies. Mapping those consequences helps readers focus on practical implementation rather than on benchmark performance alone.

The Hidden Costs of "Solved" Problems: Agentic Infrastructure and the Real Engineering Challenge

The AI world is abuzz with talk of new frontier models, but the conversation often stops at the benchmarks. What's less discussed, and arguably more critical, is the engineering effort required to actually use these models effectively. This episode of The AI Daily Brief, "All of AI's New Models and Tools," dives into the practical realities, revealing that while coding agents might be "solved," the surrounding infrastructure for agentic AI is where the real, often overlooked, engineering challenge lies. This isn't just about building a better model; it's about building the entire ecosystem that allows those models to perform complex, long-horizon tasks reliably.

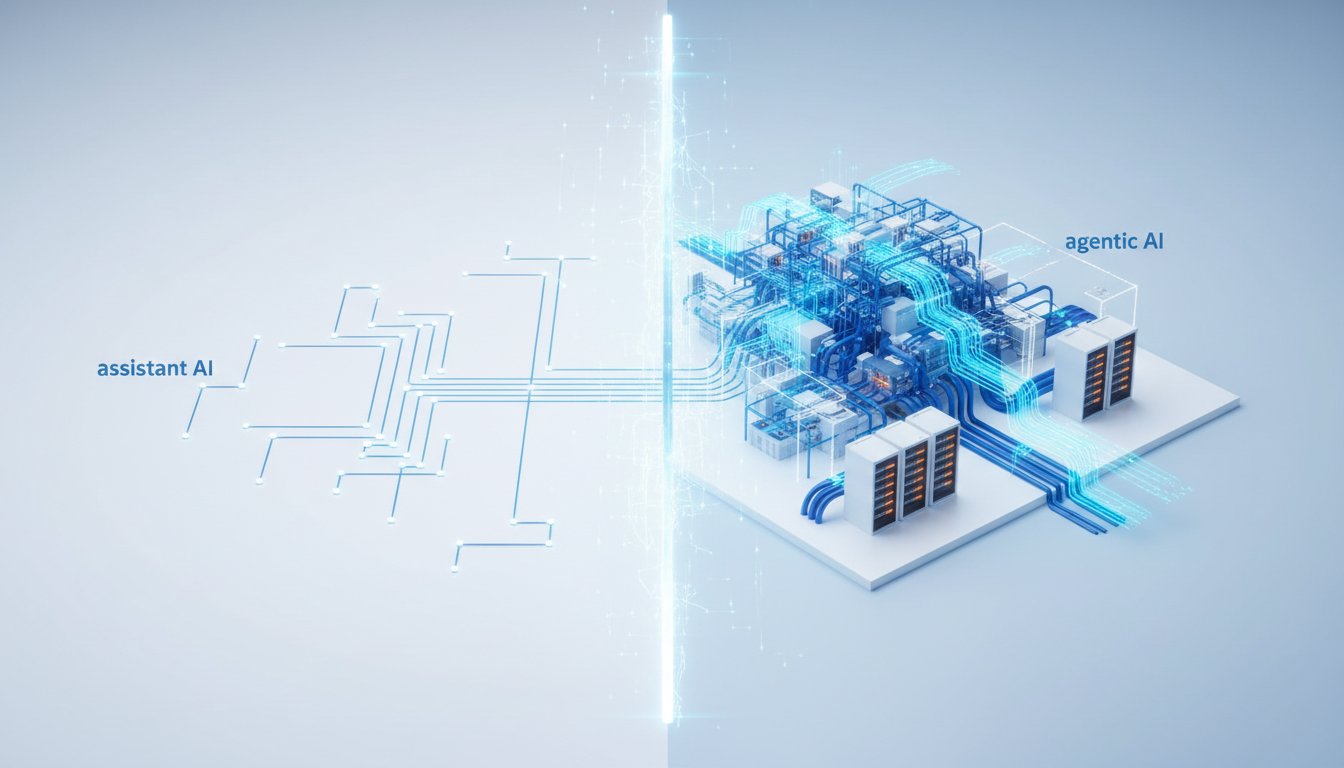

The Infrastructure Chasm: From Model Power to Practical Application

The excitement around new models like Meta's Muse Spark and Z AI's GLM 5.1 is understandable. Muse Spark, the first release from Meta Superintelligence Labs, offers multimodal reasoning and is aimed at powering personal agents. Z AI's GLM 5.1 has made waves as an open-source model that rivals leading Western models on coding benchmarks and claims autonomous execution of long-horizon tasks. The podcast's quieter point is that raw model performance is only one piece of the puzzle. The real differentiator, and the source of most downstream complexity, is the "harness": the software infrastructure that wraps around a model so that it can act agentically.

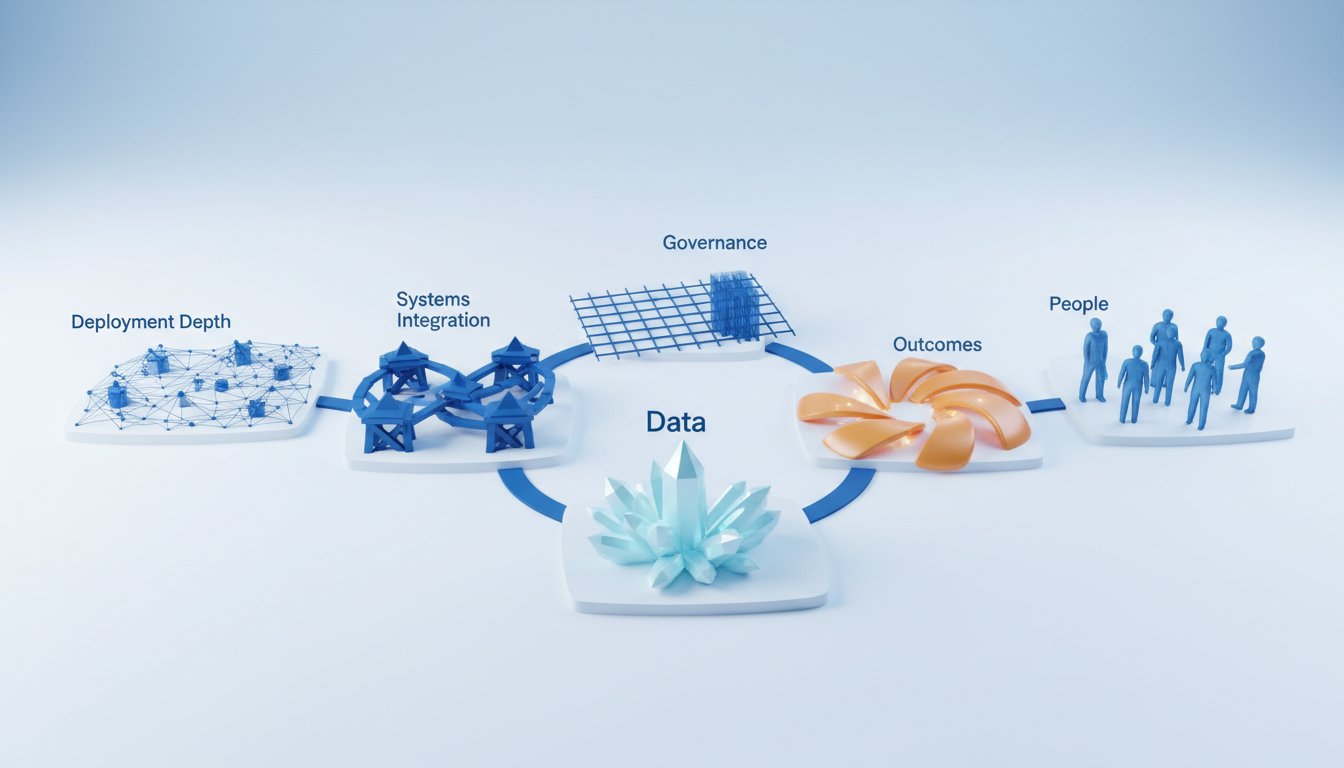

Anthropic's launch of Claude Managed Agents directly addresses this chasm. Pitched as "everything you need to build and deploy agents at scale," this offering highlights the complexity of agentic systems. It's not just about the model's intelligence, but about the "agent harness," which includes software tools, memory systems, and orchestration capabilities. The podcast underscores that many businesses are still struggling to bridge the gap between what AI models are capable of and what they are actually used for. This gap is precisely what Managed Agents aims to close, by abstracting away the "complex distributed systems engineering problem" of self-hosting and running agents at scale.

"When it comes to actually deploying and running agents at scale, this is a complex distributed systems engineering problem. A lot of customers we were talking about previously had a whole bunch of engineers whose job it would have been to build and run those systems at scale. Now that we are giving them that bit out of the box, they're able to have those same engineers be focused on core competencies of business and their product."

-- Katelyn Lesse, Head of Engineering for the Claude platform

This quote reveals a critical consequence: by providing managed infrastructure, companies like Anthropic are enabling engineering teams to shift their focus from building foundational agent systems to core business competencies. This is a significant downstream effect that can accelerate product development and innovation.
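To make the "agent harness" idea concrete, here is a minimal sketch of the three pieces named above: tools, memory, and an orchestration loop. It is an illustration under stated assumptions, not how Claude Managed Agents or any other product is implemented. The names `Harness`, `scripted_model`, and `add` are hypothetical, and the model is a scripted stand-in rather than a real API call.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical model interface: given the history so far, return either
# {"tool": name, "input": text} to request a tool call or {"final": text}.
ModelFn = Callable[[List[dict]], dict]


@dataclass
class Harness:
    model: ModelFn
    tools: Dict[str, Callable[[str], str]]       # tool registry
    memory: List[dict] = field(default_factory=list)  # running transcript
    max_steps: int = 8                            # guard against endless loops

    def run(self, task: str) -> str:
        self.memory.append({"role": "user", "content": task})
        for _ in range(self.max_steps):
            decision = self.model(self.memory)
            if "final" in decision:
                self.memory.append({"role": "assistant", "content": decision["final"]})
                return decision["final"]
            name = decision["tool"]
            tool = self.tools.get(name)
            # Unknown tools are reported back to the model instead of crashing.
            result = tool(decision["input"]) if tool else f"unknown tool: {name}"
            self.memory.append({"role": "tool", "name": name, "content": result})
        raise RuntimeError("step budget exhausted before the agent finished")


def scripted_model(history: List[dict]) -> dict:
    """Toy stand-in for a model: call 'add' once, then report the result."""
    tool_results = [m for m in history if m["role"] == "tool"]
    if not tool_results:
        return {"tool": "add", "input": "2 3"}
    return {"final": f"The sum is {tool_results[-1]['content']}"}


def add(args: str) -> str:
    """Example tool: sum whitespace-separated integers."""
    return str(sum(int(x) for x in args.split()))
```

Calling `Harness(model=scripted_model, tools={"add": add}).run("add 2 and 3")` walks one tool call and one final answer through the loop. Everything a production system adds on top (sandboxing, persistence, retries, scheduling across machines) is the "distributed systems engineering problem" the quote refers to.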

The "Solved" Coding Agent: A Misleading Simplicity

The narrative around coding agents is a prime example of how immediate success can mask deeper challenges. Platforms like GitHub are seeing an explosion in commits, attributed to both human and AI-assisted coding, and the underlying infrastructure is straining. Peter Steinberger's complaint about hitting GitHub's API quota limits, noting that the platform "hasn't been designed with agents in mind," is a stark illustration. This isn't a problem with the coding models themselves, but with the platforms they interact with.
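On the agent side, one common mitigation for the quota problem described above is to read the rate-limit information a platform returns and pause accordingly. The sketch below is a hedged illustration, not a description of any tool mentioned in the episode. The function name `seconds_to_wait` is hypothetical, and the header names (`retry-after`, `x-ratelimit-remaining`, `x-ratelimit-reset`) follow the convention GitHub documents for its REST API; verify them against the API you actually call.

```python
import time
from typing import Mapping, Optional


def seconds_to_wait(
    status: int,
    headers: Mapping[str, str],
    now: Optional[float] = None,
) -> float:
    """Return how long to pause before the next request (0.0 means proceed).

    Assumes Retry-After is given in seconds and the reset header is a Unix
    timestamp; both are assumptions to check for your platform.
    """
    now = time.time() if now is None else now
    h = {k.lower(): v for k, v in headers.items()}

    # An explicit Retry-After takes priority on throttled responses.
    if status in (403, 429) and "retry-after" in h:
        return max(0.0, float(h["retry-after"]))

    # Otherwise, if the quota is used up, wait until the window resets.
    if h.get("x-ratelimit-remaining") == "0" and "x-ratelimit-reset" in h:
        return max(0.0, float(h["x-ratelimit-reset"]) - now)

    return 0.0
```

An agent loop would call this after every response and sleep for the returned duration before continuing. That keeps a long-running agent from burning its quota in bursts, though it does not change the underlying limits.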

The podcast suggests that coding is only a fraction of an engineer's day. The rest involves meetings, context-switching, and communication -- tasks that are ripe for agentic automation but require sophisticated orchestration. Zencoder's Zenflow Work, which connects the company's orchestration engine to daily tools like Jira, Gmail, and Calendar, exemplifies this broader need. The implication is that focusing solely on code generation overlooks the vast operational overhead that agents can address, but only if the supporting infrastructure is robust enough.

"So coding agents are basically solved at this point. They're incredible at writing code. But here's the thing nobody talks about: coding is maybe a quarter of an engineer's actual day. The rest is stand-ups, stakeholder updates, meeting prep, chasing context across six different tools."

-- Narrator, The AI Daily Brief

This highlights a failure of conventional wisdom: optimizing for the most visible task (code writing) without considering the broader system of an engineer's workflow. The true competitive advantage will come from agents that can seamlessly integrate into and optimize these less visible, but time-consuming, aspects of work.

The Long Horizon Payoff: Agentic Execution and Delayed Gratification

The concept of "long horizon tasks" is central to the evolving capabilities of AI agents, as exemplified by Z AI's GLM 5.1. The claim that this model can work autonomously for extended periods, such as an 8-hour autonomous build of a Linux desktop, points to a future where agents tackle complex, multi-step projects with minimal human intervention. The payoff from this kind of work arrives late, which is why organizations willing to wait for it stand to gain a competitive advantage.

Anthropic's Managed Agents also support this, allowing agents to run autonomously for hours in the cloud. The example of event-triggered agents that write patches and open pull requests with no human in the loop is particularly telling. It represents a shift from AI as a tool to AI as an autonomous actor within a workflow.

However, this capability comes with its own set of challenges. Jared Orkin's observation that "Someone still has to tune the prompt every Friday and act on the brief by 9:00 AM Monday. That's a job. That's the job," is a crucial reminder. While agents can perform tasks autonomously, human oversight, strategy, and decision-making remain essential. The advantage lies not in eliminating humans, but in augmenting them with agents that can handle the heavy lifting over extended periods, freeing up human capacity for higher-level strategic work. This requires patience and a willingness to invest in the infrastructure and human roles needed to manage these agents effectively, a commitment many organizations may shy away from due to the lack of immediate, visible progress.
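The tension between event-triggered autonomy and continued human oversight can be sketched as a routing step: the agent reacts to an event, and a policy decides whether its proposal proceeds on its own or waits for a person. This is an illustrative sketch only. `Proposal`, `route`, `handle_event`, and `fake_agent` are hypothetical names, and the "number of files changed" threshold is an assumed policy chosen for simplicity, not something described in the episode.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Proposal:
    """What the agent wants to do in response to an event."""
    event: str
    summary: str
    files_changed: int


def route(proposal: Proposal, max_auto_files: int = 3) -> str:
    """Assumed policy: small changes proceed, larger ones wait for a person."""
    return "auto" if proposal.files_changed <= max_auto_files else "human"


def handle_event(
    event: str,
    agent: Callable[[str], Proposal],
    auto_queue: List[Proposal],
    review_queue: List[Proposal],
) -> str:
    """Run the agent on an event and file its proposal in the right queue."""
    proposal = agent(event)
    decision = route(proposal)
    (auto_queue if decision == "auto" else review_queue).append(proposal)
    return decision


def fake_agent(event: str) -> Proposal:
    """Hypothetical agent: pretends bigger incidents need bigger patches."""
    size = 10 if "outage" in event else 1
    return Proposal(event=event, summary=f"patch for {event}", files_changed=size)
```

The review queue is where the recurring human work lives: someone has to own it, adjust the routing policy as trust in the agent changes, and act on what lands there.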

Google's Gemini Notebooks: Consolidating the Experience

Google's introduction of Notebooks within Gemini addresses another kind of infrastructure challenge: user experience and knowledge management. The podcast notes that earlier features like "Gems" were unintuitive and that the product suite can feel fragmented. Gemini Notebooks, by integrating features from NotebookLM directly into the Gemini app, aims to create a more cohesive "second brain" for users.

"Most AI chatbots give you basic projects. Gemini just built you a second brain."

-- Josh Woodward, Google

This move suggests a strategic understanding that even the most powerful models are less effective if users cannot easily organize, contextualize, and manage the information and tasks associated with them. The ability to build custom instruction sets within notebooks for specific projects is a direct response to the need for tailored agentic capabilities, bridging the gap between general-purpose models and specific application requirements. This consolidation, while seemingly a quality-of-life improvement, has downstream implications for user adoption and the practical utility of Gemini as a platform for agentic work.

Key Action Items

- Invest in Agent Orchestration Infrastructure: Beyond selecting powerful models, prioritize building or adopting robust "agent harnesses" that manage tools, memory, and execution environments. (Immediate Action)

- Map the Full Engineer Workflow: Identify and prioritize agentic opportunities beyond code generation, focusing on tasks like meeting prep, context retrieval, and stakeholder communication. (Over the next quarter)

- Develop Human-in-the-Loop Protocols for Agents: Define clear roles and processes for human oversight, prompt tuning, and actioning agent-generated outputs, recognizing this as a new form of "job." (Immediate Action)

- Evaluate Platform Integration Capabilities: When considering AI tools, assess how well they integrate with your existing software stack (e.g., Jira, Slack, Notion) to enable seamless agentic workflows. (Immediate Action)

- Pilot Long-Horizon Agent Tasks: Experiment with agents designed for multi-step, extended tasks, understanding that the immediate payoff may be low, but the long-term efficiency gains can be substantial. (This pays off in 6-12 months)

- Standardize Knowledge Management for AI: Implement systems like Gemini Notebooks or similar features to organize context, resources, and custom instructions for different AI projects, enhancing model utility. (Over the next quarter)

- Monitor Infrastructure Strain: For platforms heavily used by agents (e.g., GitHub APIs), anticipate and plan for increased load and potential bottlenecks, proactively engaging with platform providers. (Ongoing)