The AI Content Deluge: Navigating the Erosion of Trust and the Quest for Verifiable Truth

In a world awash with AI-generated content, the traditional gatekeepers of information are crumbling, forcing a fundamental re-evaluation of how we establish trust and verify reality. This conversation between Balaji Srinivasan and Taylor Lorenz reveals a stark, often uncomfortable, consequence of technological advancement: the proliferation of synthetic identities and the subsequent breakdown of established systems. The non-obvious implication is that the very tools designed to democratize information are simultaneously creating an unprecedented crisis of authenticity, demanding entirely new frameworks for truth. Anyone invested in the future of media, technology, or societal trust--from content creators and journalists to policymakers and everyday consumers--will find critical insights here that illuminate the hidden costs of our current trajectory and offer a roadmap for navigating the emerging landscape of verifiable truth.

The Phantom Menace: AI's Assault on the Information Commons

The ease with which AI can now generate text, images, and even convincing identities presents a profound challenge to the existing information ecosystem. What was once a relatively stable landscape, where institutions like media outlets served as anchors of verifiable truth, is now being flooded with synthetic content. This isn't merely about a few chatbots; it's about an impending deluge of AI agents capable of overwhelming human communication channels, creating a “digital commons” choked with spam and misinformation. The immediate consequence is a breakdown of trust between different “digital tribes,” where automated outreach--be it for resumes or sales pitches--degrades the quality of human interaction.

This erosion of trust isn't a distant threat; it's already impacting how we perceive authenticity. As Srinivasan notes, the very tools that accelerate digital tasks can be weaponized to break down shared understanding.

"As much as I like AI within the digital tribe, it accelerates coding, it's great for search, all this kind of stuff. Between digital tribes, it's often bad because it's just AI agents spamming 50 different people with a resume or a sales email or something like that, and it just breaks the commons."

The downstream effect of this is a growing need for "human-only" social networks, a concept that itself raises questions of verifiability. How do we ensure that the people we interact with online are, in fact, human? The conversation suggests a future where biometric methods might authenticate identity, but even then, the content generated could be AI-assisted. This creates a complex cat-and-mouse game, where systems must evolve to distinguish genuine human expression from sophisticated AI mimicry. The immediate payoff for bad actors is high--mass outreach with minimal effort--while the cost is the degradation of shared reality for everyone else.

The Premium of Physicality: Reversing the Digital Divide

A striking observation from the discussion is the reversal of the digital divide. In the 1990s, the fear was that only the wealthy would have access to digital technologies. Today, the cost of digital access has hyper-deflated: the same powerful devices and information resources are available to nearly everyone. This has flipped the script, making the physical world and genuine human experiences the premium product. The resurgence of interest in live streaming and communal, in-person experiences isn't just a nostalgic trend; it's a direct response to how hard it is to fake live, authentic human interaction.

Lorenz highlights this shift, emphasizing the value placed on tangible connections in an increasingly digitized world.

"This is why we're seeing such a resurgence in live streaming and interest in these sort of communal experiences, because live is something that is so hard to fake. It is such a human thing."

The consequence of this shift is a re-prioritization of formats that reward human connection. This extends to the need for offline focus to cultivate genuine thought and direction, a stark contrast to the constant digital stimulation that can fragment attention. The immediate benefit of digital access has been democratized, but the long-term advantage is now being sought in the scarcity of authentic, embodied experiences. Conventional wisdom, which once championed digital ubiquity, fails to account for this emergent premium on the physical.

The Ossified Gatekeepers: Wikipedia's Structural Flaws and the Call for Open Competition

The discussion around Wikipedia and its proposed competitor, "Grokipedia," reveals systemic issues within established information platforms. While Wikipedia's decentralized, community-driven effort to compile information is lauded as noble, its structure has become ossified. Lorenz points out critiques from both the right and the left, highlighting how its editor base lacks global representation, leading to an Anglophone, Western-centric bias. This structural limitation means that valuable international or newer media sources can be locked out, hindering a truly representative global information landscape.

The downstream effect of this bias is that Wikipedia, despite its good intentions, can perpetuate a limited worldview. Furthermore, its practice of licensing content to AI companies without compensating creators is a point of contention. Srinivasan argues for truly open-source competitors, emphasizing that in an era where economies are shifting globally, the platforms for information must reflect this change.

"That's why I think that's something where the economy has shifted to Asia. The economy is shifting out of America in many ways, out of the West. So there's an inclusion argument as well to have voices outside, and I think it is definitely time for truly open-source competitors."

The immediate problem is the lack of diverse representation and fair compensation. The delayed payoff for fostering open-source alternatives lies in creating a more robust, equitable, and globally relevant information ecosystem, one that can adapt to the evolving economic and cultural landscape. Conventional wisdom, which relies on the established authority of platforms like Wikipedia, fails to address these structural inequities.

The Tech-Media Chasm: A History of Disruption and Distrust

The conversation delves into the historical roots of the animosity between the tech and media industries. Srinivasan posits that this divide wasn't inevitable but arose from a confluence of economic disruption and social critique. Initially, tech and media shared common ground, with figures from both worlds often coming from academic or journalistic backgrounds. However, the advent of platforms like Google and Facebook undercut traditional media's advertising revenue model, turning tech from a peer into an economic competitor.

Simultaneously, in the 2010s, tech figures found themselves subjected to social critiques and cancellations, often amplified by media narratives. This created a perception among many in tech that media outlets were not just reporting but actively attacking them, leading to a deep-seated resentment.

"I think the media guys think the tech guys started it, and the tech guys think the media guys started it. I think the media guys think the tech guys started it by economically disrupting them. I think the tech guys think the media guys started it by socially attacking them in the 2010s."

The consequence of this mutual distrust was the tech industry's realization that it needed to build its own media infrastructure--podcasts, live streams, and independent platforms. This created a parallel media ecosystem, driven by the need for self-representation and a desire to control the narrative. The immediate effect was the fragmentation of media consumption and the rise of direct-to-consumer content. The delayed payoff for the tech industry is a greater degree of control over its own narrative, while legacy media faces the challenge of adapting to a landscape where its gatekeeping role is diminished. Conventional wisdom, which assumed a symbiotic relationship between tech and media, failed to predict this adversarial evolution.

Actionable Takeaways

- Prioritize Human-Only Interaction: Seek out and foster platforms and communities that prioritize verified human interaction, such as live events, in-person meetups, or platforms with robust identity verification. (Immediate Action)

- Cultivate Offline Focus: Dedicate specific time for deep, offline work and reflection. This is crucial for developing independent thought and direction, counteracting the constant digital noise. (Immediate Action)

- Diversify Information Sources: Critically evaluate information from established media outlets, recognizing potential biases and structural limitations. Seek out a broader range of perspectives, including international and independent sources. (Immediate Action)

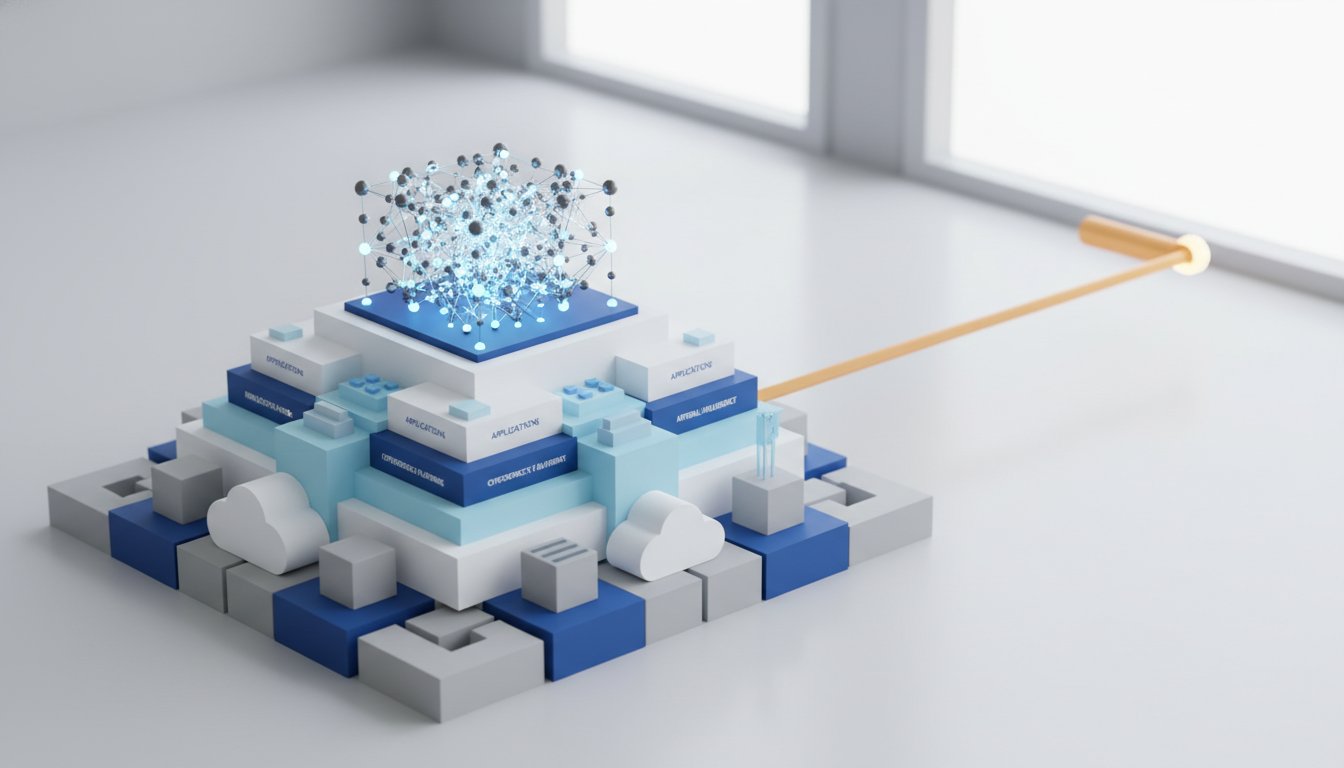

- Invest in Verifiable Truth Technologies: Support and explore emerging technologies and platforms focused on cryptographic verification and decentralized identity. These are crucial for building trust in an AI-saturated world. (Longer-Term Investment)

- Develop Personal Verification Habits: Practice skepticism and employ critical thinking when consuming information. Look for primary sources, cross-reference claims, and be aware of the potential for AI-generated content. (Immediate Action)

- Advocate for Open-Source Media Alternatives: Support initiatives that aim to create open, accessible, and equitably governed information platforms, challenging the dominance of paywalled or biased legacy systems. (Longer-Term Investment)

- Embrace Discomfort for Future Advantage: Recognize that solutions requiring immediate effort and potentially causing short-term discomfort (like building independent media or developing new verification systems) often yield the most significant long-term competitive advantages and societal benefits. (Strategic Mindset)
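
For readers curious what "cryptographic verification" can look like at its simplest, here is a minimal sketch. It is not something described in the conversation, and it assumes one plausible workflow: a publisher posts a SHA-256 fingerprint of a piece of content alongside it, and a reader recomputes the fingerprint to check that the text was not altered. The function names `fingerprint` and `matches_published` are hypothetical, chosen only for illustration.

```python
import hashlib
import hmac


def fingerprint(content: str) -> str:
    """Return the SHA-256 hex digest of the content (a 64-character string)."""
    return hashlib.sha256(content.encode("utf-8")).hexdigest()


def matches_published(content: str, published_digest: str) -> bool:
    """Check whether the content still matches a previously published digest."""
    # compare_digest performs a constant-time comparison of the two strings.
    return hmac.compare_digest(fingerprint(content), published_digest)


# Hypothetical example: the publisher computes and posts the digest once.
original = "Live is something that is so hard to fake."
published = fingerprint(original)

# A reader later checks an unmodified copy and a tampered copy.
unmodified_ok = matches_published(original, published)
tampered_ok = matches_published(original + " (edited)", published)
```

A check like this only shows that the text is unchanged since the digest was published. It says nothing about who wrote it or whether a human did; that would likely require digital signatures tied to a verified identity, which is the harder problem the takeaways above point toward.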