Vertical Integration Solves the Data Center's Hidden Hardware and Software Costs

The following blog post is an analysis of a podcast transcript featuring Bryan Cantrill discussing the challenges of data center infrastructure and the philosophy behind Oxide Computer Company. It applies consequence-mapping and systems thinking to highlight non-obvious implications and strategic advantages.

This conversation reveals the deeply entrenched, often overlooked, systemic failures within conventional data center hardware and software stacks. It exposes how seemingly minor component substitutions and architectural compromises by vendors cascade into debilitating operational problems at scale, forcing end-users into a cycle of costly, frustrating, and ultimately unresolvable issues. For IT leaders, infrastructure engineers, and CTOs grappling with the true cost and complexity of on-premises deployments, this analysis offers a framework for understanding why hyperscalers build their own hardware and highlights the strategic advantage of embracing a vertically integrated, systems-level approach. It underscores that true control and long-term economic and operational benefits stem from owning the entire stack, not just renting components.

The Hidden Tax of "Commodity" Hardware: Why Data Centers Break at Scale

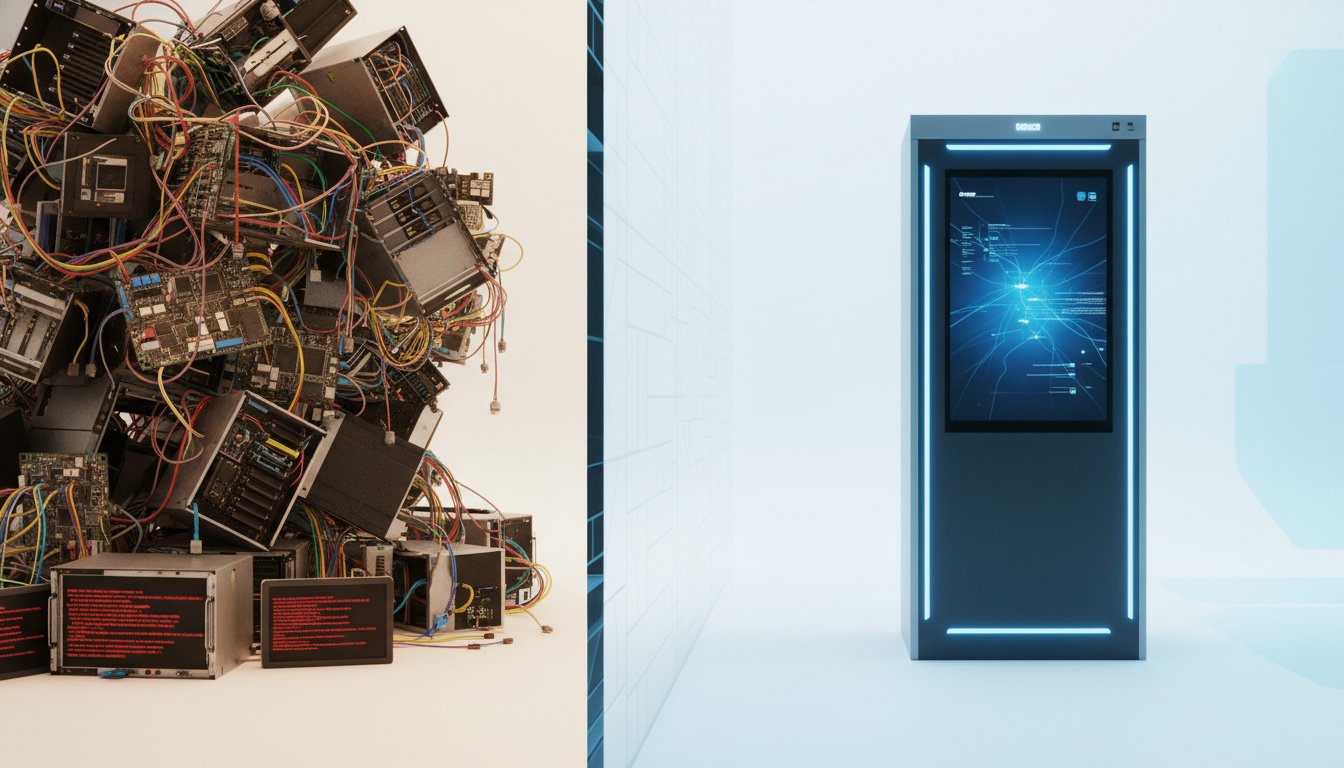

The modern data center, for all its perceived sophistication, is often built on a foundation of compromised components and vendor-driven decisions that create a cascade of problems for the end-user. Bryan Cantrill, CTO of Oxide Computer Company, articulates a painful truth learned through years of operating at massive scale: the "state of the art" in off-the-shelf server hardware is fundamentally broken, particularly when deployed at the scale required by large enterprises. The illusion of choice offered by traditional vendors like Dell, HP, and Supermicro crumbles under the weight of real-world operations, revealing a hidden tax levied by their reliance on commodity components and a lack of end-to-end system integration.

Cantrill's experience at Joyent, and later at Samsung, illuminated this reality. When scaling up in response to Samsung's immense cloud bill, problems that had been rare, isolated incidents at Joyent's smaller scale became routine and debilitating. The common thread? Problems originating beneath the software layer--in the hardware, firmware, and componentry. A striking example was a data center plagued by "pathological IO latency." The initial investigation focused on workload and database performance, then moved down to the disk layer. The culprit? The drives in that data center came from Toshiba rather than HGST (Western Digital), which was the standard elsewhere. These drives suffered from critical firmware flaws, including periods where they would simply stop acknowledging reads for nearly three seconds.

"The thought occurred to me, I'm like, well, maybe we are, do we have like different, are different rev of firmware on our HGST drives? ... And as it turns out, and this is, you know, part of the challenge when you don't have an integrated system, which not to pick on them, but Dell doesn't. And what Dell would routinely put just sub-make substitutes."

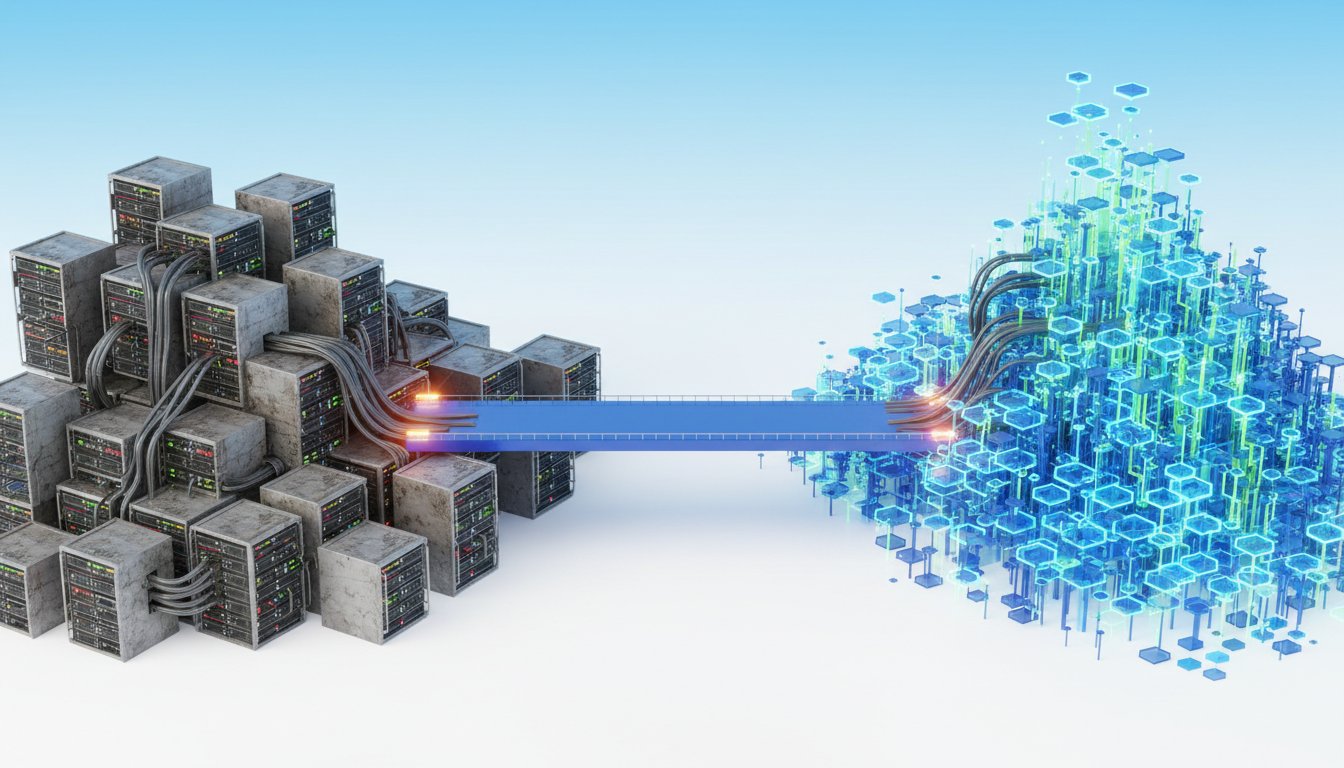

This substitution wasn't an isolated incident; it was a symptom of a larger systemic issue. Vendors like Dell, in their pursuit of cost reduction, would substitute components without rigorous end-to-end validation. The onus then fell entirely on the customer to discover these deep-seated problems. This lack of control over the entire stack, from firmware to networking, is precisely why hyperscalers like AWS and Google design their own hardware. They are not customers of these traditional vendors; they are the designers. They understand that to achieve true reliability and economic efficiency at scale, they must control every layer. The painful lesson learned by Cantrill and his colleagues was that this integrated, cloud-like infrastructure wasn't available for purchase; it could only be rented in the public cloud. This realization became the genesis of Oxide Computer Company: the belief that you should be able to buy cloud infrastructure, not just rent it.

The Shadowy World of BMCs: Where "Personal Computer" Architecture Fails

Beyond component substitutions, the very architecture of traditional servers, rooted in the 1980s personal computer model, presents significant challenges. A prime example is the Baseboard Management Controller (BMC). This "computer within a computer" is essential for managing hardware environments, handling diagnostics, and controlling fans. However, these BMCs, often proprietary silicon from companies like Aspeed, are notorious for their security vulnerabilities and operational pain points. They run ancient versions of Linux, are difficult to patch, and their root passwords are often hardcoded.

"The reality is messier. The BMCs, the computer within the computer that needs to be on its own network. So you now have like not one network, you got two networks. And that network, by the way, that's the network that you're going to log into to like reset the machine when it's otherwise unresponsive."

The operational burden of managing a separate, insecure network for BMCs is significant. Moreover, these systems frequently malfunction. Cantrill recounts a customer issue where broken temperature sensors on a BMC led to an inventive, but flawed, thermal control loop. This system would crank up server fans based on CPU inrush current, not actual temperature. The consequence? A workload that spiked current faster than the CPU could heat up resulted in fans running at full blast unnecessarily, wasting significant energy--estimated at 100 watts per server. Across thousands of servers, this translates to hundreds of kilowatts of wasted power, a direct result of a broken software-hardware interface at the lowest layer. This "shadowy world" of proprietary, poorly documented, and often unreliable BMCs is a primary reason why traditional infrastructure feels so "hairy and congealed," even at small scales.
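The failure mode and the fleet-wide arithmetic can be sketched in Rust. This is an illustration under stated assumptions, not the actual BMC logic: the fan-curve thresholds, the current threshold, and the fleet sizes are all hypothetical, and only the figure of 100 watts per server comes from the discussion.

```rust
/// Fleet-wide wasted power in kilowatts, given per-server waste in watts.
fn wasted_kilowatts(servers: u32, watts_per_server: f64) -> f64 {
    f64::from(servers) * watts_per_server / 1000.0
}

/// Hypothetical fan policy keyed on measured temperature (percent duty).
fn fan_duty_from_temp(temp_c: f64) -> u8 {
    // Linear ramp from 30% at 40 C to 100% at 80 C; thresholds are illustrative.
    let duty = 30.0 + (temp_c - 40.0) * (70.0 / 40.0);
    duty.clamp(30.0, 100.0).round() as u8
}

/// Hypothetical flawed policy that uses current draw as a proxy for heat.
fn fan_duty_from_current(amps: f64, spike_threshold_amps: f64) -> u8 {
    if amps >= spike_threshold_amps { 100 } else { 30 }
}

fn main() {
    // A current spike on a still-cool CPU: the two policies disagree sharply.
    let (temp_c, amps) = (45.0, 120.0);
    println!("temperature-based duty: {}%", fan_duty_from_temp(temp_c));
    println!("current-based duty: {}%", fan_duty_from_current(amps, 100.0));

    // Assumed fleet sizes; 100 W per server is the estimate quoted above.
    for servers in [1_000, 5_000] {
        println!("{} servers -> {} kW wasted", servers, wasted_kilowatts(servers, 100.0));
    }
}
```

The point of the contrast is that a proxy signal can saturate the fans while the quantity that actually matters, temperature, has barely moved.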

The Control Plane Conundrum: Orchestrating Complexity

Building elastic infrastructure, or a cloud, on-premises requires more than just hypervisors. It necessitates a robust "control plane"--the software layer that translates API requests into actual infrastructure actions. This involves provisioning virtual machines, allocating storage, configuring networks, and managing metadata. While hypervisors like VMware ESXi are building blocks, they are not complete control planes themselves. True control planes, like those at AWS, handle the complex orchestration of distributed systems, ensuring reliability, availability, and manageability across vast numbers of machines.
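To make the idea of a control plane concrete, here is a minimal Rust sketch of the translation step: one API request becomes an ordered plan of lower-level actions. Every type and name here (`ApiRequest`, `Action`, `plan`) is hypothetical and is not drawn from Oxide's, Joyent's, or AWS's software. A real control plane must also persist state, handle partial failure, and coordinate across many machines.

```rust
/// Hypothetical API request accepted by a control plane.
#[derive(Debug)]
enum ApiRequest {
    CreateInstance { name: String, vcpus: u32, disk_gib: u32 },
    DeleteInstance { name: String },
}

/// Hypothetical low-level actions the control plane drives on the infrastructure.
#[derive(Debug, PartialEq)]
enum Action {
    RecordMetadata(String),
    AllocateDisk { gib: u32 },
    ConfigureNetwork(String),
    StartVm { vcpus: u32 },
    StopVm(String),
    ReleaseResources(String),
}

/// Translate one API request into an ordered plan of actions.
fn plan(request: &ApiRequest) -> Vec<Action> {
    match request {
        ApiRequest::CreateInstance { name, vcpus, disk_gib } => vec![
            Action::RecordMetadata(name.clone()),
            Action::AllocateDisk { gib: *disk_gib },
            Action::ConfigureNetwork(name.clone()),
            Action::StartVm { vcpus: *vcpus },
        ],
        ApiRequest::DeleteInstance { name } => vec![
            Action::StopVm(name.clone()),
            Action::ReleaseResources(name.clone()),
            Action::RecordMetadata(name.clone()),
        ],
    }
}

fn main() {
    let request = ApiRequest::CreateInstance {
        name: "db-1".to_string(),
        vcpus: 4,
        disk_gib: 100,
    };
    for (step, action) in plan(&request).iter().enumerate() {
        println!("step {}: {:?}", step + 1, action);
    }
}
```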

Cantrill's experience at Joyent highlighted the difficulty of building such systems. They relied on PostgreSQL as their metadata database, a solution that proved problematic due to its primary-secondary architecture and lack of true distribution. This forced them to build significant custom software to bridge the gap. The choice to build their control plane in Node.js, while understandable given Joyent's history with the language, ultimately proved to be a significant challenge.

"We did it all in Node at Joyent, which I know sounds really, right now, just sounds like you built it with tinker toys. ... Let's just say that that experiment, that experiment did ultimately end in a predictable fashion."

The inherent looseness of JavaScript, allowing for undefined property references and conflating programmer errors with operational errors, made building rigorous, production-ready infrastructure software exceedingly difficult. This led to a constant struggle to maintain system robustness and diagnosability, creating what Cantrill describes as a "bad relationship with software." The eventual fork of Node.js (io.js) and Cantrill's departure from its stewardship stemmed from a fundamental misalignment of values: the community prioritized approachability and rapid iteration over rigor and long-term maintainability--values crucial for infrastructure software.

Rust: Embracing Cognitive Load for Sustainable Systems

This difficult experience with Node.js directly informed Oxide's technology choices. The company's foundational belief is that infrastructure software demands a different set of values, prioritizing rigor, determinism, and long-term sustainability. This led them to Rust. Cantrill views garbage-collected languages like Go as a lateral move from Node.js, citing Go's own set of "autocratic decisions" and its continued reliance on garbage collection. Rust, by contrast, offers memory safety and safe concurrency without a garbage collector.

"The fact that Go had garbage collection, it's like, no, I do not want. ... I want C, but I there are things I didn't like about C too. I was looking for something that was going to give me the deterministic kind of artifact that I got out of C, but I wanted library support."

Rust's ownership model and its robust error-handling mechanisms, particularly its use of algebraic data types and exhaustive pattern matching, shift cognitive load from production operators to developers. This upfront investment in rigor, while more demanding during development, results in more supportable, sustainable, and performant artifacts. This is particularly relevant in the age of LLMs, where the checks enforced by Rust's compiler can provide greater confidence in generated code, potentially leading to a bifurcation in software development: highly rigorous, foundational systems built with languages like Rust, and more rapidly customizable applications built with less constrained languages, assisted by LLMs.
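A small Rust sketch shows what this shift looks like in practice. The `ProvisionError` type and the `provision` and `describe` functions are hypothetical and exist only for illustration. Operational errors are ordinary values in a `Result`, and the `match` must cover every variant, so adding a new error case later becomes a compile error at each call site that forgot to handle it.

```rust
/// Hypothetical operational errors a disk-provisioning call might return.
#[derive(Debug, PartialEq)]
enum ProvisionError {
    OutOfCapacity { requested_gib: u64, free_gib: u64 },
    PoolOffline,
}

/// Reserve space from a pool, returning the capacity left over on success.
fn provision(requested_gib: u64, free_gib: u64, pool_online: bool) -> Result<u64, ProvisionError> {
    if !pool_online {
        return Err(ProvisionError::PoolOffline);
    }
    if requested_gib > free_gib {
        return Err(ProvisionError::OutOfCapacity { requested_gib, free_gib });
    }
    Ok(free_gib - requested_gib)
}

/// The match is exhaustive: removing any arm is rejected by the compiler.
fn describe(outcome: &Result<u64, ProvisionError>) -> String {
    match outcome {
        Ok(remaining) => format!("provisioned; {} GiB left", remaining),
        Err(ProvisionError::OutOfCapacity { requested_gib, free_gib }) => {
            format!("refused: wanted {} GiB, only {} GiB free", requested_gib, free_gib)
        }
        Err(ProvisionError::PoolOffline) => "retry later: pool offline".to_string(),
    }
}

fn main() {
    for (requested, free, online) in [(10, 100, true), (200, 100, true), (10, 100, false)] {
        println!("{}", describe(&provision(requested, free, online)));
    }
}
```

Contrast this with a dynamically typed language, where a misspelled property name or an unhandled error case surfaces only when that code path runs in production.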

Illumos: A True Unix Heritage for Systemic Control

The choice of operating system was equally deliberate. While many assume Oxide chose Illumos (inheriting from Solaris) due to a historical Sun Microsystems connection, the decision was rooted in a deeper need for control and advanced tooling. Cantrill argues that Linux, while a powerful kernel, is insufficient as a complete host operating system. It requires extensive distribution management and lacks the built-in, first-class debuggability and diagnostic tools that Illumos offers, such as DTrace. The complexity of maintaining a Linux distribution, as experienced by former Red Hat engineers at Oxide, was a significant deterrent. Illumos, with its integrated features like ZFS, containers, and virtual networking, provided a more cohesive and controllable foundation. This choice, while involving a smaller community, allowed Oxide to build the precise technologies needed for their vertically integrated system, reinforcing their commitment to owning the entire stack.

The Oxide Rack: A Product, Not a Bag of Bolts

The culmination of these decisions is the Oxide Computer rack. Unlike traditional infrastructure, which is a "bag of bolts"--a collection of disparate components that must be manually integrated and troubleshot--the Oxide rack is a designed product. It features a DC bus bar for power, a cabled backplane for networking, and compute sleds that blind-mate into both. This holistic design, extending from the rack to the switches and compute sleds, eliminates complex cabling and simplifies hardware management. The result is an infrastructure product that can be deployed rapidly, allowing teams to provision VMs, storage, and networks within hours, mirroring the ease of use found in public clouds. This integrated approach, where Oxide takes full responsibility for the entire stack, stands in stark contrast to the fragmented vendor ecosystem, where customers are left to resolve issues that fall into the gaps between component suppliers.

Key Action Items:

Immediate Actions (0-3 Months):

- Audit Existing Infrastructure for Component Substitutions: Investigate hardware bills of materials for non-standard or substituted components, particularly in networking and storage.

- Review BMC Security and Patching Practices: Assess the security posture and update cadence for all server BMCs.

- Map Control Plane Dependencies: Document all software and services involved in provisioning and managing infrastructure, identifying potential single points of failure or complexity.

- Evaluate Developer Tooling for Rigor: Assess current programming languages and development practices for their suitability in building robust, diagnosable infrastructure software.
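As a starting point for the component-substitution audit, the Rust sketch below groups hosts by any drive model and firmware pair that is not on an approved list. The `Drive` fields, the `unapproved` function, and every model and firmware string are made up for illustration. A real audit would pull this inventory from whatever asset or telemetry system is already in place.

```rust
use std::collections::BTreeMap;

/// One inventory row; the field names are hypothetical.
struct Drive {
    host: String,
    model: String,
    firmware: String,
}

/// Group hosts by any (model, firmware) pair that is not on the approved list.
fn unapproved(
    inventory: &[Drive],
    approved: &[(&str, &str)],
) -> BTreeMap<(String, String), Vec<String>> {
    let mut flagged: BTreeMap<(String, String), Vec<String>> = BTreeMap::new();
    for drive in inventory {
        let is_approved = approved
            .iter()
            .any(|(model, firmware)| *model == drive.model && *firmware == drive.firmware);
        if !is_approved {
            flagged
                .entry((drive.model.clone(), drive.firmware.clone()))
                .or_default()
                .push(drive.host.clone());
        }
    }
    flagged
}

/// Small constructor to keep the example data readable.
fn drive(host: &str, model: &str, firmware: &str) -> Drive {
    Drive { host: host.into(), model: model.into(), firmware: firmware.into() }
}

fn main() {
    // Model and firmware strings below are invented for illustration.
    let approved = [("VENDOR-A-4TB", "A100")];
    let inventory = vec![
        drive("host-01", "VENDOR-A-4TB", "A100"),
        drive("host-02", "VENDOR-B-4TB", "B7"),
        drive("host-03", "VENDOR-B-4TB", "B7"),
    ];
    for ((model, firmware), hosts) in unapproved(&inventory, &approved) {
        println!("{} fw {}: {:?}", model, firmware, hosts);
    }
}
```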

Medium-Term Investments (3-12 Months):

- Pilot Rust for New Infrastructure Projects: Begin experimenting with Rust for new internal tools or services where rigor and performance are critical.

- Investigate Integrated Infrastructure Solutions: Explore vertically integrated hardware and software solutions that offer end-to-end control and support.

- Develop Internal Diagnostic Frameworks: Enhance observability and post-mortem diagnosability for existing systems, even if using less deterministic languages.

Long-Term Strategic Investments (12-18+ Months):

- Consider Vertical Integration for Critical Systems: For organizations with significant on-premises infrastructure needs, evaluate the long-term economic and operational benefits of designing and owning key hardware components.

- Adopt Languages Prioritizing Runtime Determinism: Strategically shift towards languages like Rust for core infrastructure components to reduce operational burden and improve system stability.

- Build a Culture of End-to-End Responsibility: Foster an organizational mindset where teams own problems across hardware, firmware, and software layers, regardless of vendor boundaries.