Hybrid Cloud Integration Requires Unified VM and Kubernetes Management

The enduring legacy of virtual machines, the promise of containers, and the complex dance between them reveal a fundamental truth: the most impactful technological shifts often hinge not on the novelty of the new, but on the stubborn persistence of the old. This conversation with Dan Ciruli, VP and General Manager of Cloud Native at Nutanix, unpacks the hidden consequences of this reality, exposing why the seemingly simple move to containers is anything but, and how enterprises must navigate the intricate interplay between their established VM infrastructure and the burgeoning world of Kubernetes. Those who master this delicate balance gain a significant advantage by avoiding the costly pitfalls of forced migrations and unlocking the true potential of both worlds. This is essential reading for technical leaders, architects, and engineers grappling with modernization strategies, offering a clear-eyed view of the challenges and a roadmap to sustainable cloud-native adoption.

The Ghost in the Machine: Why VMs Won't Disappear (And Why That Matters)

The allure of containers and Kubernetes is undeniable. They promise faster software delivery, increased scalability, and broader deployment flexibility. Dan Ciruli likens this to the early 2000s, when Google’s innovations, fueled in part by containerization, revolutionized search, maps, and email. The ability to ship smaller increments more frequently unlocked a new pace of innovation. However, the narrative often presented is that containers are the inevitable future, rendering virtual machines obsolete. This perspective, Ciruli argues, is a dangerous oversimplification. The reality is that millions of applications, built over decades on VM infrastructure, are not going away. Banks, for instance, have tens of thousands of applications running on VMs, many of which will never be rewritten.

"When are mainframes going away is a question people have been asking for a while I think since the 1900s."

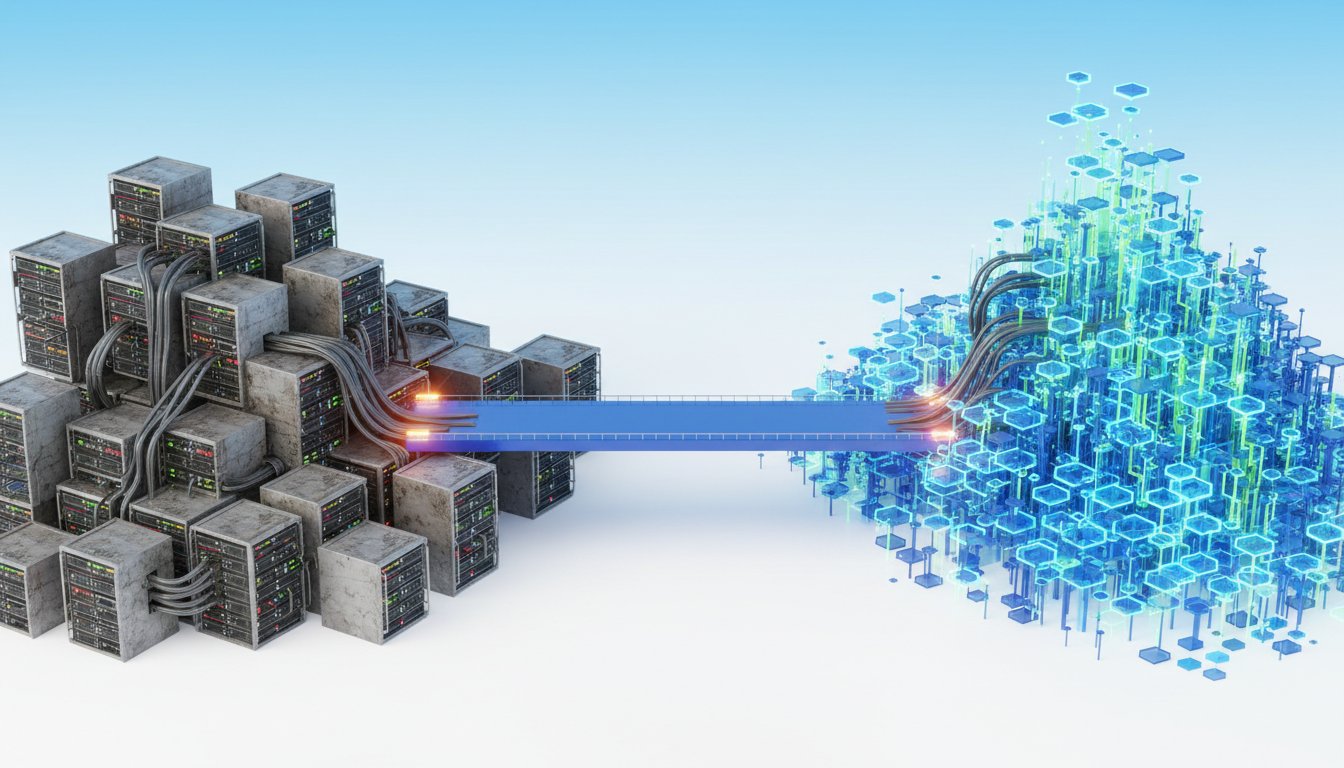

This statement, delivered with a touch of wry humor, underscores the enduring presence of legacy systems. The consequence of ignoring this reality is the creation of silos. When organizations build separate infrastructure teams for VMs and Kubernetes, the networking, identity management, and operational paradigms become so divergent that communication between them is akin to operating on different planets. This leads to significant friction, increased complexity, and a failure to leverage existing resources. A Kubernetes cluster might be full, while the VM infrastructure has available capacity, but the lack of a unified substrate prevents efficient resource utilization. The immediate benefit of adopting containers is often overshadowed by the downstream costs of managing this fractured ecosystem.

The Silo Effect: When Infrastructure Becomes a Barrier

The problem isn't just about communication; it's about operational efficiency and resource allocation. Ciruli recounts observing companies with entirely separate stacks of hardware for different purposes: one for virtual desktops, another for databases and large applications running on VMs, and a third for Kubernetes. This fragmentation means that even if VM clusters have available capacity, a full Kubernetes cluster cannot utilize it. This isn't just an inconvenience; it's a systemic inefficiency that hinders agility and inflates costs.
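The stranded-capacity problem can be illustrated with a small sketch. The pool names, CPU counts, and helper functions below are hypothetical and chosen only for illustration; the unified check also ignores per-node fragmentation, so it is an upper bound rather than a scheduler.

```python
from dataclasses import dataclass


@dataclass
class Pool:
    """One hardware silo; all figures are made up for illustration."""
    name: str
    total_cpus: int
    used_cpus: int

    @property
    def free_cpus(self) -> int:
        return self.total_cpus - self.used_cpus


def placeable_siloed(pools: dict[str, Pool], target: str, request: int) -> bool:
    """In a siloed setup, a request can only land in its own pool."""
    return pools[target].free_cpus >= request


def placeable_unified(pools: dict[str, Pool], request: int) -> bool:
    """On a shared substrate, free capacity anywhere can serve the request.

    Simplification: sums free CPUs and ignores per-node fragmentation.
    """
    return sum(p.free_cpus for p in pools.values()) >= request


pools = {
    "vdi": Pool("vdi", total_cpus=200, used_cpus=120),
    "vm": Pool("vm", total_cpus=400, used_cpus=220),
    "kubernetes": Pool("kubernetes", total_cpus=300, used_cpus=300),  # full
}
request = 40  # CPUs needed by a new containerized workload

siloed_ok = placeable_siloed(pools, "kubernetes", request)
unified_ok = placeable_unified(pools, request)
stranded = sum(p.free_cpus for n, p in pools.items() if n != "kubernetes")

print(f"siloed placement possible:  {siloed_ok}")
print(f"unified placement possible: {unified_ok}")
print(f"free CPUs the Kubernetes silo cannot reach: {stranded}")
```

With these made-up numbers the Kubernetes silo rejects the request even though the other two pools hold idle CPUs, which is the inefficiency described above.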

The ephemeral nature of IP addresses in Kubernetes, where pods are spun up and down and may come back with a different IP, further complicates direct communication with more static VM environments. While IP-to-IP communication is technically possible, managing network policies across these disparate systems becomes a labyrinthine task. This complexity can lead to workarounds, such as routing all traffic through an egress point and implementing whitelisting, which introduce their own network policy challenges.

A more elegant solution, as Ciruli points out, is to run Kubernetes within VMs. This approach, which might seem counterintuitive, bridges the gap. By leveraging VM networking policies, a unified network policy can be applied, simplifying communication and security between containerized and VM-based workloads. Furthermore, running Kubernetes within VMs dissolves the hardware silos. The same physical nodes can host both VM-based workloads and Kubernetes clusters, allowing for greater fungibility and efficient use of existing infrastructure. This approach acknowledges the reality of enterprise IT landscapes, where a mix of technologies is the norm, not the exception.

"The fact is that the kind of networking the identity management the networking between all of those big stacks of metal is is all fundamentally different and it might as well be on the moon."

This highlights the core consequence of siloed infrastructure: it creates artificial barriers that impede seamless integration and operational synergy. The immediate gain from a new technology like Kubernetes is diminished if it cannot effectively interact with the existing, critical systems running on VMs.
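One way to see why ephemeral pod IPs make IP-based rules brittle is the sketch below. It is a generic illustration, not any vendor's policy API: the `Workload` type, the addresses, and the label string are all assumptions. It contrasts a rule keyed on an IP address with a rule keyed on a stable label, which is the idea behind selecting workloads by identity rather than by address.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    """Hypothetical workload record; fields are illustrative only."""
    name: str
    ip: str
    labels: frozenset[str]


def allowed_by_ip(source: Workload, allowlist: set[str]) -> bool:
    """Rule keyed on address: valid only while the source keeps its IP."""
    return source.ip in allowlist


def allowed_by_label(source: Workload, required_label: str) -> bool:
    """Rule keyed on identity: survives rescheduling and IP changes."""
    return required_label in source.labels


# A pod as first scheduled, and the IP rule written for it on the VM side.
pod_before = Workload("billing-api", "10.42.0.17", frozenset({"app=billing"}))
ip_allowlist = {pod_before.ip}

# The same pod after being rescheduled: same labels, new address.
pod_after = Workload("billing-api", "10.42.3.91", frozenset({"app=billing"}))

ip_ok_before = allowed_by_ip(pod_before, ip_allowlist)
ip_ok_after = allowed_by_ip(pod_after, ip_allowlist)
label_ok_after = allowed_by_label(pod_after, "app=billing")

print(f"IP rule before reschedule:   {ip_ok_before}")
print(f"IP rule after reschedule:    {ip_ok_after}")
print(f"label rule after reschedule: {label_ok_after}")
```

The IP rule silently stops matching once the pod moves, while the label rule keeps working. A policy layer shared by VMs and Kubernetes would need some stable identity of this kind on both sides.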

The AI Gambit: Modernizing the Unmodernizable

The conversation then pivots to a particularly challenging aspect of legacy systems: mainframes and the languages their applications are written in, such as COBOL and Pascal. Rewriting these decades-old applications by hand is often prohibitively expensive and risky. Here, Ciruli sees a significant opportunity for artificial intelligence, particularly large language models (LLMs). The idea is not a wholesale manual rewrite, but LLM-assisted modernization.

One promising pathway involves using the original code to generate a comprehensive suite of test cases. This allows teams to rigorously verify that any modernized version behaves identically to the original, a critical step in de-risking the process. Following this, LLMs can be employed to generate a modern equivalent of the application. While this is not a silver bullet, Ciruli expresses optimism that this is one area where AI can deliver tangible, practical value, potentially enabling organizations to finally decommission legacy systems that have long been considered untouchable.

"We will see LLMs put to work to something that will actually be be useful I don't I haven't heard of anyone actually you know say hey we just decommissioned our last mainframe yet but my guess is this is one of the areas where it will be successful."

This suggests a delayed but significant payoff. The immediate effort involved in using AI for modernization (generating tests, prompting LLMs, and verifying output) might seem substantial, but the long-term advantage of shedding costly and complex mainframe operations could be immense. The conventional wisdom of "just rewrite it" is often impractical; AI offers a potentially more achievable path to modernization, albeit one that requires patience and careful execution.
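The test-first pathway described above resembles what is often called characterization (or golden-master) testing: record what the original does, then require any replacement to match. The sketch below is a minimal illustration under stated assumptions. The three interest functions are hypothetical stand-ins, and the input list is hard-coded where, in the workflow Ciruli describes, an LLM-generated test suite would supply the cases.

```python
from typing import Callable

Args = tuple[int, int]


def legacy_interest(balance_cents: int, rate_bp: int) -> int:
    """Hypothetical stand-in for a legacy routine: truncating integer math."""
    return balance_cents * rate_bp // 10_000


def modern_interest(balance_cents: int, rate_bp: int) -> int:
    """Hypothetical rewrite that preserves the truncating behavior."""
    return (balance_cents * rate_bp) // 10_000


def rounding_candidate(balance_cents: int, rate_bp: int) -> int:
    """Hypothetical rewrite that 'fixes' truncation and so changes behavior."""
    return round(balance_cents * rate_bp / 10_000)


def record_golden(fn: Callable[..., int], inputs: list[Args]) -> dict[Args, int]:
    """Run the original once and capture its outputs as the reference."""
    return {args: fn(*args) for args in inputs}


def find_mismatches(
    fn: Callable[..., int], golden: dict[Args, int]
) -> list[tuple[Args, int, int]]:
    """Return (inputs, expected, actual) for every case the candidate gets wrong."""
    return [
        (args, expected, fn(*args))
        for args, expected in golden.items()
        if fn(*args) != expected
    ]


# Illustrative inputs: (balance in cents, rate in basis points).
inputs = [(0, 250), (10_000, 250), (99_999, 125), (1, 1)]
golden = record_golden(legacy_interest, inputs)

print(find_mismatches(modern_interest, golden))
print(find_mismatches(rounding_candidate, golden))
```

The faithful rewrite produces no mismatches, while the rounding variant is flagged on the one input where truncation and rounding differ. The value of the approach depends on how well the generated inputs cover the original's behavior, which this sketch does not address.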

The Cloud-On-Prem Advantage: Flexibility and Economics

The discussion also touches upon the evolving relationship between cloud and on-premises infrastructure. While the hyperscalers have made Kubernetes incredibly accessible, Ciruli notes that on-premises Kubernetes adoption has lagged due to the significant "care and feeding" required. Nutanix aims to bridge this gap by offering an on-premises Kubernetes solution that is as easy to deploy and manage as cloud-based offerings. This is crucial for enterprises that, for security, legal, data, or latency reasons, must keep certain workloads on-premises.

Interestingly, Ciruli observes a trend of organizations moving workloads back on-premises, even those that were "born in the cloud." He shares an anecdote about a cloud-native company spending tens of millions of dollars monthly in the cloud, planning to move 80% of that back on-premises. The realization is that for stable, baseline workloads, renting cloud resources can be more expensive than buying and managing them on-premises. The cloud excels at flexibility, handling dramatic shifts in demand, and providing access to specialized services. However, for predictable, high-utilization workloads, the economics can favor on-premises deployment.

"They were born in the cloud and they've realized they've done the economics renting is more expensive than buying and for a workload that you're going to if you're going to use that machine all the time... then yeah you can probably run that cheaper at scale on premises."

This signals a shift from a purely cloud-first mentality to a more nuanced, "right tool for the right job" approach. The advantage lies in the ability to make intelligent decisions about workload placement, rather than having placement dictated by tool availability. Organizations that can effectively manage both on-premises and cloud environments, leveraging the strengths of each, will gain a significant competitive edge.
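The rent-versus-buy reasoning can be made concrete with a break-even calculation. Every number below (hourly rate, purchase price, amortization period, operating cost) is a made-up assumption, not a figure from the conversation, and the model deliberately ignores discounts, staffing, and data-transfer costs.

```python
HOURS_PER_MONTH = 730.0  # average hours in a month


def monthly_cloud_cost(hourly_rate: float, utilization: float) -> float:
    """On-demand rental: pay only for the fraction of hours actually used."""
    return hourly_rate * HOURS_PER_MONTH * utilization


def monthly_onprem_cost(
    purchase_price: float, amortization_months: int, monthly_opex: float
) -> float:
    """Owned hardware: cost is the same whether the machine is busy or idle."""
    return purchase_price / amortization_months + monthly_opex


def break_even_utilization(
    hourly_rate: float,
    purchase_price: float,
    amortization_months: int,
    monthly_opex: float,
) -> float:
    """Utilization above which owning is cheaper than renting."""
    onprem = monthly_onprem_cost(purchase_price, amortization_months, monthly_opex)
    return onprem / (hourly_rate * HOURS_PER_MONTH)


# Hypothetical inputs for one server-sized workload.
hourly_rate = 2.00          # cloud price per hour
purchase_price = 24_000.0   # hardware cost
amortization_months = 48
monthly_opex = 230.0        # power, space, support

onprem = monthly_onprem_cost(purchase_price, amortization_months, monthly_opex)
cloud_always_on = monthly_cloud_cost(hourly_rate, utilization=1.0)
threshold = break_even_utilization(
    hourly_rate, purchase_price, amortization_months, monthly_opex
)

print(f"on-prem per month:          {onprem:.2f}")
print(f"cloud per month, always on: {cloud_always_on:.2f}")
print(f"break-even utilization:     {threshold:.0%}")
```

Under these assumptions, a machine that runs more than half the time is cheaper to own, while a bursty workload that runs only occasionally is cheaper to rent. That matches the split described above between stable baseline workloads and workloads with dramatic shifts in demand.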

Key Action Items

- Short-Term (Immediate to 3 Months):

  - Audit VM and Kubernetes Resource Utilization: Identify underutilized VM capacity and full Kubernetes clusters to understand current resource allocation inefficiencies.

  - Map Inter-Application Dependencies: Document critical communication paths between VM-based applications and containerized services to identify potential integration challenges.

  - Explore AI for Test Case Generation: For key legacy applications, pilot the use of LLMs to generate comprehensive test suites for future modernization efforts.

- Medium-Term (3-12 Months):

  - Investigate Unified Infrastructure Platforms: Evaluate solutions (like Nutanix) that offer integrated VM and Kubernetes management to break down infrastructure silos.

  - Develop Hybrid Cloud Networking Policies: Design and implement consistent network policies that span both VM and Kubernetes environments to simplify secure communication.

  - Pilot Kubernetes-in-VM Deployments: Experiment with running Kubernetes clusters within VMs on existing infrastructure to assess performance and operational benefits.

- Long-Term (12-18+ Months):

  - Strategic Workload Placement: Develop a framework for deciding whether workloads are best suited for on-premises or cloud environments based on economics, security, and performance requirements.

  - AI-Driven Legacy Modernization Strategy: Based on initial pilots, formulate a long-term strategy for using AI to modernize critical legacy applications, potentially targeting mainframe systems.

  - Build a Unified Developer Experience: Strive to create a seamless developer experience for both on-premises and cloud deployments, enabling rapid iteration and deployment regardless of location.
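As a starting point for the dependency-mapping item, the sketch below records which substrate each application runs on and lists the calls that cross the VM/Kubernetes boundary. The application names, the call list, and the `cross_boundary` helper are all hypothetical; in practice the data would come from whatever inventory or traffic records an organization already has.

```python
# Where each application runs; names and placements are hypothetical.
substrate = {
    "ledger-db": "vm",
    "batch-report": "vm",
    "billing-api": "kubernetes",
    "web-frontend": "kubernetes",
}

# Observed (caller, callee) pairs; also hypothetical.
calls = [
    ("web-frontend", "billing-api"),
    ("billing-api", "ledger-db"),
    ("batch-report", "ledger-db"),
    ("batch-report", "billing-api"),
]


def cross_boundary(
    calls: list[tuple[str, str]], substrate: dict[str, str]
) -> list[tuple[str, str]]:
    """Return the calls whose two endpoints run on different substrates."""
    return [(src, dst) for src, dst in calls if substrate[src] != substrate[dst]]


crossing = cross_boundary(calls, substrate)
for src, dst in crossing:
    print(f"{src} ({substrate[src]}) -> {dst} ({substrate[dst]})")
```

The calls this surfaces are the ones most likely to be affected by divergent networking and identity models, so they are reasonable candidates to review first when designing shared network policies.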