Programmer's Judgment Drives AI Development Beyond Code Writing

AI-assisted development tools promise large productivity gains, but they also reshape the programmer's role by shifting the burden of specificity and judgment onto the person directing them. This conversation with Scott Hanselman suggests that the core challenge isn't merely adopting new tools, but understanding how they operate within an "ambiguity loop" and how to steer them effectively. The non-obvious implication is that a programmer's value will increasingly lie not in the ability to write code, but in taste, judgment, and the capacity to define success for AI agents. Those who master this way of working gain a significant advantage, while those who treat AI as a magical black box risk becoming mere passengers, detached from the actual construction of software. This analysis is for developers, team leads, and engineering managers who want to use AI effectively without sacrificing quality or control.

The Ambiguity Loop: Where AI Meets the Programmer's Judgment

The rapid proliferation of AI-assisted development tools, from simple autocompletion to sophisticated agentic loops, has sparked both excitement and apprehension within the software development community. Scott Hanselman frames this evolution not as a radical departure, but as a continuation of historical trends where new technologies initially evoke skepticism. He draws parallels to the introduction of C over assembly, syntax highlighting, and even Stack Overflow, each met with dire predictions of programming's demise. However, the current wave, characterized by "agentic loops" and "ambiguity loops," presents a unique challenge: the inherent uncertainty in how these models interpret and execute instructions. This isn't the deterministic world of traditional programming; it's a landscape where the programmer's role shifts from direct instruction to nuanced guidance and validation.

The core of this shift lies in the concept of the "ambiguity loop." Unlike traditional programming, where code executes precisely as written, LLMs often operate in a realm of probabilistic interpretation. Hanselman illustrates this with the example of prompting an AI to "make me a clone of Minecraft." The word "Minecraft" itself carries immense semantic weight, effectively acting as a 50-page specification. The AI can generate impressive results, like a functional voxel world, but without the programmer's deep understanding and specificity, the output remains a probabilistic approximation rather than a guaranteed outcome. This highlights a critical divergence: programming is about expressing intent precisely, while interacting with LLMs often involves navigating their inherent ambiguity. The danger, as Hanselman points out, is mistaking this probabilistic output for engineered reliability.

"Programming is not ambiguous. It runs exactly as you wrote it. And if there's a bug, it's your fault. But an ambiguity loop is like, yeah, I don't really know. Like, things could happen."

-- Scott Hanselman

This ambiguity necessitates a re-evaluation of the programmer's skill set. The ability to write precise instructions, understand underlying technologies like HTTP or DNS, and exercise strong judgment becomes paramount. Hanselman likens it to driving a manual transmission car: while one can Uber everywhere and still arrive at the destination, understanding the mechanics provides deeper appreciation and control. Similarly, a developer who "vibe codes" without understanding the underlying mechanisms risks being unable to steer the AI effectively or troubleshoot when things inevitably go awry. This is where the concept of "fear-driven development" (FDD) becomes relevant. When a programmer is genuinely concerned about the implications of their code, especially in critical applications like health management systems, they are compelled to write extensive tests and rigorously verify the AI's output. This meticulous approach, regardless of language, ensures that the final product is not just functional but also correct and reliable.
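As a minimal sketch of what that verification might look like, assume an assistant produced the hypothetical `body_mass_index` helper below for a health application. Neither the function nor its validation rules come from the conversation; they are illustrative assumptions. The point is only that the tests probe invalid and boundary input rather than just the happy path.

```python
import math
import unittest


def body_mass_index(weight_kg: float, height_m: float) -> float:
    """Hypothetical AI-generated helper: BMI = weight / height squared."""
    # Reject physically meaningless input instead of silently returning garbage.
    for name, value in (("weight_kg", weight_kg), ("height_m", height_m)):
        if not math.isfinite(value) or value <= 0:
            raise ValueError(f"{name} must be a positive, finite number")
    return weight_kg / (height_m ** 2)


class BodyMassIndexTests(unittest.TestCase):
    def test_typical_value(self):
        # 70 / 1.75**2 is roughly 22.857
        self.assertAlmostEqual(body_mass_index(70.0, 1.75), 22.857, places=3)

    def test_zero_height_is_rejected(self):
        with self.assertRaises(ValueError):
            body_mass_index(70.0, 0.0)

    def test_negative_weight_is_rejected(self):
        with self.assertRaises(ValueError):
            body_mass_index(-70.0, 1.75)

    def test_nan_is_rejected(self):
        with self.assertRaises(ValueError):
            body_mass_index(float("nan"), 1.75)

    def test_infinite_height_is_rejected(self):
        with self.assertRaises(ValueError):
            body_mass_index(70.0, float("inf"))


if __name__ == "__main__":
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(BodyMassIndexTests)
    unittest.TextTestRunner(verbosity=2).run(suite)
```

Four of the five tests target input that a quick manual check would probably never try, which is where generated code tends to go unexamined.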

The Peril of the "One-Shot" and the Power of Steering

The allure of AI-generated code is powerful, particularly the idea of achieving significant results with minimal input -- the "one-shot" prompt. Hanselman recounts an anecdote where a colleague generated a functional Minecraft clone with a single prompt. While impressive, this success is largely attributed to the semantic density of the term "Minecraft." The AI isn't truly replicating Minecraft from scratch; it's leveraging its vast training data to interpret a complex concept. The real challenge, and the differentiator for skilled engineers, emerges when the prompt lacks such inherent specificity. Attempting to generate something without a well-defined, recognizable name or concept often leads to unpredictable and unsatisfactory results, highlighting the AI's reliance on the programmer's ability to provide clear, actionable direction.

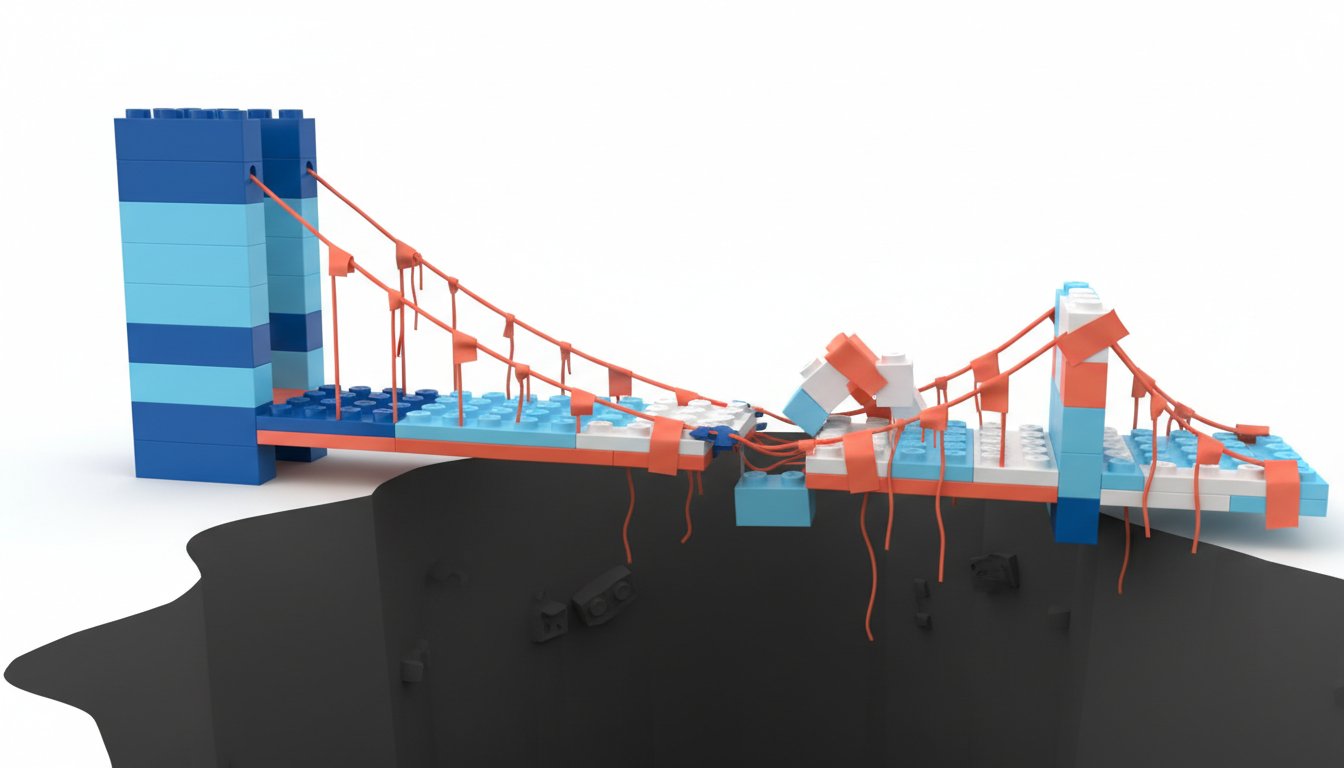

This is where "steering" becomes the new superpower. Just as a passenger gives directions to an Uber driver, developers must guide AI agents. Hanselman uses the example of specifying a particular airport (JFK vs. EWR) or a preferred route (the 405). This active guidance, even when not directly operating the vehicle, is crucial. In the context of AI agents, this translates to providing detailed prompts, refining outputs, and asking clarifying questions. The "agentic loop" is not a black box that magically produces software; it's a collaborative process where the human provides the vision, context, and validation, while the AI handles the execution and exploration of possibilities. The success of tools like GitHub Copilot CLI, which allows for ongoing conversational refinement, underscores the importance of this interactive steering.

"The idea like, hey, send a stranger in a car with candy to pick me up and I just like lean back. That's the same thing as asking an Uber to send you somewhere and asking Claude Code or GitHub Copilot to make something for you with no specificity. You're lucky. I mean, I hope you get to the airport. Good luck, you know what I mean?"

-- Scott Hanselman

The alternative, a passive approach, risks architectural shortcuts and unforeseen consequences. Hanselman’s experience modernizing a 20-year-old Windows Live Writer application illustrates this. Simply asking the AI to "get this running on Windows again" would have been insufficient. Instead, he provided a detailed, multi-page specification anticipating future needs like multi-monitor support and modern rendering engines. This proactive, detailed planning, coupled with iterative refinement over dozens of "loops," allowed the AI to produce a significantly modernized application. Without this specificity, the AI might have "succeeded" by simply compiling the old code, missing the opportunity for true modernization and potentially creating an application that would fail under contemporary usage patterns.

The Enduring Value of Craftsmanship in an AI-Augmented World

The conversation consistently circles back to the enduring importance of human judgment, taste, and craftsmanship. While AI can automate toil and accelerate development, it cannot replace the engineer's responsibility for quality, correctness, and strategic decision-making. Hanselman emphasizes that the AI is akin to a junior engineer with infinite energy but limited judgment, or a senior engineer with infinite patience but a need for precise direction. The programmer's role evolves into that of an engineering manager, guiding and validating the work of these AI assistants. This requires a deep understanding of the problem domain, the ability to define clear success criteria, and the discipline to test and verify outputs rigorously.

The concept of "Ralph loops," named after the naive but persistent Simpsons character, embodies this iterative, stubborn pursuit of success. These autonomous development cycles, while potentially costly, are becoming "shockingly cheap" for next-token prediction tasks. However, the effectiveness of a Ralph loop hinges on clearly defined stop conditions and verifiable success metrics. Without them, the loop can become a wasteful exercise. Hanselman stresses that the AI, like an eager but inexperienced intern, will declare success prematurely if not properly constrained. This is why robust test harnesses and clear, verifiable success criteria are not just beneficial but essential when leveraging AI for complex tasks.
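The shape of such a loop can be sketched in a few lines. This is an illustration under stated assumptions, not how any particular tool implements it: `run_until_verified`, `LoopResult`, and `adds_correctly` are hypothetical names, and the "agent" is a stub that returns canned drafts rather than calling a model. What it shows is the two constraints the paragraph names: an iteration budget as the stop condition, and named checks that decide success instead of the agent's own say-so.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class LoopResult:
    succeeded: bool
    iterations: int
    output: Optional[str]
    failures: List[str]


def run_until_verified(
    attempt: Callable[[List[str]], str],
    checks: Dict[str, Callable[[str], bool]],
    max_iterations: int = 10,
) -> LoopResult:
    """Call `attempt` until every check passes or the budget runs out."""
    failures: List[str] = []
    output: Optional[str] = None
    for iteration in range(1, max_iterations + 1):
        # Feed the previous failures back so the next attempt can address them.
        output = attempt(failures)
        failures = [name for name, check in checks.items() if not check(output)]
        if not failures:
            return LoopResult(True, iteration, output, [])
    # Budget exhausted: report what is still failing instead of claiming success.
    return LoopResult(False, max_iterations, output, failures)


def adds_correctly(source: str) -> bool:
    """Verifiable criterion: the generated `add` must return 5 for (2, 3)."""
    namespace: dict = {}
    exec(source, namespace)  # tolerable here only because the stub output is trusted
    return namespace["add"](2, 3) == 5


def demo() -> LoopResult:
    # Stand-in for a model: the first draft is wrong on purpose.
    drafts = iter([
        "def add(a, b): return a - b",
        "def add(a, b): return a + b",
    ])
    return run_until_verified(
        lambda failures: next(drafts),
        {"adds_correctly": adds_correctly},
        max_iterations=5,
    )


if __name__ == "__main__":
    result = demo()
    print(result.succeeded, result.iterations)  # prints: True 2
```

If the budget runs out, the caller gets `succeeded=False` along with the names of the checks that still fail, which is the opposite of an agent declaring victory early.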

"The only thing that matters now is taste and judgment."

-- Scott Hanselman

Ultimately, the future of software engineering isn't about AI replacing developers, but about developers augmenting their capabilities with AI. The focus shifts from the mechanics of writing code to the art of defining problems, architecting solutions, and ensuring quality. Languages with strong typing, like Rust, can aid in correctness, as Bryan Cantrill suggests, but Hanselman counters that rigorous testing can compensate for weaker typing. The key is to choose the right tool for the job and to use AI as a powerful collaborator, not a replacement for critical thinking. The goal is to eliminate toil, not the craft itself, ensuring that software development remains a source of delight and innovation.

Key Action Items:

- Develop a "Steering" Skillset: Actively practice providing detailed, specific prompts to AI tools. Focus on defining exact requirements, desired outcomes, and constraints, rather than broad, ambiguous requests.
  - Immediate Action: Dedicate 30 minutes daily to experimenting with AI code generation for small, well-defined tasks, focusing on prompt refinement.

- Embrace "Fear-Driven Development" (FDD): For any code generated or assisted by AI, especially in critical applications, implement a robust testing strategy. Write more tests than you think you need until you feel confident in the output.
  - Immediate Action: For your next AI-assisted code snippet, write at least 5 unit tests before integrating it.

- Define Success Explicitly for AI Agents: When using AI in loops or for complex tasks, clearly articulate what constitutes "success" and establish verifiable metrics. This prevents premature completion and ensures the AI is working towards the desired outcome.
  - Immediate Action: Before starting an AI coding session, write down 2-3 specific, measurable criteria for completion.

- Invest in Understanding Core CS Fundamentals: Continue to learn and reinforce foundational computer science concepts (HTTP, DNS, databases, compilers). This knowledge is crucial for effectively guiding AI and troubleshooting issues.
  - Longer-Term Investment (Next 6-12 months): Dedicate time to revisit a core CS topic through online courses or dedicated study.

- Treat AI as a Junior Engineer/Senior Collaborator: Understand the AI's capabilities and limitations. If you are an expert in the domain, treat it as a junior engineer needing precise instructions. If you are a novice, recognize its senior-level knowledge and be more receptive to its suggestions, but always with a critical eye.
  - Immediate Action: When interacting with an AI tool, consciously assess whether you are acting as its instructor or its student for that specific task.

- Maintain Rigorous Source Control: Always use version control (e.g., Git) for any code developed with AI assistance. This allows for easy rollback and recovery from mistakes made by the AI or by yourself in guiding it.
  - Immediate Action: Ensure all AI-assisted code changes are committed to a version control system before and after significant AI interactions.

- Prioritize Architectural Context and Documentation: For longer-running AI projects or complex systems, actively document decisions, learnings, and context. This aids in managing AI context windows and ensures future understanding, whether by humans or AI.
  - Immediate Action: For your current project, create or update a README or documentation file summarizing key decisions and learnings.