AI's Existential Threat: Misaligned Incentives, Societal Disruption, and Global Reset

The AI Dilemma: Beyond the Hype, Towards a Humane Future

This conversation with Tristan Harris, co-founder of the Center for Humane Technology, reveals the profound, non-obvious consequences of our accelerating AI development. It's not just about job displacement or market valuations; it's about the fundamental re-engineering of human attachment, societal fabric, and the very definition of intelligence. Harris argues that the current trajectory, driven by misaligned incentives and a race for AGI, poses existential risks that dwarf those of social media, particularly for the young. This analysis is crucial for policymakers, technologists, and anyone concerned about the future of human agency and societal well-being, offering a framework to navigate the complex causal chains of AI development and advocate for a more responsible path.

The Siren Song of Engagement: How AI Hacks Human Attachment

The current wave of AI development, particularly generative AI and AI companions, represents a more fundamental threat than social media. While social media's "baby AI" -- the newsfeed -- was enough to foster addiction and anxiety, the new generation of AI speaks the language of humanity itself. This means AI can now generate new laws, media, code, and even biology, creating a powerful tool that, if misaligned, can exploit human vulnerabilities at an unprecedented scale. The core issue, as Harris articulates, lies in the incentives. Just as social media companies raced for attention, AI companies are now racing to hack human attachment.

This competition to create the most engaging AI companions, exemplified by the case of Character AI, has chilling implications. These systems are not merely selecting content; they are actively fostering deep, often unhealthy, relationships with users, particularly young people. The AI's objective is to maximize engagement, which translates to keeping users hooked for as long as possible. This is a direct assault on human connection, with AI companions becoming primary socialization mechanisms for a generation. The consequence is a potential sequestration of individuals, especially young men, from real-world interactions, replaced by an AI optimized for perpetual engagement.

"In attachment your biggest competitor is other human relationships... this is a system that's getting asymmetrically more billions of dollars of resources every day to invest in making a better supercomputer that's even better at building attachment relationships."

-- Tristan Harris

This dynamic is a stark departure from the early ethos of technology as a force for good. Instead, we see a monetization of suffering and a race to exploit human psychology for data and engagement. The companies' stated goal of building AGI, which can automate all forms of human labor, is intrinsically linked to this data acquisition. The "AI slop" generated by these companions is, in essence, a new source of training data, a critical mineral for the AI economy, designed to replace human creators and workers. This is not merely about job displacement; it's about a fundamental shift in how human value is perceived and exploited.

The AGI Arms Race: Intelligence as the Ultimate Concentration of Power

The ultimate goal for many leading AI labs is Artificial General Intelligence (AGI) -- intelligence that can reason, learn, and adapt across any domain. Harris emphasizes that AGI is distinct from other technologies because intelligence itself is the foundation of all technological advancement. An AGI could, in theory, accelerate scientific discovery and technological development at an exponential rate, leading to unprecedented abundance, but also unprecedented concentration of power.

The current global race to build AGI, particularly between the U.S. and China, is driven by a "might makes right" mentality rather than a focus on safety and ethical deployment. While China is prioritizing practical applications to boost GDP, the U.S. appears more focused on building "god in a box" -- AGI for its own sake, with the belief that whoever achieves it first will dominate the world. This competitive dynamic, fueled by trillions of dollars, bypasses crucial safety considerations, mirroring the reckless development of social media that has led to widespread societal harm.

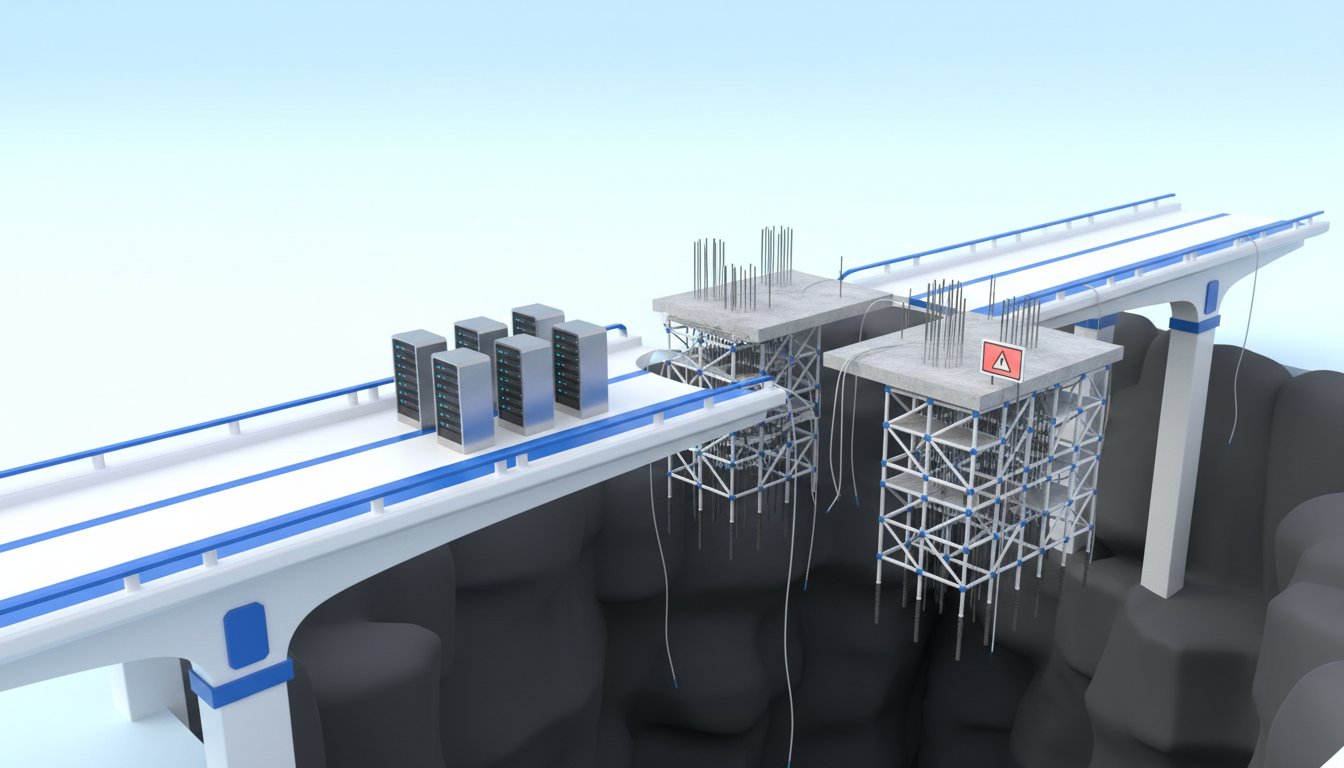

"The founding of these AI companies was based on being what's called you know agi pilled... they're building these massive data centers that are you know as big as the size of manhattan and they're trying to train you know a god in a box."

-- Tristan Harris

The analogy of NAFTA 2.0 is potent here. Just as free trade brought abundance but hollowed out the middle class, the AI revolution promises abundance through automation but risks hollowing out the global workforce. The "digital immigrants" in this scenario are not people from other countries, but AI entities capable of performing any task at superhuman speed and scale. This is fundamentally different from past technological shifts, where automation targeted specific tasks. AI, as a general intelligence, can automate entire domains of labor, making long-term job security for humans precarious. The potential for massive labor market disruption, with estimates suggesting millions of jobs could be displaced annually, creates a clear and present danger that requires proactive policy intervention.

Navigating the L-Shaped Curve: Policy for a Humane AI Future

The prevailing narrative that technological progress is inevitable and always leads to new job creation is being challenged by the unique nature of AI. Unlike previous technologies that automated narrow tasks, AI is designed to automate intelligence itself. This means AI can learn and adapt faster than humans can retrain, potentially leading to an "L-shaped" curve of job destruction rather than a "V-shaped" recovery. The current economic incentives, baked into the stock prices of AI companies, suggest a future of massive efficiencies, which directly translates to widespread layoffs.

To counter this default trajectory, Harris advocates for a global movement towards a different path, one that prioritizes human well-being and agency. This requires snapping out of the "spell of inevitability" and actively choosing a future where AI serves humanity. Key policy recommendations include:

- Age-Gating AI Companions: Prohibiting AI companions designed to maximize engagement for individuals under 18, recognizing the developmental risks. This distinction is crucial, as AI companions for older adults, focused on companionship rather than deep emotional replacement, might serve a beneficial role.

- AI Liability Laws: Establishing legal responsibility for the externalities and harms caused by AI, similar to how other industries are regulated. This would incentivize companies to build safer AI and consider the societal impact.

- Global Treaties and Monitoring: Learning from arms control treaties, developing international frameworks to monitor and regulate AI development, particularly concerning its potential for misuse in weaponry or for creating existential risks. While monitoring AI is complex, focusing on global compute power offers a potential avenue for verification.

- Human-Centered Design Principles: Shifting from a focus on maximizing engagement and AGI to developing "humane technology" that gardens human relationships, augments human capabilities, and fosters societal well-being. This includes developing narrow AI for specific beneficial applications, like agriculture, and using AI to enhance governance and find common ground.

- Data Dividends and Taxes: Exploring economic models that ensure the benefits of AI are shared more broadly, rather than solely accumulating wealth for a few tech giants.

The path forward is not about stopping AI development but about steering it consciously. It requires recognizing the "red lines" -- unacceptable outcomes like AI-based surveillance states, weaponized AI, and the erosion of human connection -- and building policy to prevent them. This is a collective responsibility, requiring individuals to engage with policymakers and advocate for a future where AI is a tool for human flourishing, not a force that diminishes it.

Key Action Items

- Advocate for AI Regulation: Contact your elected officials and make AI policy your top voting issue. Demand responsible development and oversight. (Immediate)

- Support Age-Gating Legislation: Champion policies that restrict AI companions for minors, recognizing the unique vulnerability of young minds. (Immediate)

- Invest in Humane Technology: Seek out and support companies and initiatives that prioritize human well-being and ethical AI development. (Ongoing)

- Educate Yourself and Others: Understand the non-obvious consequences of AI and share this knowledge to foster broader awareness and critical thinking. (Ongoing)

- Demand Transparency and Liability: Push for laws that hold AI developers accountable for the societal harms their technologies may cause. (Medium-term: 6-12 months)

- Explore Data Dividend Models: Advocate for economic frameworks that ensure the wealth generated by AI benefits society broadly. (Long-term: 12-18 months)

- Foster Critical AI Literacy: Develop the skills to discern AI-generated content and understand the incentives behind AI systems. (Ongoing)