Autonomous Vehicle Development: Technological, Societal, and Ethical Journeys

The Search Engine podcast episode "Are You a Good Driver?" delves into the complex, decades-long journey of developing autonomous vehicles, revealing not just the technological hurdles but also the profound societal and ethical questions that emerge. Beyond the obvious promise of enhanced safety and convenience, the conversation uncovers the hidden consequences of this technological pursuit: the erosion of established industries, the ethical dilemmas of prioritizing data over human intuition, and the potential for significant labor displacement. Those invested in the future of transportation, urban planning, or the impact of AI on employment will find this exploration particularly valuable: it offers a nuanced understanding of how a seemingly niche technological challenge is poised to reshape our world.

The narrative of driverless cars is not a simple march of progress, but a winding path marked by ambitious dreams, spectacular failures, and the quiet persistence of dedicated engineers. As explored in this episode, the quest to replace the human driver with a machine is as old as the automobile itself, a testament to an enduring desire to mitigate the inherent risks of human operation. This ambition, however, has consistently collided with the limitations of available technology and the unpredictable nature of real-world environments.

The early automobiles of the 1800s were met with resistance, not just from those fearing job losses in horse-related industries, but also from a society grappling with the dangers of these new machines. Regulations like red flag laws and the absurd Pennsylvania requirement to disassemble cars highlight a visceral, albeit sometimes misguided, fear of the unknown. The episode underscores that even these seemingly outlandish measures were rooted in a correct assessment: cars were unsafe, and their unregulated proliferation led to devastating consequences, particularly for children. It took decades of societal adaptation--laws, licenses, road design, safety features--to make cars a manageable, albeit still risky, part of life.

The true acceleration of the driverless car dream, however, can be traced to the DARPA Grand Challenges. These were not just races; they were crucibles that forged the foundational talent for the industry. The contrast between the academic, hardware-focused approach of teams like Carnegie Mellon's Red Team and the more software-centric, entrepreneurial spirit embodied by Anthony Levandowski's early attempts foreshadows the different philosophies that would later define the industry. Sebastian Thrun's observation that the challenge was fundamentally a software problem, not a hardware one, proved prescient. His vision, shaped by personal loss from traffic accidents, was not just about military applications but about saving millions of lives globally.

"The challenge really is to build a self-driving car that can drive in the desert. I can get a rental car, they can do it just fine, provided there's a person inside. And the challenge is really to take the person out of the driver's seat and replace it by a computer. That is not a problem of bigger tires. That's actually really a software problem."

-- Sebastian Thrun

This emphasis on software and machine learning, particularly the concept of learning from vast datasets, became the engine of progress. The initial DARPA challenges, though filled with spectacular failures like Ghost Rider toppling over or Sandstorm melting its tires, served a critical purpose: they flushed out inventors and jump-started the ecosystem. The subsequent Grand Challenge, won by Thrun's Stanford team with Stanley, demonstrated the power of machine learning to recognize and generalize from road conditions, a significant leap forward.

The transition from the controlled environment of the desert to the chaotic reality of public roads was the next, and perhaps most perilous, hurdle. Google's Project Chauffeur, later Waymo, exemplifies the complex ethical tightrope walk. Larry Page's insistence, despite Sebastian Thrun's initial apprehension about safety, pushed the team to tackle the "impossible." The Larry 1K challenge--driving 10 specific, difficult routes of about 100 miles each without human intervention--forced the engineers to confront real-world complexities, from the subtle "nudging" behavior of human drivers to the contextual nuances of lateral acceleration.

The internal schisms within Google, particularly the clash between the "move fast and break things" mentality championed by figures like Anthony Levandowski and the more cautious, safety-first approach of Chris Urmson, highlight a fundamental tension in tech development. This tension escalated dramatically with the rise of Uber as a competitor. The ensuing legal battles, including accusations of trade secret theft and the eventual criminal conviction of Levandowski, underscore the high stakes and the ethical compromises that can arise in a race for technological dominance.

"He was a move fast and break things kind of guy."

-- Chris Urmson (describing Anthony Levandowski)

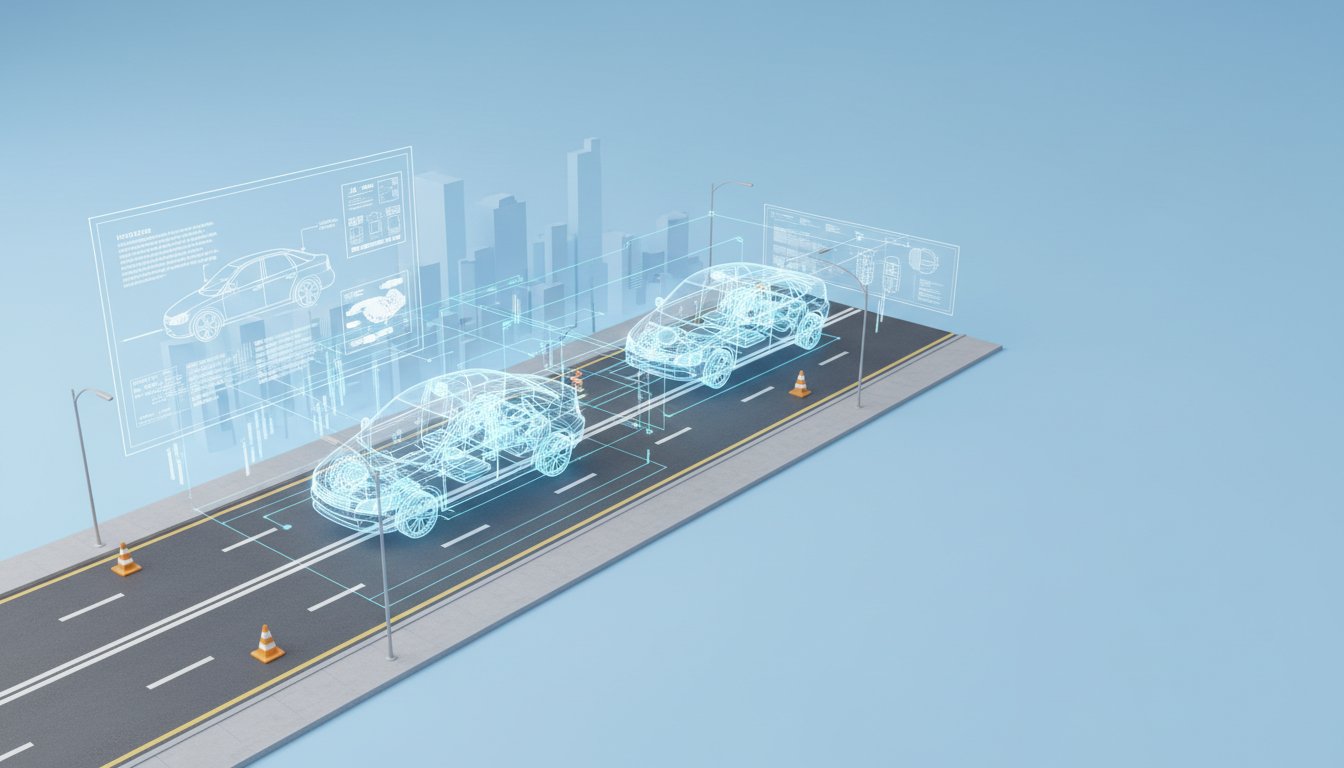

The tragic death of Elaine Herzberg in an Uber self-driving car accident serves as a stark reminder of the real-world consequences when safety is compromised for speed. The incident exposed critical flaws in Uber's system and its safety protocols, contrasting sharply with Waymo's more deliberate, data-driven approach. Waymo’s commitment to collecting millions of miles of data and making that information transparent, even with its limitations, has been crucial in building a credible safety case. While Waymo's data suggests a significant reduction in severe crashes compared to human drivers, the question of fatal accidents remains statistically uncertain, requiring hundreds of millions more miles of data for definitive conclusions.
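To see why claims about fatalities need so much data, here is a rough back-of-the-envelope sketch; it is not a calculation taken from the episode. It assumes fatal crashes follow a Poisson process and uses an illustrative human benchmark of 1.09 fatalities per 100 million miles, a figure in the range of published US averages but chosen here as an assumption. The function name `miles_needed` is likewise hypothetical.

```python
import math


def miles_needed(rate_per_mile: float, confidence: float = 0.95) -> float:
    """Fatality-free miles required to bound the fatality rate.

    Under a Poisson model, the chance of seeing zero fatalities in n miles
    at a true rate r is exp(-r * n). Solving exp(-r * n) <= 1 - confidence
    for n gives the mileage after which a rate of r or worse would have
    produced at least one fatality with the requested probability.
    """
    if rate_per_mile <= 0:
        raise ValueError("rate_per_mile must be positive")
    if not 0 < confidence < 1:
        raise ValueError("confidence must be strictly between 0 and 1")
    return -math.log(1 - confidence) / rate_per_mile


# ASSUMPTION: illustrative human benchmark, not a number from the episode.
HUMAN_FATALITY_RATE = 1.09 / 100_000_000  # fatalities per mile

miles = miles_needed(HUMAN_FATALITY_RATE)
print(f"{miles / 1e6:.0f} million fatality-free miles")
```

Under these assumptions the answer comes out at roughly 275 million fatality-free miles, which matches the "hundreds of millions" scale described above. Showing that a fleet is better than human drivers by a specific margin, rather than merely no worse, would take considerably more.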

The narrative also touches upon the broader societal impact, particularly the potential displacement of millions of professional drivers. The organized resistance from unions and drivers in cities like Boston illustrates the human element in this technological revolution, a counterpoint to the relentless drive for automation. The episode concludes by framing the ongoing development of driverless technology not just as an engineering feat, but as a profound societal experiment with implications that will continue to unfold.

- Embrace the "Software Problem" Mindset: Recognize that the core challenge in autonomous driving, as in many complex systems, lies in the intelligence and decision-making logic, not just the physical components. Prioritize software development and AI training.

- Invest in Long-Term Data Collection and Analysis: Understand that true safety and reliability in complex systems like autonomous vehicles are proven over millions of miles and rigorous data analysis, not just short-term tests. Commit to gathering and transparently analyzing safety data.

- Acknowledge and Mitigate Job Displacement: Proactively plan for the societal impact of automation on employment. This involves not just developing the technology but also engaging with affected industries and workers to manage the transition.

- Prioritize Safety Over Speed (Especially in Public Deployments): Resist the temptation to rush deployment for competitive advantage. The long-term viability and public acceptance of autonomous technology hinge on an unwavering commitment to safety, even if it means a slower rollout.

- Foster Interdisciplinary Collaboration: Recognize that building complex systems requires expertise from various fields--robotics, AI, urban planning, ethics, and law. Encourage collaboration between these disciplines.

- Prepare for Ethical Dilemmas: Anticipate and develop frameworks for addressing the ethical quandaries inherent in autonomous systems, such as decision-making in unavoidable accident scenarios. This requires foresight and public discourse.

- Invest Long-Term for Delayed Payoffs: Understand that groundbreaking technologies often require significant, sustained investment with delayed returns. Be prepared for a protracted development cycle, especially when safety and societal integration are paramount. The payoff, a trusted product, may take 5-10 years to arrive.