Inference Energy Demands Require New Distributed Data Center Paradigm

The AI inference wave is set to dwarf the energy demands of model training, creating a critical need for a new data center paradigm. This conversation reveals that the prevailing strategy of building massive, centralized data centers, while suitable for training, is ill-suited to the distributed, latency-sensitive nature of inference. The hidden consequences are potential grid strain and missed opportunities for resilience. This analysis is crucial for technology leaders, utility executives, and infrastructure planners who need to understand the systemic implications of AI's energy footprint and capitalize on the advantages of a decentralized approach. Ignoring these dynamics risks falling behind in the race to deliver low-latency AI services and build a more robust energy future.

The Hidden Cost of Centralized AI: Why Inference Demands a New Grid Strategy

The narrative around AI and energy consumption has largely focused on the colossal power demands of training massive models. While this is a significant challenge, the conversation with Ben Sooter of EPRI illuminates a more profound and often overlooked implication: the overwhelming majority of an AI model's lifetime energy use will come from inference. This shift demands a fundamental rethinking of data center strategy, moving away from monolithic, centralized behemoths toward a distributed network of micro data centers. The conventional wisdom of concentrating compute power is precisely what breaks down in the era of widespread AI application.

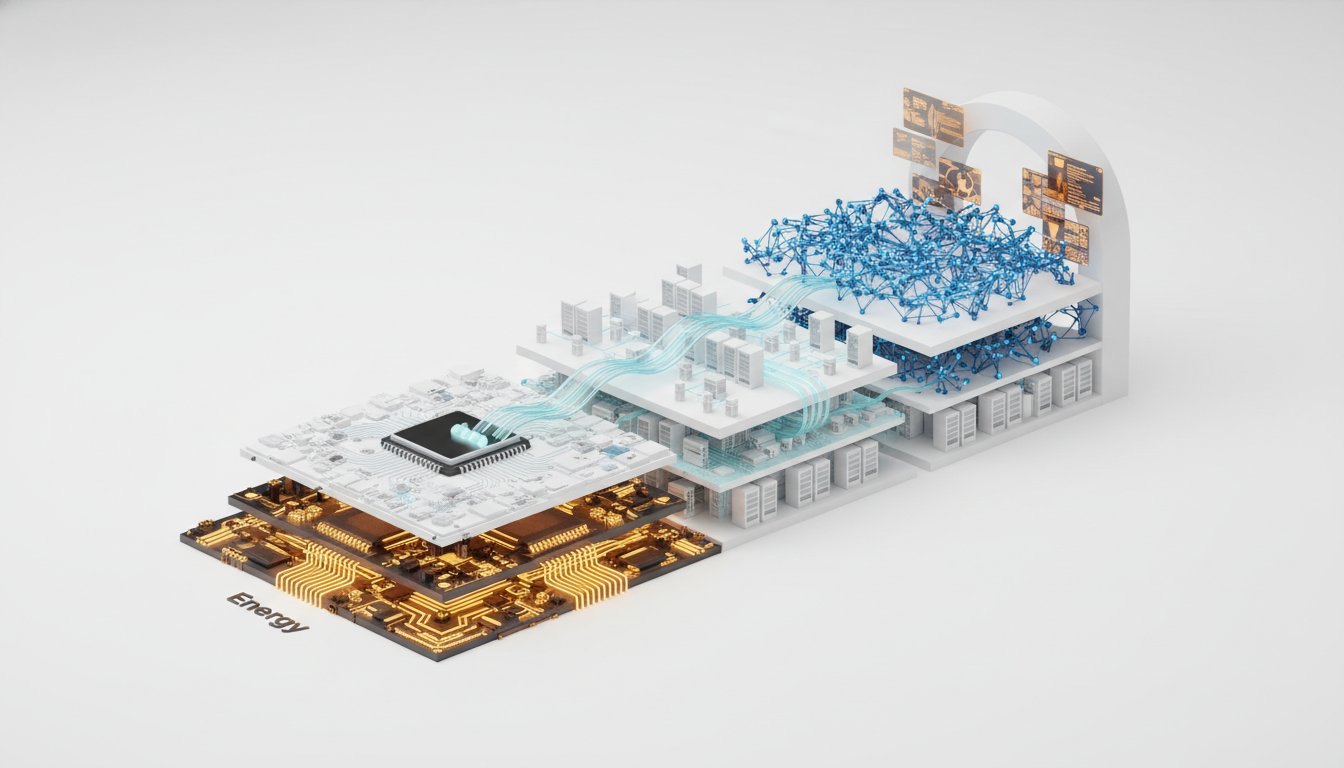

The core of the issue lies in the distinct energy profiles of training versus inference. Training is characterized by massive, instantaneous power spikes (hundreds of megawatts on demand), but it is a relatively short-lived endeavor for any given model. This "slam and fall off" characteristic, as Sooter describes it, presents significant technical hurdles for grid operators. However, the real energy tsunami is inference. Sooter highlights a startling statistic: "80% of it is in the inference side." And as AI applications move beyond simple chatbots to real-time translation, augmented reality, and autonomous systems, the demand for compute power will not only surge but will also become far more geographically dispersed and continuous.
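
To put the 80% figure in proportion, here is a back-of-the-envelope sketch. It assumes the split refers to a model's lifetime energy use, which the conversation implies but does not define precisely. The function name is illustrative and not from the conversation.

```python
def inference_to_training_ratio(inference_share: float) -> float:
    """Ratio of inference energy to training energy for a given inference share.

    Assumption: lifetime energy is split only between training and inference,
    so training accounts for (1 - inference_share).
    """
    if not 0.0 < inference_share < 1.0:
        raise ValueError("inference_share must be strictly between 0 and 1")
    return inference_share / (1.0 - inference_share)


# The 80% share cited by Sooter.
ratio = inference_to_training_ratio(0.80)
print(f"Inference uses about {ratio:.1f}x the energy of training")
```

Energy and capacity are different quantities. How much capacity a given amount of energy implies depends on how heavily the hardware is utilized, so this energy ratio is not directly comparable to Sooter's capacity estimate of "a couple of times" what is being built for training.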

"80% of it is in the inference side. So the vast majority is actually in the inference side. So if you think about how much capacity we're building for training, we're going to need a couple of times that to meet the demand for all the inference."

This statistical reality has profound implications for grid management. The assumption that inference loads will simply smooth out because user requests arrive at random is already being challenged by the emergence of agentic AI, which can perform complex tasks autonomously, potentially at any time. Sooter notes this evolving understanding: "all of a sudden I'm like, well, that completely changes the paradigm because now it's running at night while I'm sleeping, and is it going to do more?" This unpredictability, coupled with the sheer scale of inference demand, renders the centralized mega-data center model increasingly untenable. Building more of these massive facilities simply to handle inference would place an unsustainable burden on the grid, requiring enormous new power generation and transmission infrastructure.

The solution, as explored in the conversation, lies in the strategic deployment of micro data centers. These facilities, often in the range of 3-20 megawatts, are designed to be located closer to end-users. This geographical distribution is critical for addressing the latency sensitivity of many inference applications. Sooter draws a parallel to the evolution of streaming media, where "mirroring onto the local networks" improved distribution. Similarly, game servers have long relied on geographically dispersed infrastructure. The advantage here is not just performance; it's also grid resilience and efficiency.
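
The latency argument can be illustrated with a rough propagation-delay estimate. This is a minimal sketch, assuming signals travel through optical fiber at roughly 200,000 km/s (about two-thirds of the speed of light in vacuum). The site names and distances are hypothetical, and real-world latency also includes routing, queuing, and the inference computation itself.

```python
from typing import Mapping

# Assumption: approximate signal speed in optical fiber (~2/3 of c).
FIBER_SPEED_KM_PER_S = 200_000.0


def round_trip_ms(distance_km: float) -> float:
    """Propagation-only round-trip time in milliseconds over fiber."""
    if distance_km < 0:
        raise ValueError("distance_km must be non-negative")
    return 2.0 * distance_km / FIBER_SPEED_KM_PER_S * 1000.0


def nearest_site(site_distances_km: Mapping[str, float]) -> str:
    """Return the name of the site with the shortest fiber distance."""
    if not site_distances_km:
        raise ValueError("at least one site is required")
    return min(site_distances_km, key=lambda name: site_distances_km[name])


# Hypothetical fiber distances from one user population to three sites.
sites = {
    "remote-campus": 1500.0,
    "metro-substation-a": 40.0,
    "metro-substation-b": 65.0,
}

for name, km in sites.items():
    print(f"{name}: ~{round_trip_ms(km):.2f} ms round trip (propagation only)")

best = nearest_site(sites)
print(f"Closest site: {best}")
```

Under these assumptions, each 100 km of fiber adds roughly 1 ms of round-trip delay, which is why siting compute near population centers matters for latency-sensitive inference.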

"Positioning them geographically around where the people are tends to make more sense because those are, they can be more latency sensitive, et cetera. So you don't necessarily want to have them just in one place in the middle of nowhere."

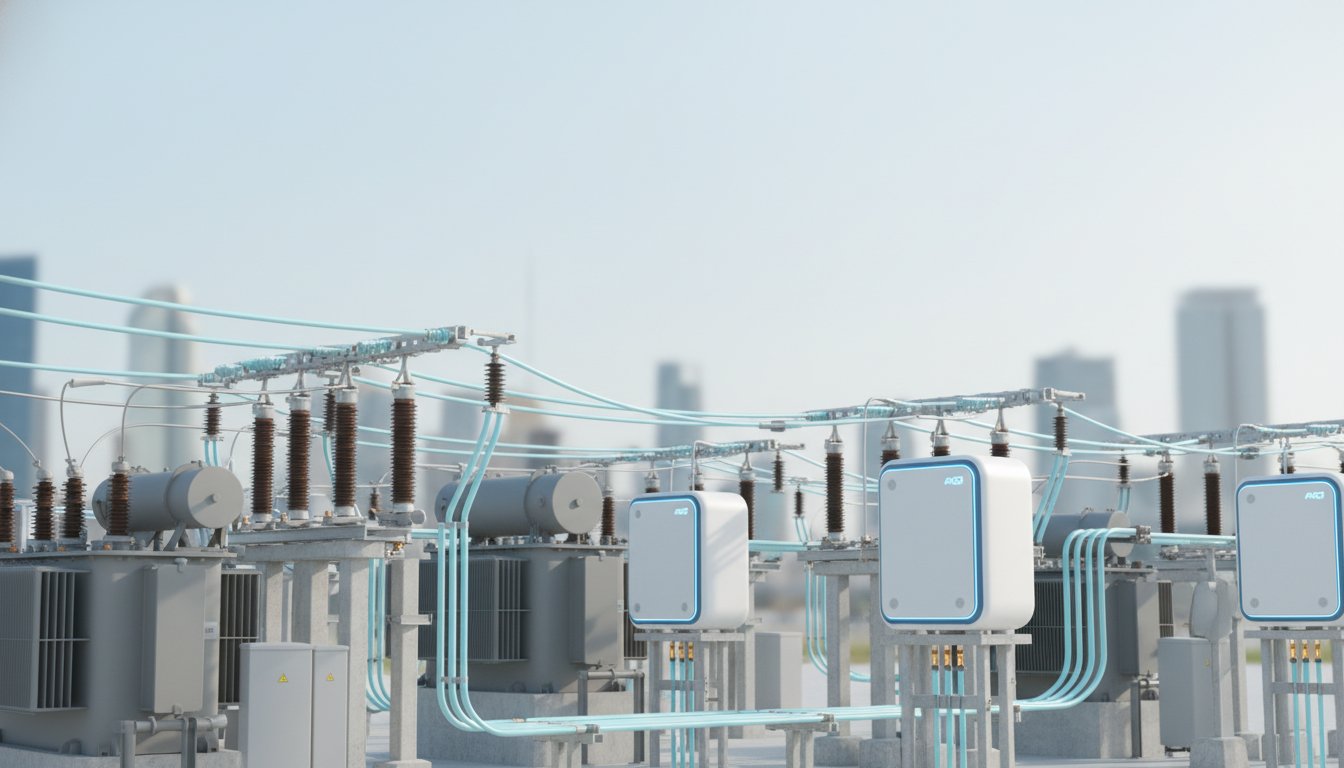

A key insight is the potential to leverage existing, underutilized substations. These locations often have available power capacity that can be tapped without the need for extensive new grid build-outs. This approach, dubbed "distributed inference," offers a faster path to deployment by circumventing the lengthy interconnection queues associated with large-scale grid connections. Instead of waiting years for new transmission lines, micro data centers can be integrated into the existing grid fabric, often on-site or adjacent to substations. This strategy directly addresses the "speed to power" imperative in the race for AI capabilities.

The systemic advantage of this distributed model extends to grid flexibility and resilience. Sooter explains how micro data centers can be engineered to reduce their load during peak demand events, thereby unlocking more overall capacity at a substation. This flexibility, when paired with energy storage or backup generators, allows for greater utilization of existing infrastructure. Furthermore, in the event of a local grid issue, compute tasks can be rerouted to other micro data centers in the network, providing a level of redundancy and fault tolerance that centralized facilities cannot match. This creates a more robust and adaptable system, capable of weathering disruptions.
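
The headroom effect of load flexibility can be sketched with a simple hourly model. Everything here is an illustrative assumption rather than a method from the conversation: the substation rating, the 24-hour load profile, the limit on curtailed hours, and the minimum fraction of load the data center must keep running.

```python
from typing import Sequence


def firm_headroom_mw(hourly_load_mw: Sequence[float], rating_mw: float) -> float:
    """Largest new load that never needs to curtail: rating minus the peak."""
    return max(0.0, rating_mw - max(hourly_load_mw))


def flexible_headroom_mw(
    hourly_load_mw: Sequence[float],
    rating_mw: float,
    max_curtailed_hours: int,
    min_fraction: float,
) -> float:
    """Largest new load that may curtail in at most `max_curtailed_hours` hours,
    never dropping below `min_fraction` of its full size."""
    if not 0.0 < min_fraction <= 1.0:
        raise ValueError("min_fraction must be in (0, 1]")
    if not 0 <= max_curtailed_hours < len(hourly_load_mw):
        raise ValueError("max_curtailed_hours must be in [0, number of hours)")
    ranked = sorted(hourly_load_mw, reverse=True)
    # Full output must fit in every hour except the busiest max_curtailed_hours.
    uncurtailed_limit = rating_mw - ranked[max_curtailed_hours]
    # Even at its minimum output, the site must fit under the rating at the peak.
    curtailed_limit = (rating_mw - ranked[0]) / min_fraction
    return max(0.0, min(uncurtailed_limit, curtailed_limit))


# Hypothetical substation: 30 MW rating with an early-evening peak.
RATING_MW = 30.0
existing_load_mw = [
    14, 13, 13, 12, 12, 13, 15, 17, 18, 18, 17, 17,
    18, 18, 19, 21, 24, 26, 26, 25, 22, 19, 16, 15,
]

firm = firm_headroom_mw(existing_load_mw, RATING_MW)
flexible = flexible_headroom_mw(
    existing_load_mw, RATING_MW, max_curtailed_hours=4, min_fraction=0.4
)
print(f"Firm headroom:     {firm:.1f} MW")
print(f"Flexible headroom: {flexible:.1f} MW")
```

In this toy profile, a site willing to curtail during the four busiest hours fits under the same rating at twice the size of an inflexible one. The model does not cover how the curtailed demand is served, whether by on-site storage, backup generation, or shifting work elsewhere.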

"if you have, if you can engineer it so that you can have flexibility to reduce your load, you'd reduce your demand during those peaks. You actually have a lot more, you know, envelope that that that you could potentially use. And so pairing it with energy storage, backup generators..."

The conventional approach of building mega-data centers for AI is a first-order solution that ignores the second- and third-order consequences of inference's energy demands. The true competitive advantage, and indeed the path to sustainable AI growth, lies in embracing the complexity of a distributed infrastructure. This requires a willingness to invest in infrastructure that offers delayed payoffs (improved grid stability, lower latency, and enhanced resilience) rather than focusing solely on immediate compute capacity. The teams and utilities that understand and act on this paradigm shift will be best positioned to navigate the AI inference wave.

- Immediate Action: Grid Load Analysis for Inference. Utilities and data center operators must collaborate to accurately model and forecast inference load profiles, considering factors like agentic AI and time-of-day usage shifts. This moves beyond traditional load forecasting to account for the unique, dynamic nature of AI compute.

- Immediate Action: Identify Underutilized Substations. Proactively map existing substations for available capacity and proximity to fiber infrastructure. This forms the foundation for a distributed micro data center strategy.

- Immediate Action: Pilot Micro Data Center Deployments. Initiate small-scale pilot projects (e.g., 3-5 MW) at suitable substation locations to test operational models, grid integration, and performance.

- Longer-Term Investment: Develop Grid Flexibility Services. Invest in energy storage and demand-response technologies that can be integrated with micro data centers to provide grid flexibility, enabling higher overall capacity utilization. This pays off in 12-18 months through reduced peak demand charges and enhanced grid stability.

- Longer-Term Investment: Foster Utility-Tech Partnerships. Establish formal partnerships between utilities and AI technology providers to co-design and deploy micro data center solutions that meet both compute needs and grid requirements. This requires sustained collaboration over 18-24 months.

- Strategic Investment: Explore Hybrid Centralized-Distributed Models. While micro data centers are key for inference, continue to optimize centralized facilities for training, ensuring they are powered by renewable energy sources and incorporate advanced cooling technologies. This is an ongoing strategic consideration.

- Requires Current Discomfort for Future Advantage: Grid Modernization for Distributed Loads. Utilities will need to invest in modernizing their distribution grids to better accommodate and manage numerous, smaller, dynamic loads like micro data centers. This upfront investment, which may face resistance due to immediate costs, is critical for enabling the future of AI.
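
As a starting point for the substation-mapping action above, a first-pass screen might look like the following sketch. The `Substation` fields, the example inventory, and the fiber-distance threshold are all hypothetical. The 3 MW minimum mirrors the low end of the pilot range mentioned in the list.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Substation:
    """Hypothetical record for screening; real utility data will differ."""
    name: str
    rating_mw: float
    peak_load_mw: float
    fiber_distance_km: float

    @property
    def headroom_mw(self) -> float:
        """Spare capacity at peak, floored at zero."""
        return max(0.0, self.rating_mw - self.peak_load_mw)


def screen_candidates(
    substations: Iterable[Substation],
    min_headroom_mw: float = 3.0,   # low end of the pilot size range
    max_fiber_km: float = 2.0,      # assumed threshold, for illustration only
) -> List[Substation]:
    """Keep sites with enough headroom and nearby fiber, largest headroom first."""
    eligible = [
        s for s in substations
        if s.headroom_mw >= min_headroom_mw and s.fiber_distance_km <= max_fiber_km
    ]
    return sorted(eligible, key=lambda s: s.headroom_mw, reverse=True)


# Hypothetical inventory.
inventory = [
    Substation("Elm Street", rating_mw=40.0, peak_load_mw=31.0, fiber_distance_km=0.5),
    Substation("Riverside", rating_mw=25.0, peak_load_mw=23.5, fiber_distance_km=0.2),
    Substation("Quarry Road", rating_mw=60.0, peak_load_mw=42.0, fiber_distance_km=6.0),
    Substation("Harbor", rating_mw=35.0, peak_load_mw=30.0, fiber_distance_km=1.2),
]

shortlist = screen_candidates(inventory)
for s in shortlist:
    print(f"{s.name}: {s.headroom_mw:.1f} MW headroom, {s.fiber_distance_km:.1f} km to fiber")
```

A real screen would also need to consider factors such as land availability, permitting, and the hourly load shape rather than the peak alone.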