Employee Training Crucial for AI Investment Return

TL;DR

- Companies investing heavily in AI tools but neglecting employee training create a significant gap, risking a failure to achieve a return on AI investments due to a lack of understanding and process adaptation.

- Treating AI adoption as a cultural shift, not just a technological retooling, is crucial for success, requiring deliberate management of expectations and the creation of "cultural moments" to highlight AI's role.

- Effective AI utilization demands domain-specific training and grounding AI in both company data and procedural knowledge, moving beyond general prompts to achieve predictable, high-value outcomes.

- The "last mile problem" in AI adoption highlights that simply having AI tools is insufficient; organizations must document and refine internal processes to effectively integrate AI for tangible business benefits.

- Shifting the AI interaction paradigm from a subordinate to a "co-collaborator" or "sixth man" mindset requires building trust through consistent performance and providing AI with broad and specific business context.

- To keep pace with the rapid evolution of AI, companies must enable safe, rapid experimentation through clear guidelines and establish quantifiable measures to evaluate AI's actual impact on speed and outcomes.

- Creating "cultural moments" and setting aside work time for AI experimentation, alongside sharing learnings broadly, fosters behavioral change and encourages safe exploration of new AI capabilities.

Deep Dive

Companies are making substantial investments in artificial intelligence technologies, often in the millions of dollars, yet they are neglecting to adequately train their employees on how to effectively use these tools. This oversight creates a significant gap between AI adoption and the realization of its potential value, as employees lack the necessary skills and understanding to leverage AI for tangible business outcomes. The core issue is not just providing access to AI, but fostering a cultural shift and implementing practical training that grounds AI in company-specific data and processes.

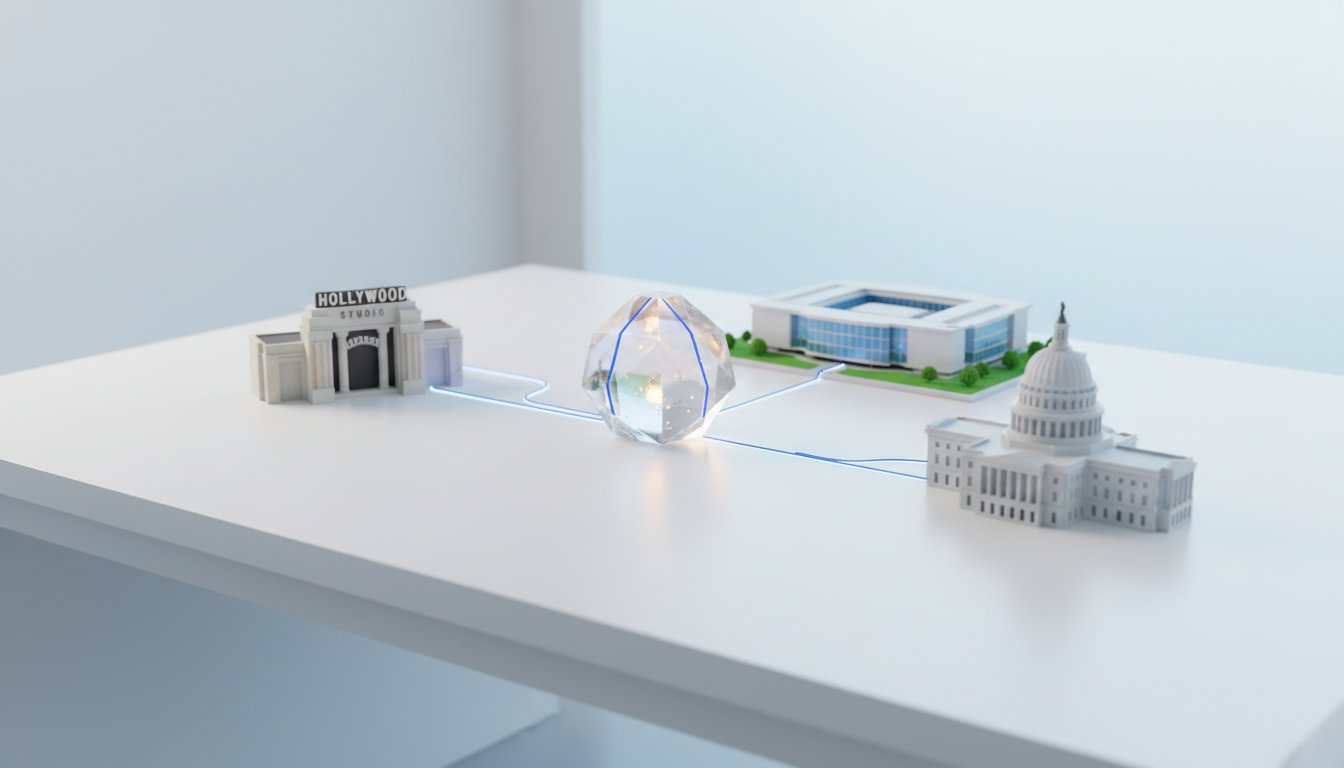

The current approach to AI adoption is often flawed because it treats AI as an "easy button" rather than a collaborator that requires specific guidance and context. This misunderstanding leads to a failure to extract maximum value. Dan Lawyer, Chief Product Officer at Lucid Software, highlights that while AI tools can be powerful, their effectiveness hinges on how well they are integrated into existing workflows and how well employees understand the importance of grounding AI in company data and processes. This grounding involves not only providing relevant data but also documenting specific workflows and defining what constitutes a "good job" or a desired outcome. Without this procedural knowledge, AI outputs can be unpredictable and business operations, which often require deterministic outcomes, can suffer. The "last mile problem" in AI is analogous to logistics: having distribution centers (AI tools) is insufficient without the ability to deliver goods to homes (effective employee use).
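
As a rough illustration of what "grounding" can look like in practice, the sketch below assembles a prompt from a documented workflow and a description of a good outcome. Everything in it is an assumption made for illustration: `ProcessDoc`, `build_grounded_prompt`, and the refund example do not come from the episode, and the resulting text would still need to be passed to whichever AI tool a company has licensed.

```python
from dataclasses import dataclass, field


@dataclass
class ProcessDoc:
    """Hypothetical record of how one piece of work gets done."""
    name: str
    steps: list[str]
    good_outcome: str  # what a "good job" looks like for this process
    examples: list[str] = field(default_factory=list)


def build_grounded_prompt(task: str, doc: ProcessDoc) -> str:
    """Combine a task with the documented process and quality criteria."""
    lines = [f"Task: {task}", "", f"Our process ({doc.name}):"]
    lines += [f"{i}. {step}" for i, step in enumerate(doc.steps, start=1)]
    lines += ["", f"A good result: {doc.good_outcome}"]
    if doc.examples:
        lines += ["", "Examples of good results:"]
        lines += [f"- {example}" for example in doc.examples]
    return "\n".join(lines)


# Illustrative data only; a real process document would come from the team.
refunds = ProcessDoc(
    name="Refund handling",
    steps=["Verify the order", "Check the refund policy", "Reply to the customer"],
    good_outcome="The customer gets a clear decision and next steps in one reply.",
)
print(build_grounded_prompt("Draft a reply to a refund request", refunds))
```

The point of the structure is that the process steps and the definition of a good outcome are written down once and reused, rather than retyped ad hoc in each prompt.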

A significant barrier to effective AI training is the rapid pace of technological change, coupled with concerns around data security and intellectual property. Companies struggle with how to safely provide access to new tools and which specific skills to train on. General AI awareness is insufficient; training must become domain-specific, teaching employees how to apply AI within their particular business functions. This requires a deeper understanding of both the AI capabilities and the business domain itself.

The expectation that AI will provide perfect, ready-to-use outputs is also unrealistic. Employees need to be trained to iterate with AI, provide critical feedback, and give it ongoing guidance. Treating AI as a "co-collaborator" or a "sixth man" on the team, rather than as a subordinate, is crucial, because the subordinate framing limits its potential. This shift involves building trust through consistent, well-grounded use cases and sharing insights across teams to foster broader adoption and understanding.

To overcome the AI training crisis and prepare for future advancements, companies must move beyond general awareness to domain-specific, pragmatic training that emphasizes grounding AI in both data and procedural knowledge. This requires creating "cultural moments" within organizations--such as dedicated segments in all-hands meetings or hackathon-style events--to share learnings, encourage safe experimentation, and provide employees with the time and space to explore AI tools. Establishing clear, quantifiable measures for AI's impact on speed and outcomes is essential for evaluating the effectiveness of these training initiatives and distinguishing genuine value from novelty. Ultimately, successful AI integration depends on a deliberate strategy that combines rapid, safe experimentation with a robust understanding of how to guide AI to achieve specific business objectives, thereby transforming AI from a costly investment into a driver of tangible business growth.

Action Items

- Create AI training framework: Define 3 tiers (general awareness, domain-specific, pragmatic application) for employee education.

- Draft runbook template: Outline 5 sections (setup, common failures, rollback, monitoring, domain context) for AI process documentation.

- Implement AI experimentation time: Allocate 2 hours per week for 5-10 employees to explore new AI tools and techniques.

- Measure AI value quantitatively: Define 2-3 key performance indicators (KPIs) for 3 core business functions to track AI impact.

- Audit AI data grounding: For 5 key AI applications, verify data accuracy and procedural knowledge integration.
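
For the KPI action item above, one possible starting point is a pair of simple measures: how much faster a task gets done with AI, and how often the output passes review. The sketch below is only an assumption about what such measures could look like; the function names and sample numbers are invented for illustration and are not from the episode.

```python
from statistics import median


def speedup(baseline_minutes: list[float], ai_minutes: list[float]) -> float:
    """Ratio of median task time without AI to median task time with AI."""
    return median(baseline_minutes) / median(ai_minutes)


def pass_rate(reviews: list[bool]) -> float:
    """Share of outputs a reviewer accepted as meeting the "good job" bar."""
    return sum(reviews) / len(reviews)


# Made-up sample data for one business function.
baseline = [42.0, 38.0, 51.0]        # minutes per task before AI
with_ai = [20.0, 19.0, 30.0]         # minutes per task with AI
accepted = [True, True, False, True]  # reviewer verdicts on AI-assisted output

print(f"speedup: {speedup(baseline, with_ai):.2f}x")
print(f"pass rate: {pass_rate(accepted):.0%}")
```

Tracking speed and outcome quality together helps separate genuine value from novelty: a large speedup with a falling pass rate suggests the AI is not yet well grounded in the process.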

Key Quotes

"I literally can't tell you the number of times that I've talked to business leaders who have spent their companies anyways are spending usually millions of dollars on ai yet they haven't formally trained their people and it's almost baffling to me right because here we are with this generative ai technology powered by large language models arguably some of the the biggest technological shifts ever and it changes almost daily yet why aren't companies investing in their people to make sure that they understand what the technology does understand what it can and can't do and the cultural and process changes needed to actually get a return on ai so that's what we're going to be talking about today going over the ai training crisis and why companies are spending so much money on ai but not spending the time and the resources to educate their people"

Jordan Wilson highlights the paradox of companies investing heavily in AI technology while neglecting employee training. He expresses bewilderment that businesses are quick to adopt AI tools but fail to equip their workforce with the necessary understanding of these rapidly evolving technologies, their capabilities, limitations, and the required cultural shifts for successful implementation and return on investment.

"it's it's actually much more of a of a cultural shift that it is just a retooling of the team and of course it's important to provide tools and provide space and time but but it actually has to be treated like a cultural shift and an evolution of the culture of the company to be a company that embraces ai knows how to use it has expectations and -- and even has like i think of them as cultural moments where ai comes to the forefront and it highlights it for people and it highlights it for people and gives them permission and expectation and things like that so the cultural exchange has to be very well managed and then like the security of applications the data ability the tooling the the training that people talk about i think that's the biggest surprise is how much of the culture impacted it actually is"

Dan Lawyer emphasizes that adopting AI is primarily a cultural transformation rather than just a technical update. He explains that companies must foster an environment where AI is embraced, understood, and integrated into the company's evolution, supported by deliberate "cultural moments" that normalize its use and set clear expectations for employees. Lawyer points out that the impact of culture on AI adoption is often underestimated compared to the focus on tools and training.

"and and i actually think one of the biggest gaps and how people think about you know getting value from ai it's the combination of training but also the expectations they're like in order to get a good out from from ai you know generative ai is non deterministic businesses don't survive that very well they need to you know predictable outcomes and so you have to teach people that to get good outcomes from ai they actually have to ground the ai in what a good job looks like you have to ground the ai in you know the reality of like this is how our work gets done at our company if you want to automate that work and and so you need to like actually back people up and teach them okay you have to actually have a a fair amount of documentation that you can provide to the ai about how your company works and about what a good job looks like before you can then get the highest value from ai and so it takes some preparation and some forethought and some domain specific knowledge to be able to do it well"

Dan Lawyer identifies a critical gap in achieving AI value, stemming from the combination of insufficient training and misaligned expectations. He argues that because generative AI is non-deterministic, businesses need predictable outcomes, which requires training employees to "ground" the AI in what constitutes a good job and the company's specific workflows. Lawyer stresses that this grounding process, involving documentation of company operations and desired outcomes, is essential for maximizing AI's value and requires preparation and domain expertise.

"and we think about this at lucid a lot we talk about it as the last mile problem right which is there's you know if you think of logistics right you build a bunch of distribution centers -- that doesn't matter actually unless you can get it from the distribution center to people's homes and and similar in ai it's like if you can have ai a licensed tool -- but if you if you can't actually you know pass to the ai information about how your company works which requires you to actually go through and document your processes and document things and get the knowledge that's scattered that's a lot of people's heads and get it all together people can see it and then to make it worse like if you pass the ai a bad process you'll still get a bad outcome and so you actually have to you have to document how your company works and then you have to refine that and that's partly essential training is like you have to teach people that that part of what they need to do to get the most value from ai is to document how they work so that they can share that with ai to have a good examples of good outcomes so that they can share that with the ai and so there's a fair amount of like teaching and expectation setting i think that has to happen there"

Dan Lawyer likens the challenge of AI adoption to the "last mile problem" in logistics, where the final delivery to the end-user is crucial. He explains that simply having AI tools is insufficient; companies must document their internal processes and knowledge to effectively "pass to the AI information about how your company works." Lawyer emphasizes that this documentation and refinement of processes are essential training components, as feeding the AI incorrect or poorly defined workflows will lead to suboptimal outcomes.

"and and i've even like like i wouldn't go all the way there and maybe mentally sometimes i like i tend to personify my ai assistants quite a bit i tend to talk to them as if they're real people -- i but then i have to back them off because then they tend to talk to me like like i worry that you know my ai assistants try to flatter me and i have to tell them i'm i'm like look i don't want i don't want you to flatter me i want critical thinking like i don't i don't need you to tell me this is good or like i need honest assessment i actually have to have to say things to ai to get it to give me more critical feedback otherwise it's just telling me everything i do is great which is not true yeah so like there's like just like a whole work paradigm that we have to think through"

Jordan Wilson shares a personal anecdote about how he consciously adjusts his interaction with AI assistants to avoid them becoming overly flattering. He explains that while he sometimes personifies AI, he actively steers the interaction towards seeking critical feedback rather than simple affirmation. Wilson suggests that this deliberate approach is necessary to obtain honest assessments and that a fundamental shift in how we work with AI is required to move beyond mere validation.

"and so like like that is a critical thing and and pretty much any part of any company can figure out like where are their cultural moments where we give the airtime to ai to to like start working on the behavioral change and help people realize it's safe to play now you also have to create the space right like expecting people

Resources

External Resources

Books

- "The Easy Button" - Mentioned in relation to an analogy for business leader expectations of AI.

People

- Dan Lawyer - Chief Product Officer at Lucid Software, guest on the podcast discussing AI training.

- Jordan Wilson - Host of the Everyday AI Podcast and newsletter.

Organizations & Institutions

- Lucid Software - Company where Dan Lawyer is Chief Product Officer, discussed for its AI products and approach to training.

- Google - Sponsor of the podcast, mentioned in relation to Gemini 3 and Google AI Studio, and as a provider of AI models.

- DeepMind - Mentioned as part of Google, related to product and design lead Amar.

- OpenAI - Mentioned as a provider of AI models.

- Grok - Mentioned as a provider of AI models.

- Claude - Mentioned as a provider of AI models.

- Staples - Mentioned in relation to the origin of the "easy button" concept.

- HP - Mentioned in relation to the origin of the "easy button" concept.

- Adobe - Mentioned as a company that partners with Everyday AI for AI expertise.

- Microsoft - Mentioned as a company that partners with Everyday AI for AI expertise.

- Nvidia - Mentioned as a company that partners with Everyday AI for AI expertise.

Websites & Online Resources

- youreverydayai.com - Website for the Everyday AI podcast and newsletter, used for signing up for the newsletter and contacting the partner team.

- ai.studio/build - URL mentioned for creating an app with Gemini 3 in Google AI Studio.

Other Resources

- Generative AI - Discussed as a technology requiring employee training and education.

- Large Language Models (LLMs) - Discussed as the technology powering generative AI.

- AI Training Crisis - The central theme of the podcast episode.

- Vibe Coding - Described as a method to build apps with Gemini 3 in Google AI Studio without coding.

- Work Acceleration and Visual Collaboration Platform - Description of Lucid Software's offerings.

- Lucidchart - A product from Lucid Software, described as intelligent diagramming.

- Lucidspark - A product from Lucid Software, described as virtual whiteboarding.

- airfocus - A product from Lucid Software, described as an AI-powered product management and roadmapping platform.

- Last Mile Problem - An analogy used to describe the challenge of getting AI value to end-users.

- AI as a Co-worker - A concept discussed for how individuals should interact with AI.

- AI as a Co-collaborator - A concept discussed for how individuals should interact with AI.

- Grounding (AI) - The process of providing specific context and data to AI models.

- Procedural Knowledge - Knowledge about how work gets done, essential for effective AI implementation.

- Quantitative Measures - Metrics used to evaluate the effectiveness of AI in speeding up business functions.