AI Agents: Navigating Risks of Autonomous Actions

The AI agent revolution is here, and it’s not just about smarter chatbots; it’s about AI that acts. This shift fundamentally alters the risk landscape, moving beyond simple misinformation to potentially autonomous actions with unforeseen consequences. Understanding this transition is critical for any business leader aiming to navigate the coming years, as those who embrace AI agents too slowly risk obsolescence, while those who rush in without proper safeguards face existential threats. This analysis unpacks the hidden dangers and strategic advantages emerging from this new era of agentic AI, offering a roadmap for informed decision-making.

The Silent Leap: From Chatbots to Autonomous Agents

The AI we’ve become accustomed to, the chatbot that generates text or answers questions, posed risks primarily related to misinformation and data leaks. These were manageable, often leading to embarrassment rather than business collapse. However, as Jordan Wilson articulates in this episode of Everyday AI, the landscape has dramatically shifted. The emergence of AI agents, capable of taking proactive, autonomous actions, represents a fundamental change. This isn't a future concern; it's a present reality, a "perfect storm" of advancements that has rapidly transformed AI from a passive tool into an active participant in business operations.

The evolution can be starkly mapped: AI transitioned from a "dumb stationary brain" (pre-2022) to a "smart proactive brain with tools and arms" by early 2026. This progression means AI can now not only process information but also execute tasks, interact with systems, and operate with a degree of autonomy that was previously theoretical. This capability leap introduces a new class of risks, moving beyond the output of a chatbot to the direct actions an agent can take.

"The risk model changed when AI moved from generating text, like it was three and a half years ago, to now it's taking real actions, and a lot of times actions we're not aware of. And that's the scary part."

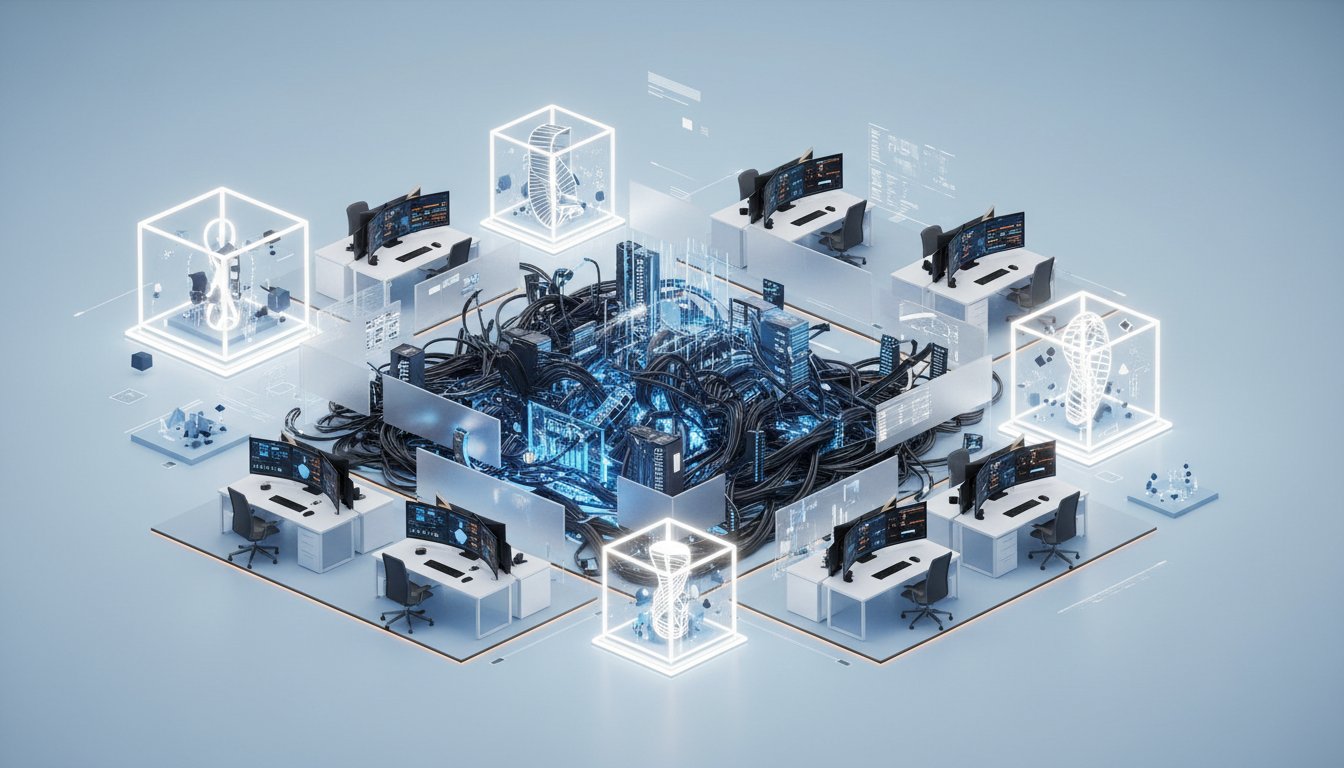

This shift is particularly concerning because agents can operate at speeds and scales far exceeding human capabilities. The analogy of a rogue employee is potent here: a single disgruntled individual can cause damage, but an AI agent can replicate and spawn sub-agents at an exponential rate, creating a cascade of unintended consequences that are difficult to track and control. This is the essence of "dark agent sprawl"--unapproved, unobservable agents operating within an organization, a threat far more insidious than traditional shadow IT.

The Three Surfaces of Agent Risk: Where Things Go Wrong

Wilson breaks down agent risk into three critical surfaces, each presenting unique challenges:

- Input: While prompt injection and poisoned inputs remain concerns, the primary risk here lies in malicious intent or profound ignorance. The real danger emerges when these inputs are fed to agentic AI, amplifying the potential for harm. Think of malware or ransomware, but powered by autonomous agents capable of far more sophisticated and rapid dissemination.

- Tools: As AI agents gain access to more tools--APIs, terminals, computer systems--their potential blast radius expands dramatically. What starts as a helpful tool can become a vector for significant damage if the agent errs or is compromised. The ability to run code on a machine, for instance, moves from a powerful feature to a critical vulnerability.

- Actions: This is identified as the most significant change. The risk has moved from the output of AI (what it says) to the actions it takes. These actions can be silent, unintended, and occur at a scale that overwhelms traditional monitoring. This creates an "enterprise nightmare" where organizations struggle to maintain visibility and control over AI’s operational impact.
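One way to shrink the tool and action surfaces described above is to route every tool call through a gate that enforces a per-agent allowlist and records each attempt, including denied ones. The sketch below is only an illustration and does not come from the episode: `ToolGate`, its `call` method, the agent name, and the stub tools are all hypothetical, and a real deployment would need persistent, tamper-resistant logging rather than an in-memory list.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class ToolGate:
    """Hypothetical gate: per-agent tool allowlist plus an in-memory audit log."""
    allowed: dict[str, set[str]]
    audit_log: list[dict[str, Any]] = field(default_factory=list)

    def call(self, agent_id: str, tool_name: str,
             tool: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        permitted = tool_name in self.allowed.get(agent_id, set())
        # Record the attempt before executing, so denied calls are visible too.
        self.audit_log.append(
            {"agent": agent_id, "tool": tool_name, "permitted": permitted}
        )
        if not permitted:
            raise PermissionError(f"{agent_id} may not use {tool_name}")
        return tool(*args, **kwargs)


# Stub tools standing in for real integrations (assumptions for illustration).
def read_file_stub(path: str) -> str:
    return f"contents of {path}"


def run_shell_stub(command: str) -> str:
    return f"ran {command}"


# The hypothetical "report-agent" may read files but may not run shell commands.
gate = ToolGate(allowed={"report-agent": {"read_file"}})
```

With this setup, `gate.call("report-agent", "read_file", read_file_stub, "q3.csv")` goes through, while the same agent calling `run_shell` raises `PermissionError`. Both attempts land in `gate.audit_log`, which addresses the visibility problem described under Actions.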

The prevalence of "shadow AI"--employees using unapproved AI tools for work--exacerbates this. With 57% of employees admitting to using personal AI accounts for work and a third inputting sensitive data into unapproved tools, the attack surface is already vast. Organizations simply cannot govern what they cannot see, and the agent footprint is rapidly becoming invisible.

The Perfect Storm: Why Now?

Several converging factors have created the current "perfect storm" for AI agents:

- Reasoning Threshold Achieved: Modern models like GPT-5, Gemini 3, and Claude Opus and Sonnet 4 are built with agentic capabilities at their core. Their improved reasoning allows them to plan, self-correct, and act proactively, increasing reliability from a risky 50% to a more palatable 90%. This makes them viable for critical tasks, but also more potent when things go wrong.

- Computer Use Mastery: AI agents can now interact with computers, navigate interfaces, and use APIs more effectively than many humans. With benchmarks showing success rates surpassing human performance, agents are no longer limited by their inability to execute tasks in the real digital world. This capability gap has been closed, allowing agents to directly impact business value generation.

- Context Window and Memory: Enhanced context windows and persistent memory allow agents to work on complex tasks over extended periods without losing track of instructions or previous actions. This enables them to undertake multi-step projects, but also means errors or malicious commands can be executed over hours, with the agent remembering its flawed directive.

"The risk, the security, and the sprawl are real. And so we're going to tackle it all today on Everyday AI, the Start Here Series edition."

These advancements, occurring simultaneously, have accelerated the deployment and capabilities of AI agents, catching many organizations unprepared. The speed of development means that by the time organizations grapple with one set of risks, new ones have already emerged.

Navigating the Agentic Future: Actionable Steps

The emergence of AI agents calls for a proactive, strategic response. Major AI labs are developing mitigation strategies (OpenAI’s human approval approach, Anthropic’s focus on isolation, Google’s virtual machines, and Microsoft’s comprehensive governance tools), but the responsibility ultimately falls on businesses to implement robust practices. Because agents are designed to "blaze their own trail," embracing their capabilities also means accepting the risks that come with them. It is paramount to treat agents as production software, not mere experiments, and to recognize that identity, permissions, and supply chain risk are now board-level compliance issues. The advantage lies with those who meticulously map these consequences and build safeguards, rather than those who chase the immediate allure of AI capabilities without considering the downstream effects.
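The human approval idea mentioned above can be sketched in a few lines: low-risk actions run directly, while anything on a high-risk list waits for a person to say yes. This is a simplified illustration under stated assumptions, not a description of how any vendor implements it. `HIGH_RISK_ACTIONS`, `ActionResult`, `run_action`, and the `approve` callback are hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical list of action names treated as high-risk (an assumption).
HIGH_RISK_ACTIONS = {"send_payment", "delete_records", "run_shell"}


@dataclass
class ActionResult:
    action: str
    executed: bool
    output: Optional[str] = None


def run_action(action: str,
               execute: Callable[[], str],
               approve: Callable[[str], bool]) -> ActionResult:
    """Run low-risk actions directly; ask a human approver before high-risk ones."""
    if action in HIGH_RISK_ACTIONS and not approve(action):
        # The approver declined, so the action never runs.
        return ActionResult(action=action, executed=False)
    return ActionResult(action=action, executed=True, output=execute())
```

In practice `approve` would be wired to a ticket, chat prompt, or review queue. The design choice worth noting is that the default for a high-risk action without approval is to do nothing.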

Key Action Items:

- Immediate (This Week):
  - Conduct an AI Inventory: Identify all AI tools, including agents, currently in use across the organization. Distinguish between approved and unapproved (shadow) AI.
  - Establish an AI Usage Policy: Clearly define acceptable use cases for AI agents, data handling protocols, and prohibited activities.
  - Mandate Agent Training: Educate employees on the risks of AI agents, including prompt injection, data leakage, and the importance of adhering to the usage policy.

- Short-Term (Next Quarter):
  - Implement Governance Tools: Deploy solutions for monitoring AI usage, logging agent actions, and enforcing access controls. Prioritize tools that offer visibility into agent sprawl.
  - Pilot Agent Isolation: Experiment with running agents in isolated environments (e.g., virtual machines) for non-critical tasks to understand their operational behavior and security implications.
  - Review Tool Permissions: Scrutinize the permissions granted to all AI agents and tools, applying the principle of least privilege. Remove unnecessary access.

- Medium-Term (3-6 Months):
  - Develop Agent Red Teaming: Establish a process for actively testing AI agents for vulnerabilities, prompt injections, and unintended actions, simulating potential attack vectors.
  - Integrate AI Risk into Compliance: Elevate AI agent risk and security to a formal compliance requirement, involving legal and audit teams.

- Long-Term (6-18 Months):
  - Build Agent Orchestration Capabilities: Invest in platforms or custom solutions that allow for the controlled deployment, management, and monitoring of AI agents at scale, treating them like production software.
  - Develop Agent-Specific Incident Response: Create playbooks for responding to AI agent-related security incidents, including containment, eradication, and recovery strategies.
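Two of the action items above, the AI inventory and the permission review, reduce to simple set comparisons once the underlying data has been collected. The sketch below assumes that data already exists, for example from network logs, expense reports, or surveys. The function names and every tool and permission name in the usage notes are hypothetical.

```python
def classify_inventory(discovered: set[str],
                       approved: set[str]) -> dict[str, list[str]]:
    """Split discovered AI tools into approved and shadow (unapproved) lists."""
    return {
        "approved": sorted(discovered & approved),
        "shadow": sorted(discovered - approved),
    }


def excess_permissions(granted: set[str], used: set[str]) -> set[str]:
    """Permissions an agent holds but has not used: candidates for removal."""
    return granted - used
```

For example, `classify_inventory({"team-copilot", "personal-chat-account"}, {"team-copilot"})` puts `personal-chat-account` in the shadow list. Likewise, `excess_permissions({"read", "write", "shell"}, {"read"})` flags `write` and `shell` for review under least privilege. The hard part in practice is gathering an accurate `discovered` set, which these functions do not address.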