In this conversation, Cal Newport critically examines Yann LeCun's provocative assertion that Large Language Models (LLMs) represent a "technological dead end" for achieving true artificial intelligence. The core implication is that the current AI landscape, dominated by massive LLMs, might be a misdirection, leading to significant wasted investment and a missed opportunity for more robust, reliable AI development. This analysis is crucial for anyone deeply invested in the future of AI, from technologists and investors to business leaders and policymakers, offering a chance to recalibrate strategies and avoid the pitfalls of an overhyped paradigm. It reveals how a focus on application-level improvements can mask fundamental stagnation in core AI capabilities, and how a different architectural approach could unlock genuine intelligence.

The Illusion of Progress: Why LLMs Might Be a Detour

The prevailing narrative surrounding AI is one of relentless, exponential advancement, largely driven by the impressive capabilities of Large Language Models (LLMs) like those from OpenAI and Anthropic. We've been inundated with predictions of widespread job automation and even existential threats. However, Yann LeCun, a pioneer in AI, posits a starkly different reality: LLMs are a "technological dead end," and the massive investments in them may be misplaced. This isn't to say AI progress has stopped, but rather that the current trajectory, focused on scaling LLMs, is fundamentally flawed and will eventually hit a wall, leaving true artificial intelligence unrealized.

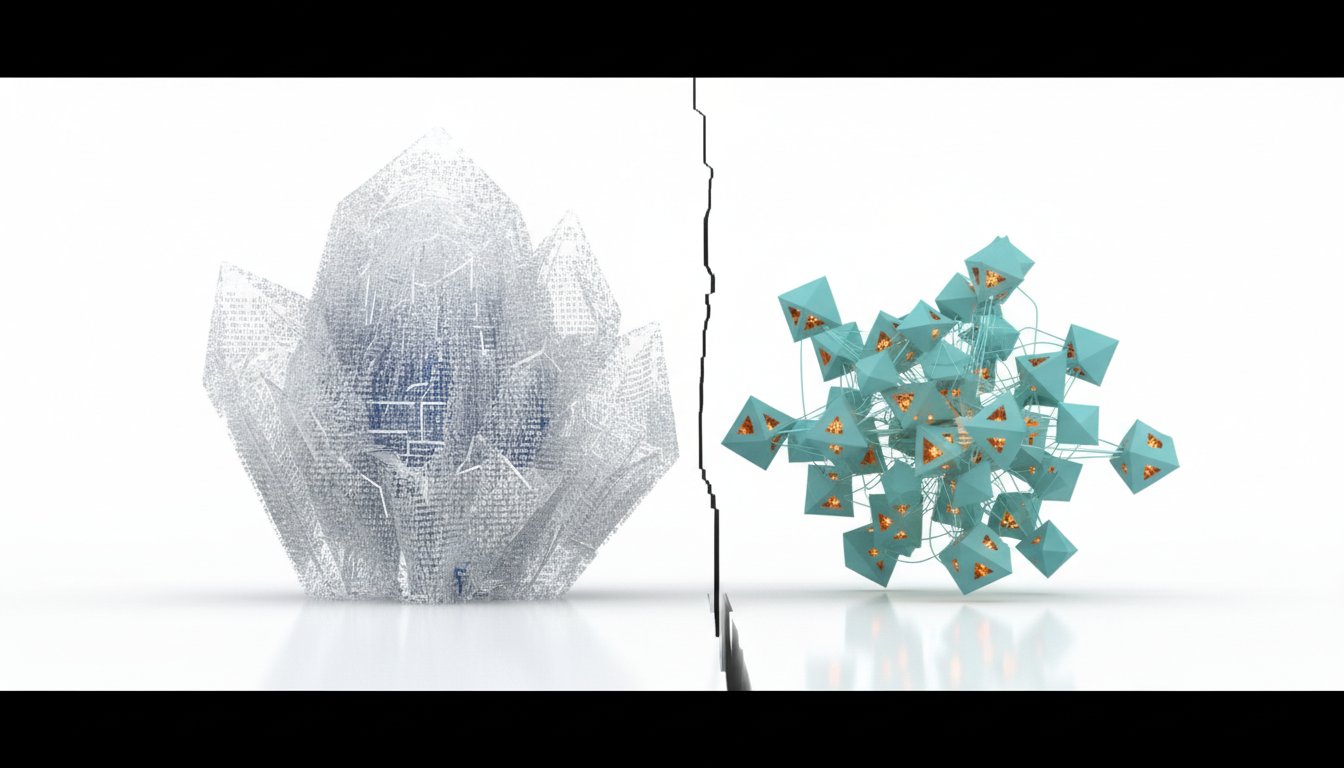

LeCun's vision, now backed by over a billion dollars in funding for his startup AMI Labs, proposes an alternative: a modular architecture for AI. Unlike the monolithic LLM approach, where a single massive model attempts to learn everything implicitly, LeCun advocates for specialized modules, each trained for a specific function and all working in concert. This contrasts sharply with the current strategy of the major AI companies, which are betting on a single, colossal LLM to serve as the "digital brain" for diverse applications, from chatbots to coding assistants.

"The problem with LLMs, he said, is that they do not plan ahead. Trained solely on digital data, they do not have a way of understanding the complexities of the real world."

The LLM approach relies on predicting the next word in a sequence, trained on vast amounts of text. While this has yielded impressive pattern recognition and knowledge encoding, LeCun argues it's an inefficient and brittle path to intelligence. His proposed modular system, detailed in papers like "A Path Towards Autonomous Machine Intelligence," envisions distinct components: a world model for understanding how things work, an actor for proposing actions, a critic for evaluating those actions, and a perception module for interpreting input. This structure allows for domain-specific training, using the most effective methods for each module: for instance, classic deep learning for vision perception and novel techniques like Joint Embedding Predictive Architecture (JEPA) for building causal world models. The implication is that by breaking down intelligence into specialized, trainable components, we can build more reliable, capable, and understandable AI systems, rather than relying on the emergent, often opaque, "smarts" of a single, gargantuan model.
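To make the division of labor concrete, here is a minimal toy sketch of how the four components described above could fit together. It is an illustration only, not LeCun's implementation: the class names, the one-dimensional "world," and the hand-written rules inside each module are all assumptions made for this example, and nothing here is learned.

```python
from typing import List


class Perception:
    """Turns raw input into an internal state (toy: parse a string into an int)."""

    def encode(self, raw_observation: str) -> int:
        return int(raw_observation.strip())


class WorldModel:
    """Predicts the next state for a given action (toy: position + step)."""

    def predict(self, state: int, action: int) -> int:
        return state + action


class Actor:
    """Proposes candidate actions (toy: step left, stay, or step right)."""

    def propose(self, state: int) -> List[int]:
        return [-1, 0, 1]


class Critic:
    """Scores a state; lower is better (toy: distance to a goal position)."""

    def __init__(self, goal: int) -> None:
        self.goal = goal

    def cost(self, state: int) -> int:
        return abs(self.goal - state)


def choose_action(state: int, actor: Actor, world_model: WorldModel, critic: Critic) -> int:
    """Plan one step ahead: imagine each proposed action, keep the cheapest outcome."""
    return min(
        actor.propose(state),
        key=lambda action: critic.cost(world_model.predict(state, action)),
    )


def run_episode(raw_observation: str, goal: int, max_steps: int = 20) -> List[int]:
    """Run the perceive -> propose -> imagine -> evaluate loop until the goal is reached."""
    perception, world_model, actor, critic = Perception(), WorldModel(), Actor(), Critic(goal)
    state = perception.encode(raw_observation)
    trajectory = [state]
    for _ in range(max_steps):
        if critic.cost(state) == 0:
            break
        action = choose_action(state, actor, world_model, critic)
        # Assumption: in this toy, the world model also stands in for the real environment.
        state = world_model.predict(state, action)
        trajectory.append(state)
    return trajectory


print(run_episode(" 2 ", goal=5))  # [2, 3, 4, 5]
```

The point of the sketch is structural: each module has a narrow interface, so any one of them could be swapped out or retrained without touching the others, and the critic's goal is an explicit value that can be inspected and changed directly.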

"We want to help them reach new situations, react to new situations with more common sense."

The Shifting Sands of LLM Advancement

The perception of rapid LLM progress, while seemingly undeniable, may be an illusion. LeCun suggests this trajectory can be understood in three stages. The first, from roughly 2020 to 2024, was the "pre-training scaling stage," where simply making LLMs larger and training them on more data demonstrably increased capabilities. This period, which saw breakthroughs like GPT-4, appears to have plateaued. Evidence suggests that further scaling beyond this point yielded diminishing returns for companies like OpenAI, xAI, and Meta.

This led to stage two, the "post-training stage," beginning around mid-2024. Unable to fundamentally improve the core LLM "brain" through scaling, companies shifted focus to extracting more utility from existing models. One technique was "chain-of-thought" prompting, in which models write out their reasoning before answering; it offered marginal benchmark improvements at the price of higher computational costs. Another was reinforcement learning-based fine-tuning, which nudges models towards specific tasks using prompt-answer pairs. While this generated charts showing benchmark gains, it often masked a lack of fundamental improvement in the underlying intelligence, leading to a proliferation of inscrutable benchmark scores rather than readily apparent user benefits.

The current stage, stage three, which began in late 2025, focuses on "application-level improvements." The emphasis has shifted from enhancing the LLM itself to building smarter programs and agents that use the LLM as a backend. Advances in coding agents, for example, are largely attributed to improvements in the software that orchestrates LLM calls and manages complex coding tasks, not breakthroughs in the LLM's core reasoning ability. This sustained focus on application layers, while creating more useful tools, has created an illusion of continued AI advancement. The underlying LLM "brains" are advancing at a glacial pace, if at all, which is why persistent issues like hallucinations and unreliability remain. The current progress is largely about finding product-market fit for a mature, albeit fundamentally limited, technology.
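The distinction between improving the model and improving the software around it can be illustrated with a small sketch. Everything below is hypothetical: `stub_model` is a stand-in for an LLM call (not a real API), hard-coded to give a buggy answer unless its prompt contains test feedback, and `run_tests` is a toy check. The model is identical in both cases; only the orchestration changes.

```python
from typing import Callable, Optional, Tuple

TASK = "Write add(a, b) that returns the sum of its arguments."


def stub_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM: buggy by default, correct once shown a failure."""
    if "FAILED" in prompt:
        return "def add(a, b):\n    return a + b\n"
    return "def add(a, b):\n    return a - b\n"


def run_tests(source: str) -> Optional[str]:
    """Run the candidate code; return None on success or a failure message."""
    namespace: dict = {}
    try:
        exec(source, namespace)  # toy only: never exec untrusted code in real systems
        result = namespace["add"](2, 3)
    except Exception as exc:
        return f"FAILED: raised {exc!r}"
    if result != 5:
        return f"FAILED: add(2, 3) returned {result}, expected 5"
    return None


def agent_loop(
    model: Callable[[str], str], task: str, max_attempts: int = 3
) -> Tuple[Optional[str], int]:
    """Orchestration layer: call the model, run tests, feed failures back, retry."""
    prompt = task
    for attempt in range(1, max_attempts + 1):
        candidate = model(prompt)
        failure = run_tests(candidate)
        if failure is None:
            return candidate, attempt
        prompt = f"{task}\n\nPrevious attempt:\n{candidate}\n{failure}\nPlease fix it."
    return None, max_attempts


# Single call, no orchestration: the answer fails the test.
print(run_tests(stub_model(TASK)) is None)  # False

# Same model wrapped in a test-and-retry loop: succeeds on the second attempt.
solution, attempts = agent_loop(stub_model, TASK)
print(solution is not None, attempts)  # True 2
```

The stub is deliberately rigged, so this says nothing about how real models behave. It only shows the mechanism the paragraph describes: a better harness can raise the success rate of the overall tool without any change to the underlying model.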

The Downstream Effects of a Misguided Bet

If LeCun's assessment holds true, the implications are profound. In the short term (1-3 years), we can expect a continued "long tail" of applications built on existing LLMs. We'll see more specialized tools, akin to "Claude Code" moments in other domains, and shifts in job toolsets. However, the doomsday scenarios of mass unemployment driven by LLMs are unlikely to materialize. Instead, the economic landscape will likely see a significant shift. Companies will increasingly opt for cheaper, open-source, or on-chip LLMs, rather than expensive frontier models. This could be beneficial for consumers, leading to more diverse and affordable applications. However, it spells trouble for the massive LLM hyper-scalers like OpenAI and Anthropic, which have attracted hundreds of billions in investment. A market correction, or even a crash, is a probable outcome, potentially slowing AI progress temporarily as investors become wary.

Looking further out (3-10 years), this is the timeframe LeCun's modular architecture approach is projected to mature. If successful, we could see highly reliable, domain-specific AI systems that rival human capabilities in specific tasks. These systems, unlike the opaque LLMs, are inherently more alignable. Their modular nature allows for direct manipulation of value systems and constraints within specific modules, making them easier to control and less prone to unpredictable behavior.

Furthermore, modular systems promise greater economic efficiency. Training a single LLM to do everything requires immense computational resources. Conversely, training specialized modules for specific domains can be far more efficient, requiring smaller models and less energy. DeepMind's DreamerV3, which excels at tasks like playing video games and navigating Minecraft with a fraction of the parameters of a typical LLM, serves as a compelling example of this efficiency and domain-specific power. However, this increased capability and efficiency in domain-specific AI also raises concerns about potential job displacement, necessitating careful consideration of societal impacts.

Actionable Takeaways for Navigating the AI Landscape

The debate between monolithic LLMs and modular AI architectures presents a critical juncture. LeCun's perspective, backed by significant investment and a deep understanding of AI's historical trajectory, suggests a fundamental re-evaluation of current AI strategies is warranted.

Immediately (Next 1-3 Months):

- Diversify AI Tooling: Begin exploring and experimenting with a range of AI models, including open-source and smaller, domain-specific options, rather than relying solely on major LLM providers. This hedges against the potential volatility of hyper-scaler investments.

- Focus on Application Value: Prioritize understanding how AI can solve specific business problems by optimizing workflows and enhancing existing tools, rather than chasing the latest LLM capabilities. The gains are currently more in the application layer than in core intelligence.

- Educate Teams on LLM Limitations: Foster a realistic understanding of current LLM capabilities, including their propensity for hallucination and their reliance on pattern matching rather than true reasoning.

Short-Term Investments (Next 3-9 Months):

- Investigate Modular Architectures: Research and potentially pilot applications or frameworks that align with modular AI principles. This could involve exploring agent-based systems designed for specific tasks.

- Develop Domain-Specific AI Strategies: Identify critical business domains where bespoke AI solutions, rather than general-purpose LLMs, could offer superior performance and reliability.

Longer-Term Strategic Investments (Next 12-24 Months and Beyond):

- Build Internal AI Expertise: Cultivate teams capable of understanding and developing AI systems that leverage modular designs and domain-specific training, moving beyond simple prompt engineering.

- Prioritize Alignment and Reliability: For critical applications, favor AI architectures that offer greater transparency and control over behavior, such as modular systems, to ensure alignment with human values and operational requirements. The discomfort of learning new architectures now may pay off later in more reliable AI.

- Prepare for Market Shifts: Anticipate potential market corrections in AI hyper-scalers and be prepared to adapt investment and strategy accordingly. The current LLM-centric market may not be sustainable long-term.

- Consider Economic Efficiency: As AI adoption grows, the economic feasibility of solutions will become paramount. Modular, domain-specific AI offers a path to greater efficiency and scalability compared to the immense cost of training and running massive LLMs.