AI Dominance Hinges on Controlling the Value Chain, Not Just Models

The AI landscape is rapidly evolving, with major players like Nvidia, Meta, and Google making substantial investments and strategic shifts. This week's news reveals a complex ecosystem where hardware manufacturers are becoming software competitors, established tech giants are undergoing significant internal restructuring driven by AI, and new paradigms like "world models" and local AI agents are emerging. The non-obvious implication is that the race for AI dominance is not just about developing better models, but about controlling the entire value chain--from hardware and software to deployment and user adoption. This analysis is crucial for business leaders, developers, and investors seeking to navigate the accelerating pace of AI innovation and identify sustainable competitive advantages. Understanding these dynamics offers a strategic edge in a market where rapid change can quickly render conventional wisdom obsolete.

The Dual-Edged Sword of Nvidia's Open-Weight Gambit

Nvidia's announcement of a $26 billion investment in open-weight AI models over five years is a seismic shift, positioning the hardware giant as a direct competitor to the very AI firms it supplies. This move is not merely about expanding software offerings; it's a strategic play to solidify its dominance. By fostering open-weight models, Nvidia can ensure its hardware is optimized for these emerging standards, creating a powerful synergy. This strategy could accelerate innovation across the board, democratizing access to advanced AI. However, it also raises concerns about an unfair advantage, as Nvidia can tailor its hardware to perform exceptionally well with its own software, potentially disadvantaging rivals like OpenAI and Google.

The downstream effect of this investment is multifaceted. On one hand, it pressures closed-source leaders like OpenAI and Anthropic to innovate faster and more cost-effectively. As the speaker notes, "As the technology shrinks... you have to think the same to be true in three, five, ten years when it comes to AI models and GPUs. As an example, you can probably have something that's GPT-5.4 level or Gemini 3.1 level running on an iPhone, on an older iPhone." This suggests a future where powerful AI becomes more accessible and runs locally, a trend Nvidia's open-weight push will likely accelerate. On the other hand, Nvidia profits regardless of whether companies use its hardware for proprietary or open-source models. This "winning in three ways" scenario--companies paying Nvidia to compete, open-source advancement pushing all players, and new consumer markets for local AI hardware--demonstrates a sophisticated understanding of market dynamics.

"Nvidia is actually not just squeezing it on both sides, they're actually winning in three ways, or they could be winning in three different ways. One, the companies like OpenAI and Anthropic are going to have to pay Nvidia more to compete with Nvidia... Number two, the open-source side, that's huge. To push that boundary, that pushes all other companies like Meta... Last but not least, you're going to have probably millions of new customers, mainly consumers, who are going to want to be running these models locally, and they're probably going to be buying specialized Nvidia hardware to do so."

Meta's AI Pivot: Efficiency Through Restructuring

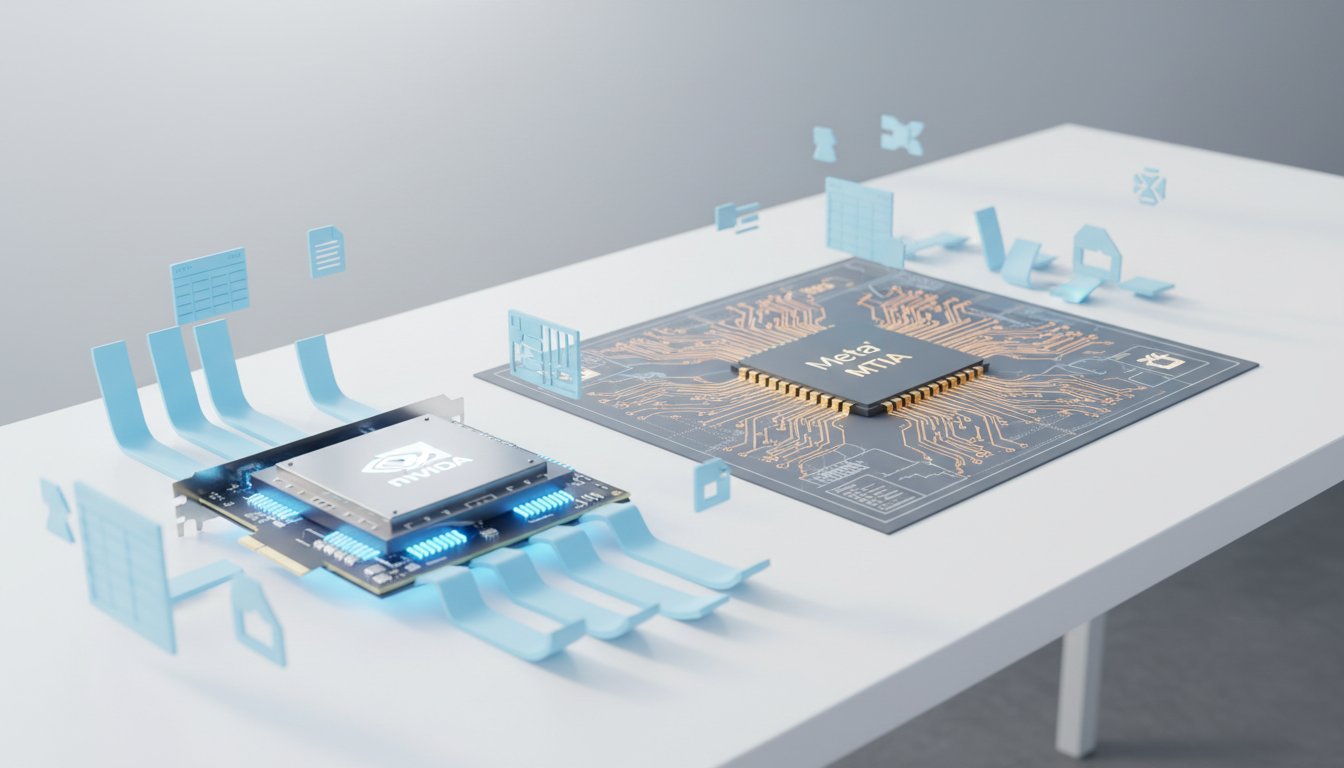

Meta's simultaneous development of its own AI chips (the MTIA family) and reports of significant workforce reductions paint a picture of a company aggressively streamlining operations to fuel its AI ambitions. The MTIA chips are designed to reduce reliance on third-party suppliers like Nvidia, a move mirrored by other tech giants like Google and Amazon. This vertical integration is a long-term play for cost savings and performance optimization, aiming to deliver "cost savings and performance competitive with the top commercial products."

The non-obvious consequence of this strategy, however, lies in the reported workforce cuts. While Meta invests billions in AI infrastructure, it is also reportedly considering cutting "20% or more of its workforce." This suggests a shift from a broad-based operational model to one heavily reliant on highly skilled AI talent and automated processes. The implication is that AI is not just an add-on feature but a fundamental driver of operational efficiency, capable of replacing roles previously held by large teams. This transition from "opex to capex, or thousands of people to AI factories and chips" points to a future where AI adoption directly reshapes workforce structure, demanding a recalibration of skills and roles. The reported delay of Meta's own large language models, such as Avocado, complicates the picture: the investment is massive, but the tangible output is still evolving, which creates potential internal friction and external skepticism.

The Rise of Localized AI and the "Personal Computer" Revival

Perplexity's launch of "Personal Computer," an AI-powered autonomous agent for Mac devices, signals a significant trend towards localized AI and a potential redefinition of the personal computer. Unlike cloud-dependent assistants, this agent operates persistently in the background, leveraging both local processing and Perplexity's hybrid online architecture. This approach addresses growing concerns about data privacy and security, offering a "more secure and sandboxed version of something like OpenClaw."

The immediate benefit is enhanced productivity through automation of complex tasks. However, the deeper implication is a shift in how we interact with computing. By running AI agents locally, users can potentially achieve greater autonomy and control over their data and workflows. This resonates with the "OpenClaw trend," suggesting a desire for more open, customizable, and locally controlled AI experiences. Because it integrates with existing productivity tools like Gmail, Slack, and Notion, this isn't just a niche experiment; it's an attempt to embed AI deeply into everyday workflows without requiring new hardware. This strategy, if successful, could fundamentally alter the personal computing landscape, making advanced automation accessible and potentially challenging the dominance of cloud-centric AI models.

"Unlike typical AI assistants that wait for user prompts, Personal Computer is one that operates persistently in the background on a local machine, but also using Perplexity's hybrid architecture online. So it can just carry out these tasks independently once it's given a goal."

AI in Government: A Policy Tightrope Walk

The US Senate's approval of generative AI chatbots for staff use, including with official Senate data, marks a critical policy development. While enabling staff to use tools like Microsoft Copilot, Google Gemini, and ChatGPT promises increased efficiency, it also introduces significant risks. The policy's two-tier risk assessment system and undisclosed approval processes raise transparency concerns.

The commentary highlights the potential for "work slop in the government" due to a lack of technological understanding among some senators. The average age of senators and historical examples of technological miscomprehension underscore the critical need for robust training.

"I hope the US government and the Senate takes training seriously because I'm just going to be honest here, I think a lot of people, if you don't follow government and if you take off your politics hat, senators are not exactly always the smartest people in the room. They're not... Many of them on the older side, let's just call it out, not really understanding technology."

The downstream effect of poorly implemented AI in government could be the propagation of misinformation and inefficient processes, potentially impacting legislation and public trust. This situation underscores the challenge of integrating cutting-edge technology into established, often slow-moving, bureaucratic structures, where the immediate payoff of efficiency must be carefully weighed against the long-term consequences of inadequate oversight and training.

Google's Gemini Integration: Deepening Workspace Utility

Google's integration of Gemini across its Workspace apps (Docs, Sheets, Slides, Drive) represents a significant step towards embedding AI directly into daily productivity workflows. The ability to prompt Gemini to draft documents, analyze data, and summarize information from various sources reduces manual effort and accelerates project initiation.

The key insight here is the shift from AI as a standalone tool to AI as an inherent part of the application. Gemini's presence in Docs allows for drafting, rewriting, and formatting, while in Sheets, it can generate checklists and track data. The AI overview in Drive and the ability to query files with Gemini further enhance information retrieval. This deep integration aims to make AI assistance seamless and contextual, reducing the friction of context-switching. The rollout, starting with beta for specific subscribers and expanding globally, suggests a deliberate strategy to refine the user experience before widespread adoption. The implication is that future productivity will be heavily AI-augmented, requiring users to adapt to a more interactive and intelligent software environment.

Key Action Items

Immediate Action (Next Quarter):

- Evaluate Nvidia's Open-Weight Strategy: For organizations reliant on AI hardware, analyze how Nvidia's $26B investment in open-weight models might impact future hardware procurement and software development strategies.

- Assess Local AI Viability: Explore Perplexity's "Personal Computer" and similar local AI agent technologies to understand their potential for enhancing data security and workflow automation within your organization.

- Review Google Workspace AI Features: For Google Workspace users, actively engage with the new Gemini integrations in Docs, Sheets, and Drive to identify immediate productivity gains.

Short-Term Investment (Next 6-12 Months):

- Develop AI Training Programs: If your organization uses AI tools (especially in government or regulated sectors), invest in comprehensive training to mitigate risks highlighted by the US Senate's AI policy.

- Monitor Meta's AI Infrastructure: Keep track of Meta's MTIA chip deployment and its impact on the AI hardware market, especially for companies considering large-scale AI deployments.

Long-Term Investment (12-18+ Months):

- Strategic AI Workforce Planning: Anticipate workforce shifts driven by AI efficiency, as exemplified by Meta's potential restructuring. Invest in upskilling and reskilling employees for AI-centric roles.

- Explore World Models: As Yann LeCun's AMI startup focuses on "world models," monitor advancements in AI that understand the physical world, as these could unlock new applications in robotics, autonomous systems, and complex simulations.

- Benchmark AI ROI: For companies investing in AI tools, implement robust tracking mechanisms (like those offered by Section) to measure actual business impact beyond basic task automation, ensuring a true return on investment.