Modernization Enables AI Readiness Through Re-architecture

The hidden cost of modernization: Why waiting for AI readiness means re-architecting now.

In this conversation, Shilpa Kolhar, SVP of Product and Engineering at MongoDB, unpacks the complex realities of application modernization, particularly in the race to become AI-ready. The core thesis isn't just about migrating from legacy systems; it's about a fundamental re-architecture driven by the need for speed and the integration of AI capabilities. This discussion reveals the non-obvious consequence that delaying modernization due to perceived complexity or cost actually creates a significant competitive disadvantage, as legacy systems become insurmountable barriers to leveraging AI. Anyone involved in enterprise software development, data architecture, or product strategy should read this to understand how a document-first approach, coupled with strategic modernization, can unlock agility and future-proof their technology stack against the accelerating demands of AI. It offers a pragmatic roadmap for navigating the technical debt of the past to build the AI-powered applications of tomorrow.

The Unseen Drag of Legacy Systems on AI Ambitions

The drive to modernize applications is often framed as a technical necessity or a cost-saving measure. However, Shilpa Kolhar highlights a more profound, emergent consequence: legacy systems are actively hindering an organization's ability to innovate with AI. This isn't merely about slow performance; it's about a fundamental architectural mismatch. Traditional relational databases, with their rigid schemas and separation of concerns, create friction for the dynamic, context-aware data needs of AI applications. The Application Modernization Platform (AMP) at MongoDB aims to address this by facilitating a shift to a document-first architecture, which inherently supports faster change and better aligns with how AI models process information. The implication is clear: organizations clinging to legacy architectures are not just missing out on current AI trends; they are walling themselves off from their own future growth.

"So now, if your data and your business logic is stuck in the legacy system, then you are not able to leverage the new agentic AI-enabled features. You cannot build that easily on top of these stacks."

This statement underscores the critical juncture businesses face. The "cost" of modernization, often perceived as high and time-consuming, pales in comparison to the "cost" of not modernizing when AI capabilities become a competitive imperative. Kolhar points out that while cost has historically been a driver for modernization, the primary driver today is the enablement of AI. As a result, modernization projects that traditionally took multiple years are now being compressed into a matter of weeks to months, driven by the urgency to deploy AI-powered features. MongoDB's native JSON document model is presented as a key enabler of this speed, as it aligns the data model at the database layer with the application layer, reducing technical debt and facilitating rapid iteration, a crucial advantage in the fast-evolving AI landscape.
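To make the alignment between application objects and stored documents more concrete, here is a minimal Python sketch. The `Order` and `LineItem` classes and their fields are hypothetical, invented purely for illustration and not taken from the conversation. The only point is that a nested application object can serialize to one JSON-like document without a separate mapping onto several tables.

```python
from dataclasses import dataclass, field, asdict
from typing import Any, Dict, List


@dataclass
class LineItem:
    # Hypothetical fields for illustration only.
    sku: str
    quantity: int


@dataclass
class Order:
    order_id: str
    customer: str
    items: List[LineItem] = field(default_factory=list)


def to_document(order: Order) -> Dict[str, Any]:
    """Convert the application object into a nested, JSON-like document."""
    # asdict() recurses into nested dataclasses and lists of dataclasses.
    return asdict(order)


order = Order("o-1", "Ada", [LineItem("sku-42", 2)])
document = to_document(order)
```

The resulting `document` is a plain dict, so a driver could store it as a single document (for example with pymongo's `insert_one`, assuming a pymongo collection is available).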

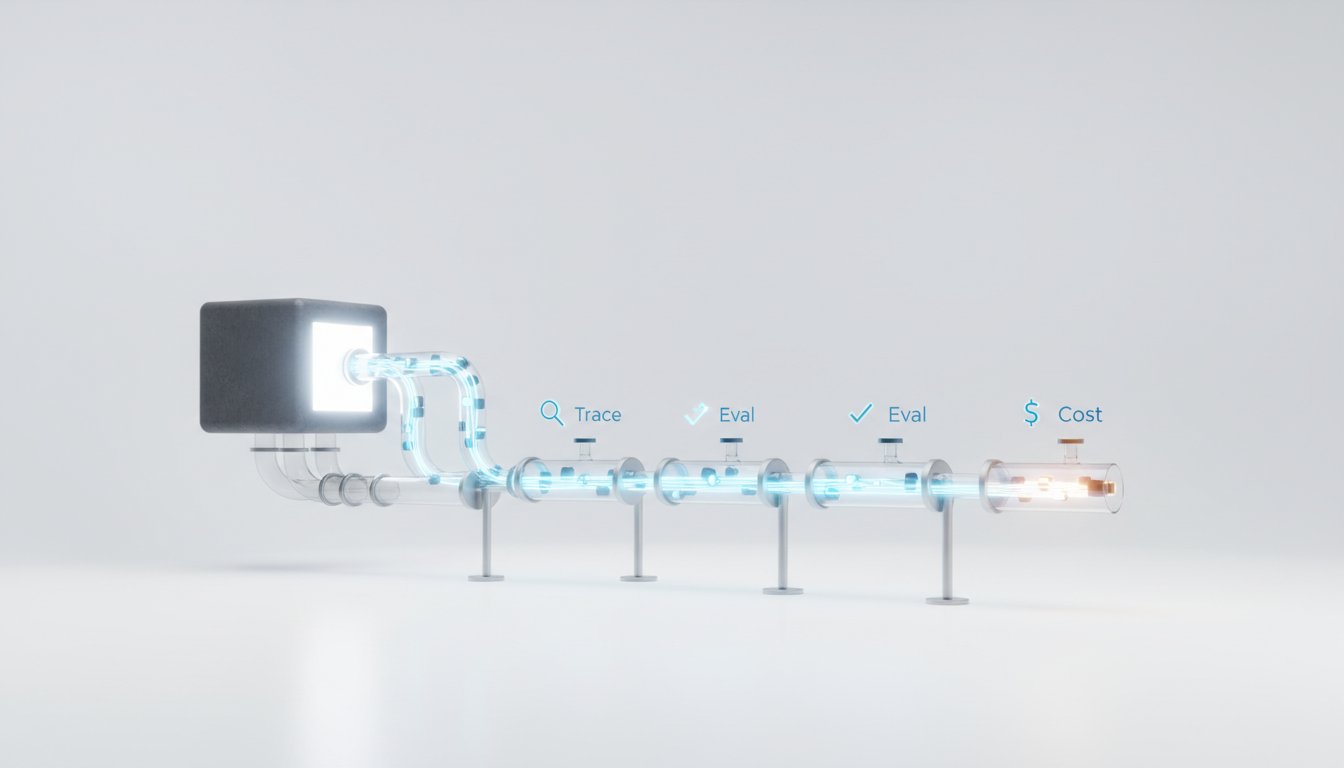

The Unified Data Layer: Eliminating AI Drift and Latency

A significant challenge in building AI applications is managing the data pipeline, especially when operational data, search indexing, and vector embeddings are involved. Traditional approaches often involve separate systems for each, leading to data drift and latency. MongoDB's approach, as outlined by Kolhar, is to unify these components within a single platform. This means operational data, search capabilities, and vector embeddings--critical for AI context and memory--reside together. This unification is crucial for real-time AI applications where the accuracy and relevance of information depend on keeping data, its index, and its embeddings in sync.

"So when there is an update to your dataset, to your row or a document, then we recently launched auto-embedding where at the right time itself, your embeddings get updated. So there is no, and your vector search, which leverages it, will be a lot more accurate, relevant, and real-time in sync with the data."

This eliminates the inherent "drift" that occurs when data is copied and transformed between disparate systems. For AI applications, especially those employing Retrieval Augmented Generation (RAG), this real-time synchronization is paramount. The ability to update embeddings automatically as operational data changes ensures that AI models are always working with the most current context, leading to more accurate and relevant responses. This unified approach also simplifies security and access control, as data duplication is minimized. The conversation also mentions that Retool has recognized MongoDB Atlas Vector Search for its adoption and user satisfaction, which is offered as further evidence that this integrated strategy meets the demands of modern AI and RAG applications.
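As a rough illustration of what querying a unified layer can look like, the sketch below builds an aggregation pipeline that uses the `$vectorSearch` stage to retrieve context for a RAG prompt. The index name, the `embedding` field, and the projected fields are assumptions made for this example. It also does not show the auto-embedding feature itself; it simply assumes each document already carries an up-to-date embedding next to its operational fields.

```python
from typing import Any, Dict, List


def build_vector_search_pipeline(
    query_vector: List[float],
    limit: int = 5,
) -> List[Dict[str, Any]]:
    """Build a pipeline that returns the documents nearest to query_vector."""
    return [
        {
            "$vectorSearch": {
                "index": "product_embedding_index",  # hypothetical index name
                "path": "embedding",                 # hypothetical embedding field
                "queryVector": query_vector,
                # Consider more candidates than we return, to improve recall.
                "numCandidates": limit * 20,
                "limit": limit,
            }
        },
        {
            # Return operational fields and the similarity score together,
            # since both live in the same document.
            "$project": {
                "_id": 0,
                "name": 1,
                "price": 1,
                "score": {"$meta": "vectorSearchScore"},
            }
        },
    ]


pipeline = build_vector_search_pipeline([0.1, 0.2, 0.3], limit=3)
```

With a driver such as pymongo, a pipeline like this would typically be passed to `collection.aggregate(pipeline)`. Because the operational fields and the embedding sit in one document, no second lookup against a separate system is needed to assemble the context.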

Taming Schema Drift: Pragmatism Over Purity

The flexibility of a schema-less or schema-on-read approach, inherent in document databases like MongoDB, is a double-edged sword. While it accelerates initial development and iteration, it can lead to schema drift over time, creating compatibility issues between application versions and historical data. Kolhar addresses this not by advocating for rigid schema enforcement, but by emphasizing pragmatic solutions. MongoDB's JSON schema validation allows developers to define and enforce specific constraints at the database level, acting as a gatekeeper to prevent incompatible data from entering the system.
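A database-level gatekeeper of this kind might be sketched as follows. The collection name and every field in the schema are hypothetical; the structure uses MongoDB's `$jsonSchema` validator together with the `collMod` command and its `validationLevel` and `validationAction` options. Treat it as one possible setup, not the configuration discussed in the conversation.

```python
from typing import Any, Dict

# Hypothetical schema: field names are illustrative only.
ORDER_VALIDATOR: Dict[str, Any] = {
    "$jsonSchema": {
        "bsonType": "object",
        "required": ["order_id", "customer", "schema_version"],
        "properties": {
            "order_id": {"bsonType": "string"},
            "customer": {"bsonType": "string"},
            "schema_version": {"bsonType": "int", "minimum": 1},
            "items": {
                "bsonType": "array",
                "items": {
                    "bsonType": "object",
                    "required": ["sku", "quantity"],
                    "properties": {
                        "sku": {"bsonType": "string"},
                        "quantity": {"bsonType": "int", "minimum": 1},
                    },
                },
            },
        },
    }
}


def build_collmod_command(collection: str) -> Dict[str, Any]:
    """Build a collMod command that attaches the validator to a collection."""
    return {
        "collMod": collection,  # the command name must be the first key
        "validator": ORDER_VALIDATOR,
        # "moderate" validates inserts and updates to already-valid documents,
        # leaving older non-conforming documents untouched.
        "validationLevel": "moderate",
        # "error" rejects invalid writes; "warn" would only log them.
        "validationAction": "error",
    }


command = build_collmod_command("orders")
```

Assuming a pymongo `Database` object named `db`, the command could be applied with `db.command(command)`. Choosing `"moderate"` over `"strict"` is a design choice that suits gradual migration, since existing documents that predate the schema are not blocked from being read or left in place.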

Furthermore, the concept of schema versioning at the application layer is presented as a powerful pattern. Instead of forcing all data to conform to a single, constantly evolving schema, applications can be designed to be schema-aware, gracefully handling different versions of data. This provides a crucial buffer, allowing for gradual data migration and updates without disrupting ongoing operations. Aggregation pipelines, specifically the $addFields stage (for example, combined with $ifNull to supply defaults), can also be used at the read layer to provide a consistent view of the data, even if certain fields are missing in older documents. This layered approach of database-level validation, application-level versioning, and flexible data retrieval offers a robust strategy for managing schema evolution without sacrificing the agility that makes document databases attractive.
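The two read-side patterns described above can be sketched together. Everything named here is an assumption for illustration: the `schema_version` field, the hypothetical v1-to-v2 change that splits `name` into two fields, and the `loyalty_tier` default. The first part upgrades documents in application code; the second shows an `$addFields` stage that uses `$ifNull` to fill in fields that older documents lack.

```python
from typing import Any, Dict, List


def upgrade_document(doc: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of doc in the current (v2) shape, whatever version was stored."""
    upgraded = dict(doc)  # shallow copy so the stored document is not mutated
    version = upgraded.get("schema_version", 1)  # unversioned documents count as v1
    if version < 2:
        # Hypothetical v1 -> v2 change: one `name` string became two fields.
        first, _, last = upgraded.pop("name", "").partition(" ")
        upgraded["first_name"] = first
        upgraded["last_name"] = last
        upgraded["schema_version"] = 2
    return upgraded


# Read-layer alternative: let the database supply defaults for missing fields.
# $ifNull returns the fallback when the field is null or absent.
DEFAULTS_PIPELINE: List[Dict[str, Any]] = [
    {
        "$addFields": {
            "schema_version": {"$ifNull": ["$schema_version", 1]},
            "loyalty_tier": {"$ifNull": ["$loyalty_tier", "none"]},
        }
    }
]

old = {"_id": 1, "name": "Ada Lovelace"}
new = upgrade_document(old)
```

Upgrading on read keeps old documents valid in storage while the rest of the application only ever sees the current shape. A background job could later write the upgraded documents back, at which point the version branch becomes dead code that can be removed.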

The Long Game: Competitive Advantage Through Delayed Payoffs

AMP and its underlying principles highlight a recurring theme: competitive advantage is often built on embracing discomfort now for future gains. Modernization, particularly the shift to a document-first architecture, requires upfront effort and a willingness to re-architect. This is precisely why it creates a durable advantage. Shilpa Kolhar notes that the speed and agility gained from this modernization are not just about keeping pace but about leading. The ability to rapidly iterate on AI features, integrate new data sources, and adapt to market changes becomes a significant differentiator.

"So that way, natively, MongoDB supports change much faster with lesser technical debt, and that helps not just through the modernization process, but also once you modernize, you are better equipped with change. And in this AI world today, we know that the one who can change fast is the one who will succeed."

This highlights that the payoff for modernization is not immediate but accrues over time. Teams that invest in re-architecting now, even if it involves immediate pain or complexity, are positioning themselves to capitalize on future opportunities far more effectively than those who delay. This is where conventional wisdom often fails; it prioritizes immediate problem-solving over long-term strategic advantage. The AMP approach, by enabling incremental modernization and reducing technical debt, ensures that this delayed payoff is achievable and sustainable, creating a compounding advantage that competitors who stick to legacy systems will struggle to overcome.

Key Action Items

- Immediate Action (Next Quarter):
  - Audit current application architectures for legacy dependencies that hinder AI integration.
  - Evaluate the data model's flexibility for supporting dynamic AI data requirements (embeddings, context memory).
  - Begin exploring JSON schema validation patterns within existing MongoDB deployments to tame drift.

- Short-Term Investment (Next 3-6 Months):
  - Pilot a small, non-critical application modernization project using MongoDB AMP principles to gain hands-on experience.
  - Investigate the integration of MongoDB Atlas Vector Search for existing or new AI initiatives to unify data and embeddings.
  - Develop internal guidelines for schema versioning at the application layer to manage data evolution gracefully.

- Long-Term Investment (6-18 Months):
  - Develop a phased roadmap for modernizing core legacy applications, prioritizing those most critical for AI readiness.
  - Establish a Center of Excellence for document-first architecture and AI data management best practices.
  - Train development teams on leveraging MongoDB's unified data platform for both operational and AI workloads, focusing on reducing technical debt.
  - Embrace incremental modernization units, allowing for continuous delivery of value while managing risk and complexity.