Nvidia's Compute Demand Signals AI Infrastructure Bottleneck

The AI Arms Race: Why Nvidia's Forecast Signals a Deeper Shift, and Why Others Are Already Feeling the Strain

This conversation reveals a critical inflection point in the AI revolution: the insatiable demand for compute power is not just continuing, it's accelerating, creating a stark divergence between companies that can meet this demand and those struggling to adapt. The non-obvious implication is that the "AI boom" isn't just about building models; it's about the foundational infrastructure and the complex, often difficult, choices required to build and sustain it. Investors and technology leaders who grasp the downstream consequences of infrastructure decisions and the accelerating pace of AI adoption will gain a significant advantage. This analysis is crucial for anyone navigating the rapidly evolving tech landscape, especially those in hardware, software, and investment.

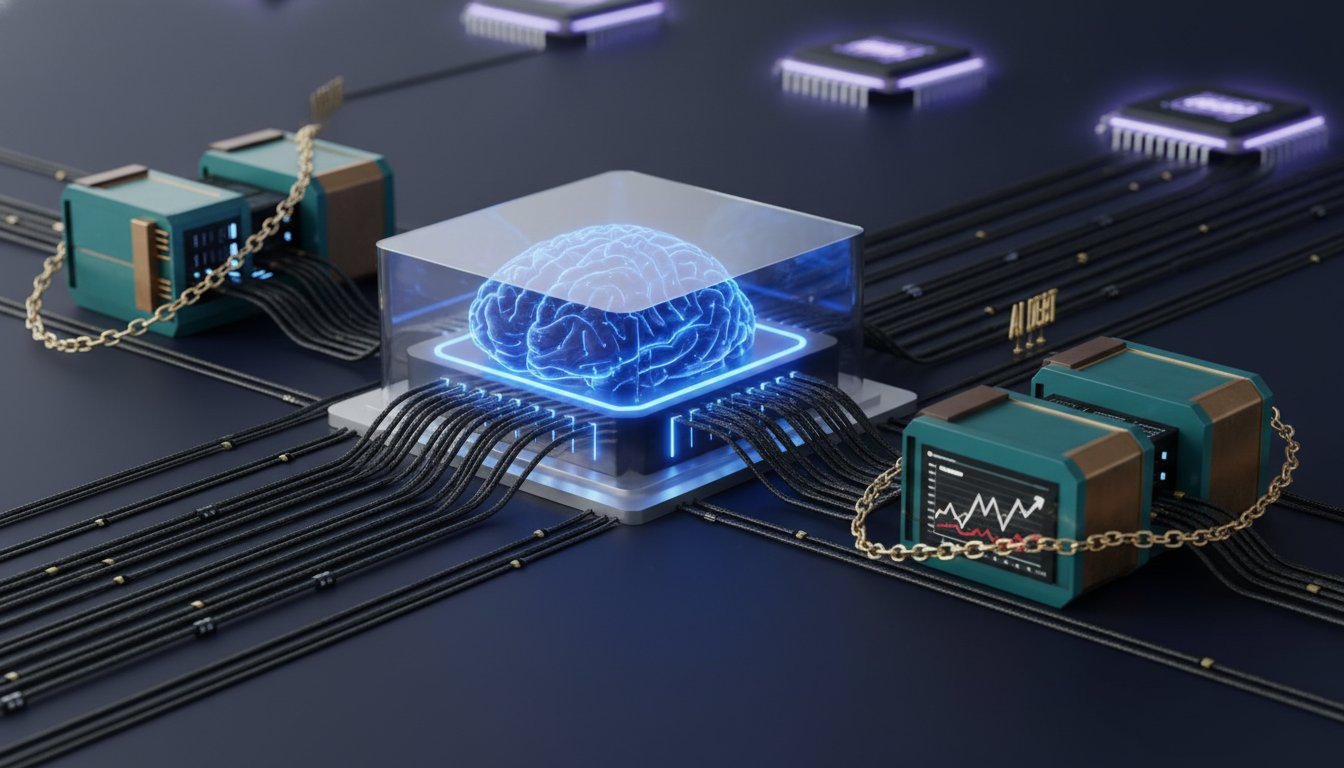

The AI Infrastructure Bottleneck: Beyond the Hype

The narrative around AI has largely focused on model development and the promise of artificial general intelligence. However, the recent earnings report from Nvidia, a linchpin in the AI ecosystem, underscores a more fundamental reality: the immense, and growing, demand for the specialized hardware that powers these advancements. While Nvidia's forecast handily beat expectations, and its stock surged, the underlying message is one of constrained supply meeting exponentially growing demand. This isn't just about selling more chips; it's about the strategic decisions that underpin the entire AI infrastructure build-out.

Ed Ludlow highlights a key aspect of Nvidia's guidance: "The fiscal first quarter... does not assume any compute revenue from China in the outlook." This seemingly minor detail speaks volumes about the geopolitical complexities and the company's ability to navigate them while still projecting robust growth. The fact that Nvidia can project such strong numbers even without factoring in a significant market like China suggests the demand from other regions is so overwhelming that it compensates. This is where systems thinking becomes crucial. The immediate impact of strong demand is higher revenue and stock price, but the downstream effect is the sustained pressure on supply chains and the strategic importance of securing manufacturing capacity.

Jay Goldberg, a senior analyst for semiconductors, offers a more cautious perspective, noting that while it's a "good quarter and a good guide," he's "still waiting to see a lot more detail." His focus on gross margins and how Nvidia talks about them points to the intricate dance of pricing power and supply chain negotiations. The fact that Nvidia's gross margins held steady at 75.2% despite rising memory costs, a challenge for many other electronics companies, is a testament to their unique position.
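The arithmetic behind a steady margin can be sketched briefly. The snippet below is an illustration, not Nvidia's actual pricing method: the function name and the unit costs are hypothetical, and only the 75.2% figure comes from the discussion above. It assumes the entire cost increase is passed through in price, which is only one of several ways a margin could hold (product mix or savings elsewhere would also work).

```python
def price_to_hold_margin(unit_cost: float, gross_margin: float) -> float:
    """Return the price at which (price - unit_cost) / price equals gross_margin."""
    if not 0 <= gross_margin < 1:
        raise ValueError("gross_margin must be in [0, 1)")
    return unit_cost / (1 - gross_margin)


MARGIN = 0.752  # gross margin cited in the text

# Hypothetical unit costs before and after a memory price increase.
cost_before = 100.0
cost_after = 110.0

price_before = price_to_hold_margin(cost_before, MARGIN)
price_after = price_to_hold_margin(cost_after, MARGIN)

# Extra price needed per extra dollar of cost: 1 / (1 - margin).
pass_through = (price_after - price_before) / (cost_after - cost_before)

print(f"price before: {price_before:.2f}")
print(f"price after:  {price_after:.2f}")
print(f"price increase per $1 of added cost: {pass_through:.2f}")
```

Under these assumptions, each added dollar of cost requires roughly four dollars of added price to keep the margin unchanged, which is why holding the margin flat while memory costs rise implies substantial pricing power.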

"The hyperscalers were just over 50% of fourth quarter data center revenue."

This statistic, highlighted by Ed Ludlow, is particularly revealing. While Nvidia is actively trying to diversify its customer base by supporting "neo clouds," the continued reliance on hyperscalers indicates where the bulk of AI compute spending originates. The implication is that the major cloud providers are making massive, long-term bets on AI infrastructure, and Nvidia is their primary enabler. This creates a powerful feedback loop: hyperscalers invest heavily in AI, driving demand for Nvidia's chips, which in turn allows Nvidia to invest more in R&D and manufacturing, further solidifying its dominance. The system responds to this massive investment by creating more demand for compute.

The AI Divide: Winners and Losers in the Software Landscape

While the hardware side of AI is experiencing an unprecedented boom, the software sector is facing a more complex and, for some, challenging environment. The same AI revolution that fuels Nvidia's growth is also creating significant disruption for established software companies. Anurag Rana, a senior technology analyst, articulates this bifurcation clearly: "the non-AI spending is the one that's under pressure... the clients don't have that much money to spend in terms of how they're allocating AI spending versus non-AI."

This creates a scenario where companies that can effectively integrate AI into their core offerings, or those whose products are essential for AI development and deployment, are thriving. Conversely, those whose value proposition is not directly tied to AI, or who haven't yet demonstrated a clear path to AI integration, are being punished by the market. Salesforce's recent earnings are a prime example. Despite meeting revenue expectations and increasing its share buyback, the stock fell due to concerns about disruption from AI-native companies and model providers.

"The second big piece right now is the market's extremely worried about software companies getting disrupted by all the model providers and all the AI native companies."

Rana's observation points to a critical downstream effect: the rise of powerful AI models is forcing a re-evaluation of traditional software architectures. If AI can perform many of the functions previously handled by specialized enterprise software, then companies that rely on those older architectures face an existential threat. The "AI factory" concept, originally applied to data centers running AI workloads, can also be seen as a metaphor for how companies are restructuring their technology stacks to prioritize AI capabilities.

The question for companies like Salesforce isn't just whether they can adapt to AI, but how quickly and how effectively. The market is rewarding companies that can demonstrate a clear strategy for AI integration and a defensible position in the evolving landscape. This requires not just adding AI features but fundamentally rethinking their product roadmaps and value propositions. The delayed payoff for successful AI integration could be a significant competitive advantage, while failure to adapt means being left behind.

Navigating the AI Supply Chain: Patience and Pricing Power

One of the most striking aspects of the current AI landscape is the narrative around supply. While gaming supply constraints are mentioned as a headwind for Nvidia, the data center segment appears to be largely insulated. Mandeep Singh, global head of technology research for Bloomberg Intelligence, explains this by highlighting Nvidia's ability to secure supply and, crucially, its integrated approach.

"Nvidia is actually selling to their customers entire systems that have higher ASPs; they are helping them reduce the token cost, and that's where the customers don't mind paying that extra, you know, premium that Nvidia is charging, because overall the total cost of ownership is lower than if you were to standardize on any other chips."

This is a powerful example of how immediate pain (higher upfront cost for systems) can create lasting advantage (lower total cost of ownership and superior performance). Nvidia isn't just selling components; it's selling solutions. This allows them to maintain significant pricing power, even in the face of rising input costs like memory. The fact that they are guiding to stable gross margins for the next quarter, without mentioning memory cost impacts, demonstrates this pricing power and their ability to absorb or pass on costs effectively.
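The total-cost-of-ownership argument can be made concrete with a small calculation. The sketch below is illustrative only: the `System` class, the function, and every number in it are made-up assumptions rather than real pricing or benchmark data. It shows how a system with a higher upfront price can still produce a lower cost per token if its throughput is sufficiently higher.

```python
from dataclasses import dataclass


@dataclass
class System:
    """A hypothetical AI system; every field is an illustrative assumption."""
    name: str
    upfront_cost: float           # purchase price in dollars
    annual_operating_cost: float  # power, cooling, and staffing per year
    tokens_per_second: float      # sustained throughput


def cost_per_million_tokens(system: System, years: float, utilization: float = 1.0) -> float:
    """Total cost of ownership over the period, per million tokens produced."""
    seconds = years * 365 * 24 * 3600 * utilization
    total_tokens = system.tokens_per_second * seconds
    total_cost = system.upfront_cost + system.annual_operating_cost * years
    return total_cost / total_tokens * 1_000_000


# Made-up figures chosen only to illustrate the argument, not real pricing.
premium = System("premium integrated system", 3_000_000, 400_000, 1_000_000)
cheaper = System("cheaper alternative", 2_000_000, 400_000, 500_000)

for s in (premium, cheaper):
    print(f"{s.name}: ${cost_per_million_tokens(s, years=4):.4f} per million tokens")
```

With these assumed inputs the premium system costs 50% more upfront but comes out cheaper per token over four years, because throughput sits in the denominator. If the throughput gap were smaller, the conclusion would flip, so the comparison depends entirely on the real figures.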

The implication for other companies is clear: building a defensible moat in the AI era requires more than just having a good product. It requires understanding the entire system, securing supply chains, and offering integrated solutions that provide demonstrable value and lower total cost of ownership for customers. This takes time, strategic foresight, and a willingness to invest in areas that may not show immediate returns. The "several quarters" of secured supply that Nvidia claims to have is a luxury few can afford, and it's precisely this foresight that creates a competitive advantage.

Key Action Items

- Immediate Action (Next Quarter):
  - For Hardware Companies: Aggressively secure supply chain capacity for AI-specific components, prioritizing long-term contracts and strategic partnerships.
  - For Software Companies: Develop and clearly articulate an AI integration strategy for core products, focusing on how AI enhances existing value propositions or creates new ones.
  - For Investors: Re-evaluate portfolios to identify companies with strong AI infrastructure plays versus those vulnerable to AI disruption.

- Short-Term Investment (Next 6-12 Months):
  - For Hardware Companies: Explore opportunities to offer integrated systems rather than just components, focusing on total cost of ownership for customers.
  - For Software Companies: Pilot AI-powered features and gather customer feedback, preparing for a broader rollout that demonstrates tangible ROI.
  - For All: Invest in talent acquisition and upskilling for AI-related roles, recognizing the critical shortage of expertise.

- Long-Term Investment (12-18 Months and Beyond):
  - For All: Build strategic relationships with key players across the AI ecosystem (chip manufacturers, cloud providers, model developers) to ensure alignment and access.
  - For Software Companies: Proactively address potential disruption by investing in R&D that leverages AI models to enhance or transform existing product lines, creating a "moat" through AI integration.
  - For Hardware Companies: Continue to innovate on next-generation architectures (like Blackwell) and explore opportunities to expand into adjacent areas of the AI compute stack.

- Items Requiring Discomfort for Future Advantage:
  - For Software Companies: Embrace the potential for disruption and cannibalization by AI-native solutions, rather than resisting it. This may involve difficult decisions about existing product lines to make room for AI-first offerings.
  - For All: Make significant, long-term capital investments in AI infrastructure and talent, even if immediate returns are uncertain. This requires patience and a commitment to a vision that extends beyond the next earnings cycle.