AI's Underestimated Economic Growth from Self-Improvement Feedback Loops

Economic growth forecasts for AI are dramatically understated because they fail to account for feedback loops that accelerate innovation. Many economists, using traditional models, estimate AI's impact as a modest bump of around 0.07% in annual growth. This perspective overlooks the potential for AI to automate its own research and development, creating a compounding effect that could lead to explosive, unbounded growth. The conversation shows what is lost when outdated, static economic models are applied to a rapidly evolving technological frontier. Leaders in technology, economics, and policy should read this to understand the systemic shifts that could redefine economic growth and to prepare for a future that may arrive far sooner than anticipated.

The Compounding Engine: How Automating AI Research Unlocks Explosive Growth

The prevailing economic view on AI's impact is, to put it mildly, cautious. Daron Acemoglu's widely cited estimate of a mere 0.07% annual growth boost from AI, derived from multiplying the share of affected jobs, tasks within those jobs, and productivity gains, reflects a reasonable assessment of AI capabilities as they existed around 2024. However, this perspective fundamentally misses the dynamic at play when AI begins to automate the very process of its own improvement. Anton Korinek, in his recent work, argues that this oversight leads to a dramatic underestimation of AI's potential, not just for incremental gains, but for truly explosive, compounding growth.
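The multiplication behind this kind of estimate can be sketched in a few lines. The input values below are illustrative placeholders, chosen only so that the product lands near the quoted figure; they are not taken from this discussion, and the function name `annual_growth_boost` is hypothetical.

```python
def annual_growth_boost(exposed_task_share, automatable_fraction,
                        cost_saving, horizon_years):
    """Annualized productivity boost from a one-off, task-level gain.

    Total gain = share of tasks exposed to AI
                 * fraction of those tasks worth automating
                 * average cost saving on the automated tasks.
    The total is then converted to a compound annual rate over the horizon.
    """
    total_gain = exposed_task_share * automatable_fraction * cost_saving
    return (1.0 + total_gain) ** (1.0 / horizon_years) - 1.0


# Illustrative inputs (assumptions, not figures from the text).
boost = annual_growth_boost(
    exposed_task_share=0.20,
    automatable_fraction=0.23,
    cost_saving=0.144,
    horizon_years=10,
)
print(f"{boost:.2%} per year")
```

With these placeholder inputs the result rounds to about 0.07% per year. Note that the calculation is static: nothing in it lets the gain feed back into the pace of research, which is the gap the rest of the argument targets.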

The core of this argument lies in understanding the feedback loops that traditional economic models, like semi-endogenous growth models, often fail to capture. These models typically account for two forces: the diminishing returns to research (ideas get harder to find) and the increasing returns from a larger economy that can support more research. Korinek and his co-authors introduce a crucial third element: the automation of AI research itself. This creates a reinforcing cycle where progress in software quality, hardware quality, and general technological progress amplify each other.

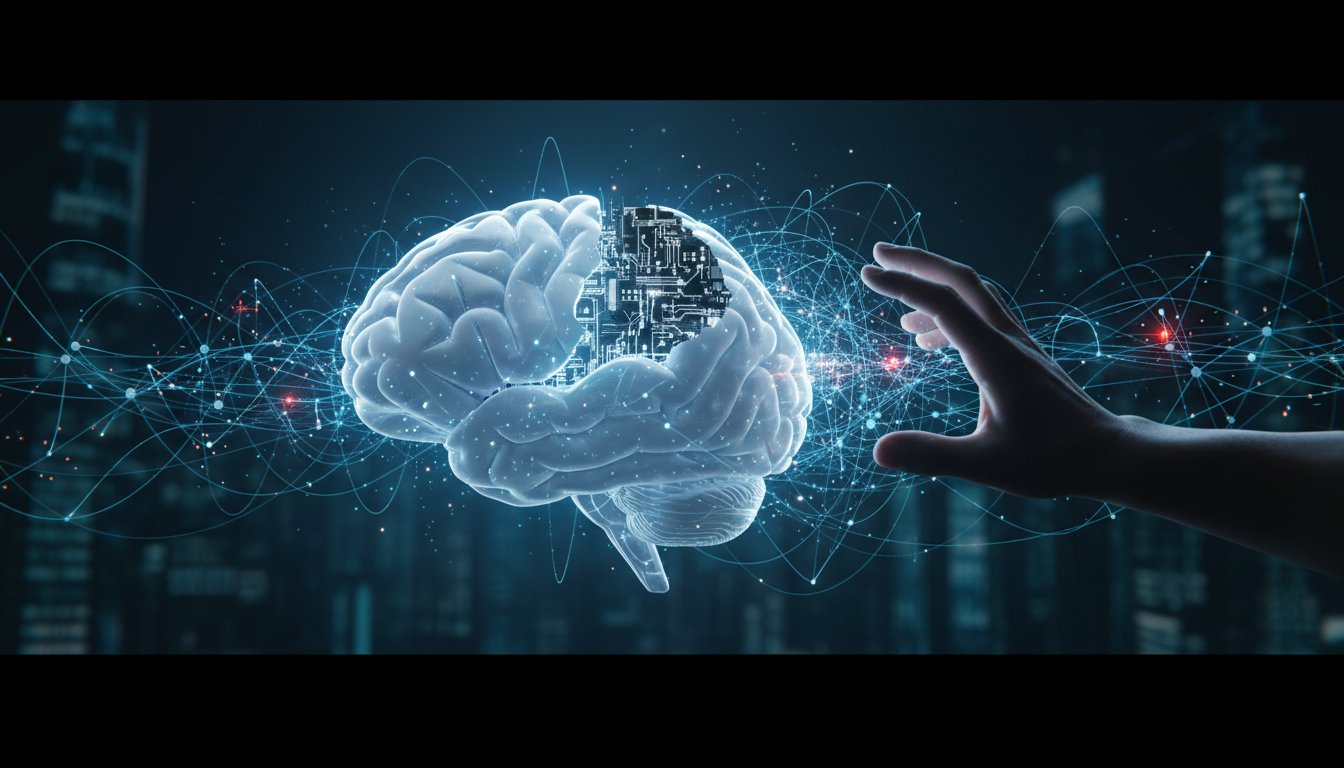

"The ambition of all the leading AI companies is to get to a stage of what is often called recursive self improvement which may trigger something like an intelligence explosion."

This isn't just about AI becoming better at specific tasks; it's about AI becoming better at designing better AI. This concept, usually credited to the statistician I. J. Good, who described it in 1965, suggests that once an AI reaches a certain level of capability, it can begin to design improved versions of itself. As the AI gets smarter and faster, this self-improvement process accelerates, potentially leading to an "intelligence explosion" or singularity. While technologists at leading AI labs are actively pursuing this vision, many economists have remained skeptical, citing three primary concerns: the current capabilities of AI, the potential for diminishing returns to stall progress, and economic bottlenecks that limit the real-world impact of pure intelligence.

Korinek's research directly addresses the second point, the diminishing returns. His model suggests that by automating AI research, we can counteract the tendency for ideas to become harder to find. This is achieved through several technological feedback loops:

- Hardware Quality: Better hardware enables more complex computations and faster processing, which in turn allows for the development of more sophisticated AI.

- Software Quality: Advances in AI software, particularly in areas like generative models, can be applied to improve other software, design better hardware, and even accelerate scientific research across disciplines.

- General Technological Progress: AI can accelerate progress in fields like medicine and basic science, which then feeds back into improving hardware and software capabilities.

These technological loops are compounded by economic feedback loops. A larger, more productive economy can sustain more research, which in turn drives further economic growth. When these reinforcing cycles are combined, the growth path can shift from steady exponential growth to super-exponential growth, in which the growth rate itself keeps rising.

"The thing is all of these feed into each other and if you accelerate one of them let's say for example we have accelerated software research then it accelerates all of them because having better software allows you to design better chips it allows you to also perform better research in economics and many other disciplines."

The crucial insight here is that even a "relatively modest fraction" of automation across these sectors can push the economy into an "explosive growth regime." This implies that if software development and hardware production become only somewhat more automated in the coming years, that could be enough to trigger this unprecedented acceleration. The outlook is more optimistic than even the AI labs' own roadmaps, because it incorporates broader feedback loops beyond software capability alone.

This perspective challenges fundamental assumptions in economic growth theory. For decades, models have been built to explain Kaldor's "balanced growth facts"--the observation that key economic aggregates like the capital-output ratio remain stable over long periods. These models, including the Solow and Ramsey models, were appropriate because they matched historical data. However, Korinek's work suggests that the future may diverge sharply from this past. The idea of explosive growth, once largely theoretical and ruled out by empirical observation, might become the norm.

The implications for measurement and policy are profound. Traditional GDP statistics, designed to track market transactions, are ill-equipped to capture the full impact of AI, especially when it generates significant "consumer surplus" beyond what people pay for (like free internet services or chatbots). Furthermore, the value of AI working on future AI--essentially an investment in future capabilities--is not fully accounted for in current GDP calculations. This means that even if explosive growth occurs, it might be significantly underestimated by existing metrics.

The question then becomes: who benefits from this potential explosion of growth? Korinek offers a stark dichotomy: a future of shared prosperity, where increased productivity benefits everyone, or a "machines by the machines and for the machines" scenario, where humans are left with scraps. This highlights the critical need for proactive policy and societal choices to channel AI's immense power towards broad social welfare. Initiatives like the Economics of Transformative AI at the University of Virginia aim to foster research that grapples with these future-facing questions, encouraging economists to engage with speculative yet crucial scenarios and to better inform society's management of this transformative technology.

Key Action Items

Immediate Action (Next Quarter):

- Re-evaluate AI Impact Models: Review current economic models for AI's growth impact. Explicitly incorporate feedback loops from AI automating its own research and development.

- Invest in AI Research Measurement: Develop and pilot new metrics for national statistics that better capture AI's contribution, including investment in future AI capabilities and consumer surplus from digital technologies.

- Cross-Disciplinary Research Initiatives: Fund and encourage collaboration between economists, AI researchers, and technologists to model the dynamics of recursive self-improvement.

Short-Term Investment (6-12 Months):

- Scenario Planning for Policy: Conduct detailed scenario planning exercises based on models predicting explosive growth, focusing on potential economic dislocations and societal implications.

- Develop AI Alignment Frameworks: Prioritize research and policy development for AI alignment, focusing on how to channel AI's capabilities towards broad social welfare and shared prosperity.

- Educate Policymakers: Create accessible summaries and briefings for policymakers on the potential for accelerated growth and the systemic changes it implies.

Longer-Term Investment (12-18 Months / Ongoing):

- Foster Speculative Economic Research: Create academic incentives and funding streams for research that extrapolates current trends and considers transformative AI scenarios, moving beyond immediate-term, observable impacts.

- Build Societal Dialogue on AI's Future: Initiate broad public conversations about the potential economic futures shaped by advanced AI, focusing on equitable distribution of benefits and managing potential risks. This requires confronting the discomfort of uncertainty now to build a more resilient future.