AI Reshapes Software Testing: Embracing Non-Determinism and New Value

The rapid evolution of AI in software development, particularly with the advent of the Model Context Protocol (MCP) and agentic workflows, is fundamentally reshaping how we build and test applications. While the immediate benefits of increased velocity and code generation are clear, this conversation reveals a deeper, often overlooked consequence: traditional testing paradigms are eroding, and new, complex challenges in ensuring software reliability are emerging. The non-deterministic nature of Large Language Models (LLMs) and AI agents introduces a level of unpredictability that leaves many established testing strategies insufficient on their own. This analysis is aimed at engineering leaders, QA professionals, and developers who need to navigate this shift, understand the hidden costs of AI-driven development, and identify the differentiators that will define success in the next generation of software. Organizations that understand these dynamics can adapt their strategies early and build robust, trustworthy AI-powered applications.

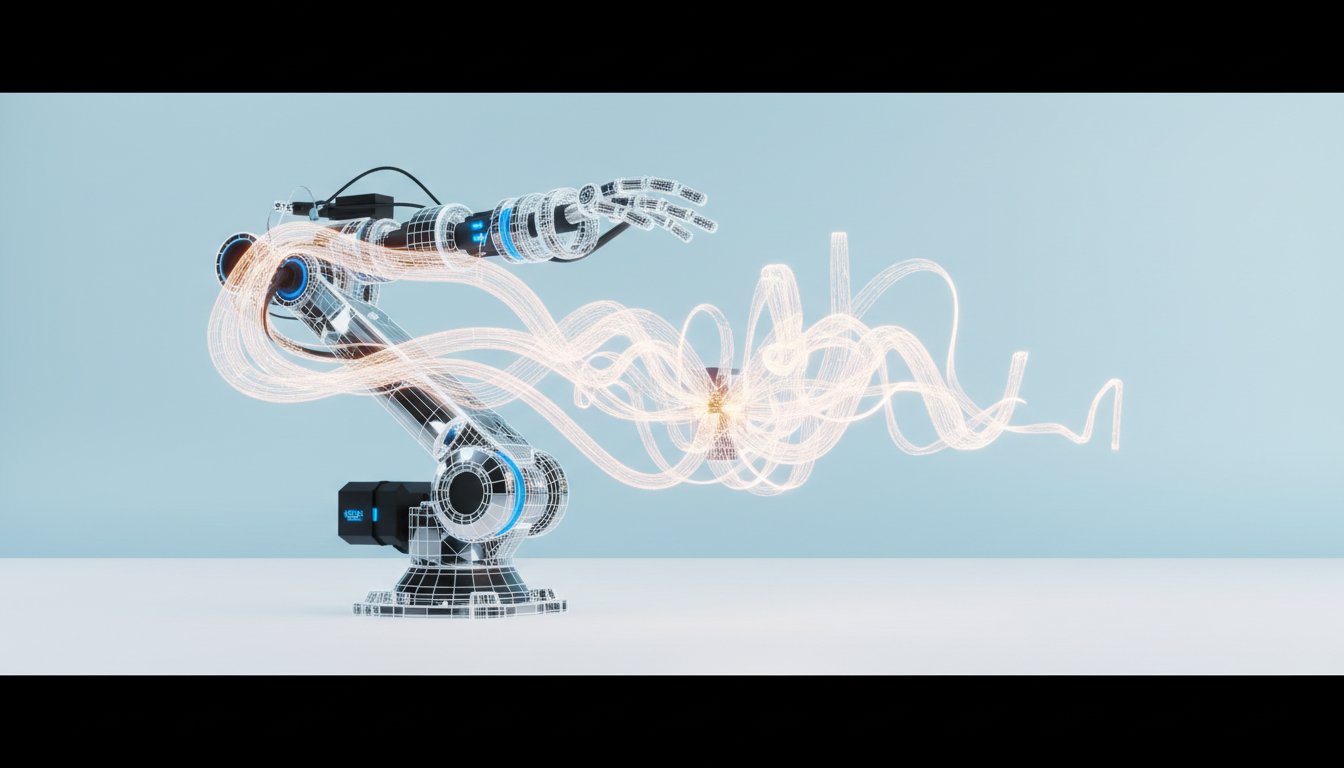

The Unraveling of Predictable Code: Testing in the Age of AI Agents

The software development landscape is undergoing a seismic shift, driven by the accelerating capabilities of AI agents and protocols like Model Context Protocol (MCP). This new era promises unprecedented development velocity, with LLMs capable of generating code at a pace that dwarfs human output. However, as Fitz Nowlan, VP of AI and Architecture at SmartBear, highlights, this revolution introduces a fundamental challenge: how do we test code when its very generation is non-deterministic? The core of MCP lies in defining tools for AI agents without being overly prescriptive, allowing the LLM to dynamically choose the best path. This flexibility, while essential for intelligent AI function, directly conflicts with the deterministic nature of traditional testing.

The immediate consequence is the breakdown of familiar testing workflows. If an AI agent can invoke a suite of tools in a myriad of ways, relying on rigid, pre-defined sequences becomes futile. The challenge isn't just about syntax; it's about the LLM's interpretation and decision-making process. This necessitates a move beyond simple keyword flagging or static analysis.

"The key behind MCP is that you're defining these tools that the AI can invoke, but you don't want to be too prescriptive or too restrictive in how the AI can invoke those tools. You want the workflow in any given moment to really be decided on the fly by the LLM."

This quote underscores the central tension: the desire for AI intelligence versus the need for testable predictability. The implication is that traditional unit tests, which rely on predictable inputs yielding predictable outputs, are becoming insufficient. They may still serve a purpose in ensuring continuity, that is, confirming that a new AI-generated change hasn't broken existing functionality, but they do not guarantee that the code does what it is supposed to do. An AI can easily write unit tests that pass even when each test merely asserts that true is true. This forces a re-evaluation of what constitutes a valuable test.
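One way to picture a test that tolerates this flexibility is to assert invariants over whatever tool calls the agent chose, rather than one exact sequence. The sketch below is illustrative only: the `ToolCall` record, the `check_trace` helper, and the tool names (`get_order`, `issue_refund`, and so on) are hypothetical and are not part of MCP or any product mentioned in the conversation.

```python
from dataclasses import dataclass, field


@dataclass
class ToolCall:
    """One tool invocation recorded from an agent run (hypothetical shape)."""
    name: str
    args: dict = field(default_factory=dict)


def check_trace(trace, required=(), forbidden=(), must_precede=()):
    """Return the list of violated invariants; an empty list means the run passes."""
    names = [call.name for call in trace]
    problems = []
    for tool in required:
        if tool not in names:
            problems.append(f"missing required tool: {tool}")
    for tool in forbidden:
        if tool in names:
            problems.append(f"forbidden tool was called: {tool}")
    for first, then in must_precede:
        # `first` must appear somewhere before the first occurrence of `then`.
        if then in names and first not in names[: names.index(then)]:
            problems.append(f"{first} must run before {then}")
    return problems


# Assumed rules for a refund workflow: the order is looked up before any refund,
# and the agent never deletes an account along the way.
RULES = dict(
    required=("get_order", "issue_refund"),
    forbidden=("delete_account",),
    must_precede=(("get_order", "issue_refund"),),
)

# Two different but acceptable paths, and one unacceptable shortcut.
run_a = [ToolCall("search_orders"), ToolCall("get_order"), ToolCall("issue_refund")]
run_b = [ToolCall("get_order"), ToolCall("check_policy"),
         ToolCall("get_order"), ToolCall("issue_refund")]
run_c = [ToolCall("issue_refund")]

if __name__ == "__main__":
    for label, run in (("a", run_a), ("b", run_b), ("c", run_c)):
        print(label, check_trace(run, **RULES) or "ok")
```

Runs `run_a` and `run_b` take different routes yet both satisfy the rules, while `run_c` is rejected for refunding without ever looking up the order. A check like this constrains intent without dictating the path the LLM takes.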

The conversation pivots towards higher-level, more holistic testing strategies. Instead of focusing on individual code units, the emphasis shifts to validating the overall functionality and intent of the application. This involves using AI to test AI through "evals" (evaluations), in which one LLM assesses the output of another. This approach acknowledges the inherent non-determinism and seeks to establish probabilistic correctness rather than absolute certainty. The advice here is not to "beat the model" with rigid prompts, but to "meet the model": to grow with its improving capabilities and embrace a more fluid, adaptive testing methodology. This requires a significant mindset shift for QA professionals, moving from a spec-driven approach to one that embraces common sense and intent-driven validation, even for AI-generated code.
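A minimal sketch of what "probabilistic correctness" can look like in practice: sample the system several times, let a judge accept or reject each output, and compare the pass rate with a threshold. Everything here is an assumption for illustration. The stand-in `make_fake_model` and `fake_judge` functions replace what would be real LLM calls, and the 0.9 threshold and sample count are arbitrary placeholders, not recommendations from the conversation.

```python
import random


def pass_rate(generate, judge, prompt, n_samples=20):
    """Fraction of sampled outputs that the judge accepts."""
    passed = sum(1 for _ in range(n_samples) if judge(prompt, generate(prompt)))
    return passed / n_samples


def eval_passes(generate, judge, prompt, threshold=0.9, n_samples=20):
    """An eval passes when enough samples are accepted, not when all of them are."""
    return pass_rate(generate, judge, prompt, n_samples) >= threshold


def make_fake_model(seed, answers):
    """Stand-in for a non-deterministic LLM: picks one canned answer per call."""
    rng = random.Random(seed)

    def generate(prompt):
        return rng.choice(answers)

    return generate


def fake_judge(prompt, output):
    """Stand-in for a judge LLM: accepts answers that confirm the $20 refund."""
    text = output.lower()
    return "refund" in text and "$20" in text


if __name__ == "__main__":
    model = make_fake_model(
        seed=0,
        answers=[
            "Your refund of $20 was issued.",
            "Refund issued: $20.",
            "I cannot help with that.",
        ],
    )
    prompt = "Refund order 123 for $20."
    print("pass rate:", pass_rate(model, fake_judge, prompt))
    print("eval passes:", eval_passes(model, fake_judge, prompt))
```

In a real setup, `generate` would call the system under test and `judge` would be a second model prompted with a rubric. The structure stays the same: the verdict is a rate over many runs instead of a single pass or fail.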

The Shifting Sands of Value: Beyond Code to Data and Composition

As AI agents become increasingly adept at generating functional code, the very definition of a "valuable" software product is being challenged. Fitz Nowlan posits that if an MVP (Minimum Viable Product) can be replicated with relative ease by AI, then the traditional value derived from source code diminishes. This raises a provocative question: if functionality can be generated on demand, does the source code itself still matter? The implication is that businesses might prioritize rapid deployment and market entry over hyper-efficient, hand-authored code. This could lead to companies accepting higher operational costs as a trade-off for speed, as long as customers are willing to pay for the service.

"So does it matter, you know, if the code, how the code is written in the MVP sense, you know, the functionality can be checked off, the boxes can be checked. It may not as much. It certainly doesn't as much as it used to."

This perspective suggests that the traditional competitive advantage derived from elegant, optimized code might be superseded. Instead, value is likely to accrue in areas that are harder for AI to commoditize. Nowlan identifies two primary differentiators: data locality and data construction. Data locality refers to the value derived from rich, proprietary datasets. A company like Snowflake, for instance, can offer immense value by allowing users to interact with their unique data through AI, because the data itself is the core asset. Data construction, on the other hand, involves specialized AI-driven manipulation of data or complex prompt engineering that produces unique, valuable outputs. This "secret sauce" of AI composition remains a differentiator that customers will pay for until it, too, is commoditized.

The conversation also touches upon a potential counter-trend: a resurgence of local, on-premise computing and privacy-focused software. As AI makes it easier to build and deploy applications, the barrier to entry for creating custom solutions for personal or small business use decreases. This could lead to a demand for software that prioritizes data privacy and user control, moving away from multi-tenant SaaS models. Companies like Oxide Computing are exploring this space by offering cloud-like experiences on-premise. For enterprises, this translates to a desire to keep data within their own environments, even if it means accessing AI models via their Virtual Private Cloud (VPC) rather than calling out to third-party SaaS platforms. This push towards on-premise AI and custom development means that the need for robust testing, even in these controlled environments, will persist. The "secret sauce" of an AI testing agent--its superior common sense, edge case awareness, or data integrity--could become a valuable offering in this new landscape.

Navigating the Uncharted Territory: Actionable Steps for an AI-Driven Future

The rapid advancements in AI and MCP present both opportunities and significant challenges for software development and testing. Embracing this shift requires a proactive approach, focusing on adapting testing methodologies, identifying new value propositions, and preparing for the evolving nature of software itself.

Here are key action items to consider:

- Re-evaluate Testing Strategies: Shift focus from deterministic unit tests to probabilistic, intent-driven evaluations. Invest in AI-powered testing platforms that can assess LLM outputs and validate application functionality at a higher level of abstraction.
  - Immediate Action: Begin experimenting with LLM-based evaluation frameworks for your existing AI-generated code or agentic workflows.
  - This pays off in 6-12 months by identifying critical functional gaps that traditional tests miss.

- Develop Expertise in Data Locality and Construction: Identify your organization's unique data assets and explore how AI can unlock new value from them. Simultaneously, begin experimenting with complex AI prompt engineering and data composition techniques to create proprietary "secret sauce" functionalities.
  - Immediate Action: Catalog your organization's most valuable data sources and brainstorm potential AI-driven applications or enhancements.
  - This pays off in 12-18 months by creating defensible competitive advantages that are difficult for competitors to replicate with off-the-shelf AI tools.

- Embrace Non-Determinism in Workflow Design: Design systems that can tolerate and even leverage the inherent variability of LLM outputs. This involves building flexible agentic workflows rather than rigid, linear processes.
  - Over the next quarter: Map out critical user journeys, consider how an AI agent might navigate them in non-obvious ways, and design corresponding test cases.
  - This pays off in 9-15 months by ensuring your AI-powered applications remain resilient and functional as models evolve.

- Invest in AI-Native QA Platforms: Recognize that QA velocity must match development velocity. Explore and adopt tools designed specifically for testing AI-generated code and agentic workflows.
  - Immediate Action: Research and pilot emerging AI-native QA solutions that integrate with your current development pipeline.
  - This pays off in 6-12 months by reducing the bottleneck that AI-driven development speed can create for quality assurance.

- Consider the Value of "Legacy" and Non-LLM Components: While LLMs are powerful, don't abandon traditional engineering practices for critical, non-LLM components where deterministic behavior is paramount. Understand the trade-offs and invest strategically in areas where LLMs might be less reliable or where traditional methods offer clear advantages.
  - Over the next 6 months: Conduct an audit of your application stack to identify critical non-LLM components and assess the risk of future LLM disruption.
  - This pays off in 12-24 months by ensuring the stability of your core systems while still benefiting from AI advancements.

- Explore Local and Privacy-Focused Solutions: For certain applications or industries (e.g., finance, healthcare), consider the benefits of on-premise deployments or solutions that prioritize data privacy and control, potentially leveraging local AI models.
  - Over the next quarter: Assess the feasibility and potential ROI of running AI workloads on-premise or within controlled VPCs for sensitive applications.
  - This pays off in 18-24 months by meeting stringent compliance requirements and potentially creating new market opportunities.

- Foster a Culture of Continuous Learning and Adaptation: The AI landscape is evolving at an unprecedented pace. Encourage teams to stay informed about new LLM capabilities, testing methodologies, and emerging architectural patterns.
  - Ongoing: Dedicate time for team training, knowledge sharing sessions, and participation in industry conferences focused on AI and software development.
  - This pays off continuously by ensuring your organization remains agile and competitive in a rapidly changing technological environment.