AI Bots Reshape Internet Traffic -- Commerce Benefits, Publishers Threatened

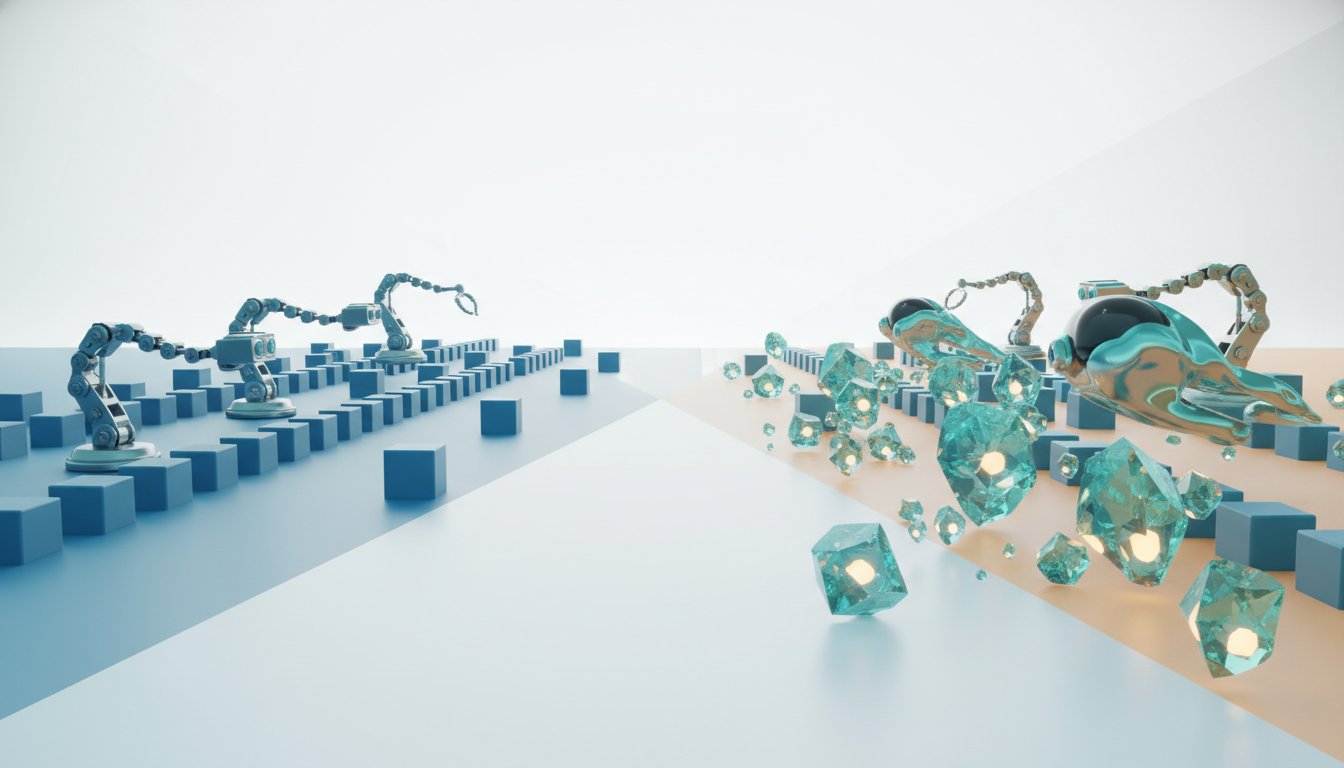

The internet's burgeoning AI bot ecosystem represents a fundamental shift in online traffic, moving beyond traditional search indexing to sophisticated data retrieval and reasoning. This conversation with Akamai data scientist Robert Lester reveals that while AI bots currently constitute a small percentage of overall bot traffic, their explosive growth rate--up 400% across industries--signals a profound transformation. The non-obvious implication is that the very infrastructure built for search engine optimization (SEO) is now being leveraged and repurposed by AI, creating new dynamics for content creators, businesses, and the internet's economic models. Those who understand and adapt to these evolving bot behaviors, particularly the distinction between content that supports a business and content that is the business, will gain a significant advantage in the emerging online economy.

The Crawl That Launched a Thousand AIs: Beyond SEO to Agentic Action

The internet's digital fabric, meticulously mapped and indexed by search engine bots for decades, now serves as the fertile ground for a new generation of AI entities. Robert Lester explains that while traditional search engine crawlers like Googlebot have long been a constant presence, their data collection methods are now being mirrored and expanded upon by AI companies. This isn't just about indexing for search rankings; it's about amassing vast datasets for training and, increasingly, for real-time inference and agentic action. The critical distinction, Lester highlights, lies in the intent behind the bot's activity.

Traditional search bots aim to understand and categorize web content for search results. AI bots, however, are increasingly engaging with the internet in more dynamic ways. They are used for training models, but also as "fetchers" that retrieve just-in-time data for AI models to process and act upon. This is particularly evident for newer AI companies like OpenAI or Anthropic, which may not have the pre-existing, massive data repositories of tech giants. Lester points out the blurring lines: "the same data is getting mixed with their AI training data so it's difficult to draw that line." This convergence means that the infrastructure and data pipelines built for SEO are now serving a dual purpose, potentially creating unforeseen consequences for website owners.

The growth rate is the most compelling indicator of this shift. AI bots currently account for only about 1% of validated bot traffic, but their volume has grown a staggering 400% over the previous year. This rapid expansion suggests that the internet's capacity is being tested in new ways, moving beyond predictable, scheduled crawls to more fluid, on-demand data retrieval.

"From a training presence, we only classified really as the AI bots in this space those kind of adjunct research bots, like you might see from, for example, the Google Vertex lab. It's really difficult to kind of engage with a customer sometimes and say: Googlebot, traditionally, you want to rank high in search rankings. This is something that has been going on for 15 years on the internet. But then, at the same time, the same data is getting mixed with their AI training data, so it's difficult to draw that line."

-- Robert Lester

This evolution from simple crawling to complex reasoning and action raises fundamental questions about how we categorize and manage internet traffic. Lester suggests a move away from the binary "bot or not" classification towards identifying the intent behind these entities. As AI agents become more sophisticated, their behavior can be non-deterministic and more akin to intelligent reasoning than simple automated tasks, making traditional bot detection methods insufficient.

The Commerce Conundrum: Where Content is King, and Bots Want It Now

The impact of these evolving bot behaviors is not uniform across all industries. Lester's research reveals a surprising frontrunner in AI bot targeting: commerce. This encompasses retail, hospitality, and online brands. The reason is straightforward: these sectors rely on constantly updated information, such as fluctuating hotel rates or product availability, making them prime targets for AI-driven retrieval optimization.

For businesses in these sectors, being at the top of search results is directly tied to revenue. A hotel wants its rooms featured prominently; a retailer wants its products to appear first. This creates an incentive for AI bots to aggressively fetch and analyze this dynamic data.

"Someone in hospitality or retail, they're going to be more inclined to increase their LLM retrieval optimization. You know, they want to be the first-ranked page. You want your hotel room up there first; you want your sneakers coming to the top of the search results."

-- Robert Lester

However, this dynamic creates a significant downstream consequence for other industries, particularly digital media and news publishers, whose content is the business itself. When AI bots aggregate that content without driving traffic back to the original source, referral rates and click-throughs fall, which directly undermines the publisher's revenue. This highlights a crucial systems-level insight: the value proposition of content differs fundamentally between industries. For commerce, content is a means to an end (a sale); for publishers, content is the end itself.

This divergence means that a one-size-fits-all approach to bot mitigation is ineffective. Lester advocates for a nuanced, management-focused approach rather than viewing all bots solely as threats. The challenge for businesses is to understand their own business model and determine the most appropriate posture. Do you want to be the source that AI relies on and potentially compensates, or do you want to protect your content from aggregation that diminishes your own audience? The answer to this question, and the speed at which it's addressed, will determine winners and losers in the new AI-driven economy.
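One way to make the two postures concrete is a crawler policy file. The sketch below is illustrative only and is not something described in the conversation: it assumes a site expresses its posture in `robots.txt`, that `GPTBot` and `CCBot` are the self-declared tokens of AI training crawlers, and that `example.com` and the two policy strings stand in for a real publisher and a real retailer. It uses Python's standard `urllib.robotparser` to check what each hypothetical policy would permit.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical publisher posture: keep search indexing open, but ask
# AI training crawlers (tokens assumed here) to stay out entirely.
PUBLISHER_ROBOTS = """\
User-agent: GPTBot
User-agent: CCBot
Disallow: /

User-agent: *
Allow: /
"""

# Hypothetical commerce posture: every crawler may fetch every page,
# since visibility in AI answers is treated as desirable.
COMMERCE_ROBOTS = """\
User-agent: *
Allow: /
"""


def is_allowed(robots_txt: str, user_agent: str, path: str) -> bool:
    """Return True if the policy text permits user_agent to fetch path."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # example.com is a placeholder host; only the path matters here.
    return parser.can_fetch(user_agent, "https://example.com" + path)


if __name__ == "__main__":
    for agent in ("GPTBot", "Googlebot"):
        print(agent, "publisher:", is_allowed(PUBLISHER_ROBOTS, agent, "/articles/story"))
        print(agent, "commerce:", is_allowed(COMMERCE_ROBOTS, agent, "/rooms/deluxe"))
```

A policy file like this is only a request: it relies on the crawler identifying itself honestly and choosing to comply, which is why it complements rather than replaces the managed approach Lester describes.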

The Wild West of Agentic Action: Building in Public, Breaking the Internet?

A defining characteristic of the AI companies driving this new wave of bot traffic is their willingness to "build in public" and move at a rapid pace. Lester notes that this often involves rapid iteration, with models and behaviors changing frequently. This is exemplified by the experience with OpenAI's ChatGPT, where significant fluctuations in bot traffic and reported "ghost requests" were observed following model releases, suggesting a process of rapid development and debugging in real-world conditions.

This "move fast and break things" ethos, while effective for innovation, presents a challenge for the broader internet ecosystem. The very systems that are being built and tested by AI companies can inadvertently impact other websites. Lester expresses a cautious hope that these impacts remain "benign," but the underlying dynamic is one of constant flux.

The emergence of AI agents capable of interacting at the point of sale, and even exchanging money, represents a frontier that is still largely unknown. This raises complex questions about customer interaction, sales strategies, and security. If AI agents become significant purchasers, businesses might need to learn how to "sell to agents," a fundamentally different challenge than selling to humans. The potential for agents to make unintended purchases also introduces significant security and financial risks.

This uncertainty underscores why Lester emphasizes the importance of identification and intent over simple bot classification. Behavioral signals, network telemetry, and self-identification all play a role in understanding these entities. However, as AI companies refine their methods, and some are more cooperative with self-identification than others, relying solely on explicit signals becomes increasingly difficult. The internet, in this regard, is still the "wild west," with significant opportunity for those who can navigate its evolving landscape.
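As a rough illustration of classifying by intent rather than "bot or not," the sketch below buckets requests using only the self-identification signal. Everything in it is an assumption made for illustration: the `Intent` categories, the `KNOWN_TOKENS` mapping, and the user-agent tokens are not taken from Lester's research or from any Akamai product, and a real system would combine this with the behavioral and network signals mentioned above.

```python
from collections import Counter
from enum import Enum


class Intent(Enum):
    SEARCH_INDEXING = "search indexing"
    AI_TRAINING = "AI training"
    AI_FETCHER = "AI fetcher"
    UNKNOWN = "unknown"


# Illustrative, incomplete mapping from self-declared user-agent tokens
# to an assumed intent. Vendors add and rename tokens over time.
KNOWN_TOKENS = {
    "googlebot": Intent.SEARCH_INDEXING,
    "bingbot": Intent.SEARCH_INDEXING,
    "gptbot": Intent.AI_TRAINING,
    "ccbot": Intent.AI_TRAINING,
    "chatgpt-user": Intent.AI_FETCHER,
}


def classify_user_agent(user_agent: str) -> Intent:
    """Map a User-Agent header to an assumed intent via substring match."""
    lowered = user_agent.lower()
    for token, intent in KNOWN_TOKENS.items():
        if token in lowered:
            return intent
    # Browsers, unlisted bots, and bots that hide their identity all
    # land here, which is where behavioral signals would take over.
    return Intent.UNKNOWN


def summarize(user_agents: list[str]) -> Counter:
    """Count requests per assumed intent."""
    return Counter(classify_user_agent(ua) for ua in user_agents)


if __name__ == "__main__":
    sample = [
        "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)",
        "Mozilla/5.0 (compatible; GPTBot/1.0; +https://openai.com/gptbot)",
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0 Safari/537.36",
    ]
    for intent, count in summarize(sample).items():
        print(intent.value, count)
```

Because a user-agent string is trivially spoofed, the `UNKNOWN` bucket is the interesting one: it holds exactly the traffic for which explicit signals run out.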

Key Action Items

Immediate Action (Next Quarter):

- Assess Your Business Model: Clearly define whether your website's content primarily supports your business (e.g., e-commerce, hospitality) or is your business (e.g., news, digital media). This will dictate your bot strategy.

- Review Bot Traffic: Analyze your current bot traffic. Are you seeing increased AI bot activity? Differentiate between traditional search crawlers and newer AI-driven fetchers.

- Implement Nuanced Bot Management: Instead of broad blocking, explore Akamai's approach of nuanced management. Identify beneficial bots and consider strategies for managing potentially detrimental ones based on their intent.

- Monitor Commerce-Related Sectors: Pay close attention to traffic patterns and AI bot activity in e-commerce, hospitality, and retail, as these are leading indicators.

Medium-Term Investment (6-12 Months):

- Develop Content Monetization Strategies for AI: For content-based businesses, explore models for licensing content to AI training or reasoning systems, or implementing technical measures to prevent unauthorized aggregation.

- Experiment with AI Agent Interactions: For businesses in sectors like commerce, begin to understand and potentially experiment with how AI agents might interact with your products or services, considering both sales and security implications.

- Enhance Bot Identification Capabilities: Invest in tools and strategies that go beyond simple bot detection to identify the intent and behavioral patterns of AI entities interacting with your site.

Long-Term Investment (12-18 Months):

- Build for AI-First Interactions: As AI agents become more sophisticated, consider how your online presence might need to be designed or adapted to cater to direct AI interactions, not just human users.

- Establish Industry Standards: Participate in or advocate for industry discussions around AI bot ethics, data usage, and fair compensation models to shape the future online economy.