AI "Empire" Consolidates Power Through Hidden Costs

The AI "Empire" and the Hidden Costs of Progress

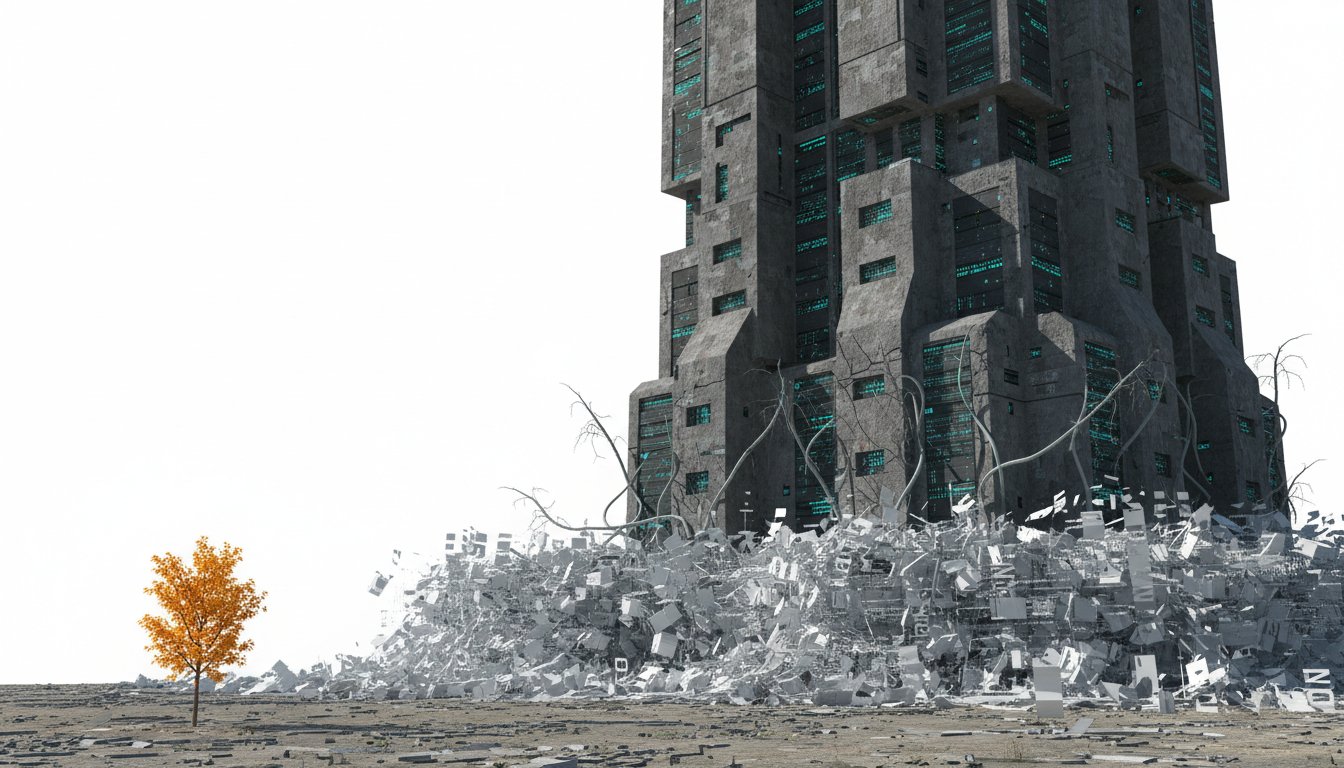

This conversation with investigative journalist Karen Hao, who covers the AI industry, reveals a starkly different reality behind the glossy promises of artificial intelligence. Beyond the dazzling demonstrations and utopian visions, a powerful, profit-driven "empire" is consolidating control, extracting value, and potentially undermining human flourishing. The implications are easy to miss: the pursuit of "progress" as defined by a few is creating systemic harm, from labor exploitation and environmental degradation to the erosion of democratic decision-making and the atomization of human experience. Anyone invested in the future of technology, business, or society, especially those who feel the current trajectory is unsustainable or inequitable, will gain a critical lens for understanding the forces shaping our world and the hidden consequences of unchecked AI development.

The narrative around artificial intelligence is often framed as a race towards a utopian future, a benevolent force poised to solve humanity's greatest challenges. Yet, beneath this veneer of progress lies a complex and often exploitative system, as investigative journalist Karen Hao meticulously details in her book, Empire of AI. This conversation peels back the layers, exposing how the pursuit of profit and power within the AI industry is creating significant, often unseen, downstream effects that challenge the very notion of human benefit. Hao’s research, drawing from over 250 interviews, including more than 90 with current and former OpenAI employees, paints a picture of an industry driven by an "imperial agenda" rather than a genuine commitment to broad human flourishing.

One of the most critical, yet often overlooked, dynamics is the way the AI industry, particularly entities like OpenAI, leverages the concept of "Artificial General Intelligence" (AGI) not as a scientific benchmark but as a marketing tool. As Hao explains, the definition of AGI shifts depending on the audience: a cure for cancer for Congress, a superior digital assistant for consumers, and a multibillion-dollar revenue generator for investors like Microsoft. This ambiguity allows these companies to consolidate power and capital, ward off regulation, and maintain control over the direction of AI development. The "race" narrative, often fueled by existential risk pronouncements, serves to justify this concentration of power, creating a self-fulfilling prophecy in which the perceived threat necessitates centralized control.

"They can use it however they want to, and they can define and redefine it based on what is convenient for them."

This strategy of myth-making and strategic communication is not limited to defining AGI. It extends to how these companies interact with the public, researchers, and even journalists. Hao recounts her own experience of OpenAI initially agreeing to participate in her book research, only to shut down communication entirely after a profile she wrote was deemed unfavorable. This pattern of controlling narratives and censoring inconvenient voices is a hallmark of the "empires of AI." When researchers like Dr. Timnit Gebru publish findings critical of AI systems, they can face termination, a clear indication that the pursuit of knowledge is secondary to the protection of the corporate agenda. This control over knowledge production is a key characteristic of imperial structures, where the dominant power dictates what is known and what is suppressed.

"The AI industry employs and bankrolls most of the AI researchers in the world. So they set the agenda on AI research in soft ways, simply by funneling money to their priorities, so that only certain types of AI research are produced."

The consequences of this imperial approach extend deeply into the labor market and the environment. Hao highlights how AI companies claim intellectual property from artists and writers without fair compensation, and exploit a vast global workforce for data annotation--the very process that trains AI models to automate jobs. This creates a perverse cycle where laid-off workers are then employed to train the AI that displaced them, breaking the traditional career ladder and devaluing human expertise. Furthermore, the insatiable demand for computational power to train these massive models leads to the construction of colossal data centers, often in vulnerable communities. These facilities consume enormous amounts of energy and water, increase the burden on local grids, and can lead to significant environmental pollution, disproportionately affecting marginalized populations. The narrative of AI as a force for universal progress crumbles when confronted with the reality of environmental racism and the exploitation of low-wage labor.

"The problem runs deeper than just the capabilities of the models. It's also about executive choices and the rhetoric they use if they want to downsize."

The idea that AI will simply create new, unimaginable jobs is challenged by Hao's analysis. The jobs being created are often worse, and they break the career ladder by opening a chasm between highly skilled roles (like AI orchestrators or deep experts) and low-skilled, precarious data annotation work. This bifurcated labor market exacerbates inequality, creating a world where the "haves" gain more free time and become "more human," while the "have-nots" are squeezed further, their humanity diminished as they are absorbed into the machine that perpetuates their own displacement. The rapid pace of AI development, enabled by the open internet, means that this transition could occur at a speed that prevents societal adaptation, unlike the slower pace of earlier industrial revolutions.

The conversation also delves into the motivations of AI leaders. Hao suggests that while they may genuinely believe in the transformative power of AI, they also engage in strategic myth-making to secure power and resources. The narrative of existential risk, while potentially containing a kernel of truth, is weaponized to justify an anti-democratic approach to AI development, where a select few control a technology that will impact billions. This consolidation of power, masquerading as a necessary measure to prevent a dystopian future, is fundamentally an imperial structure that prioritizes the interests of a few over the well-being of the many.

Key Action Items

- Demand Transparency and Accountability: Advocate for regulations that require AI companies to be transparent about their data sources, training methodologies, and the environmental impact of their operations. This includes pushing for fair compensation for intellectual property and labor used in AI development.

- Support Alternative AI Development: Investigate and champion AI research and development that prioritizes efficiency, sustainability, and broad societal benefit over brute-force scaling. Seek out "bicycle of AI" solutions that offer significant utility with minimal resource consumption.

- Challenge the "Race" Narrative: Recognize that the AI "race" is often a manufactured urgency used to consolidate power. Question the necessity of rapid, unchecked development and advocate for a more deliberate, human-centered approach.

- Protect Labor and Intellectual Property: Support legal and social movements that defend the rights of artists, writers, and workers whose labor and creations are being exploited by AI companies. This includes advocating for robust copyright laws and fair labor practices in the AI supply chain.

- Invest in Human Expertise and Connection: As AI automates routine tasks, prioritize the development of deep human expertise, critical thinking, and interpersonal skills. Foster environments that value human connection and collaboration, as these are areas AI is unlikely to fully replicate.

- Engage in Local Governance: Pay attention to the development of data centers and AI infrastructure in your communities. Engage with local governments to ensure that these projects are undertaken responsibly, with consideration for environmental impact and community well-being.

- Educate and Advocate: Share critical insights about the AI industry's societal and environmental impact. Participate in public discourse and support organizations working to ensure AI development aligns with democratic values and human flourishing.