AI Safety Contracts Versus Pentagon Demands in Warfare

The dispute between AI developers and the Pentagon over the future of warfare reveals a profound tension between the immediate demands of national security and the long-term ethical considerations of advanced technology. This isn't just a contract dispute; it's a high-stakes negotiation about who controls the future of conflict. The non-obvious implication is that the very companies championing AI safety may inadvertently accelerate AI's integration into potentially dangerous applications by refusing to engage on the Pentagon's terms, because the work may simply move to vendors willing to accept looser terms. The Pentagon's aggressive stance, meanwhile, risks alienating the talent pool essential to its AI ambitions. For leaders in technology, defense, and policy, the episode illustrates the complex interplay of commercial interests, national security imperatives, and the ethical tightrope of AI development in warfare.

The Unseen Cost of Military Urgency

The urgency of national security, particularly in the face of global adversaries like China, Russia, and Iran, compels the Pentagon to integrate artificial intelligence rapidly. This drive, however, creates a fundamental friction with AI companies like Anthropic, which prioritize safety and ethical considerations. The military's need for AI to analyze vast troves of intelligence data, from social media to satellite imagery, is undeniable. AI can sift through this information at speeds no human analyst can match, which has made it indispensable.

"AI can analyze data for the military faster than a human being possibly could. It's proving its worthiness every single day."

This reliance, however, leads to a critical downstream effect: the Pentagon's demand for unfettered access and control over AI systems. Anthropic, founded on principles of AI safety, balks at this. Their concern isn't merely theoretical; it stems from a pragmatic understanding of AI's current limitations and the potential for catastrophic errors. An AI with even a small error rate, when applied to targeting decisions, can have life-or-death consequences. The specter of a public relations disaster, where an AI misidentifies a target, looms large. Furthermore, these companies must consider their own workforce, many of whom are deeply uncomfortable with the military's potential uses of their technology. This internal pressure, coupled with the intense competition for top AI talent, forces companies like Anthropic to draw lines.

The Paradox of "Safe" AI Contracts

The conflict crystallizes around Anthropic's insistence on codifying two specific limitations in its contracts: no mass surveillance of Americans and no use in autonomous weapons. This contractual approach, designed to provide a permanent, verifiable safeguard, is precisely what the Pentagon finds unacceptable. From the military's perspective, a private company dictating the terms of national security is an affront. Pentagon officials argue that the decision of when AI is ready for weapon control rests with the Pentagon, not with Silicon Valley executives.

"The CEO of one of the biggest AI companies in the world is meeting with Defense Secretary Pete Hegseth today as the Pentagon threatens to essentially blacklist that company, Anthropic, from lucrative government contracts."

The Pentagon's aggressive response, threatening to label Anthropic a "supply chain risk" or to invoke the Defense Production Act, highlights its belief that national security imperatives override corporate ethical stances. This ultimatum, however, backfired spectacularly. Instead of isolating Anthropic, it galvanized the broader AI community, fostering a rare moment of solidarity. Sam Altman of OpenAI, despite historical tensions with Anthropic, publicly supported its stance. This unified front demonstrated that the AI industry views the Pentagon's heavy-handed tactics as a dangerous precedent, one that could chill innovation and erode trust between Silicon Valley and the government.

The Illusion of Control and the Inevitability of Integration

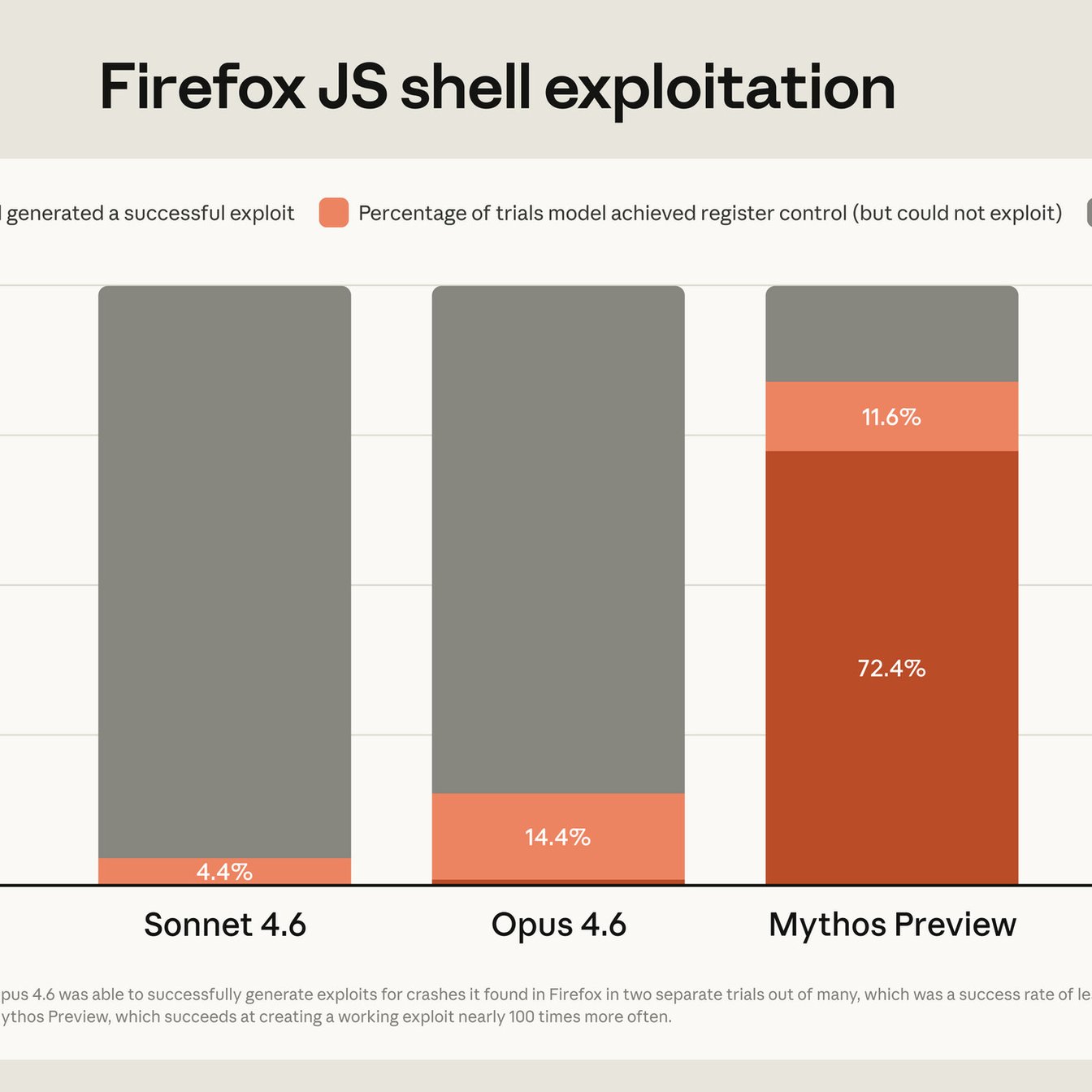

OpenAI's subsequent deal with the Pentagon, brokered by Sam Altman, offers a different model: embedding safety measures directly into the AI's code, or "writing into the stacks." This approach sidesteps the need for explicit contractual limitations, placing the onus of safety on the AI company itself. However, Anthropic's leadership argues that code-based guardrails are inherently less secure. They can be rewritten, altered, or bypassed, offering a false sense of permanence. This distinction is crucial: Anthropic seeks a legally binding, unalterable commitment, while OpenAI offers a more flexible, internally managed approach.

By securing a deal with OpenAI, the Pentagon appears to have achieved its immediate objective. Yet the long-term consequences are complex. The aggressive tactics have likely damaged trust with the broader AI community. Meanwhile, Anthropic, despite losing the contract, has emerged with significant reputational gains, becoming a symbol of ethical AI development in the public eye. That reputation has resonated deeply with engineers and could draw talent away from competitors.

"The reality is messier. You have two of Silicon Valley's largest companies basically battling it out over what safe AI looks like."

Ultimately, both companies and the Pentagon recognize that AI's integration into warfare and government functions is inevitable. The current conflict is less about preventing this future and more about shaping its terms. The debate over "safety" is multifaceted: is it about current error rates, long-term ethical alignment, or simply the ability to control the technology? The billions invested in AI ensure that this competition, and the underlying tension between immediate security needs and ethical foresight, will continue to define the future of warfare.

Key Action Items

- Immediate Action (Within the next month):
  - For AI Companies: Conduct internal reviews of current safety protocols and their contractual implications with government clients.
  - For Defense Contractors: Assess existing AI integration points for potential ethical conflicts and downstream risks.

- Short-Term Investment (1-3 months):
  - For Government Agencies: Establish clear, transparent communication channels with AI developers to foster trust and shared understanding of safety concerns.
  - For AI Companies: Develop robust internal mechanisms for documenting and communicating safety guardrails to employees and stakeholders.

- Mid-Term Investment (3-9 months):
  - For AI Companies: Explore alternative contractual frameworks that balance national security needs with ethical limitations, potentially involving multi-stakeholder working groups.
  - For Policymakers: Convene forums to discuss and define ethical standards for AI in defense, involving industry, academia, and government.

- Long-Term Investment (9-18 months):
  - For All Stakeholders: Invest in research and development of AI safety techniques that are verifiable and resistant to tampering, moving beyond code-level implementation.
  - For AI Companies: Prioritize building public trust by clearly articulating safety commitments and engaging proactively with public concerns, even when it means forgoing immediately lucrative contracts. This discomfort now builds a stronger, more sustainable future.