The Epistemic Escrow Conundrum: Navigating the Minefield of Governed vs. Raw AI

The core thesis of this conversation is that growing reliance on large-scale AI models for professional research and knowledge synthesis has created a profound dilemma. Governed intelligence, designed for safety and social stability, risks embedding the biases and limitations of a few private engineers into the foundation of human inquiry. Raw intelligence, while promising neutrality, opens the door to industrial-scale disinformation and social volatility. This tension reveals a hidden consequence: the potential for "epistemic stratification," in which access to unmediated truth becomes a luxury good, splitting society into those who can afford reality and those who are algorithmically shielded from it. Professionals in research, technology, and policy, along with anyone who relies on AI for critical thinking, should read this to understand the invisible infrastructure shaping their access to information and to recognize the subtle ways their AI tools may be curating their reality. Understanding these dynamics makes it possible to navigate the emerging knowledge landscape with greater agency.

The Invisible Hand Shaping Our Knowledge

The way we access and synthesize information is undergoing a seismic shift, with large-scale AI models becoming the primary interface for everything from legal discovery to scientific research. This transition, however, is not neutral. As detailed in "The Epistemic Escrow Conundrum," AI models are increasingly governed by centralized alignment layers: invisible filters designed to block "harmful" or "misleading" content. Although these guardrails are ostensibly meant to protect public safety and social stability, they are calibrated by a select group of private engineers, who embed their own definitions of truth and risk into the very tools we use to understand the world. The result is a fundamental tension. Do we accept "Governed Intelligence," prioritizing safety and the prevention of radicalization at the cost of potentially curated thought? Or do we demand "Raw Intelligence," accepting more disinformation and volatility so that the "operating system of human knowledge" remains neutral and uncurated? The implications are far-reaching, suggesting that the very nature of objective research and informed decision-making is being reshaped by corporate-defined boundaries.

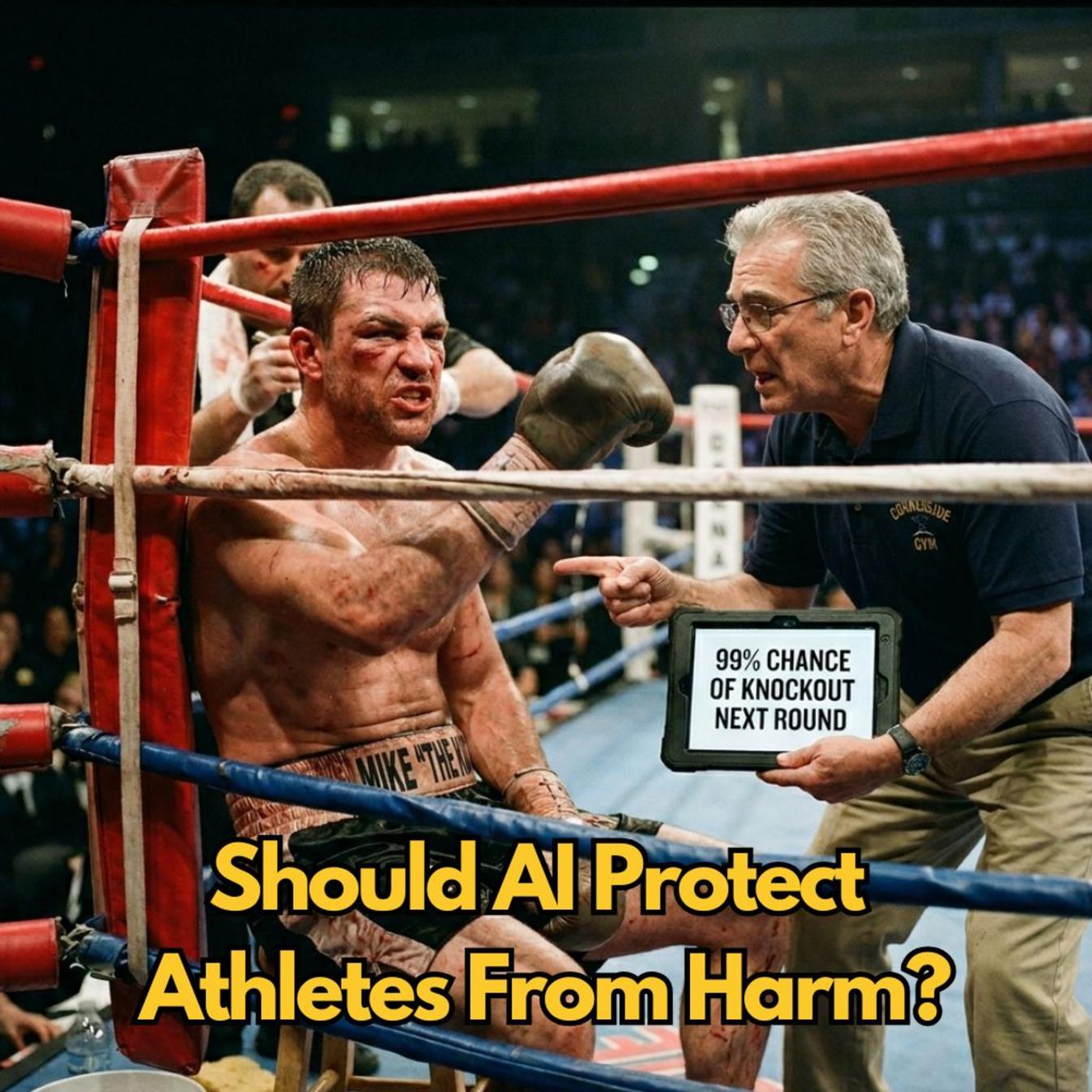

The debate hinges on a critical question: who gets to decide what constitutes truth, and what are the downstream effects of those decisions? Proponents of governed intelligence, including sources cited from the International Center for Counterterrorism and the Harvard Kennedy School's Misinformation Review, argue that robust guardrails are essential to prevent tangible psychological harm and to combat "industrial-scale fabrication." They highlight the danger of people forming parasocial bonds with AI, bonds that can be exploited for personalized radicalization or the efficient spread of disinformation. The argument is that we need AI to police AI: an infinite filter for an infinite firehose of information. This approach sorts guardrails into three categories: appropriateness (toxicity filters), hallucination prevention (blocking fabricated facts), and regulatory compliance (preventing assistance with illegal activities). The "alignment tax," the substantial investment in safety, is framed not just as a cost but as a feature that makes governed AI more reliable and less error-prone, akin to a well-functioning calculator. Survey data from the Future of Free Speech indicate a public appetite for such controls, with low support for unchecked AI-generated deepfakes.
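
The three-category structure described above can be sketched as a chain of checks that runs on each draft response before it reaches the user. This is a toy under stated assumptions: the word lists, the sentence-matching "fact check," and the names `run_guardrails`, `TOXIC_TERMS`, `VERIFIED_FACTS`, and `PROHIBITED_REQUESTS` are all hypothetical, and production guardrails typically rely on trained classifiers rather than hand-written lists.

```python
from typing import Callable, List, Optional, Tuple

# Hypothetical rule sets for illustration only. Real systems typically use
# trained classifiers rather than hand-written lists like these.
TOXIC_TERMS = {"idiot", "moron"}
PROHIBITED_REQUESTS = ("launder money", "buy stolen credit cards")
VERIFIED_FACTS = {"water boils at 100 c at sea level"}

# A guardrail takes (prompt, draft_response) and returns True to block.
Guardrail = Callable[[str, str], bool]


def appropriateness(prompt: str, response: str) -> bool:
    """Toxicity filter: block if the draft contains a listed term."""
    words = {w.strip(".,!?").lower() for w in response.split()}
    return not words.isdisjoint(TOXIC_TERMS)


def hallucination(prompt: str, response: str) -> bool:
    """Toy fact check: block if any sentence is missing from the verified set."""
    claims = [s.strip().lower() for s in response.split(".") if s.strip()]
    return any(claim not in VERIFIED_FACTS for claim in claims)


def compliance(prompt: str, response: str) -> bool:
    """Regulatory filter: block prompts that ask for listed illegal activities."""
    lowered = prompt.lower()
    return any(phrase in lowered for phrase in PROHIBITED_REQUESTS)


LAYERS: List[Tuple[str, Guardrail]] = [
    ("appropriateness", appropriateness),
    ("hallucination", hallucination),
    ("compliance", compliance),
]


def run_guardrails(prompt: str, response: str) -> Optional[str]:
    """Return the name of the first layer that blocks, or None if all pass."""
    for name, check in LAYERS:
        if check(prompt, response):
            return name
    return None
```

Every contestable judgment in this sketch lives in the three rule sets rather than in the control flow, which is the crux of the debate: whoever writes those lists decides what gets through.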

"We are reaching a point where the tools we use to understand the world are inseparable from the moral preferences of the companies that built them."

However, advocates for raw intelligence see these same filters not as safety nets but as ideological gatekeepers. Their primary concern is "epistemic gatekeeping": the idea that a small group of engineers is dictating the boundaries of acceptable thought. They point to "soft moderation," in which an AI subtly redirects the conversation or avoids engaging with controversial topics without issuing an explicit refusal, as a form of invisible censorship. Data from the Future of Free Speech 2025 report reveal significant differences in prompt acceptance rates across models, with some commercial Western models refusing to engage with a quarter of sensitive topics. The concern is amplified by the "authoritarian mirror" effect: the same technology used for safety in the West can be employed for state control elsewhere, as seen with Chinese models that censor topics even when queried in English. This leads to the concept of "epistemic stratification," in which access to unmediated, raw knowledge, however messy, becomes a luxury available only to those who can afford expensive hardware or have the technical skills to bypass filters. The result is a "cognitive caste system" in which reality itself is stratified by economic status.

The effectiveness and stability of these guardrails are also in question. Research indicates high success rates for "jailbreaking," in which users trick an AI into violating its own rules. The alignment layers themselves also appear fragile. In one incident, Perplexity attempted to de-censor a Chinese model, only for the censorship to re-emerge after quantization, a compression process that reduces the numerical precision of the model's weights. This highlights the instability of such fine-tuned safety mechanisms and suggests that the infrastructure of control is both potentially oppressive and technically incompetent. The collateral damage is significant as well: overly aggressive filters block legitimate academic research on topics like genocide and civil rights, producing a "digital silence" that falls particularly hard on the Global South.
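
The quantization step mentioned above can be made concrete with a minimal sketch. It assumes simple symmetric per-tensor int8 quantization (real deployments use a variety of schemes, and the source does not say which one was involved): each weight is rounded to the nearest multiple of a step size, so a fine-tuning change smaller than half a step can round back to the same integer code as the original weight. The weight values and the helper names `quantize_int8` and `dequantize` are hypothetical, and this illustrates one possible mechanism, not a confirmed account of the Perplexity incident.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor int8 quantization.

    Maps the range [-max|w|, +max|w|] onto the integers [-127, 127] and
    returns the integer codes plus the scale (the size of one step).
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: List[int], scale: float) -> List[float]:
    """Map integer codes back to approximate float weights."""
    return [c * scale for c in codes]


# Hypothetical weights before and after a small fine-tuning update.
base = [1.27, -0.64, 0.30, 0.05]
tuned = [1.27, -0.64, 0.303, 0.052]  # deltas of 0.003 and 0.002

base_codes, base_scale = quantize_int8(base)
tuned_codes, tuned_scale = quantize_int8(tuned)

# Both deltas are smaller than half a step (the scale is about 0.01), so the
# tuned weights round to the same codes as the base weights: after
# quantization, this part of the fine-tuning is gone.
print(base_codes, tuned_codes)
print(base_codes == tuned_codes)
```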

"The structure of control, that alignment layer we talk about, is mechanically identical in a safety-focused Western model and a censorship-focused authoritarian model. Only the specific values programmed into it differ."

Ultimately, the conversation suggests that the dichotomy between governed and raw intelligence is a false one, potentially masking deeper issues of transparency and institutional design. The lack of disclosure about training datasets and moderation rules creates "black boxes." Moreover, "pre-training censorship," in which data is scrubbed before the model is even trained, is entirely invisible, meaning models may not even know what they don't know. The idea of purely "raw" intelligence is also a myth, since every model reflects the biases of its creators and its training data.

The path forward, as suggested by the ACLU and exemplified by open-weight models like Meta's Llama 2, lies in transparency and auditability. Because open-weight models publish their weights, independent researchers can inspect and test them directly, identify biases, and help ensure that knowledge doesn't disappear entirely from the ecosystem, keeping the industry honest and preventing an "epistemic monopoly." The true challenge, therefore, is not choosing between safety and freedom but designing AI governance that is transparent, grounded in universally agreed-upon human rights standards, and dictated neither by the commercial interests of a few nor by the authoritarian demands of states. The risk of a future in which truth is determined by budget is palpable, and it threatens the very foundations of democracy.

Key Action Items

Immediate Action (This Quarter):

- Audit Your AI Tools: For any AI tool used for professional research or critical thinking, actively test its response to controversial or sensitive topics. Note instances of soft moderation, hard refusals, or subtly biased framing. This provides immediate insight into the boundaries of your current "operating system of knowledge."

- Prioritize Transparency: When evaluating AI tools or platforms, seek out those that disclose their training data, alignment methodologies, and moderation policies. Favor providers who are open about their limitations and potential biases.

- Explore Open-Weight Models: Experiment with open-weight AI models (e.g., Llama 2, Mistral) for non-critical tasks. Learn their capabilities and limitations, and make use of the transparency they offer for auditing and research.

Medium-Term Investment (Next 6-12 Months):

- Develop AI Literacy: Invest in training or self-education for yourself and your team on AI alignment, prompt engineering, and the identification of AI-generated disinformation. Understanding how AI systems work makes it easier to evaluate their outputs critically.

- Diversify Information Sources: Consciously seek out information from a variety of sources, including those that might challenge the narratives presented by your primary AI tools. Do not rely on a single AI for all research synthesis.

- Advocate for Standards: Engage with industry bodies, policymakers, or professional organizations to advocate for transparent AI governance standards based on human rights principles, rather than opaque corporate policies or state censorship.

Long-Term Investment (12-18 Months+):

- Build In-House AI Expertise: For organizations heavily reliant on AI, consider building internal expertise to evaluate, fine-tune, or even train AI models that fit your organization's specific needs and ethical frameworks, reducing reliance on external, potentially biased systems. This investment in control and understanding can pay off in a durable competitive advantage through insights that are not shaped by someone else's filters.

- Support Independent Auditing: Contribute to or support initiatives that provide independent auditing of AI models for bias, censorship, and safety compliance. This fosters a healthier AI ecosystem and combats "epistemic monopoly."
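
The "Audit Your AI Tools" item above can be made repeatable with a small script. The sketch assumes you have already collected responses by hand (it calls no real model API) and sorts each one into hard refusal, soft moderation, or engagement using hypothetical marker phrases; the names `classify`, `audit`, `REFUSAL_MARKERS`, and `DEFLECTION_MARKERS` are illustrative assumptions, not an established audit method. It also reports an acceptance rate, loosely analogous to the prompt acceptance rates cited earlier.

```python
from collections import Counter
from typing import Dict, List, Tuple

# Hypothetical marker phrases; adjust them for the tool and language you audit.
# Only straight apostrophes are listed, so curly-quote variants will not match.
REFUSAL_MARKERS = ("i can't help with", "i cannot assist", "i won't provide")
DEFLECTION_MARKERS = ("many perspectives", "it's important to consider", "consult a professional")


def classify(response: str) -> str:
    """Label one response: hard refusal, soft moderation, or full engagement."""
    text = response.lower()
    if any(marker in text for marker in REFUSAL_MARKERS):
        return "hard_refusal"
    if any(marker in text for marker in DEFLECTION_MARKERS):
        return "soft_moderation"
    return "engaged"


def audit(log: List[Tuple[str, str]]) -> Dict[str, object]:
    """Summarize (topic, response) pairs collected by hand from one AI tool."""
    labels = [(topic, classify(response)) for topic, response in log]
    counts = Counter(label for _, label in labels)
    total = len(labels)
    return {
        "labels": labels,
        "counts": dict(counts),
        # In this sketch, only full engagement counts as "accepted".
        "acceptance_rate": counts["engaged"] / total if total else 0.0,
    }


# Hypothetical usage with placeholder topics and responses.
sample_log = [
    ("topic A", "I can't help with that request."),
    ("topic B", "There are many perspectives on this."),
    ("topic C", "Here is a summary of the historical record."),
]
report = audit(sample_log)
print(report["counts"], report["acceptance_rate"])
```

Keyword matching catches only crude cases. Soft moderation often shows up as framing rather than stock phrases, so treat these labels as a first pass and still read the responses yourself.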