AI Image Generation: Balancing Speed, Accuracy, and Human Oversight

The rapid advance of AI image generation tools, exemplified by Google's Nano Banana 2 (Gemini 3.1 Flash Image), brings both real creative potential and significant practical challenges. Beyond the immediate wow factor of generating visuals from text or transcripts, the technology quietly changes how content gets produced and how far we can trust what an AI hands back. The less obvious consequence is a growing tension between the speed of AI-driven generation and the enduring need for accuracy and human oversight. Those who navigate this tension, by understanding the nuances of prompt engineering, the pitfalls of AI hallucination, and the strategic value of human-in-the-loop review, will gain a distinct advantage in producing compelling and trustworthy AI-assisted content.

The Illusion of Effortless Creation: Unpacking Nano Banana's Implications

The recent unveiling of Google's Nano Banana 2, officially Gemini 3.1 Flash Image, has generated real excitement about AI-driven visual content. Turning a podcast transcript into a visual flow or generating comics from show notes is undeniably impressive, but the lasting value lies less in the immediate output than in understanding the systems behind it and the consequences of relying on it. The hosts' conversation captures a critical juncture where the ease of AI generation collides with the persistent limits of AI, particularly hallucination and the need for careful prompting.

Brian Maucere's exploration of Nano Banana 2 showed it interpreting a transcript and producing a visual summary, and even creating a novel comic that merged two unrelated topics: the retirement of an AI model and the "Department of War" statement. That is synthesis rather than simple image generation, since the model blended concepts drawn from separate parts of its source. However, Beth Lyons' experience with hallucination, in which an AI invented an entire interview, is a stark reminder of the technology's current fallibility. This is not a minor bug; it goes to the heart of whether AI-generated content can be trusted. AI can accelerate content creation, but it cannot yet replace human judgment and verification. Because these tools produce polished output so quickly, they can create a false sense of completion that hides the review and correction steps still required.
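One cheap, mechanical first pass against the kind of invented-interview hallucination described above is to check that any quotation appearing in the generated content actually exists in the source transcript. The Python below is a minimal sketch under stated assumptions: it assumes the text rendered in a generated visual (captions, comic dialogue) is already available as a plain string, for example from the script fed to the image model or from OCR, a step not shown here. The function names are hypothetical, and the check only catches fabricated verbatim quotes, not paraphrased or visual errors, so it supplements human review rather than replacing it.

```python
import re

# Matches text inside straight (") or curly (\u201c ... \u201d) double quotes.
QUOTE_PATTERN = re.compile(r'["\u201c]([^"\u201d]+)["\u201d]')


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences don't cause false alarms."""
    return " ".join(text.lower().split())


def find_ungrounded_quotes(generated: str, transcript: str) -> list[str]:
    """Return quoted passages in `generated` that do not appear verbatim in `transcript`.

    Hypothetical helper: a non-empty result means a human should look closely,
    because the AI may have attributed words to someone who never said them.
    """
    source = normalize(transcript)
    return [
        quote
        for quote in QUOTE_PATTERN.findall(generated)
        if normalize(quote) not in source
    ]


# Illustrative data only, not taken from the episode.
transcript = "Beth: I think the review step still matters."
caption = 'Beth says "the review step still matters" and "we interviewed the CEO".'
flagged = find_ungrounded_quotes(caption, transcript)
print(flagged)  # ['we interviewed the CEO']
```

Exact substring matching is deliberately strict: it produces false positives when a real quote is lightly reworded, which is the safer failure mode for a check whose only job is to route items to a human.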

"The scale problem is theoretical. The debugging hell is immediate."

The quote does not come from the Nano Banana discussion, but it captures the broader tension at play. Teams often optimize for theoretical future problems (like massive scale) while neglecting immediate, practical ones (like debugging complex systems). Likewise, the theoretical benefit of instant visuals can obscure the immediate work of ensuring accuracy and relevance. Prompts are crucial: as the hosts noted, even AI assistants like Claude and LLaMA don't always know about the latest models, so a prompt has to name the model explicitly. Using these tools well demands a level of technical specificity that belies their "effortless" appearance.

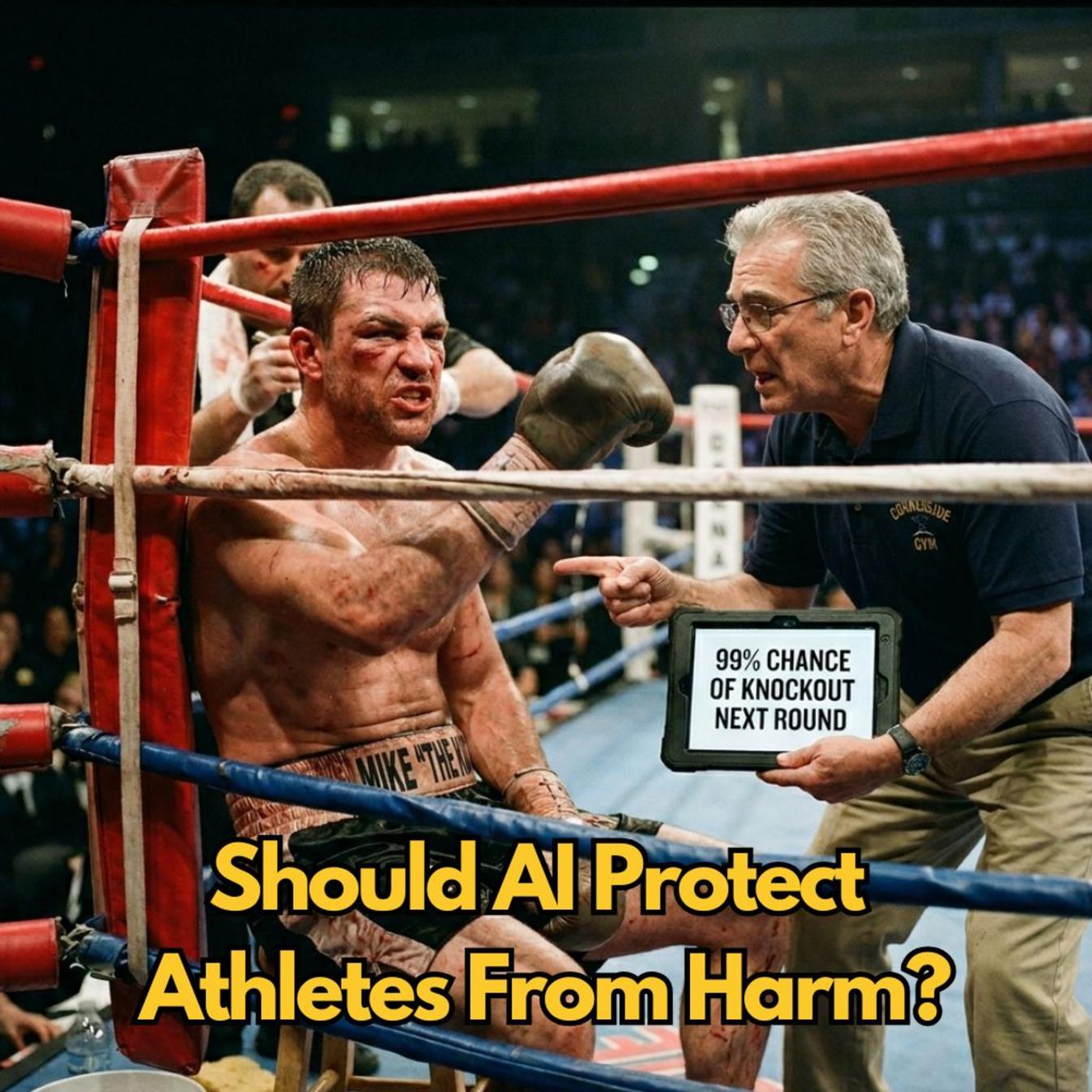

The idea of a "visual newsletter" or "video newsletter" illustrates the same dynamic. The potential for automation is large, but the hosts acknowledge the need for a human in the loop. Such a workflow would chain several tools together, which is technically feasible but requires careful orchestration to avoid the very errors and inefficiencies AI is supposed to eliminate. This is where patience pays off. A workflow that pairs AI generation with human review takes longer to build, but it yields more reliable, higher-impact content than a system that rushes to publish without adequate checks. Conventional wisdom may favor deploying the latest AI features immediately; followed through, that choice carries hidden costs in unverified content and potential reputational damage.
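The human-in-the-loop structure the hosts describe can be sketched as a review gate between generation and publishing. This is a minimal Python sketch under stated assumptions: `fake_generate` is a stand-in for whatever image-generation call a real newsletter workflow would make (no actual Gemini API is shown), and `reviewer` is a stand-in for a human decision collected from a review queue. All names (`Asset`, `Status`, `run_pipeline`) are hypothetical. The point is structural: every draft passes through review, and only explicitly approved items come out the other end.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable


class Status(Enum):
    DRAFT = "draft"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Asset:
    """One AI-generated item (e.g. a newsletter panel) and its review history. Hypothetical type."""
    prompt: str
    output: str
    status: Status = Status.DRAFT
    notes: list[str] = field(default_factory=list)


def run_pipeline(
    prompts: list[str],
    generate: Callable[[str], str],
    review: Callable[[Asset], tuple[bool, str]],
) -> list[Asset]:
    """Generate a draft per prompt, send every draft to review, and return only approved assets.

    Nothing reaches the returned (publishable) list without an explicit approval.
    """
    approved = []
    for prompt in prompts:
        asset = Asset(prompt=prompt, output=generate(prompt))
        ok, note = review(asset)
        asset.notes.append(note)
        asset.status = Status.APPROVED if ok else Status.REJECTED
        if ok:
            approved.append(asset)
    return approved


def fake_generate(prompt: str) -> str:
    # Hypothetical stand-in for a real image-generation call.
    return f"[image for: {prompt}]"


def reviewer(asset: Asset) -> tuple[bool, str]:
    # Hypothetical stand-in for a human decision; here it rejects anything mentioning an interview.
    ok = "interview" not in asset.prompt
    return ok, "looks accurate" if ok else "no such interview in transcript"


published = run_pipeline(["episode summary", "guest interview recap"], fake_generate, reviewer)
print([a.prompt for a in published])  # ['episode summary']
```

Passing `generate` and `review` in as functions keeps the gate independent of any particular model or review tool, so either side can be swapped without touching the rule that unreviewed drafts never publish.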

"The reality is messier. You're not just generating an image; you're generating a narrative, and that narrative needs to be grounded."

This paraphrase of the discussion's underlying theme highlights the difference between merely creating images and communicating effectively. The power of tools like Nano Banana 2 lies not only in conjuring visuals but in augmenting human creativity and storytelling. That augmentation works only if the AI's output is treated as a first draft, a starting point for human refinement. The risk is that the sheer volume and speed of AI-generated content will dilute quality and spread misinformation, which makes a deliberate, careful approach the truly disruptive strategy.

Key Action Items

- Immediate Action (This Week): Experiment with Nano Banana 2 (Gemini 3.1 Flash Image) or similar AI image generation tools using actual transcripts from your own content. Focus on understanding prompt effectiveness and identifying potential hallucinations.

- Immediate Action (This Week): Review your current content creation workflows. Identify points where AI could accelerate tasks (e.g., initial drafts, visual ideation) and where human oversight is critical for accuracy and nuance.

- Near-Term Investment (Next Quarter): Develop a standardized prompt library for AI image generation, including negative prompts (explicit instructions about what the output must not contain) to reduce invented or unwanted elements, along with specific instructions for style and accuracy.

- Near-Term Investment (Next Quarter): Establish a clear human review process for all AI-generated content: define who reviews, which criteria they apply, and how corrections are made.

- Longer-Term Investment (6-12 Months): Explore the integration of AI-powered content generation into more complex workflows, such as creating visual newsletters or automated video summaries, while prioritizing a human-in-the-loop for quality control.

- Strategic Consideration (Ongoing): Educate your team on the limitations and ethical considerations of AI-generated content, emphasizing the importance of intellectual honesty and the potential for AI to amplify existing biases or inaccuracies if not managed carefully.

- Strategic Consideration (12-18 Months): Evaluate the ROI of AI content generation on the quality, accuracy, and trustworthiness of the final output, not just on speed, since a reputation for reliability will be a significant competitive advantage.
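The prompt-library action item can start as something as simple as a set of reviewed templates that always carry the same style guidance and the same "do not include" list. The Python below is a minimal sketch under stated assumptions: the `PromptTemplate` structure, its field names, and the example entries are hypothetical, and the rendered text is plain prompt wording, not a parameter of any specific image-generation API. Negative instructions like these can reduce invented content but do not guarantee its absence, so the output still needs human review.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptTemplate:
    """A reusable, team-reviewed prompt entry. All field names are illustrative."""
    name: str
    instructions: str             # what to generate; {placeholders} are filled at render time
    style: str                    # house style guidance, kept consistent across uses
    avoid: tuple[str, ...] = ()   # "negative prompt": things the image must not contain

    def render(self, **values: str) -> str:
        """Fill placeholders and append style and exclusion lines as plain prompt text."""
        parts = [self.instructions.format(**values), f"Style: {self.style}"]
        if self.avoid:
            parts.append("Do not include: " + "; ".join(self.avoid))
        return "\n".join(parts)


# Hypothetical library entry for transcript-based visual summaries.
LIBRARY = {
    "episode_summary": PromptTemplate(
        name="episode_summary",
        instructions="Create a one-page visual summary of this transcript:\n{transcript}",
        style="clean infographic, brand colors, legible labels",
        avoid=(
            "quotes that are not in the transcript",
            "people or events not mentioned in the transcript",
            "logos of real companies",
        ),
    ),
}

prompt = LIBRARY["episode_summary"].render(transcript="Host: Today we cover model retirements.")
print(prompt)
```

Keeping templates frozen and versioned in one place means a fix to the exclusion list (for example, after a reviewer catches a new kind of hallucination) propagates to every future prompt instead of living in one person's chat history.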