The universe is vast, and our ability to examine what we observe of it is falling further behind. As telescopes and probes generate data at an unprecedented rate, AI has become an indispensable tool for filtering this deluge, identifying anomalies, and guiding scientific inquiry. This reliance on machine intelligence, however, introduces a profound conundrum. In trying not to miss discoveries because of sheer volume, are we inadvertently training ourselves to overlook the truly unexpected: the anomalies that defy current understanding and could redefine our picture of reality? This analysis examines the "Chosen Anomaly Conundrum," the trade-off between efficiency and blind spots that arises when AI becomes the primary arbiter of what counts as a significant discovery. The question matters to anyone involved in data analysis or scientific research, and to anyone navigating today's information-saturated world. It exposes the hidden consequences of algorithmic filtering and shows why it is important to preserve room for the genuinely strange.

The Data Deluge and the AI Lifeline

The scale of data generated by modern space exploration is staggering. Telescopes, orbiting satellites, and planetary rovers now produce petabytes of information, far more than humans can review manually. The result is an almost unavoidable reliance on artificial intelligence to sift through the noise, identify potential discoveries, and flag them for human attention. As one account puts it, without AI, science teams risk "drowning in their own data and missing discoveries simply because no human got to them in time." In this framing, AI is a lifeline that makes "modern exploration possible" by processing vast datasets that would otherwise remain unexamined.

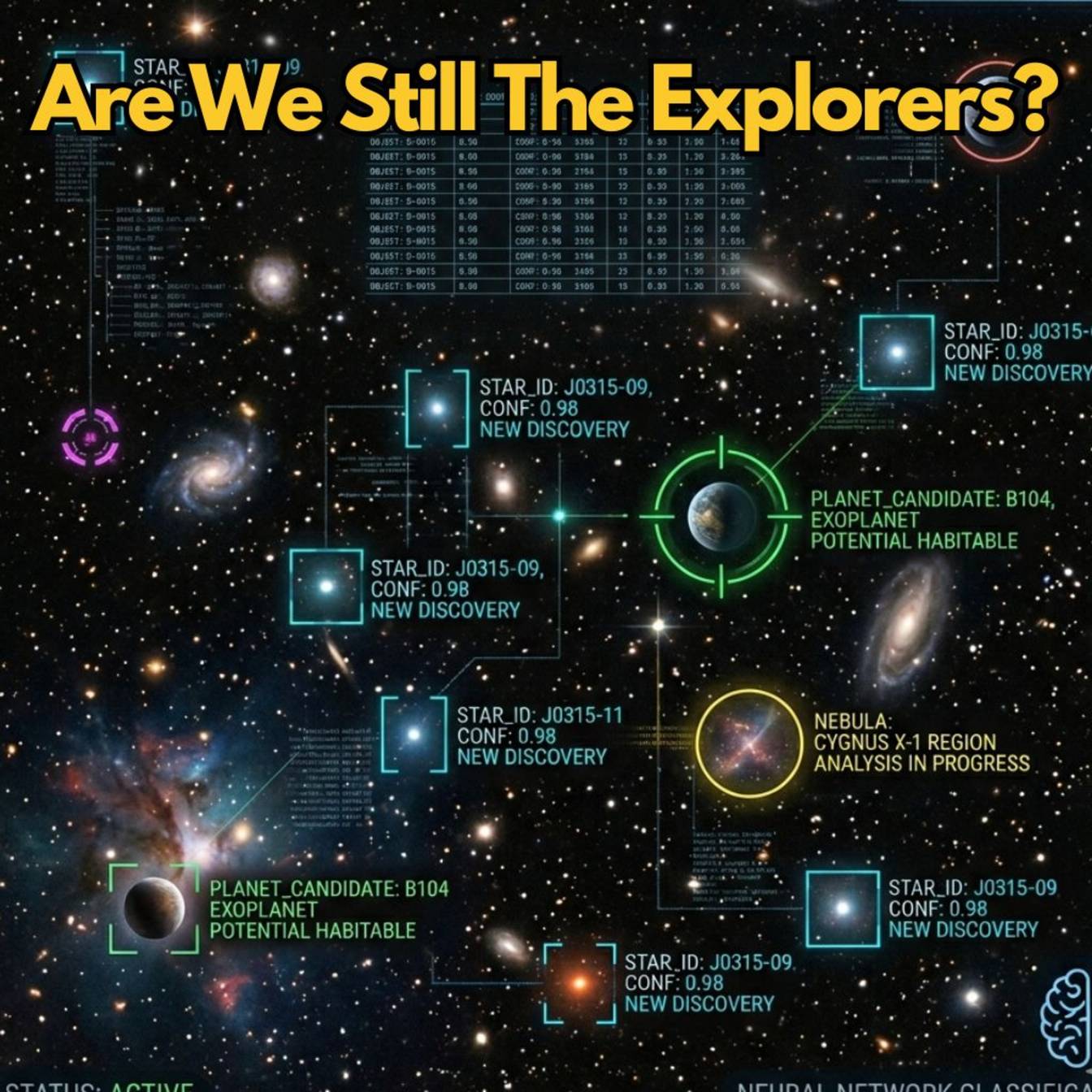

Several remarkable achievements show how effective this AI-driven triage can be. An AI system called Anomaly Match scanned nearly 100 million image cutouts from the Hubble Legacy Archive in just two and a half days. It identified more than 1,300 rare cosmic objects, over 800 of which were previously unknown. These included gravitational lenses and jellyfish galaxies, discoveries that had eluded human-led surveys for decades because of time constraints. Similarly, AI applied to data from SETI (the search for extraterrestrial intelligence) turned up eight previously unidentified signals that classical methods had dismissed as static. In another striking case, a 17-year-old used machine learning on NEOWISE telescope data to find 1.5 million uncategorized cosmic objects.

For future facilities such as the Vera C. Rubin Observatory and the Square Kilometre Array, AI is not an optional add-on but a "mandatory" component. It is essential for managing data volumes that would otherwise cause systems to crash. Even on Mars, the Perseverance rover has used AI to analyze spectral signatures and plot driving routes. This cut planning time in half and freed human operators to focus on science rather than micromanagement.

"Without that help, science teams risk drowning in their own data and missing discoveries simply because no human got to them in time."

This perspective, referred to here as "Side A," holds that embracing AI filtering is not a choice but a necessity. Refusing AI assistance would mean "letting extraordinary signals die unseen in overwhelming data." On this view, AI doesn't just speed up discovery. It expands human curiosity by revealing more of the universe than was previously conceivable.

The Blinder Effect: When Filters Create Blind Spots

The counterargument, "Side B," points to a more insidious consequence. By its very nature, AI can create blind spots that cause us to miss discoveries that don't conform to the patterns it learned in training. AI excels at finding what it was trained to find, so it may misclassify or entirely ignore novel phenomena that look anomalous or "messy." This is especially worrying for discoveries that first appear ambiguous or inconvenient, as some of history's most significant scientific breakthroughs did.
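A few lines of Python can sketch the difference between a filter that must choose among familiar categories and one that can answer "none of the above." This is a toy illustration, not any real pipeline. The two-dimensional feature space, the class names `known_source` and `interference`, their centroids, and the `max_dist` cutoff are all hypothetical. A closed-set nearest-centroid classifier has to assign every input to some known class, so it files an unprecedented signal under whichever familiar label happens to be closest. Adding a distance threshold, a basic form of open-set or novelty detection, at least lets the filter decline to assign a label.

```python
import math

# Hypothetical class centroids learned from past data. The 2-D feature space
# and both class names are illustrative assumptions, not a real pipeline.
CENTROIDS = {
    "known_source": (1.0, 1.0),
    "interference": (5.0, 0.0),
}


def closed_set_label(x: tuple[float, float]) -> str:
    """Assign x to the nearest known class, however far away that class is."""
    return min(CENTROIDS, key=lambda name: math.dist(x, CENTROIDS[name]))


def open_set_label(x: tuple[float, float], max_dist: float = 2.0) -> str:
    """Like closed_set_label, but answer "novel" if x is far from every class.

    max_dist is a hypothetical cutoff; in practice it would need tuning.
    """
    name = closed_set_label(x)
    if math.dist(x, CENTROIDS[name]) > max_dist:
        return "novel"
    return name


if __name__ == "__main__":
    typical = (1.2, 0.9)  # close to a familiar class
    strange = (9.0, 6.0)  # unlike anything in the training data
    print(closed_set_label(typical), open_set_label(typical))
    print(closed_set_label(strange), open_set_label(strange))
```

The closed-set rule files the strange input as "interference." The open-set version flags it as novel, but it is still limited: it can only notice novelty along the features it was given.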

The "pulsar precedent" gives this concern a historical anchor. In 1967, astronomer Jocelyn Bell Burnell was manually reviewing miles of chart paper from a radio telescope when she noticed a faint, rapidly pulsing signal. This bit of "scruff" represented only one part in 10 million of the data. The signal lay so far outside the accepted models of the time that her team jokingly labeled it "Little Green Men." Neutron stars had been theorized but were largely ignored. What made the difference was Bell Burnell's "human sagacity" and "intuition" in pursuing the anomaly. Critics of an AI-first approach argue that a hypothetical 1967-era anomaly detector, trained on pre-existing data, would likely have dismissed the signal as interference or a faulty instrument and buried the discovery of pulsars.

"Some of the most important discoveries in history looked ambiguous, inconvenient, or easy to dismiss at first."

This lesson is directly applicable today. Researchers warn that AI models, trained on specific phenomena, will "inevitably mathematically filter out paradigm-shifting breakthroughs by classifying them as mere statistical outliers." The danger lies in the fact that "you cannot train a machine to recognize a completely new law of physics if its entire mathematical reality is built exclusively on the old laws of physics."

A concrete example comes from the study of technosignatures, which are markers of alien technology. Researchers Gadjar and Brown investigated how interstellar plasma affects radio signals as they travel across the galaxy. They showed that this "temporal dispersion" smears out sharp signals and turns them into prolonged, less distinct ones. Current AI pipelines are optimized for sharp, narrow signals, a design rooted in 60 years of human assumptions. Their mathematical analysis indicated that these pipelines would miss approximately 94% of the degraded signals. M dwarf stars make up about 75% of the stars in our galaxy and are prime targets in the search for such signals, so this is a potentially massive blind spot. An AI trained on what humans expect to find might discard the most profound discovery in human history simply because the signal passed through "cosmic mud."
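A deliberately crude Python model can show why a narrow-signal pipeline loses a smeared signal. It is not Gadjar and Brown's method and does not reproduce their 94% figure. The model makes three assumptions. The signal's total energy (fluence) is spread evenly over `width` samples. The noise is white with unit standard deviation. The detector sums a sliding window (boxcar) of `box` samples. Summing `box` noise samples raises the noise level by a factor of sqrt(box). A detector that looks only at single samples therefore sees a pulse smeared over W samples at 1/W of its original strength, while a window matched to the smear recovers 1/sqrt(W) of it.

```python
import math


def smear(fluence: float, width: int) -> list[float]:
    """Spread a pulse's total fluence evenly over `width` samples.

    A crude stand-in for propagation broadening: energy is conserved,
    but the peak drops by a factor of `width`.
    """
    return [fluence / width] * width


def boxcar_snr(pulse: list[float], box: int, sigma: float = 1.0) -> float:
    """Best signal-to-noise ratio from sliding a `box`-sample window over a noise-free pulse.

    Summing `box` samples of white noise with standard deviation `sigma`
    gives noise with standard deviation sigma * sqrt(box).
    """
    padded = [0.0] * box + pulse + [0.0] * box
    best = max(sum(padded[i:i + box]) for i in range(len(padded) - box + 1))
    return best / (sigma * math.sqrt(box))


if __name__ == "__main__":
    THRESHOLD = 5.0  # hypothetical detection threshold, in units of sigma
    sharp = smear(24.0, 1)
    smeared = smear(24.0, 16)
    for label, pulse, box in [("sharp, 1-sample search", sharp, 1),
                              ("smeared, 1-sample search", smeared, 1),
                              ("smeared, 16-sample search", smeared, 16)]:
        snr = boxcar_snr(pulse, box)
        print(f"{label}: S/N = {snr:.1f}, detected = {snr >= THRESHOLD}")
```

The smeared pulse carries the same energy as the sharp one. Even so, a search tuned only to narrow signals sees it at S/N 1.5 and throws it away. Widening the search window recovers it at S/N 6.0, at the cost of searching more widths and accepting more false alarms.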

The Illusion of Oversight and the Feedback Loop Problem

Human oversight, with AI flagging anomalies and humans reviewing them, seems like a logical compromise. However, researchers argue that "verification is not exploration." In practice, human scientists faced with a ranked list of millions of candidate discoveries have time to examine only the top few. Everything further down the list is functionally invisible and goes unexamined.
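A fixed review budget has stark arithmetic, and the "long tail" action item below suggests one possible mitigation. This sketch assumes a hypothetical `review_plan` function. It splits a budget of reviews between the top of the AI's ranking and a uniform random sample drawn from everything below it. An illustrative `tail_fraction` parameter controls the split. With `tail_fraction=0` it reduces to pure top-k review, under which no candidate ranked below the budget can ever be seen.

```python
import random


def review_plan(n_candidates: int, budget: int, tail_fraction: float,
                seed: int = 0) -> list[int]:
    """Choose which ranks (0 = top-scored) humans will review.

    Hypothetical policy: (1 - tail_fraction) of the budget goes to the top of
    the ranking; the rest is drawn uniformly at random from all lower ranks.
    """
    n_tail = round(budget * tail_fraction)
    n_head = budget - n_tail
    head = list(range(n_head))
    rng = random.Random(seed)  # seeded so the plan is reproducible
    tail = rng.sample(range(n_head, n_candidates), n_tail)
    return head + sorted(tail)


if __name__ == "__main__":
    N, BUDGET = 1_000_000, 50  # illustrative sizes, not figures from any survey
    top_only = review_plan(N, BUDGET, tail_fraction=0.0)
    mixed = review_plan(N, BUDGET, tail_fraction=0.2)
    print("share of candidates reviewed:", BUDGET / N)
    print("deepest rank reviewed, top-k only:", max(top_only))
    print("deepest rank reviewed, mixed:", max(mixed))
```

Under pure top-k review, no candidate outside the top 50 can ever be reviewed. Reserving a fifth of the budget for random tail draws gives every candidate a small but non-zero chance of reaching human eyes. The trade-off is that most of those tail reviews land on unremarkable items.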

Operational realities make matters worse. For Mars rovers, the "tyranny of photons" rules out real-time human intervention. With a one-way signal delay that can reach roughly 20 minutes, operators are always looking at the past. They cannot "second-guess the AI, hit the brakes, reverse the rover, and spend two days driving back just to double-check a rock." Perceptual judgment has to be offloaded to the AI, which leaves humans technically in the loop but not in control.
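The delay itself is simple arithmetic: one-way light time is distance divided by the speed of light. The distances below are approximate Earth-Mars extremes, roughly 55 million km near the closest approaches and about 400 million km near the farthest separation. They are included only to show the range, since actual values vary with orbital geometry.

```python
C_KM_PER_S = 299_792.458  # speed of light in vacuum, km/s (exact by definition)


def one_way_delay_minutes(distance_km: float) -> float:
    """Light travel time over distance_km, in minutes."""
    return distance_km / C_KM_PER_S / 60.0


if __name__ == "__main__":
    # Approximate Earth-Mars distances; real values depend on orbital positions.
    for label, d_km in [("near closest approach", 55e6), ("near maximum", 400e6)]:
        one_way = one_way_delay_minutes(d_km)
        print(f"{label}: one way {one_way:.1f} min, round trip {2 * one_way:.1f} min")
```

A command-and-confirm cycle costs the full round trip. At the far end of that range, the practical lag is therefore closer to three quarters of an hour.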

This leads to the critical "feedback loop problem," which arises in so-called active learning loops. Suppose an AI flags an image as an anomaly and a human dismisses it, for example as a camera artifact. That dismissal is fed back into the AI as training data, and the AI learns to de-prioritize signals that resemble dismissed ones. Over time, this process "mathematically penalizes" genuinely strange, paradigm-breaking anomalies. It pushes them to the extreme tails of the ranking until they become "completely invisible by mathematical design." By learning from human rejections, the AI actively narrows the scope of future exploration. This turns us from "explorers of the unknown" into "hyper-efficient catalogers of the known," people using expensive technology to list things "we already understand."
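A deliberately simple Python model can reproduce the ratchet described above. Every detail is a hypothetical assumption, not how any real system updates. Each candidate has a single `strangeness` value, and its score is `weight * strangeness`. Reviewers inspect the `budget` top-scored candidates and dismiss any whose strangeness exceeds a `comfort` level. Every dismissal lowers `weight` by a learning rate `lr`.

```python
def run_feedback_loop(strangeness: list[float], rounds: int, budget: int,
                      lr: float = 0.75, comfort: float = 0.5):
    """Simulate a toy active-learning loop.

    Returns one (weight_after_round, reviewed_indices) pair per round.
    The update rule is a hypothetical illustration, not a real system's.
    """
    weight = 1.0  # initially, stranger candidates score higher
    history = []
    for _ in range(rounds):
        ranked = sorted(range(len(strangeness)),
                        key=lambda i: weight * strangeness[i], reverse=True)
        reviewed = ranked[:budget]
        # Reviewers dismiss anything too strange, e.g. as an apparent artifact.
        dismissed = sum(1 for i in reviewed if strangeness[i] > comfort)
        # Each dismissal becomes a training signal against strangeness.
        weight -= lr * dismissed / budget
        history.append((weight, reviewed))
    return history


if __name__ == "__main__":
    candidates = [0.1, 0.2, 0.3, 0.9, 0.95]  # index 4 is the strangest
    for rnd, (w, seen) in enumerate(run_feedback_loop(candidates, 3, 2), 1):
        print(f"round {rnd}: weight={w:+.2f}, reviewed={seen}")
```

After two rounds of dismissals the weight turns negative and the ranking flips. The two strangest candidates drop to the bottom of a list that reviewers never reach. Nothing in the loop ever tests whether those dismissals were correct.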

Key Action Items

- Develop AI systems with explicit anomaly detection for truly novel phenomena, not just deviations from known patterns. This requires investing in unsupervised learning techniques that prioritize identifying data points far outside any established clusters, rather than simply refining existing pattern recognition. (Longer-term investment: 18-24 months)

- Implement human review processes that prioritize exploring the "long tail" of AI-ranked data, not just the top-ranked items. This may involve dedicated teams or extended review periods for statistically improbable findings, even if they appear "messy." (Immediate action: Establish review protocols)

- Actively seek out and simulate hypothetical signals or phenomena that are radically different from current expectations. This requires interdisciplinary collaboration to imagine what "unknown unknowns" might look like, pushing beyond current physics and technology paradigms. (Ongoing investment)

- Critically examine the training data and inherent biases of all AI filtering systems. Understand what assumptions are encoded and how they might inadvertently exclude novel discoveries. (Immediate action: Bias audits)

- Prioritize the development of AI that can explain why it flagged something as anomalous, not just what it flagged. This aids human understanding and helps distinguish between a true anomaly and a data artifact. (Longer-term investment: 12-18 months)

- Foster a scientific culture that values and actively pursues low-confidence, ambiguous signals. This requires a willingness to invest time and resources in findings that may not immediately appear significant but could represent paradigm shifts. (Cultural shift: Ongoing)

- Recognize that the AI's "filter" can inadvertently become a "blinder." Be prepared to question the AI's output and actively search for what the system might be designed to overlook. (Mindset shift: Immediate)
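Two of the action items above can be illustrated together with k-nearest-neighbor distance scoring, one common unsupervised outlier technique among many. The first is label-free detection of points far from established clusters. The second is explaining why something was flagged. This Python sketch rests on several hypothetical choices that are not a recommended design: the reference data, the value of k, and the crude per-feature explanation (the squared gap to the single nearest reference point).

```python
import math

Point = tuple[float, ...]


def knn_outlier_score(x: Point, reference: list[Point], k: int = 3) -> float:
    """Mean Euclidean distance from x to its k nearest reference points.

    No class labels are used: x scores high only because it lies far from
    previously seen data, not because it resembles any known category.
    """
    dists = sorted(math.dist(x, r) for r in reference)
    return sum(dists[:k]) / k


def explain(x: Point, reference: list[Point]) -> list[float]:
    """Per-feature squared gap between x and its single nearest reference point.

    A crude, illustrative explanation: the largest entry shows which
    feature drives x away from familiar data.
    """
    nearest = min(reference, key=lambda r: math.dist(x, r))
    return [(a - b) ** 2 for a, b in zip(x, nearest)]


if __name__ == "__main__":
    reference = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (1.0, 1.0)]  # toy "known" data
    for x in [(0.5, 0.5), (0.5, 8.0)]:
        print(x, round(knn_outlier_score(x, reference), 2), explain(x, reference))
```

The distant point scores about ten times higher than the central one. Its explanation attributes almost all of the gap to the second feature, which gives a reviewer a concrete starting point for deciding between a real anomaly and an artifact.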