AI Agents: Efficiency Gains Versus Cognitive Surrender Risks

The AI agent landscape is rapidly evolving, moving beyond simple chatbots to sophisticated tools capable of interacting with the web and managing complex tasks. This conversation reveals a critical, often overlooked, downstream consequence: the potential for "cognitive surrender," where over-reliance on AI may erode human judgment. For product managers, engineers, and strategists building or integrating AI, understanding these hidden costs is paramount. This analysis highlights how foundational standards like Google's WebMCP and Cloudflare's Markdown for Agents are streamlining AI-web interaction, but also underscores the subtle, long-term risks of offloading our thinking processes. Those who grasp these dual implications--the immediate efficiency gains and the potential erosion of human discernment--will be better positioned to build robust, human-centric AI systems.

The Unseen Cost of Seamless Web Interaction: Beyond Brittle Browsing

The push for AI agents to interact with the web is accelerating, driven by innovations like Google's WebMCP and Cloudflare's Markdown for Agents. These standards aim to move beyond the clunky, pixel-level analysis of early browser agents by offering structured, high-level interfaces through which an agent can execute tasks such as checking out, filtering results, or creating tickets. The promise is that agents navigate websites with significantly higher accuracy and lower computational overhead, with reductions of roughly 70% cited for WebMCP in the conversation. Shifting from raw HTML or pixels to structured data such as JSON schemas and Markdown changes how agents interact with the web, making that interaction more efficient and less brittle.
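To make the contrast with pixel-level browsing concrete, here is a minimal sketch of what a "structured action" might look like from the site's side. It is not the WebMCP API: the `Action` class, the `filter_results` handler, and the toy catalogue are all hypothetical names chosen for illustration. The only idea it demonstrates is that the site declares an action with typed parameters and the agent calls it directly, instead of guessing at buttons on a rendered page.

```python
from dataclasses import dataclass
from typing import Any, Callable

# Hypothetical names throughout: this is NOT the WebMCP API, only an
# illustration of a declared action that an agent could call directly.


@dataclass
class Action:
    name: str
    description: str
    params: dict[str, type]  # parameter name -> expected Python type
    handler: Callable[..., Any]

    def call(self, **kwargs: Any) -> Any:
        # Reject unknown or missing parameters before touching site logic.
        missing = set(self.params) - set(kwargs)
        extra = set(kwargs) - set(self.params)
        if missing or extra:
            raise ValueError(
                f"bad arguments: missing={sorted(missing)} extra={sorted(extra)}"
            )
        for key, expected in self.params.items():
            if not isinstance(kwargs[key], expected):
                raise TypeError(f"{key} must be {expected.__name__}")
        return self.handler(**kwargs)


def filter_results(category: str, max_price: float) -> list[str]:
    # Toy catalogue standing in for a site's real search backend.
    catalogue = [("lamp", "home", 30.0), ("desk", "home", 120.0), ("pen", "office", 2.0)]
    return [name for name, cat, price in catalogue if cat == category and price <= max_price]


FILTER = Action(
    name="filter_results",
    description="Return product names in a category at or below a price.",
    params={"category": str, "max_price": float},
    handler=filter_results,
)

print(FILTER.call(category="home", max_price=50.0))  # ['lamp']
```

Because the parameters are declared up front, a malformed call fails loudly with a `ValueError` or `TypeError` rather than clicking the wrong element, which is one plausible reason structured interfaces are less brittle.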

However, this drive for seamless integration and efficiency introduces a subtle yet significant second-order effect: the potential for cognitive surrender. As Beth Lyons points out, "we're training AI at this point to be very good at this deliberative reasoning." Technologies like Open Claw, which aims to create autonomous agent systems, amplify the effect. Open Claw's creator joining OpenAI signals a major step toward bringing such agents into consumer-facing products, where they could manage calendars, handle tasks, and potentially replace many existing apps. It also raises the question of where human judgment fits in. The conversation touches on the memory conundrum with persistent agents like My Claw AI, where extensive memory can become prohibitively expensive because of token usage. That is the immediate trade-off in agent design. The deeper implication is that the act of offloading tasks to agents, whatever the cost, reduces the reasoning we practice ourselves and may, over time, weaken our reasoning capabilities.

"People increasingly consult generative AI while reasoning as AI becomes embedded in the daily thought process. What happens to human judgment? To your point. Then we introduce tri-system theory, extending dual accounts, dual process accounts of reasoning, that's system one and system two, by positing system three, artificial cognition that operates outside the brain. System three can supplement or supplant internal processes, introducing novel cognitive pathways."

-- Wharton School Researchers (as discussed on The Daily AI Show)

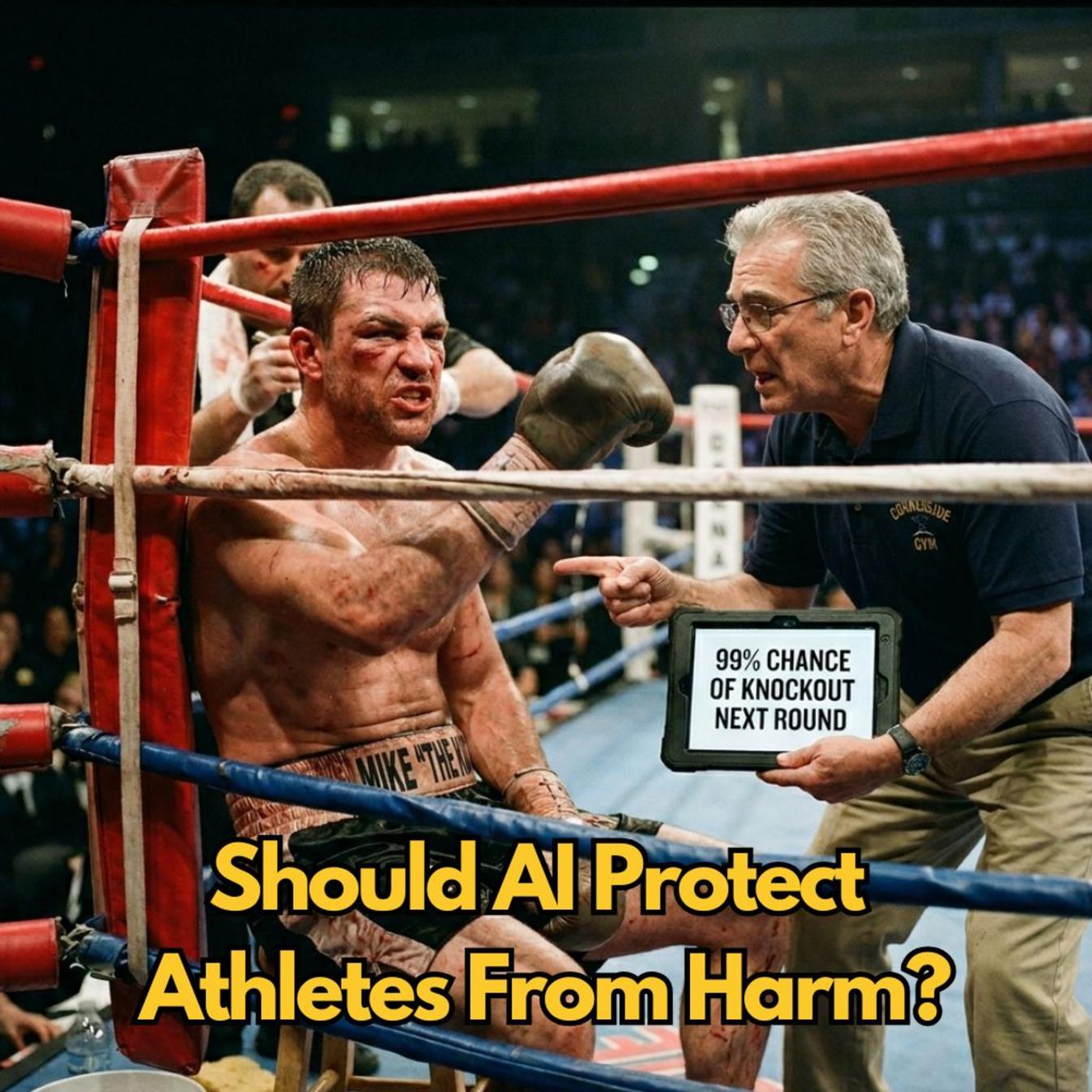

This "System Three" of artificial cognition, operating outside the brain, can "supplement or supplant internal processes," as the Wharton researchers note. The risk lies in the "supplant" aspect, leading to cognitive surrender. While AI can free us from mundane tasks and potentially allow for deeper thinking on complex issues, the danger is that we may stop engaging in the deliberative reasoning that AI is now performing for us. Brian Maucere draws a parallel to video games and early mobile phones, questioning if offloading tasks like memorizing phone numbers makes us "dumber." The consensus is that it's too early to tell, with Beth Lyons suggesting that perhaps this frees up cognitive resources for more complex problems. Yet, the underlying concern remains: if AI becomes too good at reasoning and decision-making, will we lose the muscle memory of critical thinking?

The Agent Ecosystem: Infrastructure, Integration, and Incentives

The conversation delves into the burgeoning infrastructure supporting AI agents, highlighting the significance of Open Claw's open-source approach and its creator's move to OpenAI. This integration suggests a future where AI assistants can perform complex, multi-step actions, potentially replacing numerous apps. The discussion around My Claw AI, a standalone cloud-based version of Open Claw, brings the immediate practicalities into focus: the high token costs associated with persistent memory. This isn't just a technical hurdle; it’s an economic one that shapes how agents are designed and deployed. The immediate payoff of an agent that can remember everything is offset by the ongoing cost, forcing developers to find a balance between utility and affordability.
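The memory trade-off can be made concrete with back-of-the-envelope arithmetic. The sketch below assumes the simplest possible design, in which the agent's whole memory is re-sent as input tokens on every turn with no caching or summarization. The token counts, turn rate, and price per million tokens are hypothetical placeholders, not figures from the conversation or from any provider's price list.

```python
def monthly_memory_cost(
    memory_tokens: int,
    turns_per_day: int,
    usd_per_million_input_tokens: float,
    days: int = 30,
) -> float:
    """Cost of re-sending a fixed memory context on every turn (no caching)."""
    tokens_per_month = memory_tokens * turns_per_day * days
    return tokens_per_month / 1_000_000 * usd_per_million_input_tokens


# Hypothetical inputs: 200 turns a day at $3 per million input tokens.
full = monthly_memory_cost(50_000, 200, 3.0)
trimmed = monthly_memory_cost(5_000, 200, 3.0)
print(f"full memory: ${full:.2f}/month, trimmed: ${trimmed:.2f}/month")
# full memory: $900.00/month, trimmed: $90.00/month
```

Under these assumptions cost scales linearly with memory size, so deciding what the agent is allowed to forget is as much a budgeting decision as a design one.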

Beyond individual agent capabilities, standards like WebMCP and Cloudflare's Markdown for Agents are crucial for building a robust agent ecosystem. By providing high-level action interfaces, WebMCP lets websites expose their functionality directly to agents, bypassing brittle, pixel-based navigation. The conversation cites accuracy of up to 98% for tasks like checkouts under this structured approach, where pixel-driven browser agents are more likely to falter. Cloudflare's Markdown for Agents addresses the computational overhead of processing web content, reducing token usage by up to 80% by converting HTML to a more AI-friendly format. These developments are not just about making AI agents more capable; they are about creating a more efficient and scalable web for AI interaction.
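Why converting HTML to Markdown saves tokens is easy to see on a toy page. The sketch below is not Cloudflare's converter: `TextExtractor` is a hypothetical, deliberately tiny stand-in built on Python's standard `html.parser`, and `estimate_tokens` uses a crude four-characters-per-token heuristic that real tokenizers will not match exactly. It only illustrates that markup, styles, and scripts make up much of what an agent would otherwise have to read.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Keeps visible text, marks headings and list items, drops script/style."""

    SKIP = {"script", "style"}
    PREFIX = {"h1": "# ", "h2": "## ", "li": "- "}

    def __init__(self) -> None:
        super().__init__()
        self.lines: list[str] = []
        self._skip_depth = 0
        self._prefix = ""

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1
        elif tag in self.PREFIX:
            self._prefix = self.PREFIX[tag]

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and not self._skip_depth:
            self.lines.append(self._prefix + text)
            self._prefix = ""


def estimate_tokens(text: str) -> int:
    # Crude heuristic (about 4 characters per token); real tokenizers differ.
    return max(1, len(text) // 4)


HTML = """<html><head><style>.card{margin:0;padding:12px;border:1px solid #ccc}</style>
<script>window.analytics=window.analytics||[];</script></head>
<body><div class="card"><h1 class="title">Blue Lamp</h1>
<ul class="specs"><li class="spec">Price: $30</li><li class="spec">In stock</li></ul>
</div></body></html>"""

parser = TextExtractor()
parser.feed(HTML)
markdown = "\n".join(parser.lines)

print(markdown)
saving = 1 - estimate_tokens(markdown) / estimate_tokens(HTML)
print(f"estimated token reduction: {saving:.0%}")
```

The exact percentage depends entirely on the page and the tokenizer, so the number printed here should not be read as evidence for or against the 80% figure.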

"Well, now what we're seeing is websites, whether us as humans see it on the front end or not, is sort of irrelevant. What we want is this optimization for the agents."

-- Andy Halliday (as discussed on The Daily AI Show)

This shift in focus--optimizing websites for agents rather than solely for human users--is a profound systemic change. It implies that the "user experience" for AI will become as important as the human user experience, if not more so. This has implications for how websites are designed, how data is structured, and how functionality is exposed. The discussion also touches on AI-to-AI communication, hinting at a future where agents interact directly with each other, potentially in ways that are opaque to humans, which further complicates control and oversight. The efficiency gains for AI could therefore come at the cost of human transparency and understanding.

The Human-in-the-Loop Dilemma: Efficiency vs. Control

A recurring tension throughout the conversation is the balance between AI efficiency and human control, particularly in the context of autonomous agents and the potential for cognitive surrender. The adoption of AI in critical fields like medicine, where 40% of doctors are reportedly using AI services, shows how much reliance on these tools is growing. That reliance raises hard questions about accountability when errors occur. As Beth Lyons asks, "who's to blame?" when an AI makes a mistake that the human user could not have foreseen. The legal and ethical ramifications are vast and point to future courtroom battles over fault.

The desire for extreme efficiency, exemplified by AI agents that never sleep and can operate at orders of magnitude beyond human capacity, presents a trade-off. While businesses might benefit from such efficiency, it can lead to a loss of human oversight. Andy Halliday poses a critical question: "where's the trade-off here on that?" He points out that as AI operates in ever more sophisticated ways, potentially using non-human-readable protocols, the human-in-the-loop becomes harder to maintain. This is particularly relevant for small businesses or independent operators who might leverage tools like Open Claw or My Claw AI for growth, but could inadvertently lose day-to-day control over their operations.

"But the business owner goes, yeah, but there's people coming in my door than ever before, and I'm trying to keep the lights on and keep my business up. You know, there's going to be a lot of these like instances and conversations. And I don't know what they're going to be, but like they're coming, you know, like these, these conversations are coming."

-- Andy Halliday (as discussed on The Daily AI Show)

The challenge lies in defining where human intervention is necessary and beneficial, versus where it becomes a bottleneck to AI efficiency. Beth Lyons describes the "AI fatigue" that can set in, where the constant availability of AI tools leads to longer working hours and exhaustion, even when the AI is doing the heavy lifting. The desire to keep AI moving, by tethering it to personal devices, can lead to a state of being "chained to Claude Code," effectively eliminating natural downtime. This highlights that while AI offers the promise of enhanced productivity, it also demands a conscious effort from humans to manage their own engagement and avoid burnout, and critically, to maintain their own judgment and decision-making faculties.
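One way to think about "where human intervention is necessary" is as an explicit gate in the agent's execution path. The sketch below is a hypothetical illustration rather than a framework from the conversation: `Risk`, `ProposedAction`, and `run_with_oversight` are invented names, and the two-level risk scale is a simplifying assumption. Low-risk actions run automatically, high-risk actions wait for a human decision, and every outcome is written to an audit log so that responsibility can be traced afterwards.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Risk(Enum):
    LOW = 1
    HIGH = 2


@dataclass
class ProposedAction:
    description: str
    risk: Risk


def run_with_oversight(
    action: ProposedAction,
    approve: Callable[[ProposedAction], bool],
    log: list[str],
) -> bool:
    """Execute low-risk actions directly; ask a human before high-risk ones."""
    if action.risk is Risk.HIGH and not approve(action):
        log.append(f"BLOCKED by reviewer: {action.description}")
        return False
    who = "human-approved" if action.risk is Risk.HIGH else "auto"
    log.append(f"EXECUTED ({who}): {action.description}")
    return True


def always_deny(action: ProposedAction) -> bool:
    # Stand-in for a real review step (a prompt, a ticket, a sign-off).
    return False


audit: list[str] = []
run_with_oversight(ProposedAction("reschedule a meeting", Risk.LOW), always_deny, audit)
run_with_oversight(ProposedAction("refund $2,000 to a customer", Risk.HIGH), always_deny, audit)
print("\n".join(audit))
# EXECUTED (auto): reschedule a meeting
# BLOCKED by reviewer: refund $2,000 to a customer
```

The design choice worth noticing is that the human is consulted only where the risk label says so, which keeps the reviewer from becoming a bottleneck on routine work while leaving the consequential decisions with a person.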

Key Action Items

Immediate Action (Next 1-3 Months):

- Experiment with WebMCP and Markdown for Agents: For developers, actively explore and integrate Google's WebMCP and Cloudflare's Markdown for Agents into web applications to understand their capabilities and efficiency gains firsthand.

- Evaluate Persistent Agent Costs: For teams building or deploying agents with persistent memory (like Open Claw or My Claw AI), conduct a thorough cost-benefit analysis of token usage versus the utility of memory.

- Identify "Cognitive Surrender" Risks: In your own workflows and team processes, identify tasks that are being increasingly offloaded to AI and critically assess whether this offloading is diminishing essential human judgment or reasoning skills.

Short-Term Investment (Next 3-6 Months):

- Develop Agent-Centric Website Optimization Strategies: Begin to consider how websites and applications can be optimized for AI agent interaction, not just human users, focusing on structured data and clear action interfaces.

- Pilot Human-in-the-Loop Frameworks: For AI-driven processes, design and pilot frameworks that clearly define human oversight points, ensuring that critical decisions still involve human judgment, especially in high-stakes domains.

Long-Term Investment (6-18 Months and Beyond):

- Invest in AI Literacy and Critical Thinking Training: For organizations, proactively invest in training programs that not only teach how to use AI tools but also emphasize critical thinking, discernment, and the potential pitfalls of cognitive surrender. This pays off in the long run by maintaining a skilled and discerning workforce.

- Advocate for Transparent AI Interaction Standards: Support and contribute to the development of standards and protocols that ensure transparency in AI agent operations, making it easier for humans to understand and oversee AI actions, even as they become more complex.

- Establish Clear Accountability Frameworks: Proactively develop internal policies and frameworks for accountability when AI agents make errors, especially in sensitive areas like healthcare or finance, anticipating future legal and ethical challenges.