2026: Enterprise AI Evolves to Scalable Agentic Collaboration

The year 2026 is poised to be the inflection point at which artificial intelligence shifts from a sophisticated tool to a genuine workhorse, quietly taking on substantial tasks within enterprises. This shift, explored in a conversation with Mike Krieger, CPO of Anthropic, moves beyond simple chatbots and coding assistants toward agents that can reliably execute complex, multi-step processes. The less obvious consequence is a redefinition of productivity and new competitive advantages for organizations that embrace this agentic future. This analysis is aimed at product leaders, strategists, and anyone invested in the practical, large-scale adoption of AI, and it offers a roadmap for harnessing capabilities that are maturing quickly.

The Quiet Revolution: How AI Agents Will Reclaim Your Workload

The prevailing narrative around AI in 2025 has been the rise of agents and "vibe coding"--a more intuitive, less prescriptive way of interacting with AI. Looking ahead to 2026, Krieger suggests a significant leap: AI is set to move from assisting with tasks to reliably taking on entire workloads. This isn't just about generating code snippets or drafting emails; it's about AI agents that understand complex, bespoke enterprise processes and execute them with enough autonomy to change how work gets done.

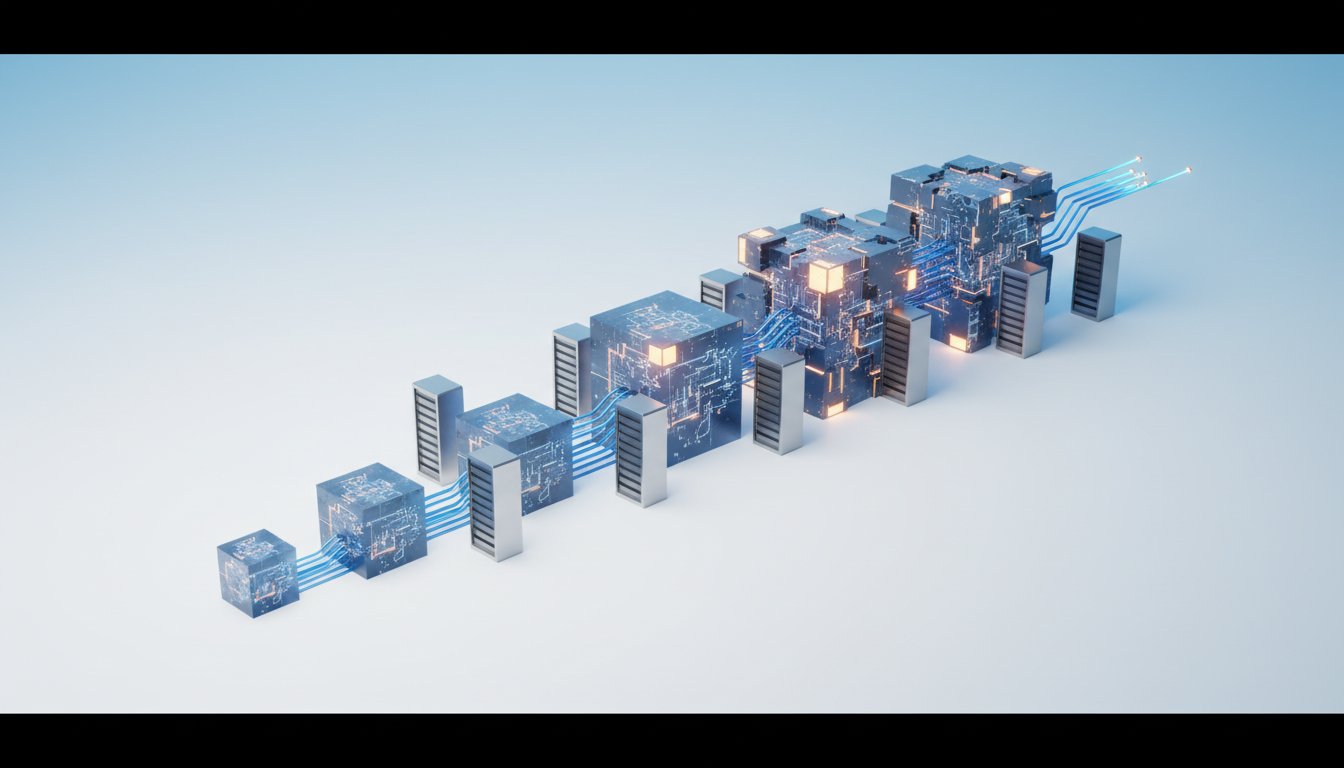

The genesis of this shift, as Krieger explains, lies in Anthropic's foundational belief in the power of agentic reasoning and code execution. That belief predates his joining the company and fits a long-term vision of AI that can operate over extended horizons and handle more nuanced problem-solving. Public awareness of this potential accelerated with the release of Claude 3, which demonstrated a remarkable, albeit initially unrefined, ability to write code. This capability, paired with early product experiments like Artifacts and later Claude Code, signaled a new era of human-AI collaboration in which models could generate entire files of code, not just fragments.

"There's definitely this belief that, you know, for very powerful AI you need the ability of the model to sort of reason about things, to plan agentically, and work for a long-term horizon, but also to be able to write and run code, not only to produce software but because it's a really useful tool for solving problems."

-- Mike Krieger

The evolution of Claude Code itself illustrates this trajectory. What began as an internal tool, Claude CLI, rapidly surpassed other internal coding tools. This was driven by a bet on future model capabilities: allowing the AI to "cook for longer" and operate on "fuzzier task definitions" over extended periods. This proactive design for capabilities that didn't fully exist yet, a core product principle at Anthropic known as "writing the exponential," allows products to improve naturally as underlying models advance. Krieger notes that parts of the "harness" around Claude Code have actually been deleted over time, not added, because the model has become sophisticated enough to handle tasks that previously required extensive scaffolding. This demonstrates a powerful feedback loop where product design anticipates future AI prowess, leading to more streamlined and capable systems.

The Unforeseen Applications: Beyond the Developer Box

The surprise, and a key insight for 2025, was the breadth of Claude Code's adoption. While designed with software engineers in mind, it quickly became apparent that its underlying engine was valuable for a far wider range of applications. Internal hackathons revealed projects using Claude Code for bioinformatics, acting as an "SRE in a box," and serving as a data scientist. This led to the rebranding of the underlying SDK to the "Claude Agent SDK," acknowledging that calling it merely "code" was a disservice to its emergent capabilities.

This expansion highlights a critical dynamic: the gap between what AI can do and how quickly people adopt it. Krieger identifies a persona within organizations--the "tinkerer" or "early adopter"--who, despite lacking a formal engineering background, learns the primitives and figures out how to use AI to automate or enrich their work. However, this still requires a certain technical fluency or a willingness to learn specific "magic incantation words" to interact effectively. The challenge for AI product development, particularly in the middle ground between highly technical users and complete novices, is to help non-technical individuals climb the complexity ladder more gracefully. This means guiding them through stages, from a simple "vibe coded" front-end idea to persisting data, ensuring security, and eventually handling performance under load--all with AI assistance.

The enterprise landscape presents its own challenges, often stemming from the gap between idealized AI deployment scenarios and the reality of legacy systems and regulatory constraints. For enterprises, the promise of AI often hits a plateau because of governance, data readiness, or process mapping. An MIT report on AI adoption resonated, despite questions about its methodology, because it pointed to a real phenomenon: many employees did not feel more productive after AI tools were rolled out. Often the AI output was only "half-done," leaving the user to fix or iterate on it. Anthropic's focus has therefore shifted from purely agentic capabilities (like generating a full PowerPoint from a two-sentence description) to making the initial output good enough to provide genuine relief and time savings rather than extra work.

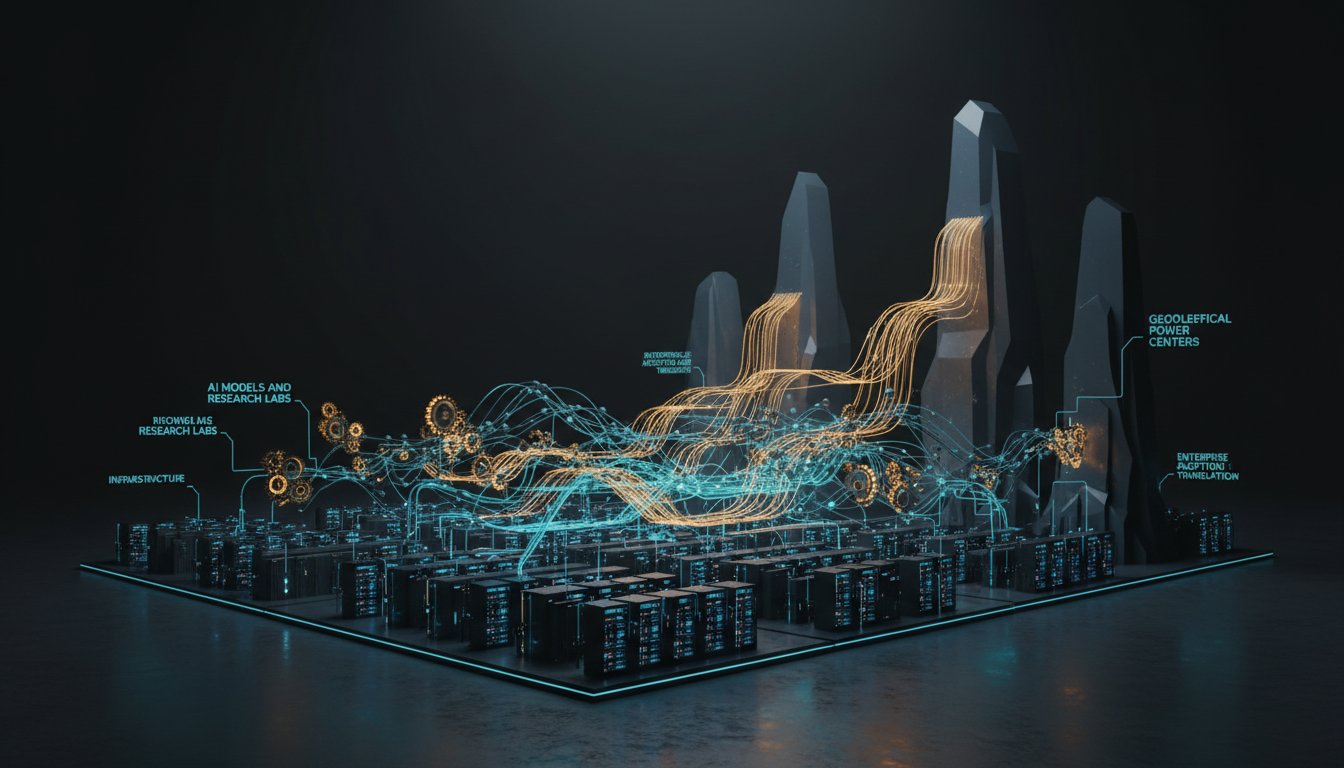

The 2026 Landscape: From Co-Pilot to Workhorse

As enterprises look toward 2026, two key shifts are emerging. First, the kind of work they want to hand to AI is changing. Interest continues to grow in "horizontal agents"--companion or co-pilot agents with a strong human-in-the-loop component for co-creating documents, emails, and other tasks. More significantly, there is a surge of interest in having AI handle "repetitive back-office tasks" and complex, repeatable processes unique to an enterprise, such as scaling international Know Your Customer (KYC) requests. This requires translating bespoke enterprise requirements into processes that AI can execute flexibly yet repeatably while adhering to operating procedures. It is a marked departure from the conversations of just a year earlier.
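One way to picture "flexible yet repeatable" execution is as an ordered procedure in which every step is followed by a rule check, and a failed check hands the case to a person. The Python sketch below is illustrative only: `Step`, `run_procedure`, and the two KYC steps are hypothetical names, not taken from the conversation or from any SDK, and the lambdas stand in for work an agent would actually do.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Step:
    name: str
    run: Callable[[dict], dict]    # does the work, returns fields to merge into the case
    check: Callable[[dict], bool]  # operating-procedure rule that must hold afterwards


@dataclass
class RunResult:
    completed: List[str] = field(default_factory=list)
    escalated_at: Optional[str] = None  # step name where a human must take over


def run_procedure(steps: List[Step], case: dict) -> RunResult:
    """Run steps in order; stop and escalate at the first failed check."""
    result = RunResult()
    for step in steps:
        case.update(step.run(case))
        if not step.check(case):
            result.escalated_at = step.name
            break
        result.completed.append(step.name)
    return result


# Hypothetical KYC procedure; the lambdas are stubs for agent-driven work.
KYC_STEPS = [
    Step("collect_documents",
         run=lambda case: {"documents": case.get("uploads", [])},
         check=lambda case: "passport" in case["documents"]),
    Step("screen_sanctions",
         run=lambda case: {"sanctions_hit": case["customer"] in {"Blocked Ltd"}},
         check=lambda case: not case["sanctions_hit"]),
]

ok = run_procedure(KYC_STEPS, {"customer": "Acme GmbH", "uploads": ["passport"]})
missing = run_procedure(KYC_STEPS, {"customer": "Acme GmbH", "uploads": []})
```

Here `ok` completes both steps, while `missing` stops at `collect_documents` and records that step as the escalation point. Keeping the rules in `check` functions, separate from the agent's work in `run`, is one possible way to let the agent be flexible inside a step while the procedure itself stays fixed and auditable.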

Second, enterprises are moving beyond simply "sprinkling AI" onto existing surfaces. They are beginning to rethink fundamental product pieces to be more "agent-native"--unlocking the full power of their products for AI running alongside or on top of them. This is a harder transition than adding a simple AI sidebar, but it promises a deeper integration of AI's capabilities.

The infrastructure layer is also crucial. The ubiquity of standards like the Model Context Protocol (MCP), along with OpenAI's exploration of skills support, signals a move toward shared scaffolding that accelerates AI deployment and avoids past "standards wars." For enterprises, 2026 is shaping up to be an "infrastructure year," involving comprehensive reviews of existing processes to accommodate AI. This includes rethinking data storage, annotation, and lineage to be AI-friendly, so that agents can effectively query and understand enterprise data. The challenge is to move beyond retrieval to taking action, so that AI becomes a useful participant in business processes, either by making human-assisted decisions or by queuing up actions for confirmation.
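The "queuing up confirmations" pattern can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a description of any vendor's implementation: `ProposedAction` and `ActionQueue` are hypothetical names, and the `needs_confirmation` flag stands in for whatever risk policy an enterprise would define.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class ProposedAction:
    description: str
    execute: Callable[[], str]  # performs the action, returns a log message
    needs_confirmation: bool    # assumed to be set by an enterprise risk policy


@dataclass
class ActionQueue:
    pending: List[ProposedAction] = field(default_factory=list)
    log: List[str] = field(default_factory=list)

    def submit(self, action: ProposedAction) -> None:
        """Run low-risk actions immediately; hold the rest for a human."""
        if action.needs_confirmation:
            self.pending.append(action)
        else:
            self.log.append(action.execute())

    def review(self, approve: Callable[[ProposedAction], bool]) -> None:
        """Apply a human decision to every held action, then clear the queue."""
        for action in self.pending:
            if approve(action):
                self.log.append(action.execute())
            else:
                self.log.append(f"rejected: {action.description}")
        self.pending = []


queue = ActionQueue()
queue.submit(ProposedAction("tag record as reviewed", lambda: "tagged record", False))
queue.submit(ProposedAction("refund customer", lambda: "refund issued", True))
```

After these two calls the tagging action has already run, while the refund waits in `queue.pending` until someone calls `queue.review(...)` with an approval decision. The log doubles as a simple audit trail of what the agent did and what a person rejected.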

The ultimate goal, and what Krieger hopes will define AI in 2026, is succinctly put: "reliably take work off your plate." This signifies a transition from AI as a tool to AI as a colleague, one that understands problem spaces and relationships and can be delegated tasks. While fully autonomous onboarding of complex job functions might still be a year or two away, the groundwork is being laid for AI to handle significant portions of work, particularly work with clear definitions and repeatable processes. Getting there requires developing new interfaces and applying lessons from the software domain to broader knowledge work.

Key Action Items:

- Immediate Action (Next 1-3 Months):

  - Identify "Tinkerer" Personas: Within your organization, identify individuals who are already experimenting with AI tools and empower them.

  - Pilot Back-Office Automation: Select one repetitive, rule-based back-office process and pilot an AI agent to automate it. Focus on high-quality initial output.

  - Review Data Readiness: Assess your organization's data storage, annotation, and lineage practices for AI friendliness.

- Short-Term Investment (Next 3-6 Months):

  - Explore Agent SDKs: Experiment with agent SDKs (like Anthropic's Claude Agent SDK) to understand how to build more complex, multi-step AI applications.

  - Develop "Magic Incantations": For non-technical teams, create simple guides or templates for effective AI prompting that go beyond basic requests.

  - Assess AI Integration Points: Begin evaluating which core product features could be fundamentally redesigned to be "agent-native" rather than simply augmented.

- Longer-Term Investment (6-18 Months):

  - Build Agent Infrastructure: Invest in foundational AI infrastructure, including secure data access, model orchestration, and standard integration protocols (e.g., MCP).

  - Redesign Key Workflows: Undertake a process redesign initiative to map how AI agents can reliably take on significant portions of existing job functions, moving from assistance to delegation.

  - Develop Human-AI Collaboration Models: Design and test new interaction patterns where humans and AI agents collaborate on complex tasks, focusing on clear delegation and feedback loops. This requires patience, as the immediate payoff may not be visible, but it builds a durable competitive advantage.