Australia's Social Media Age Limit: Missed Opportunity for Nuanced Regulation

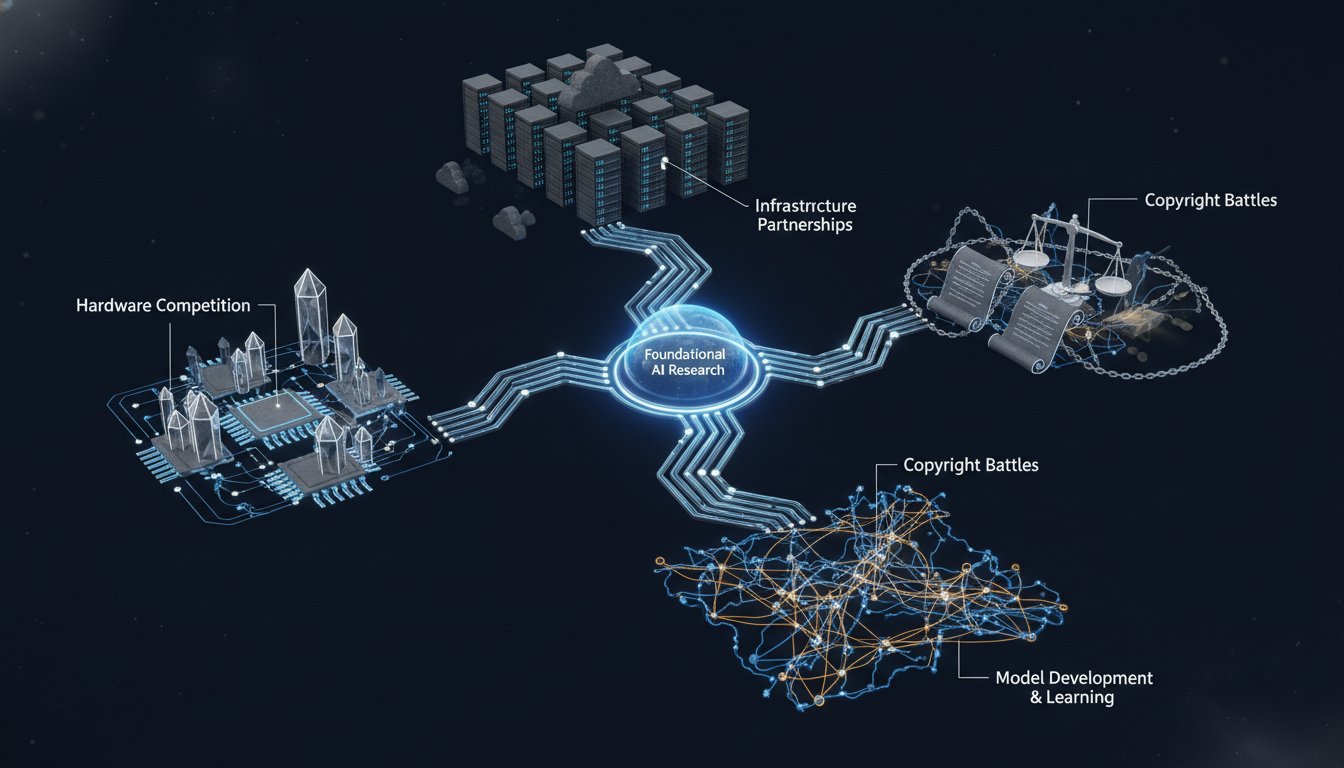

Australia's new social media age limit for under-16s, while a bold move, reveals a critical missed opportunity: a failure to leverage regulation to fundamentally reshape platform design rather than simply restrict access. The conversation highlights how broad, blunt-force policies, driven by populist sentiment and media campaigns, can overshadow more nuanced, impactful interventions. This analysis is crucial for policymakers, tech ethicists, and parents seeking to understand the systemic implications of digital regulation, offering a cautionary tale about the difference between appearing to act and enacting truly transformative change. Those who grasp this distinction gain an advantage in advocating for more effective digital governance.

The rollout of Australia's social media age limit for individuals under 16 presents a complex case study in digital regulation, highlighting how a seemingly decisive action can mask a more profound failure to address the root causes of online harm. While the intention to protect young users is laudable, the implemented policy, a broad ban, sidesteps a more potent strategy: compelling big tech to redesign its platforms to align with societal expectations and user well-being. This approach, driven by a confluence of media pressure, populist appeal, and political expediency, missed a critical window to enact reforms that could have fostered genuinely safer and healthier online environments for all users, not just those under 16.

The Illusion of Control: Why a Blanket Ban Falls Short

The Australian government's decision to implement a legal expectation for social media platforms to prevent under-16s from having accounts, backed by substantial fines for non-compliance, initially appeared as a decisive step against the perceived harms of social media. However, as Cam Wilson, associate editor at Crikey, points out, the very simplicity of the "ban the kids" message, while effective for public consumption, obscures the complexity of the problem. This broad-stroke approach, amplified by media campaigns and a groundswell of community concern, particularly post-COVID, bypassed opportunities for more targeted and effective regulation.

"But for me and and like speaking to for example people who worked on the law who drafted the law they were just like it was like a gut punch to them because they were like instead of taking this law that we said we're banning kids but we're going to give tech platforms a way out if they produce versions of their applications that are in line with our expectations that we think by our rules are less damaging to kids we create an incentive structure for them to actually do something..."

-- Cam Wilson

The original legislation included an "exemption framework" that would have allowed platforms to avoid the ban if they removed harmful features such as endless scrolling, push notifications, and gamified "streaks." This was a crucial element, offering a tangible incentive for platforms to redesign their user experience. However, this framework was removed through political negotiation, turning a potentially transformative regulatory tool into a blunt instrument. This decision, driven by concerns that tech companies might "game the rules," ultimately removed the incentive to innovate in platform design and perpetuated a system where the default is to restrict access rather than reform the environment. The consequence is a missed opportunity to force platforms to confront their incentive structures, which, as Wilson argues, prioritize user engagement and the platforms' own continued existence over genuine user safety.

The Hidden Cost of Simplicity: Erosion of Deeper Reforms

The focus on a social media age limit also had the unintended consequence of overshadowing and even undermining existing, more nuanced regulatory efforts. Australia had already introduced measures like the Online Safety Act and a Children's Online Privacy Code, aimed at regulating content and data collection. The age ban, however, effectively sidelined these initiatives by fostering a narrative that the problem was "solved" simply by banning young users. The result was a "ban or do nothing" dichotomy that ignored the potential for regulatory frameworks to address issues like predatory algorithms and harmful targeting across all age groups.

The "fix our feed" campaign, which advocates for chronological feeds as the default (with users free to opt out), exemplifies the kind of targeted reform that was sidelined. By focusing solely on age, the government failed to leverage its regulatory power to compel platforms to alter the fundamental mechanics that drive harmful engagement. This is a critical system-level failure: instead of re-engineering the incentives that lead to problematic design choices, the policy opted for a simpler, albeit less effective, solution. The implication is that young users, upon turning 16, will be "turfed into an internet into platforms that haven't really improved," having merely waited out the restriction rather than experiencing a genuinely safer digital landscape.

The Specter of AI and the Unaddressed Data Question

The rise of generative AI and chatbots introduces another layer of complexity, further underscoring the limitations of the age-limit-centric approach. While Australia has taken steps to require age verification for chatbots, this is occurring in parallel with the social media ban rather than as part of an integrated framework. The discourse around AI has highlighted distinct, yet related, harms, particularly concerning data privacy and the potential for misuse of sophisticated AI tools by minors. The Australian government's embrace of AI technologies, alongside its strict social media age limit, reveals a piecemeal approach to digital regulation.

Furthermore, questions of digital sovereignty and reliance on US technology loom over the debate, particularly given the potential for US government retaliation against regulatory measures. Australia's close ties with the US, including defense agreements and data-sharing laws, mean that Australian data held by US tech companies remains subject to US legal orders. The Australian government's recent collaborations with companies like OpenAI and Palantir, while framed as beneficial, do little to address the underlying vulnerability of Australian data being subject to foreign jurisdiction. The result is a systemic risk: regulatory efforts like the age limit are implemented without a robust framework for data protection and digital sovereignty, leaving users exposed despite perceived protections.

"I think that like having a reform that just says no matter what if you are a piece of social media like if you're a social media application you are banned is like fundamentally kind of just condemning the whole internet and any potential as like broken whereas i think like the point is we should be thinking about this whether we're legislators or just like normal people who are like trying to set up their own communities like how can we use technology in a way that is not harmful what incentive structures can we do or how can we ourselves choose to be part of places online that are not harmful..."

-- Cam Wilson

The core lesson here is that effective regulation requires a systemic understanding. It's not enough to restrict access; the goal must be to reshape the incentives and design of the platforms themselves. The Australian experience demonstrates that while bold actions can garner attention, they are insufficient if they fail to address the underlying dynamics that create harm. The true advantage lies not in simply banning young users, but in forcing platforms to evolve into spaces that are inherently safer and more aligned with human well-being, a goal that requires a more sophisticated and integrated regulatory approach.

Key Action Items

- Advocate for "Design-Based" Regulation: Instead of solely focusing on age limits, push for regulations that mandate the removal or alteration of specific harmful platform features (e.g., infinite scroll, algorithmic amplification of harmful content). This requires a longer-term investment in policy development and advocacy.

- Support Chronological Feeds: Champion initiatives like "fix our feed" that advocate for chronological timelines as the default, moving away from engagement-maximizing algorithms. This is an immediate action to support through public awareness and by choosing platforms that offer this option.

- Demand Data Localization and Sovereignty: Investigate and advocate for stronger data sovereignty laws that ensure Australian data is stored and processed within Australian jurisdiction, with robust protections against foreign legal orders. This is a medium-term investment in legal and policy reform.

- Integrate AI Regulation with Existing Frameworks: Ensure that the regulation of AI, including chatbots, is not treated in isolation but is integrated with broader digital safety and privacy laws, addressing issues like age verification and data handling holistically. This requires ongoing monitoring and policy adaptation.

- Foster Public Discourse on Platform Incentives: Actively engage in conversations that highlight how platform business models drive harmful design choices, shifting the focus from individual user behavior to corporate responsibility. This is an immediate, ongoing effort in public education and awareness.

- Invest in Nuanced Policy Development: Prioritize the development of regulations that are informed by experts and address the multifaceted nature of online harm, rather than relying on simple, broad-stroke bans. This is a long-term strategic investment in robust policy research and development.

- Challenge the "Ban or Do Nothing" Narrative: Actively counter the simplistic framing of digital regulation by highlighting existing, albeit imperfect, regulatory measures and advocating for their strengthening and expansion. This is an immediate, communication-focused action.