Artemis II Reveals Hidden Hurdles of Complex Projects

The Artemis II mission's successful return marks a significant milestone in space exploration, but its implications extend well beyond a new distance record. The conversation summarized here exposes the hidden consequences of ambitious technological endeavors: unexpected problems that surface even after meticulous planning, the critical role of adaptable infrastructure, and the long-term strategic shifts that future deep-space missions will require. Anyone working on complex, multi-year projects, from aerospace engineers to project managers in any field, can benefit from learning how to anticipate and work through the inevitable "bugs" in a system, and how to plan for delayed payoffs rather than immediate success alone.

The Unseen Hurdles of Reaching for the Stars

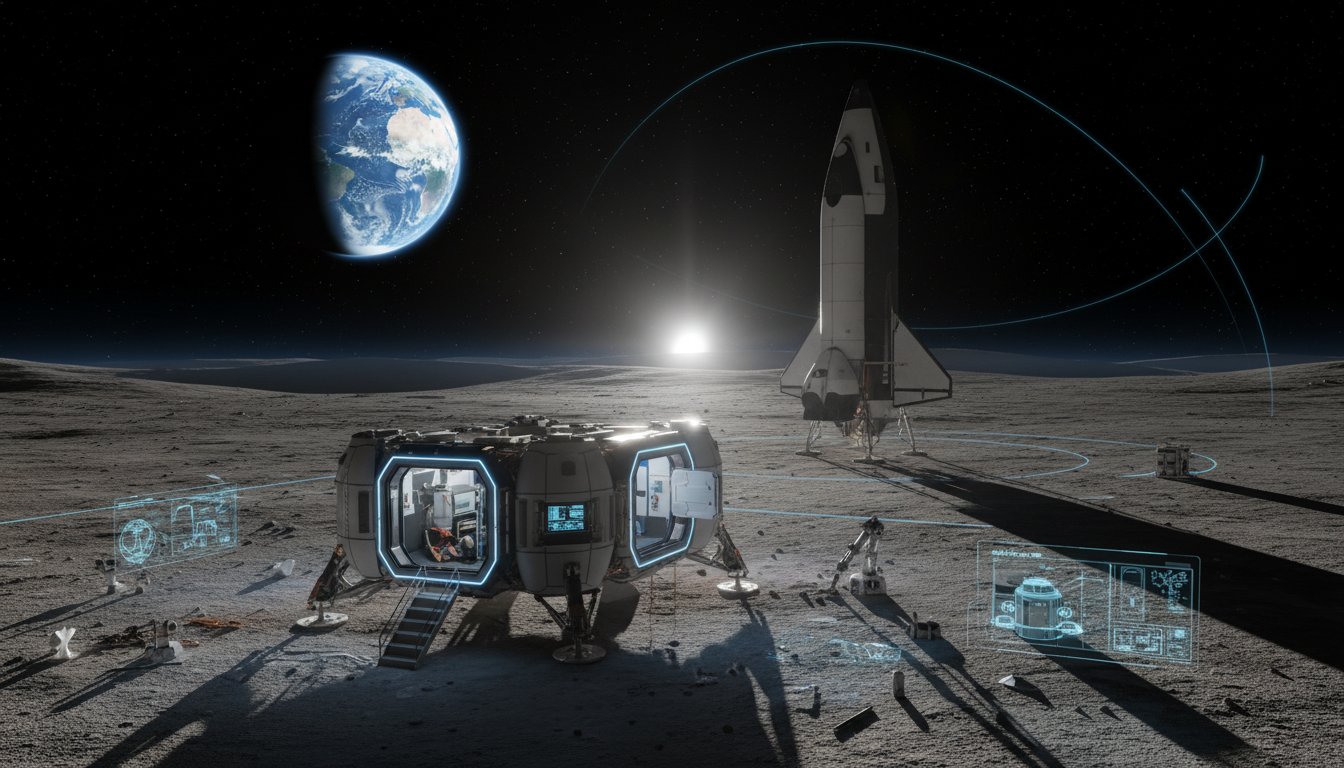

The Artemis II splashdown is a triumph and a testament to human ingenuity and perseverance. Beneath that achievement, however, lies a web of challenges that NASA and its partners are now working through. The mission sent four astronauts farther from Earth than any humans before them and tested the Orion spacecraft's capabilities for future lunar missions, but the journey was far from flawless. As the transcript highlights, unexpected issues emerge even under rigorous planning and force a re-evaluation of timelines and strategies. The lesson is not limited to space travel. It applies to any large-scale project in which the initial plan meets the messy reality of execution.

The mission's primary objective was to verify that astronauts could travel safely to the Moon and back, confirming that the Orion spacecraft was ready for subsequent missions. The return journey nevertheless revealed a host of issues. These ranged from a leak in the service module, a potentially serious concern, to the more mundane but still disruptive problem of a non-functional toilet. A familiar foe, Microsoft Outlook, also caused trouble. Advanced systems remain susceptible to everyday glitches, and the extreme environment of space amplifies their impact.

"What you might not know is that one of the big worries beforehand was the Orion's heat shield and whether it would survive the re-entry. As we now know, it thankfully did, but the flight turned up other troubles that the team at NASA will now have to work out."

This quote underscores a core systems-thinking principle: concentrating on a single, high-stakes component (the heat shield) can mask or delay the discovery of other failures that are less dramatic but still critical. The troubles the flight turned up are not isolated incidents. They arise from the interactions of a complex system under stress. These are the hidden costs that become apparent only after the initial investment of time and resources has been made.

The implications for future missions are significant. NASA has already revised the plan for Artemis III, shifting its focus from a lunar landing to testing docking systems, with Artemis IV now slated to attempt a return to the lunar surface in 2028. This strategic pivot, driven by the lessons learned from Artemis II, illustrates how early setbacks can lead to more robust, if delayed, success. The long-term goal of reaching Mars, supported by a lunar base, depends on this iterative cycle of learning and adaptation.

The Competitive Edge of Delayed Payoffs

The story of Artemis II and the strategic adjustments that followed highlights a powerful dynamic: competitive advantage often comes not from immediate wins but from a willingness to endure short-term difficulties for long-term gains. Critics commonly argue that the vast sums spent on space exploration would be better spent on problems here on Earth. The transcript implicitly counters that such endeavors, despite their expense, inspire and drive innovation whose broader benefits arrive later.

Pushing the boundaries of what is possible is, as mission commander Reid Wiseman put it, a "herculean effort." That effort, and the analysis of its outcomes, yields invaluable data and experience. For anyone managing complex projects, the lesson is that optimizing solely for immediate results can leave critical system vulnerabilities unexamined. The real advantage lies in building systems that are not only functional but also resilient and adaptable, even when that demands patience and a willingness to confront the inconvenient truths the system itself reveals.

When Conventional Wisdom Fails Under Pressure

The challenges Artemis II faced, from leaks to a malfunctioning toilet, are a stark reminder that conventional wisdom can fall short. It tends to focus on the most visible or dramatic failure modes and can miss the cumulative effect of smaller, systemic issues. The focus on the heat shield, while warranted, left other problems to be addressed after the flight. Businesses fall into the same trap when they plan meticulously for major market disruptions but are blindsided as customer loyalty erodes slowly under a series of minor service failures.

The transcript also touches on broader geopolitical developments: the US President's order of naval blockades and the failure of peace negotiations held in Pakistan. These events show how interconnected global systems are. Decisions in one domain can cascade into others, in this case affecting fuel security for nations such as Australia. The Australian government's advertising campaign encouraging fuel conservation is a direct response to these global pressures, a downstream effect of geopolitical friction.

The anniversary of the Bondi Junction attack is a somber reminder of tragedy, but it also highlights the courage of individuals who acted decisively in a crisis. The bravery awards given to those who intervened or saved lives underscore the human element within complex events. Nurse Catherine Mollohan's remark, "it was good that I was on the scene because it saved someone else from seeing what I saw," speaks to the profound and often unseen positive consequences of courageous action in the face of immediate danger. This is the other side of consequence mapping: identifying not only negative downstream effects but also the positive ripple effects of bravery and decisive action.

Key Action Items

Immediate Action (Next 1-2 Weeks):

- Review your current project's critical path. Identify the single most visible, high-stakes component and then deliberately investigate at least two less obvious, but still critical, supporting systems for potential vulnerabilities.

- For any upcoming project, allocate specific time during the planning phase to brainstorm "mundane but critical" failures (e.g., communication breakdowns, resource allocation issues, tool malfunctions) alongside the "big picture" risks.

- When facing a complex problem, actively seek perspectives from people who are not involved in day-to-day operations. They may spot systemic issues that those closest to the work miss.

Short-Term Investment (Next 1-3 Months):

- Establish a post-launch or post-project review that explicitly looks for unexpected secondary consequences, both positive and negative, rather than only evaluating results against the initial objectives.

- When making strategic decisions, explicitly map out the potential downstream effects on different stakeholders or departments, even if these effects are not immediately apparent.

Longer-Term Investment (6-18 Months):

- Develop a culture where acknowledging and addressing "inconvenient truths" or "bugs" discovered post-implementation is rewarded, not penalized. This fosters resilience and adaptability.

- For projects with long lead times or complex dependencies, build in contingency and buffer time not only for known risks but also for the unknown unknowns that complex systems inevitably reveal. This investment in resilience pays off later, and it can help avoid major overruns or failures.

- Invest in training that emphasizes systems thinking and consequence mapping for teams involved in complex project design and execution. This builds the capability to anticipate and manage downstream effects proactively.