PyPI Package Removals and UV Lock Files Create Ghost Package Vulnerabilities

The Ghost in the Machine: Unpacking the Hidden Dangers of PyPI Removals and UV Lock Files

This episode of Python Bytes examines a supply chain weakness in the Python ecosystem that involves the PyPI package index and the popular uv tool. The core thesis is that "removing" a package from PyPI does not actually delete its distribution files, which creates a dangerous "ghost package" problem. The conversation draws out the non-obvious consequence: uv.lock files can inadvertently preserve access to these removed, potentially malicious packages and so bypass standard security checks. Developers and security professionals should read this to understand a blind spot in their dependency management, and to come away with a more precise picture of dependency resolution and a stronger defense against this attack vector.

The Persistent Phantom: How "Removed" Packages Linger

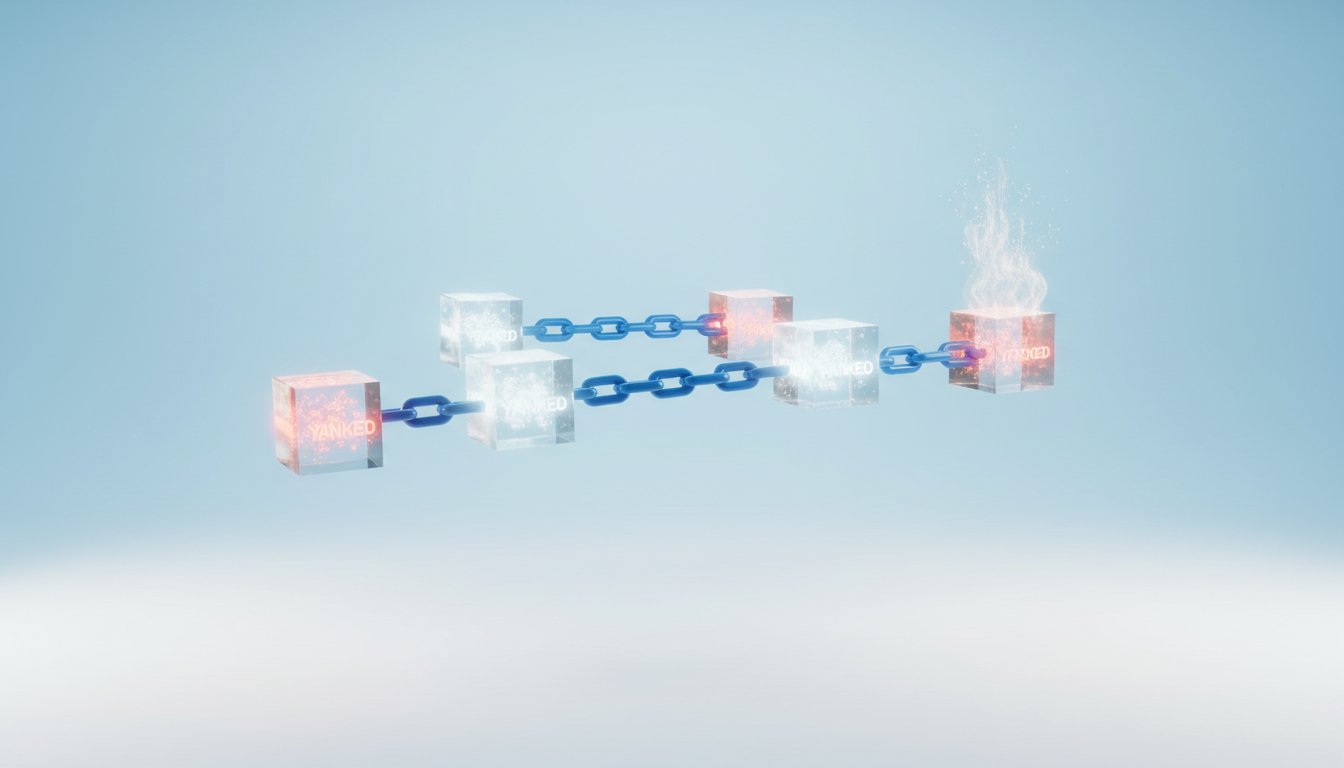

The concept of a package being "removed" from PyPI conjures an image of complete erasure. In reality, as discussed in this episode, PyPI's removal process is more nuanced: the package disappears from the index and its project page, but the underlying distribution files often remain accessible if one possesses a direct URL. This is a crucial distinction that opens the door to significant security risks.

The article "Lock the Ghost" highlights a specific vulnerability related to the uv.lock file format. Unlike other Python lock file implementations that might rely on index APIs for installation, uv.lock uniquely preserves direct URLs to these distribution files. This optimization, while contributing to uv's impressive speed, creates a critical security gap. When a malicious package is uploaded to PyPI and then immediately removed, it evades automated security scanning that typically targets the live index. However, if a uv.lock file was generated before the removal, it still contains the direct URL to the malicious distribution. Consequently, uv sync can successfully install this "ghost package," even with caching disabled, effectively bypassing security measures.

"PyPI 'removal' doesn't delete distribution files. When a package is removed from PyPI, it disappears from the index and project page, but the actual distribution files remain accessible if you have a direct URL to them."

-- Michael Kennedy

This persistence of "ghost packages" creates a potent supply chain attack vector. An attacker can leverage this by uploading a malicious package, swiftly removing it to circumvent detection systems, and then relying on pre-existing uv.lock files to distribute the compromised code. This is compounded by the potential to hide malicious code within large, auto-generated lock files, a tactic reminiscent of the xz backdoor, making manual review impractical. Furthermore, the article points out that removed package names can be hijacked. Once a package is removed, its name can be reclaimed by another party who can then upload different distribution types under the same version number. This exposes users who regenerate their lock files, as they will then pull in the malicious, re-hijacked package.

"This creates a supply chain attack vector. An attacker could upload a malicious package, immediately remove it to dodge automated security scanning, and still have it installable via a uv.lock file..."

-- Michael Kennedy

The implication here is profound: traditional dependency scanning, which often focuses solely on manifest files like pyproject.toml or requirements.txt, is insufficient. These files only list intended dependencies, not the resolved URLs and hashes that are critical for security. The actual threat lies within the lock file itself, which is where the direct links to potentially compromised distributions are stored. This necessitates a shift in security practices, emphasizing the need to scan and audit lock files with the same rigor as manifest files. The conventional wisdom of simply relying on package index availability is thus challenged by the reality of lingering distribution files and the tools that can still access them.
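One way to audit a lock file along these lines is to compare each locked name and version against what the index currently lists. The sketch below is a minimal illustration, not a complete scanner: it assumes the list of pins has already been extracted from the lock file, and it treats a 404 from PyPI's JSON API (https://pypi.org/pypi/NAME/VERSION/json) as "no longer listed". The function names are hypothetical, and the lookup is injectable so the logic can be exercised without network access.

```python
import urllib.error
import urllib.request
from typing import Callable, Iterable

Pin = tuple[str, str]  # (package name, version)


def listed_on_pypi(name: str, version: str) -> bool:
    """True if PyPI's JSON API still knows this release; 404 means it does not."""
    url = f"https://pypi.org/pypi/{name}/{version}/json"
    try:
        with urllib.request.urlopen(url, timeout=10) as resp:
            return resp.status == 200
    except urllib.error.HTTPError as err:
        if err.code == 404:
            return False
        raise  # other errors are not evidence either way


def find_ghosts(
    pins: Iterable[Pin],
    is_listed: Callable[[str, str], bool] = listed_on_pypi,
) -> list[Pin]:
    """Return locked pins that the index no longer lists, sorted for stable output."""
    return [pin for pin in sorted(set(pins)) if not is_listed(*pin)]


if __name__ == "__main__":
    # Offline demo with a fake index; swap in the default lookup for real use.
    fake_index = {("good-pkg", "1.0.0"): True, ("ghost-pkg", "0.1.0"): False}
    ghosts = find_ghosts(fake_index, is_listed=lambda n, v: fake_index[(n, v)])
    for name, version in ghosts:
        print(f"not listed on index: {name}=={version}")
```

A yanked release is still listed by the index, so this check only flags releases or projects that were removed outright.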

The Sandbox Solution: Containing Untrusted Code

Moving beyond package integrity, the discussion touches on sandboxing with the introduction of "Fence for Sandboxing." This Go binary, extracted from Claude Code, addresses the inherent risks of running untrusted code, a common scenario in coding platforms and AI agent development. While many platforms offer optional sandboxing, it's often not enabled by default. This leaves users vulnerable to code that might attempt to write to unauthorized directories or establish unwanted network connections.

"Some coding platforms have since integrated built-in sandboxing (e.g., Claude Code) to restrict write access to directories and/or network connectivity. However, these safeguards are typically optional and not enabled by default."

-- (Paraphrased from Brian Okken's summary of the Fence article)

The existence of "Fence" suggests a growing awareness of the need for robust isolation mechanisms. This is particularly relevant in the context of AI agents, as highlighted by the mention of Simon Willison's "lethal trifecta" article. AI agents, often interacting with external code or APIs, present a unique attack surface. The ability to sandbox these agents, restricting their access to the host system and network, becomes paramount. The immediate benefit of sandboxing is clear: preventing malicious code from causing direct damage. The downstream effect, however, is the creation of a safer environment for experimentation and development, fostering innovation without the constant fear of compromise. This proactive approach to containment offers a lasting advantage by reducing the blast radius of potential security incidents.

The AI Copyright Conundrum: MALUS and the Future of Licensing

The conversation then pivots to a more speculative, yet equally important, area: the intersection of AI and intellectual property with the introduction of MALUS. This service proposes to use AI to circumvent licensing and copyright rules by generating library specifications and then using another AI to build the software based on those specs, effectively bypassing original licensing. The hosts rightly question the legitimacy and intent of such a service, wondering if it's a genuine attempt to exploit legal loopholes or a provocative statement about the future of open-source licensing.

This highlights a fundamental tension. On one hand, AI offers incredible potential for code generation and accelerating development. On the other, it raises complex questions about ownership, attribution, and the very economic models that sustain open-source software. If AI can effectively "re-license" existing code by generating new versions based on its specifications, what does that mean for the original creators and the open-source community? The immediate implication is a potential devaluing of traditional licensing models. The longer-term consequence could be a radical restructuring of how software is developed, licensed, and monetized. This is a complex system dynamic where technological advancement directly challenges established legal and economic frameworks, demanding careful consideration and potentially new approaches to intellectual property in the age of AI.

Hardening Workflows: Securing the CI/CD Pipeline

Finally, Brian brings attention to hardening GitHub Actions workflows, referencing an article by Matthias Schoettle and the concerning "hackerbot-claw" incident. This issue underscores the critical importance of securing the CI/CD pipeline, which has become a prime target for attackers. The "hackerbot-claw" incident, where an AI-powered bot exploited GitHub Actions in several high-profile projects, demonstrates that even sophisticated security measures can be bypassed.

The article's focus on techniques like dependency pinning, dependency cooldowns, and tools like zizmor points to a layered security approach. Dependency pinning ensures that only specific, vetted versions of dependencies are used, which prevents malicious code from arriving through an unreviewed version update. Dependency cooldowns introduce a delay before newly published versions can be adopted, allowing time for threats to be identified and mitigated. These are not quick fixes; they require deliberate effort and a willingness to accept a slightly slower development cycle in exchange for stronger security. The immediate discomfort of stricter dependency management pays off in the long term with a more resilient and trustworthy build process. Hardening the CI/CD pipeline in this way is essential for protecting the integrity of software releases and preventing downstream compromises.
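The cooldown idea can be sketched as a simple date filter. The example below is an illustration under stated assumptions rather than how any particular tool implements it: the release data is invented, the function name newest_after_cooldown is hypothetical, and "newest" here means most recently uploaded, not highest version number.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def newest_after_cooldown(
    releases: dict[str, datetime],
    cooldown_days: int,
    now: Optional[datetime] = None,
) -> Optional[str]:
    """Pick the most recently uploaded version that is at least cooldown_days old.

    `releases` maps a version string to its timezone-aware upload time.
    Returns None when every release is still inside the cooldown window.
    """
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=cooldown_days)
    eligible = {v: t for v, t in releases.items() if t <= cutoff}
    if not eligible:
        return None
    return max(eligible, key=eligible.get)


if __name__ == "__main__":
    # Invented upload dates for illustration.
    releases = {
        "1.0.0": datetime(2025, 1, 1, tzinfo=timezone.utc),
        "1.1.0": datetime(2025, 1, 20, tzinfo=timezone.utc),
        "1.2.0": datetime(2025, 1, 30, tzinfo=timezone.utc),
    }
    today = datetime(2025, 1, 31, tzinfo=timezone.utc)
    print(newest_after_cooldown(releases, cooldown_days=7, now=today))
```

In practice the cutoff is usually applied by the resolver itself; uv, for example, has an --exclude-newer option that ignores distributions published after a given timestamp.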

Key Action Items:

- Audit uv.lock files: Immediately review your project's uv.lock files for any direct URLs pointing to packages that have been recently removed from PyPI.

- Integrate Lock File Scanning: Enhance your CI/CD pipelines to include security scanning of lock files (e.g., uv.lock, Pipfile.lock, poetry.lock), not just manifest files (pyproject.toml, requirements.txt).

- Implement Dependency Pinning: Rigorously pin your dependencies to specific versions in your pyproject.toml or requirements.txt to prevent unexpected updates. (Immediate Action)

- Explore Sandboxing Solutions: For any platform or workflow that executes untrusted code, investigate and implement sandboxing tools like Fence. (Medium-term Investment)

- Monitor AI & Licensing Developments: Stay informed about the evolving landscape of AI-generated code and its implications for open-source licensing and copyright. (Ongoing Vigilance)

- Adopt Dependency Cooldowns: Consider implementing a policy of "dependency cooldowns" for critical production environments, delaying the adoption of new dependency versions for a set period (e.g., 1-2 weeks) to allow for security vetting. (Medium-term Investment, pays off in 1-2 months)

- Review GitHub Actions Security: Conduct a thorough security audit of your GitHub Actions workflows, ensuring adherence to best practices for hardening, including limiting permissions and using trusted actions. (Immediate Action)
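As a starting point for the pinning item, a rough check like the one below can flag requirements.txt entries that are not pinned to an exact version. It is a simplified sketch: the regular expression covers common name==version lines (with optional extras and environment markers) but not every form the requirements format allows, and the helper name unpinned and the sample entries are hypothetical.

```python
import re

# Matches e.g. "requests==2.32.3" or 'rich[jupyter]==13.7.1 ; python_version >= "3.9"'.
# Wildcards such as "==5.*" are deliberately rejected as not exact.
PINNED = re.compile(
    r"^[A-Za-z0-9][A-Za-z0-9._-]*(\[[^\]]*\])?\s*==\s*[^\s;*]+(\s*;.*)?$"
)


def unpinned(requirements_text: str) -> list[str]:
    """Return requirement lines that are not pinned with an exact '==' version."""
    loose = []
    for raw in requirements_text.splitlines():
        line = raw.split(" #", 1)[0].strip()   # drop trailing comments
        line = line.rstrip("\\").strip()       # drop line-continuation backslash
        # Skip blanks, comments, and option lines such as "-r other.txt" or "--hash=...".
        if not line or line.startswith(("#", "-")):
            continue
        if not PINNED.match(line):
            loose.append(line)
    return loose


SAMPLE = """\
requests==2.32.3
flask>=3.0
numpy
rich[jupyter]==13.7.1 ; python_version >= "3.9"
django==5.*
# a comment
-r other.txt
"""

if __name__ == "__main__":
    for line in unpinned(SAMPLE):
        print(f"not pinned: {line}")
```

Exact pins in a manifest complement, but do not replace, the hashes recorded in a lock file.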