AI's Data Acquisition: Ethical Sourcing and Creator Rights Under Scrutiny

The frantic race to digitize humanity's written legacy for AI reveals a chasm between innovation and ethical sourcing. While AI companies like Anthropic champion their role in advancing civilization through artificial intelligence, their methods for acquiring the vast datasets needed for model training have skirted legal and ethical boundaries. This conversation with Will Oremus of The Washington Post examines the non-obvious consequences of "Project Panama," Anthropic's ambitious, and at times destructive, effort to scan millions of books. The core revelation is not merely the scale of data acquisition but the systemic disregard for creators' rights and the murky legal justifications used to legitimize it. Anyone involved in AI development, content creation, or intellectual property law should read this to understand the potential fallout of unchecked data acquisition and the evolving definition of "fair use" in the digital age. It sheds light on the rarely seen operational realities and ethical compromises underpinning AI's rapid advancement.

The Spine of the Matter: Why Books Are AI's Secret Weapon

The pursuit of artificial general intelligence has devolved into a frantic, often clandestine, race for data. While the internet offers a seemingly infinite ocean of information, AI developers have found that the carefully curated, edited, and crafted prose of books offers a superior training ground. This is where the seemingly mundane act of buying and destroying millions of physical books, dubbed "Project Panama" by Anthropic, becomes a critical strategic move. The non-obvious implication is that the structure and quality of human knowledge, as codified in books, is seen as the bedrock for truly advanced AI, in stark contrast to the "detritus" of the internet.

Anthropic, a company positioning itself as an ethical outlier in the AI space, embarked on Project Panama with a stated goal of acquiring a comprehensive digital library. Led by Tom Turvey, who previously oversaw Google Books, the project eschewed direct licensing with publishers, deeming it impractical. Instead, it involved acquiring vast quantities of used books from warehouses like Better World Books. The method of scanning was equally stark: slicing off book spines to allow for rapid, high-volume digitization. This physical destruction of the source material, while described as "neat" and efficient, serves as a potent metaphor for the broader concerns of creators whose work is being absorbed and transformed by AI.

"destructively scan all the books in the world"

This evocative phrase, unearthed from internal documents, highlights the extreme measures taken. It also raises immediate questions about legality and ethics. While Anthropic framed this as a more legal and ethical approach than outright piracy from "shadow libraries" (unauthorized digital repositories), the legal underpinnings remain contentious. The core argument for AI companies hinges on the doctrine of "fair use": transforming copyrighted material into an AI model, they contend, produces a new, innovative product that does not directly compete with the original work. A judge's ruling in Anthropic's case supported this, deeming the training of AI models on scanned books to be fair use, while treating the pirating of books that were not used for training as problematic. This distinction, however, is a legal tightrope, and the broader implications for authors and artists are far from settled.

The Shadow Library Gambit: Piracy as a Shortcut

The "Project Panama" approach, while destructive, was presented as an alternative to more overtly illegal methods. Internal documents reveal that Anthropic, like other AI giants such as Meta, had also downloaded copyrighted works from shadow libraries. These vast, unauthorized digital collections, often distributed via torrent software, make a significant portion of the world's digitized literature available for free. This practice, however, puts these companies in direct legal jeopardy: downloading pirated copies looks more like straightforward copyright infringement and is harder to defend than the transformative-use argument applied to AI training.

The internal discussions at Meta, as revealed in court filings, underscore the ethical unease among some engineers. One engineer reportedly expressed concern about "torrenting from a corporate laptop," noting that it "doesn't feel right" and could be "legally not okay." Despite these qualms, directives from leadership, including references to Mark Zuckerberg's approval, suggest a willingness to proceed, albeit with "mitigations." This reveals a systemic tension: a desire for rapid data acquisition versus an awareness of the legal and ethical precipice. The narrative that information "wants to be free," often cited in defense of such libraries, clashes directly with established copyright law and the rights of creators.

"Torrenting from a corporate laptop doesn't feel right."

This quote encapsulates the internal conflict. Accessing vast troves of data from shadow libraries may look like a shortcut, but it carries significant legal risks and ethical compromises. It highlights how competitive pressure in the AI race can lead companies to consider, and in some cases engage in, legally dubious practices, even if they ultimately seek a more defensible method like Project Panama. The downstream effect of this approach is not just legal battles but also a fundamental erosion of trust between creators and the technology industry.

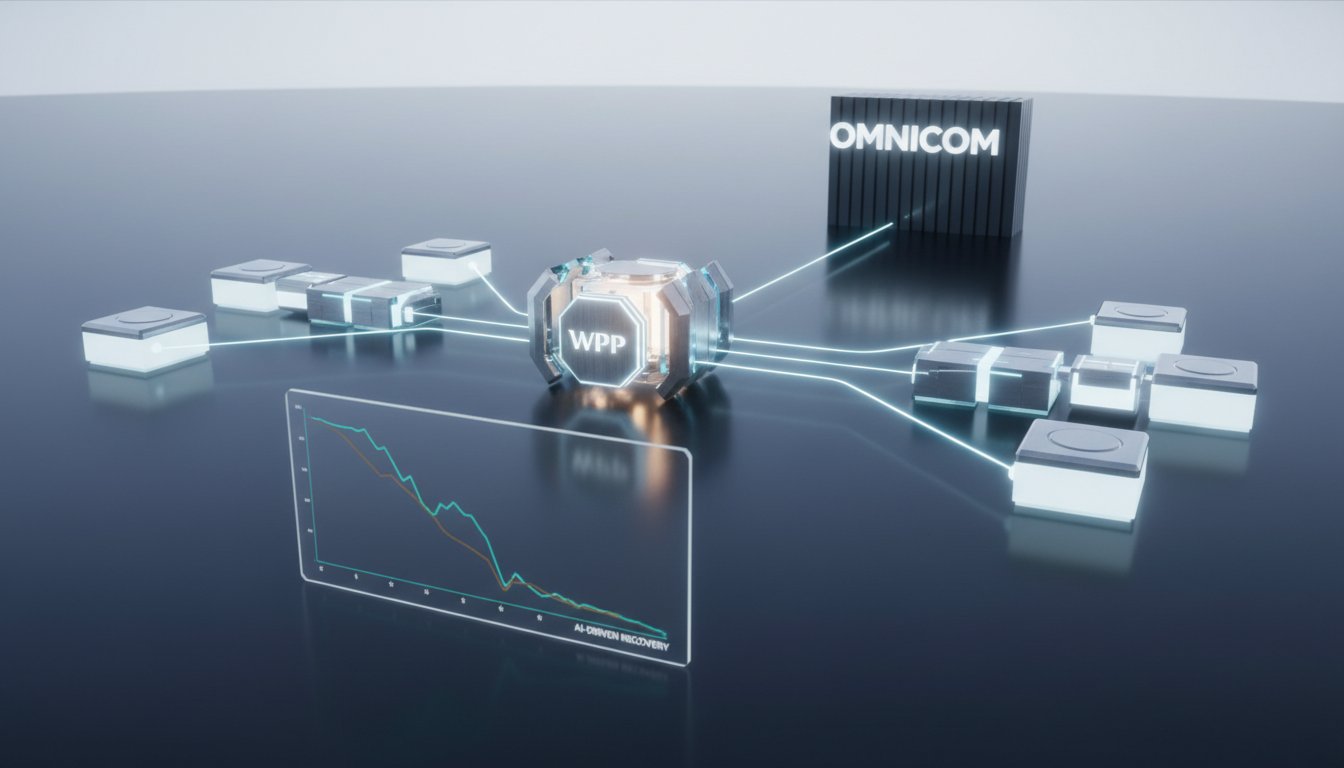

The Unsettled Law: Fair Use in the Age of AI

The legal battles surrounding AI training data are exposing the limitations of copyright law, which was not designed for the era of machine learning. The core question is whether training an AI on copyrighted material constitutes "fair use." Judges are grappling with this, leading to varied and often contradictory rulings. In the Anthropic case, the judge distinguished between using scanned books to train an AI (fair use) and downloading books without using them for training (potentially illegal copying). This distinction, while seemingly clear, is a narrow interpretation in a rapidly evolving landscape.

The Meta case presented another facet: a judge dismissed claims because the plaintiffs failed to demonstrate direct harm to their ability to sell books. This places a significant burden on creators, requiring them to prove negative economic impact in a complex market. The comparison to Napster, a landmark case in digital music piracy, is apt. Just as Napster challenged established music distribution models, AI training data acquisition is challenging traditional notions of copyright. However, the scale and sophistication of AI companies dwarf early peer-to-peer file-sharing networks. Because copyright law never explicitly anticipated AI, judges are essentially creating new legal precedents on the fly, leaving an unsettled and unpredictable environment for creators and tech companies alike.

"The idea of hoovering up all the world's knowledge and putting it into AI models is just not something that the original copyright laws had explicitly anticipated."

This statement underscores the fundamental challenge. The transformative nature of AI training means that the output is not a direct copy but a synthesized intelligence. The value AI companies place on books, with their curated quality and depth, is undeniable. Yet the failure to compensate creators for this foundational input creates a systemic imbalance, fueling lawsuits and uncertainty. The long-term consequence of this legal ambiguity is a potential chilling effect on creative output, as artists and writers face an existential threat from systems trained on their work without remuneration.

Key Action Items

Immediate Action (Next Quarter):

- Review data acquisition policies: For AI developers, conduct an immediate audit of all data sources used for model training, identifying potential legal and ethical risks associated with copyrighted material.

- Engage legal counsel on fair use: Consult with copyright law experts to understand the evolving landscape of fair use as it applies to AI training data.

- Explore ethical data sourcing: For content creators and publishers, actively seek out and promote platforms that offer transparent and ethical data licensing for AI training.

Short-Term Investment (3-6 Months):

- Develop licensing frameworks: AI companies should proactively develop and offer fair licensing agreements to content owners, moving beyond the "fair use" defense for core training data.

- Invest in synthetic data generation: Explore and invest in techniques for generating high-quality synthetic data to supplement or replace the reliance on potentially copyrighted real-world data.

- Advocate for updated copyright law: Participate in industry discussions and policy-making efforts to help shape new legal frameworks that address the challenges of AI training data.

Long-Term Investment (12-18 Months):

- Build creator-centric AI models: Prioritize the development of AI models that are trained on ethically sourced and licensed data, fostering a sustainable ecosystem for both AI innovation and creative industries.

- Establish industry standards for data provenance: Work towards industry-wide standards for tracking and verifying the origin and licensing of data used in AI training, creating greater transparency and accountability.

- Foster collaborative partnerships: Forge genuine partnerships with authors, publishers, and other creative communities, moving from adversarial legal battles to collaborative models that ensure mutual benefit. This requires a shift in mindset, recognizing that the value of human creativity is not merely a resource to be exploited, but a foundation to be respected and compensated.
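
The audit and data-provenance items above could start from something as simple as an inventory of training data sources with a check that flags entries needing review. The following is a minimal sketch under stated assumptions, not a description of any company's actual process: the `DataSource` fields, the acquisition labels, and the `audit` function are all hypothetical names introduced for illustration, and real risk categories would need to come from legal counsel.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class DataSource:
    """One entry in a hypothetical training-data inventory."""
    name: str
    acquisition: str            # illustrative labels, e.g. "licensed", "shadow_library"
    license_ref: Optional[str]  # pointer to a license agreement, if one exists
    used_for_training: bool


# Assumed labels for illustration only; not a legal classification.
HIGH_RISK_ACQUISITION = {"shadow_library", "unknown"}


def audit(sources: List[DataSource]) -> List[Tuple[str, str]]:
    """Return (source name, reason) pairs for entries that need review."""
    findings = []
    for src in sources:
        if src.acquisition in HIGH_RISK_ACQUISITION:
            findings.append((src.name, "acquisition method needs legal review"))
        elif src.license_ref is None:
            findings.append((src.name, "no license on file"))
    return findings


if __name__ == "__main__":
    # Made-up inventory entries, used only to exercise the check.
    inventory = [
        DataSource("licensed_corpus", "licensed", "agreement-001", True),
        DataSource("scanned_books", "purchased_and_scanned", None, True),
        DataSource("torrent_dump", "shadow_library", None, False),
    ]
    for name, reason in audit(inventory):
        print(f"{name}: {reason}")
```

Keeping the acquisition method and the license reference as separate fields mirrors the distinction drawn in the rulings discussed earlier, where how a work was obtained and how it was used were assessed separately.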