DCCC's Early Investment Targets Voters of Color and Rural Communities

The following blog post analyzes a podcast transcript. It applies consequence-mapping and systems thinking to reveal non-obvious implications of the discussed topics. This analysis is intended for individuals interested in understanding the deeper, often overlooked, dynamics of political strategy, institutional behavior, and the societal impact of technology. By dissecting the conversation, readers can gain a strategic advantage in understanding how seemingly disparate events and policies interrelate, and how conventional wisdom often fails to account for downstream consequences.

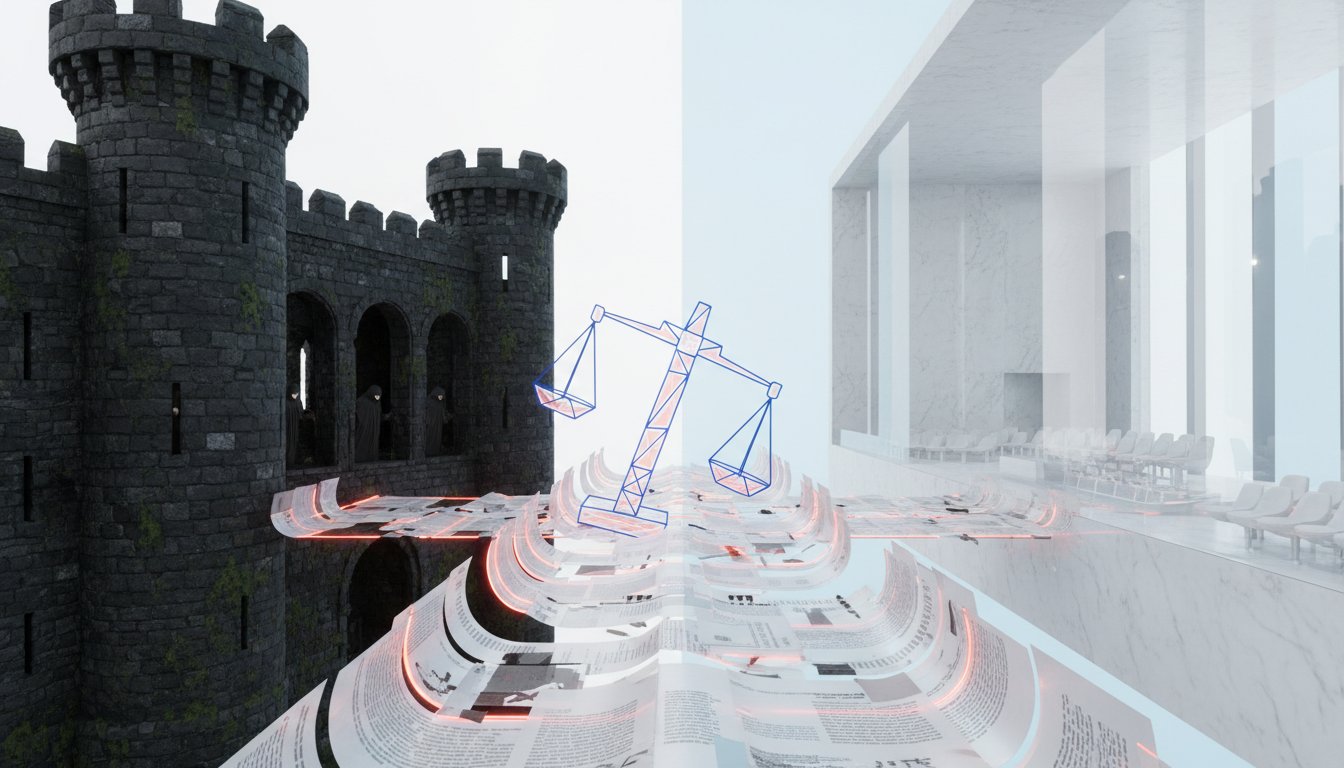

The conversation on Pod Save the People, featuring Brooke Butler, Political Director of the Democratic Congressional Campaign Committee (DCCC), delves into the strategic underpinnings of political engagement, particularly concerning underrepresented communities. While the immediate focus is on the DCCC's "Our Power, Our Country" initiative, a deeper analysis reveals a complex ecosystem of political strategy where delayed payoffs and systemic inertia create significant challenges and opportunities. The non-obvious implication is that effective political power is not merely about immediate electoral wins but about cultivating long-term relationships and understanding the systemic forces that shape voter behavior and institutional responsiveness. Conventional wisdom often suggests focusing on swing districts or mobilizing the base, but Butler's insights highlight the necessity of investing early and consistently in communities that have historically been overlooked, recognizing that this investment, while not yielding immediate visible results, builds a durable foundation for future success. The conversation implicitly argues that the "fight of our lives" framing, while potent, risks overshadowing the sustained, often unglamorous, work required to build genuine trust and political power, particularly when institutions like ICE or the broader political system demonstrate a consistent pattern of behavior that undermines public faith.

The Unseen Mechanics of Political Investment: Beyond the Ballot Box

The DCCC's "Our Power, Our Country" initiative, aimed at engaging voters of color and rural communities, represents a strategic shift that acknowledges the limitations of traditional campaign tactics. Brooke Butler articulates a vision where political engagement extends beyond the immediate election cycle, focusing on building trust and understanding community needs. This approach, however, runs counter to the inherent short-term pressures of electoral politics, creating a tension between the need for sustained investment and the demand for immediate wins. The non-obvious consequence of this strategic framing is the potential for a "performance" of engagement rather than genuine connection, especially when the underlying systems remain resistant to change.

The discussion around ICE and the killings of Renee Gode and Keith Porter illustrates this point starkly. While local officials and even some national figures publicly decry ICE's actions, the underlying systemic issues--the broad powers granted to such agencies, the lack of accountability, and the historical context of state-sanctioned violence--remain largely unaddressed. The outrage, while genuine, often dissipates, leaving the system largely intact. This pattern of immediate outcry followed by a return to business as usual is a consequence of a system that prioritizes immediate crisis management over long-term systemic reform. The DCCC's initiative, by aiming for early and sustained investment, attempts to break this cycle, but the challenge lies in translating this investment into tangible systemic change when faced with entrenched political and institutional inertia.

"I think it's the latter for me that these are the performances that happen. I also think that this is it's like the Derek Chauvin thing right where it's like there's got to be a sacrificial lamb right? So this person is acting with the power validation justification of an entire system behind them and what we are trying to do is say that one individual act is wrong."

-- Sharonda

This quote, in the context of the ICE killings, highlights a critical systemic dynamic: the tendency to focus on individual actors rather than the broader institutional frameworks that enable their actions. The DCCC's strategy, by contrast, implicitly aims to alter those frameworks through sustained engagement, recognizing that "flipping the House" is only one part of a larger, more complex endeavor. The payoff of this strategy is delayed: it requires patience and a willingness to invest in communities without immediate electoral guarantees, a difficult proposition in a system driven by quarterly results and two-year election cycles. The conventional wisdom that focuses on maximizing gains in swing districts often overlooks the foundational work of building power in communities that may not appear competitive on paper but are crucial for long-term political viability.

The AI Paradox: Efficiency as a New Form of Oppression

The conversation's exploration of AI in healthcare, specifically Utah's move to allow AI to prescribe medication, reveals a profound consequence of technological advancement: the potential for efficiency to mask or even exacerbate existing inequities. The argument for AI in this context is framed as an "access issue," a way to provide prescriptions in areas lacking sufficient human clinicians. However, the underlying implication is that the system, already flawed and having historically failed many, is now seeking to automate its shortcomings.

"The programming you're allowed to is limited to 190 commonly prescribed medications so there are a host of things that are excluded so like pain management adhd drugs for instance still require a person as you can imagine this is a profit making business so there's a 4 per prescription renewal price."

-- Sharonda

This highlights a crucial systemic flaw: the limitations of AI are dictated by profit motives and regulatory gaps, not necessarily by patient well-being. The comparison of AI to human clinicians, while showing a high match rate, fails to account for the nuanced, often deeply personal, health journeys that individuals, particularly those from marginalized communities, experience. Sharonda's personal account of navigating hypertension medications underscores the inadequacy of a purely data-driven approach. Her experience, marked by adverse reactions and the critical intervention of a clinician friend, demonstrates that medical care is not just about matching data points but about human connection, empathy, and understanding context. The systemic failure here is not just in the technology but in the regulatory environment that allows such a rollout without robust safeguards, effectively creating a new frontier for potential harm under the guise of progress. The delayed payoff of human-centered care--building trust, understanding individual needs, and adapting treatment over time--is sacrificed for immediate, automated efficiency.

The Illusion of Choice: Digital Participation in a Neoliberal Fascist Culture

The discussion of Elon Musk's Grok AI, and of its use to sexualize and "undress" images of users, particularly women, exposes a critical consequence of our digital lives: the blurring of lines between personal expression and participation in a system that commodifies and exploits. The speakers argue that social media platforms are not neutral spaces but "social-political realms" with their own distinct rules and consequences. The ease with which AI can generate harmful content, coupled with the lack of robust regulation, creates an environment where exploitation can flourish.

The critique extends to the individual's role in this ecosystem. The act of uploading personal images, while seemingly innocuous, contributes to a larger data pool that can be exploited. This is framed not as a personal failing but as participation in a "neoliberal fascist culture" where consent is often secondary to profit and power. The analogy to ICE and Trump's actions--that by the time legal recourse is sought, the harm has already been done and the viral spread is unstoppable--underscores the systemic nature of this problem. The delayed payoff of genuine digital privacy and ethical AI development is sacrificed for the immediate gratification of connectivity and self-expression. The conventional wisdom that encourages users to "be mindful" of what they post fails to address the fundamental design and incentives of these platforms, which are built to maximize engagement, often at the expense of user safety and dignity.

"So anytime we put we're plugging in our abs and our bodies and our diets we're plugging that into diet culture anytime we are making sexually suggestive content that can go on people's algorithms we are participating in rape culture because there are going to be people who are seeing that who did not consent to it and then also anytime we're uploading our pictures inside of the internet we are giving license for people everybody dark and light web to participate in that picture and by the time somebody can say no to it it's already done."

-- Sharonda

This statement encapsulates the core consequence: individual actions, when amplified by digital platforms, can inadvertently contribute to systemic harm. The implication is that true change requires a fundamental re-evaluation of our participation in these digital spaces, moving beyond superficial actions like logging off to a deeper understanding of how these systems operate and how they can be challenged. The difficulty is that these platforms offer connection and social mobility, which makes disengagement personally costly. This creates a cycle where users are incentivized to participate in systems that may actively harm them or others, a classic example of a self-reinforcing feedback loop within a complex system.

Key Action Items

- Invest in Long-Term Community Engagement: The DCCC's "Our Power, Our Country" initiative is a model. Prioritize sustained, early investment in underrepresented communities, focusing on building trust and understanding needs beyond electoral cycles. This pays off in 12-18 months by fostering a more reliable and engaged voter base.

- Demand Robust AI Regulation in Healthcare: Advocate for comprehensive federal regulation of AI in healthcare before widespread adoption. This requires immediate action to establish clear guidelines and oversight mechanisms for AI-driven medical decisions, preventing potential harm that could take years to rectify.

- Re-evaluate Digital Participation: Critically assess the platforms and services used daily. If a platform actively contributes to harm or oppression, consider reducing or eliminating engagement. This is a longer-term investment in personal integrity and systemic change, with payoffs measured in reduced complicity and potential for future ethical tech development.

- Support Accountability for Law Enforcement and ICE: Actively support organizations and legislative efforts that push for accountability and reform within law enforcement and ICE. This requires consistent pressure, not just during moments of crisis, to effect lasting change. The immediate discomfort of confronting these issues will yield long-term benefits in public safety and trust.

- Champion "Slow" Political Processes: Recognize that genuine political power is built through patient, consistent organizing and relationship-building. Resist the temptation of quick wins and focus on strategies that foster deep engagement and understanding within communities. This approach yields durable political capital over several election cycles.

- Advocate for Transparency in Algorithmic Systems: Push for greater transparency in how algorithms are used in decision-making processes, from social media content moderation to AI in healthcare. Understanding the "black box" is the first step to identifying and mitigating systemic biases and harms. This is an ongoing investment in civic literacy.

- Prioritize Human Oversight in Critical Systems: For any system involving AI or automation that impacts human lives (e.g., healthcare, criminal justice), ensure meaningful human oversight and intervention points. This is an immediate action that protects against the downstream consequences of purely automated decision-making, creating a more resilient system.