SAP's AI Transition: Re-engineering Business Models for Outcomes

AI is reshaping SAP's long-standing role as an enterprise "operating system," and the change goes beyond integrating new technology to transforming the business model itself. In this conversation, SAP CTO Philipp Herzig argues that the real challenge is not building AI capabilities but teaching them to operate at enterprise scale and deliver tangible customer outcomes. The less obvious implication is that the transition demands re-engineering the UI, business processes, and the data layer, which gives a competitive advantage to companies that can manage that complexity. The analysis is aimed at enterprise leaders, technologists, and strategists who need to bridge the gap between AI innovation and real-world business results, moving from an "innovation race" to an "outcome race."

The Generative UI and the "Upleveled" Enterprise

The narrative around AI in enterprise software often focuses on integrating new models or automating existing tasks. However, Philipp Herzig, CTO of SAP, argues that the AI transition is more akin to the shift from on-premise to cloud computing: a fundamental re-engineering of how software is built and experienced. This isn't just about adding AI features; it's about transforming the very fabric of enterprise operations across three key layers: the user interface (UI), business processes, and the data layer.

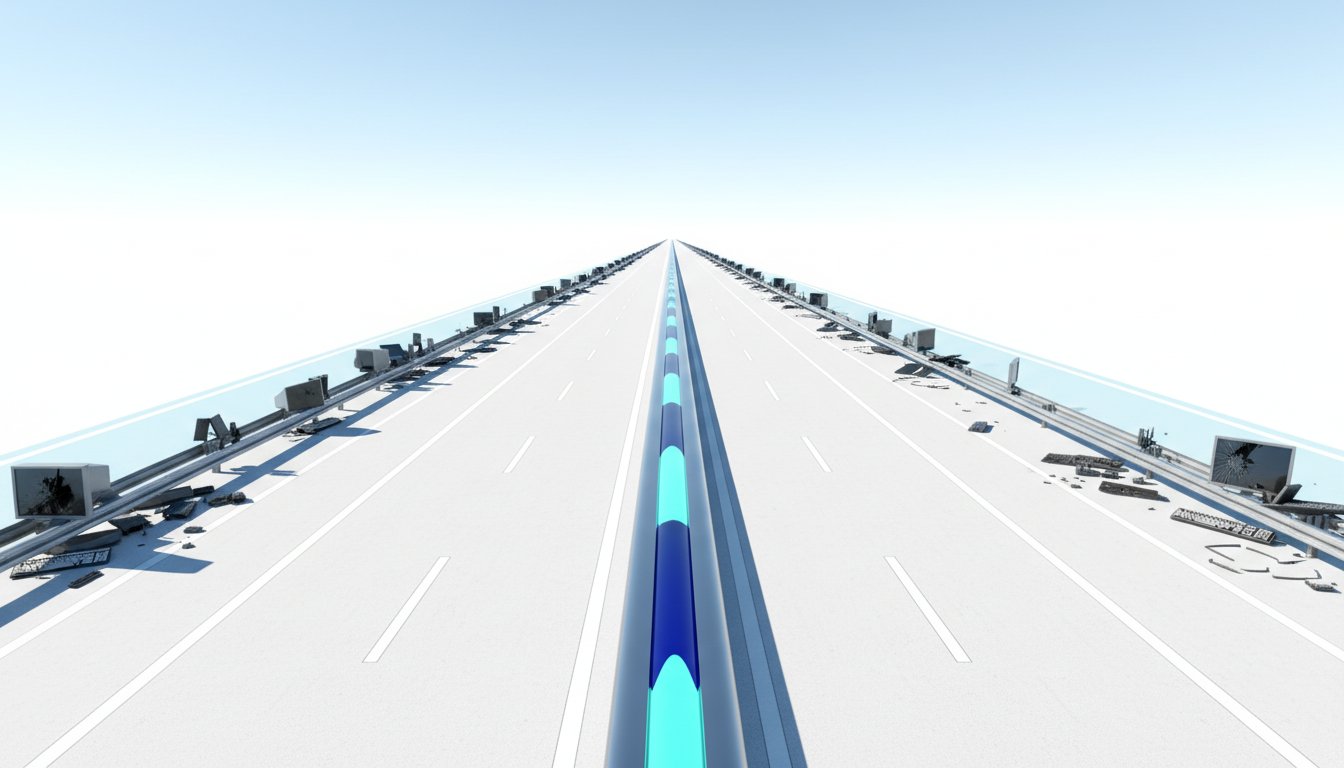

The most striking shift is in the UI. The era of static, human-navigated interfaces is giving way to "generative UI." Instead of users clicking through menus, the system dynamically generates interfaces and proactively offers insights. Herzig envisions a future where SAP's platform can analyze complex scenarios, like the impact of new tariffs or geopolitical events on supply chains, and present the results alongside a company's own financial and operational data. This multimodal approach moves beyond simple chatbots to systems that can identify potential issues--like a dip in sales orders--and offer immediate recommendations. It is a significant departure from traditional software, where the burden of intelligence lay with the user.

"The time is clearly over where you design dumb software that requires the intelligence to sit in front of the computer. So I mean if you look at classical software, what did you do? You designed a user interface, hopefully you did some user research, trying to figure out the easiest or most intuitive way to teach a human how they get their task done by clicking through the UI essentially. And this is over. Now we call it generative UI."

-- Philipp Herzig

This transformation in UI directly impacts business processes. Herzig describes a move from "software as a service" to "service as a software" or "outcome as a service," powered by AI agents. These agents can blend structured enterprise data with unstructured information, enabling more fluid and efficient end-to-end processes like order-to-cash. The implication is that roles within finance, HR, and supply chain will be "upleveled." Mundane tasks like data collection and report generation will be automated, freeing up professionals to focus on strategic thinking, scenario planning, and deeper analysis. This is not about replacing humans, but about augmenting their capabilities, allowing them to achieve more by offloading routine work to intelligent agents.

The third crucial layer is the data itself. SAP's strength lies in its vast repository of enterprise data--financial ledgers, inventory information, customer interactions. AI's power is amplified when it can harmonize this internal data with external sources to create a unified, semantically rich view. This harmonized data layer is the bedrock upon which AI-driven insights and actions are built, enabling more accurate predictions and more intelligent automation.

The Scale of AI: From POCs to Enterprise Reality

While the potential of AI is vast, Herzig emphasizes that the most significant engineering challenge is not the AI models themselves, but teaching them to operate reliably and effectively at enterprise scale. The ease of building a proof-of-concept chatbot on a few documents is misleading. The reality for companies like SAP, serving hundreds of thousands of customers with complex, interconnected systems, is far more demanding.

Scaling AI means personalizing responses to an individual's role, location, and data context--for instance, answering a travel policy question differently for a US employee than for a German one. That requires deep integration with master data and a sophisticated understanding of context. Connecting AI agents to the thousands of APIs that an enterprise platform like SAP exposes is a similar hurdle: with so many interconnected systems, handing an agent every available API leads to context bloat and performance issues, so robust orchestration and disambiguation logic are needed.
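The two scaling problems described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not SAP's implementation: the `Employee` record, the policy texts, the API catalog, and the word-overlap ranking are all hypothetical stand-ins for master data lookups and a real retrieval step.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Employee:
    name: str
    country: str  # in a real system this would come from HR master data
    role: str


# Hypothetical policy snippets keyed by country; illustrative values only.
TRAVEL_POLICIES = {
    "US": "Book economy class for flights under 6 hours.",
    "DE": "Prefer rail for domestic trips under 4 hours.",
}
DEFAULT_POLICY = "Contact your local travel desk."


def policy_context(employee: Employee) -> str:
    """Pick the policy text that should ground the answer for this employee."""
    return TRAVEL_POLICIES.get(employee.country, DEFAULT_POLICY)


# Hypothetical API catalog: tool name -> short description.
API_CATALOG = {
    "create_sales_order": "create a new sales order for a customer",
    "get_travel_policy": "look up travel policy rules for an employee",
    "post_journal_entry": "post a journal entry to the general ledger",
    "get_inventory_level": "read current inventory stock level for a material",
}


def shortlist_tools(query: str, catalog: dict, k: int = 2) -> list:
    """Rank APIs by word overlap with the query and keep only the top k.

    Passing a short list to the agent, rather than the whole catalog,
    is one simple way to limit context bloat.
    """
    query_words = set(query.lower().split())
    scored = []
    for name, description in catalog.items():
        overlap = len(query_words & set(description.lower().split()))
        if overlap > 0:
            scored.append((overlap, name))
    # Highest overlap first; ties broken alphabetically for determinism.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored[:k]]


german_employee = Employee(name="Anna", country="DE", role="engineer")
context = policy_context(german_employee)
tools = shortlist_tools("what is the travel policy for an employee", API_CATALOG, k=1)
```

A production system would likely replace the word-overlap score with embedding search or a routing model, but the shape stays the same: resolve the user's context first, then expose only the few tools relevant to the request.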

"The problem or why does agentic coding works so well, Sarah, is of course you can verify the outcome. You can either say, 'Hey, the program is compiling,' or that are your unit tests, right? Does it work etc. And of course combined with a little bit of taste and a lot of hard engineering work and OpenAI built these phenomenal code generation models. The problem is if you now want to build a reliable outcome in finance and so on, you need the data that say, 'Hey, with this input, that's the output,' in order so that the coding agent can validate that and assert that against the reliable outcome."

-- Philipp Herzig

This leads to the critical importance of verifiability and what Herzig terms "agent mining." Code generation works well because its output can be checked by a compiler or by unit tests; enterprise AI in domains like finance needs equivalent input-output examples to validate against. That requires developers to define precise boundary conditions and to ensure security, data privacy, and code maintainability. Test-driven development, once unpopular, is resurfacing as essential for AI. By recording decision traces and user inputs (the "agent mining" process), companies can identify anomalies, elevate successful patterns to standard operating procedures, and create a data flywheel that continuously improves AI performance and reliability. This meticulous attention to detail and validation, while less glamorous than rapid prototyping, is what builds durable, scalable AI solutions.
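As a rough sketch of what validation against known outcomes and "agent mining" could look like in practice, the example below checks an agent against golden input-output pairs and counts the step sequences users accepted. The `Trace` structure, the `toy_agent` rule, and the step names are hypothetical and chosen only for illustration.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Trace:
    """One recorded agent run: input, steps taken, output, and user verdict."""
    user_input: str
    steps: tuple
    output: str
    accepted: bool  # did the user accept the result?


def validate(agent, golden_cases):
    """Return every golden case where the agent's output differs from the expected one."""
    failures = []
    for given, expected in golden_cases:
        actual = agent(given)
        if actual != expected:
            failures.append((given, expected, actual))
    return failures


def mine_patterns(traces, min_support=2):
    """Count accepted step sequences; frequent ones are candidates for standard procedures."""
    counts = Counter(t.steps for t in traces if t.accepted)
    return [(steps, n) for steps, n in counts.most_common() if n >= min_support]


def toy_agent(invoice_amount):
    """Hypothetical stand-in for an agent: approve small invoices, escalate the rest."""
    return "approve" if invoice_amount < 1000 else "escalate"


# Golden cases play the role unit tests play for code generation.
GOLDEN = [(500, "approve"), (1000, "escalate"), (5000, "escalate")]
failures = validate(toy_agent, GOLDEN)

TRACES = [
    Trace("invoice 1", ("match_po", "check_tolerance", "post"), "posted", True),
    Trace("invoice 2", ("match_po", "check_tolerance", "post"), "posted", True),
    Trace("invoice 3", ("match_po", "post"), "posted", False),
    Trace("invoice 4", ("match_po", "manual_review"), "parked", True),
]
patterns = mine_patterns(TRACES)
```

Here `failures` is empty because the toy rule satisfies all three golden cases, and `patterns` contains only the sequence that was accepted at least twice. The rejected trace is the kind of anomaly a team would review by hand.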

Beyond LLMs: The Durable Advantage of Predictive Models

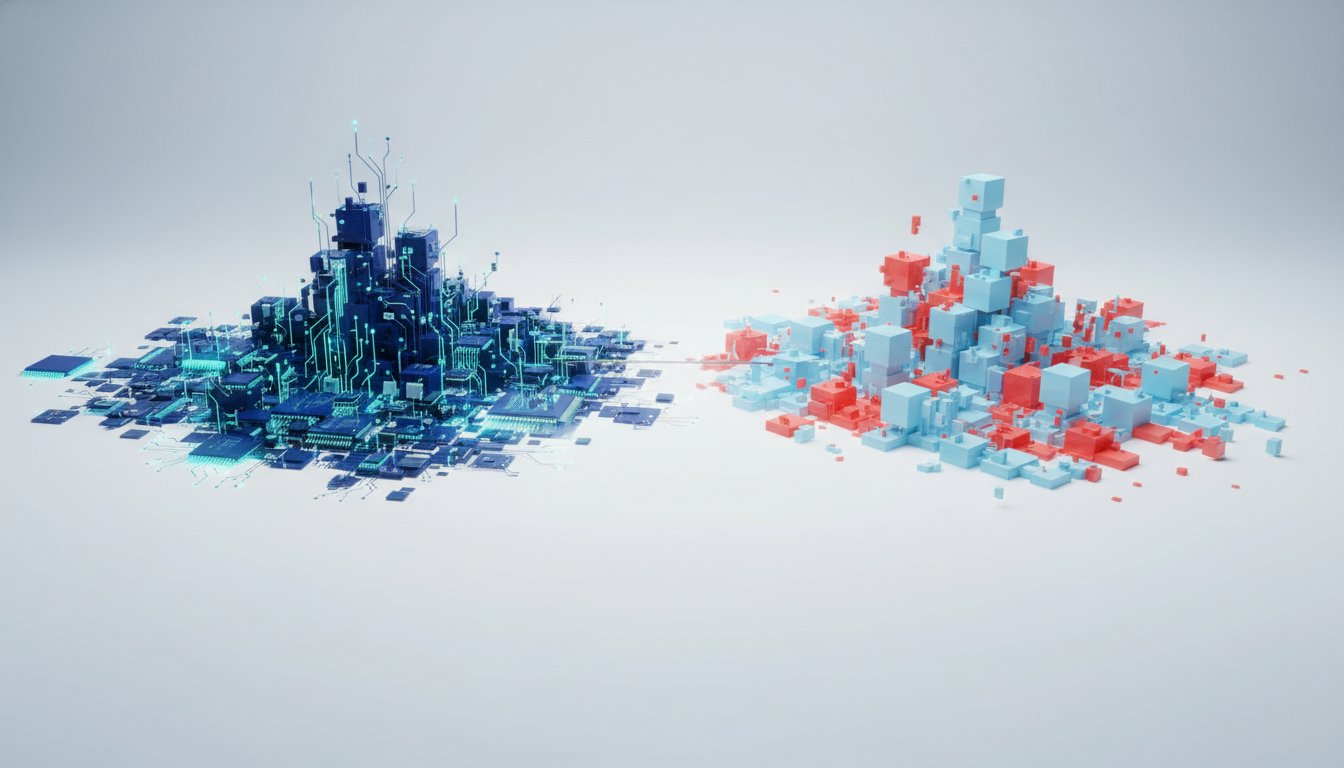

A significant point of divergence from the dominant AI narrative is SAP's focus on predictive and tabular models, rather than relying solely on Large Language Models (LLMs). Herzig argues that while LLMs excel at handling unstructured data, they are fundamentally ill-suited for the predictive tasks crucial for business planning and decision-making.

Predicting demand, forecasting cash flow, or assessing payment delays requires analyzing structured, tabular data with numerous input variables. LLMs, with their token-by-token generation, are not designed for the nuanced classification and regression tasks inherent in these predictions. While classical machine learning approaches like XGBoost exist, they often fail to scale in enterprise environments, requiring specialized data scientists and the manual training of hundreds or thousands of models across different regions and product lines.
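To make the scaling problem concrete, here is a minimal sketch of the classical per-segment approach. A hand-written least-squares line stands in for a real learner such as XGBoost, and the rows are made-up demand figures. The point is only that the number of trained models grows with the number of region and product combinations.

```python
from collections import defaultdict


def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def fit_per_segment(rows):
    """Train one model per (region, product) pair, as in the classical setup."""
    grouped = defaultdict(lambda: ([], []))
    for region, product, month, demand in rows:
        xs, ys = grouped[(region, product)]
        xs.append(month)
        ys.append(demand)
    return {segment: fit_line(xs, ys) for segment, (xs, ys) in grouped.items()}


def forecast(models, segment, month):
    """Predict demand for a segment using that segment's own model."""
    slope, intercept = models[segment]
    return slope * month + intercept


# Illustrative rows: (region, product, month, demand). Values are invented.
ROWS = [
    ("US", "widget", 1, 100.0), ("US", "widget", 2, 110.0), ("US", "widget", 3, 120.0),
    ("DE", "widget", 1, 50.0), ("DE", "widget", 2, 55.0), ("DE", "widget", 3, 60.0),
]
models = fit_per_segment(ROWS)
us_month_4 = forecast(models, ("US", "widget"), 4)
```

Two segments already mean two models to train, monitor, and retrain. With hundreds of regions and product lines, that count becomes the maintenance burden described above.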

SAP's research into "Relational Pre-trained Transformers" (RPT1) aims to bridge this gap. By adapting the transformer architecture for structured, relational data, SAP seeks to democratize predictive analytics. The goal is to enable users to achieve high-accuracy predictions with less data and specialized expertise, simply by providing context and a small amount of relevant data.

"Now the problem is of course still today, if we look at these predictive questions, and then you want to maybe do what if analysis from it. If you want to do these predictions, quite frankly, then the challenge is large language models are not made for this. The way how they, you know, generate just one token after another essentially in a sequence to sequence modeling. I mean, they're language models, so that and they do this phenomenally well. But if you still want to do these predictors, you have to go back to these classical machine learning approaches."

-- Philipp Herzig

This focus on robust, scalable predictive capabilities represents a potential competitive advantage. By making sophisticated forecasting and analysis accessible, SAP empowers its customers to move beyond reactive problem-solving to proactive strategic planning. This is where the "outcome race" truly begins, differentiating companies that can leverage AI for tangible business improvements from those merely experimenting with new technologies. The challenges of data fragmentation, security, and integration remain significant barriers to enterprise AI adoption, but those who successfully navigate them, particularly by mastering the structured data domain, will unlock substantial value.

Key Action Items

Immediate Action (0-3 months):

- Assess Data Readiness: Conduct an audit of existing data sources, identifying fragmentation and harmonization needs. Prioritize cleaning and structuring key datasets for AI consumption.

- Pilot Generative UI for Internal Tools: Explore implementing generative UI features for internal productivity tools (e.g., reporting, knowledge retrieval) to gain early experience and demonstrate value.

- Define "Outcome Metrics" for AI Projects: For any upcoming AI initiatives, clearly define the specific business outcomes and KPIs that success will be measured against, moving beyond technical performance.

Short-Term Investment (3-12 months):

- Invest in Agent Mining Infrastructure: Begin building or adopting tools to capture and analyze agent decision traces and user interactions, creating a feedback loop for AI improvement.

- Explore Predictive Model Applications: Identify 1-2 critical business areas (e.g., demand forecasting, cash flow prediction) where current predictive capabilities are lacking and pilot RPT1 or similar models.

- Develop Internal AI Governance Framework: Establish clear guidelines for AI usage, focusing on security, data privacy, and ethical considerations, particularly concerning sensitive enterprise data.

Long-Term Investment (12-18+ months):

- Re-engineer Core Business Processes with AI Agents: Systematically review and redesign key end-to-end business processes, integrating AI agents to automate tasks and enhance decision-making.

- Transition to Hybrid/Consumption-Based Pricing Models: Prepare for and pilot a shift towards more consumption-based pricing for AI-driven services, balancing customer predictability with vendor scalability.

- Foster a "Strategic Thinking" Culture: Actively encourage and train employees to leverage AI-augmented insights for higher-level strategic planning and innovation, moving away from operational execution.

- Invest in Cross-Functional AI Literacy: Ensure teams across different departments understand the capabilities and limitations of AI, fostering collaboration between business users and technical AI teams.