AI Materials Discovery Bottlenecked by Synthesis and Testing

The AI materials discovery field is awash in funding and promise, yet it is grappling with a significant disconnect between virtual potential and physical reality. While AI can rapidly generate millions of theoretical material structures, the true bottleneck, and the source of hidden consequences, lies in the arduous, expensive, and time-consuming process of actual synthesis and real-world testing. This conversation reveals that the "ChatGPT moment" for materials science is elusive because the tangible creation of materials, not just their conceptualization, is where the real challenge and opportunity reside. Anyone involved in deep tech innovation, R&D strategy, or venture capital should read this to understand where the true value lies in AI-driven scientific discovery: bridging the gap between simulation and the lab, and recognizing that the hardest problems often yield the most durable competitive advantages.

The Tangible Chasm: Why Virtual Wins Aren't Enough

The narrative surrounding AI in materials discovery is dominated by the dazzling potential of generative models to predict millions of novel compounds. DeepMind’s announcement of 380,000 stable crystals, for instance, painted a picture of imminent breakthroughs. However, this focus on theoretical discovery overlooks a critical, and often underestimated, downstream consequence: the immense difficulty and cost of actually making these materials in the physical world. As the transcript highlights, "structure helps us think about the problem but it's neither necessary nor sufficient for real materials problems." This gap between computational prediction and laboratory validation is the central bottleneck, a stark reminder that scientific progress is not solely a function of computational power.

The initial excitement, fueled by successes in fields like protein folding with AlphaFold 2 and the general-purpose utility of LLMs like ChatGPT, led to a flood of investment into startups aiming to automate scientific discovery. Yet, the expected "eureka moment" has not materialized. The reason, according to the discussion, is that the most time-consuming and expensive phase is not ideation, but synthesis and testing. This reality complicates the narrative of rapid AI-driven progress, revealing that the "ChatGPT moment" for materials science requires not just clever algorithms, but a fundamental re-engineering of experimental processes. The consequence of focusing solely on virtual discovery is a pipeline filled with theoretical possibilities but lacking in tangible, testable outcomes, leading to a disconnect between investor expectations and actual progress.

"By far the most time consuming and expensive step in materials discovery is not imagining new structures but making them in the real world before trying to synthesize a material you don't know if in fact it can be made and is stable and many of its properties remain unknown until you test it in the lab."

This statement underscores the core challenge. The immediate payoff of AI is in generating ideas, which feels productive and aligns with the speed of software development. The delayed payoff, however, comes from the laborious process of physical validation. Startups that focus primarily on computational discovery risk building impressive theoretical models that may never translate into practical applications. This delay creates a competitive advantage for those who can effectively bridge the virtual-physical divide, as the effort required is substantial and the payoff is distant, deterring many. Conventional wisdom, which often prioritizes rapid prototyping and visible progress, fails here because the critical path involves patience and investment in physical infrastructure and processes that do not yield immediate results.

The Unseen Complexity of Physical Validation

The critique of DeepMind's material discovery announcement serves as a crucial case study in the limitations of purely computational approaches. While the AI identified millions of potential crystal structures, scientists noted that many were not truly novel, or were simulated under conditions (like absolute zero) that do not exist in the real world. The implication is that AI models, trained on existing literature and data, can extrapolate and combine known principles but struggle with emergent properties or the nuanced realities of physical systems.

"The deepmind research not only offered a gold mine of possible new materials it also created powerful new computational methods for predicting a large number of structures but some materials scientists had a far different reaction after closer scrutiny researchers at the university of california santa barbara said they'd found scant evidence for compounds that fulfill the trifecta of novelty credibility and utility in fact the scientists reported they didn't find any truly novel compounds among the ones they looked at some or merely trivial variations of known ones"

This highlights a second-order negative consequence: AI-generated "discoveries" can be dismissed or invalidated under rigorous physical scrutiny, eroding credibility and wasting resources. Scientific discovery works as a system that needs validation as well as prediction; when validation is difficult, or when simulations misrepresent real conditions, the entire system falters. The perceived advantage of generating vast numbers of candidates dissolves when those candidates prove difficult to synthesize, unstable, or of little practical use. The real advantage lies in AI that can guide the physical process, learning from experimental outcomes to refine subsequent attempts, rather than simply generating theoretical lists.

The difficulty in automating solid-state synthesis, as opposed to liquid handling in drug discovery, further illustrates this point. Preparing and processing powders, controlling temperature and pressure, and accurately characterizing the resulting materials are complex physical processes that are harder to automate than many computational tasks. Startups like Lila Sciences and Periodic Labs are attempting to tackle this by integrating AI with automated labs, aiming to create a feedback loop between computation and experimentation. This approach, however, requires significant investment in physical infrastructure and a deep understanding of experimental science, a far cry from the software-centric development cycles common in other AI fields. The delayed payoff for this investment, a truly autonomous, AI-driven materials discovery pipeline, is precisely what creates a potential moat, as it demands a level of effort and integration that is difficult to replicate quickly.
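The feedback loop between computation and experimentation can be sketched in a few lines of Python. This is a toy illustration only, not how any of the startups named here actually work: `run_synthesis` is a hypothetical stand-in for a real experiment, its hidden optimum of 850 °C is invented, and `propose_next` uses a deliberately simple "perturb the best result so far" rule in place of a learned model.

```python
import random


def run_synthesis(temperature_c: float) -> float:
    """Hypothetical stand-in for a lab run: returns a made-up purity in [0, 1].

    The hidden optimum (850 C) is illustrative, not real chemistry.
    """
    return max(0.0, 1.0 - ((temperature_c - 850.0) / 400.0) ** 2)


def propose_next(history, rng, low=400.0, high=1200.0, step=60.0):
    """Pick the next temperature: explore at random first, then refine the best."""
    if len(history) < 3:
        return rng.uniform(low, high)
    best_temp, _ = max(history, key=lambda record: record[1])
    candidate = best_temp + rng.uniform(-step, step)
    return min(high, max(low, candidate))  # stay inside the furnace's range


def closed_loop(budget: int, seed: int = 0):
    """Alternate between proposing a condition and 'measuring' its outcome."""
    rng = random.Random(seed)
    history = []  # list of (temperature, purity) pairs
    for _ in range(budget):
        temperature = propose_next(history, rng)
        purity = run_synthesis(temperature)  # the slow, costly step in reality
        history.append((temperature, purity))
    return history


if __name__ == "__main__":
    results = closed_loop(budget=20)
    best_temp, best_purity = max(results, key=lambda record: record[1])
    print(f"best temperature: {best_temp:.0f} C, purity: {best_purity:.2f}")
```

In this sketch the proposal step takes microseconds, while each call to `run_synthesis` stands for what would be hours or days of furnace time and characterization in a real lab. The number of experiments the budget allows, not the number of ideas generated, limits progress.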

The Long Game: Building Durability Through Effortful Science

The pursuit of materials like room-temperature superconductors exemplifies the long-term, high-stakes nature of materials science, and the limitations of current AI in accelerating such grand challenges. Decades of research have yielded only incremental progress, partly because no overarching theory predicts high-temperature superconductivity, making experimental synthesis and testing the primary, albeit slow, path to discovery. This is where the narrative shifts from immediate AI capabilities to the enduring value of foundational scientific work, augmented by AI.

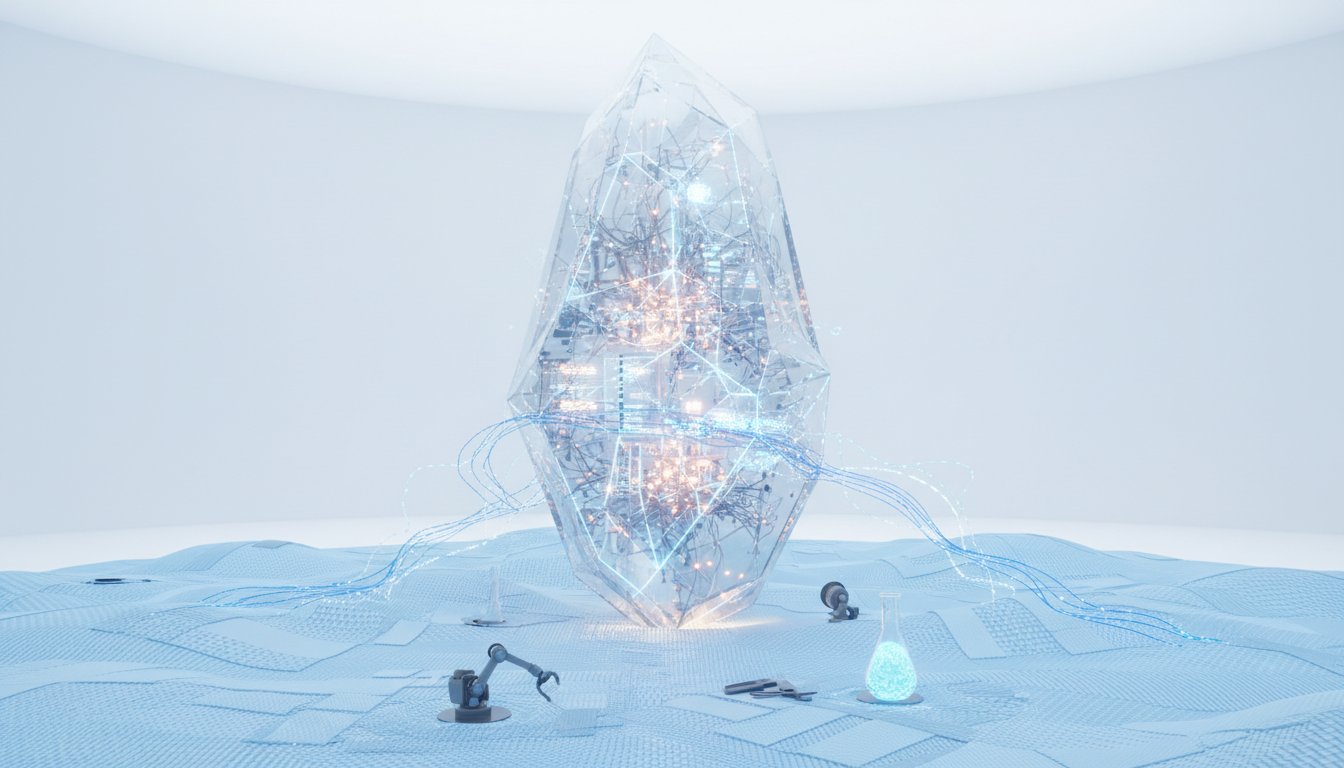

The startups discussed are not just building AI models; they are building "AI scientists" capable of performing simulations, conducting experiments, and interpreting results. This vision, while ambitious, recognizes that true scientific advancement requires more than pattern recognition; it requires understanding and agency within the physical world. The LLM as an "Orchestrator" or "head honcho" suggests a hierarchical AI system that can manage complex experimental workflows. This is a significant undertaking, requiring the integration of diverse AI agents and a deep understanding of scientific expertise, including "diffuse knowledge" gained from years of experience.
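One way to picture an orchestrator coordinating specialist agents is the minimal Python sketch below. It is an assumption-laden illustration and not a description of any company's actual system: the `Orchestrator` and `Task` classes, the three agent functions, and the fixed plan are all hypothetical, and the step where an LLM would decide what to do next is replaced by a hard-coded list of tasks.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Agent = Callable[[dict], dict]


@dataclass
class Task:
    kind: str  # which specialist agent should handle this step
    payload: dict = field(default_factory=dict)


@dataclass
class Orchestrator:
    agents: Dict[str, Agent] = field(default_factory=dict)
    log: List[str] = field(default_factory=list)

    def register(self, kind: str, agent: Agent) -> None:
        self.agents[kind] = agent

    def run(self, plan: List[Task]) -> dict:
        """Dispatch each task to its agent and accumulate the results."""
        context: dict = {}
        for task in plan:
            if task.kind not in self.agents:
                raise KeyError(f"no agent registered for {task.kind!r}")
            result = self.agents[task.kind]({**context, **task.payload})
            context.update(result)
            self.log.append(task.kind)
        return context


# Hypothetical stand-ins for simulation, lab, and analysis agents.
def simulate(ctx: dict) -> dict:
    return {"predicted_stable": ctx["formula"] != ""}


def synthesize(ctx: dict) -> dict:
    # A real agent would drive lab hardware; here it simply echoes the prediction.
    return {"synthesized": ctx["predicted_stable"]}


def interpret(ctx: dict) -> dict:
    return {"verdict": "follow up" if ctx["synthesized"] else "discard"}


def build_orchestrator() -> Orchestrator:
    orchestrator = Orchestrator()
    orchestrator.register("simulate", simulate)
    orchestrator.register("synthesize", synthesize)
    orchestrator.register("interpret", interpret)
    return orchestrator


if __name__ == "__main__":
    plan = [Task("simulate", {"formula": "ABX3"}), Task("synthesize"), Task("interpret")]
    print(build_orchestrator().run(plan))
```

The design choice worth noting is that each agent only sees and returns plain dictionaries, so a simulated lab agent could in principle be swapped for one that controls real equipment without changing the dispatch logic.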

"The AI revolution is about finally gathering all the scientific data we have. Last summer, Seider became the chief science officer at an AI materials discovery startup called Radical AI and took a sabbatical from the University of California Berkeley to help set up its self-driving labs in New York City. A slide deck shows the portfolio of different AI agents and generative models meant to help realize Seider's vision. If you look closely, you can spot an LLM called the orchestrator. It's what CEO Joseph Kraus calls the head honcho."

This quote points to a strategic advantage: leveraging AI to process the overwhelming volume of scientific literature, a task impossible for humans. This ability to synthesize vast amounts of information is a key differentiator, providing AI with a broader knowledge base than any individual scientist. However, the ultimate success hinges on translating this knowledge into tangible discoveries. The "ideal" scenario described by investor Susan Schofer, responding to an industry-defined problem with a scaled-up, proven new material and a clear revenue model, highlights the commercial imperative. This requires not just invention, but also a robust business strategy and manufacturing partnerships. The startups' current stage, setting up labs and beginning manual synthesis, underscores that the journey from AI concept to commercial material is long and requires significant upfront investment. The advantage here is for those who can demonstrate a clear path through this difficult terrain, proving that their AI-guided approach can deliver real-world value, even if it takes years.

Key Action Items

- Invest in physical lab infrastructure: Prioritize building and automating experimental synthesis and testing capabilities, not just computational models. (Immediate: Next 6-12 months)

- Develop AI agents for experimental guidance: Focus on AI that can learn from real-time experimental data to suggest modifications to recipes and synthesis conditions. (Longer-term investment: 12-24 months payoff)

- Integrate LLMs for scientific literature synthesis: Utilize LLMs to process the vast corpus of scientific papers, identifying overlooked connections and potential research avenues. (Immediate: Next 3-6 months)

- Define clear industry problems for material solutions: Proactively engage with potential industrial partners to identify specific material needs that AI can address, rather than solely pursuing theoretical discovery. (Immediate: Next 6 months)

- Focus on translating theoretical candidates to tangible prototypes: Allocate resources to rigorously synthesize and test AI-identified materials, even if the process is slow and expensive. This is where true differentiation occurs. (Ongoing: This pays off in 18-36 months)

- Build multi-disciplinary teams: Ensure teams comprise not only AI experts but also seasoned materials scientists and experimentalists who understand the nuances of physical synthesis and characterization. (Immediate: Build over next quarter)

- Embrace the long payoff cycle: Recognize that AI-driven materials discovery is a marathon, not a sprint. Commit to the multi-year development timelines required for physical validation and commercialization, understanding this difficulty creates a durable competitive advantage. (Strategic mindset shift: Immediate and ongoing)