NVIDIA's Extreme Co-Design: Orchestrating AI Ecosystems for Competitive Advantage

The $4 Trillion Company: Jensen Huang on NVIDIA's Unprecedented AI Strategy

Jensen Huang, CEO of NVIDIA, argues in his conversation with Lex Fridman that the company's ascent to becoming the world's most valuable company isn't just about superior hardware; it reflects a radical, systems-level approach to engineering and business strategy. The non-obvious implication is that competitive advantage in the AI era comes not from optimizing individual components but from orchestrating an entire ecosystem, from silicon to data center, with a relentless focus on the future. For anyone building or investing in technology, the conversation offers insight into how to anticipate and shape market dynamics rather than simply react to them. Understanding NVIDIA’s “extreme co-design” philosophy can give leaders a strategic edge in navigating AI infrastructure and its cascading effects.

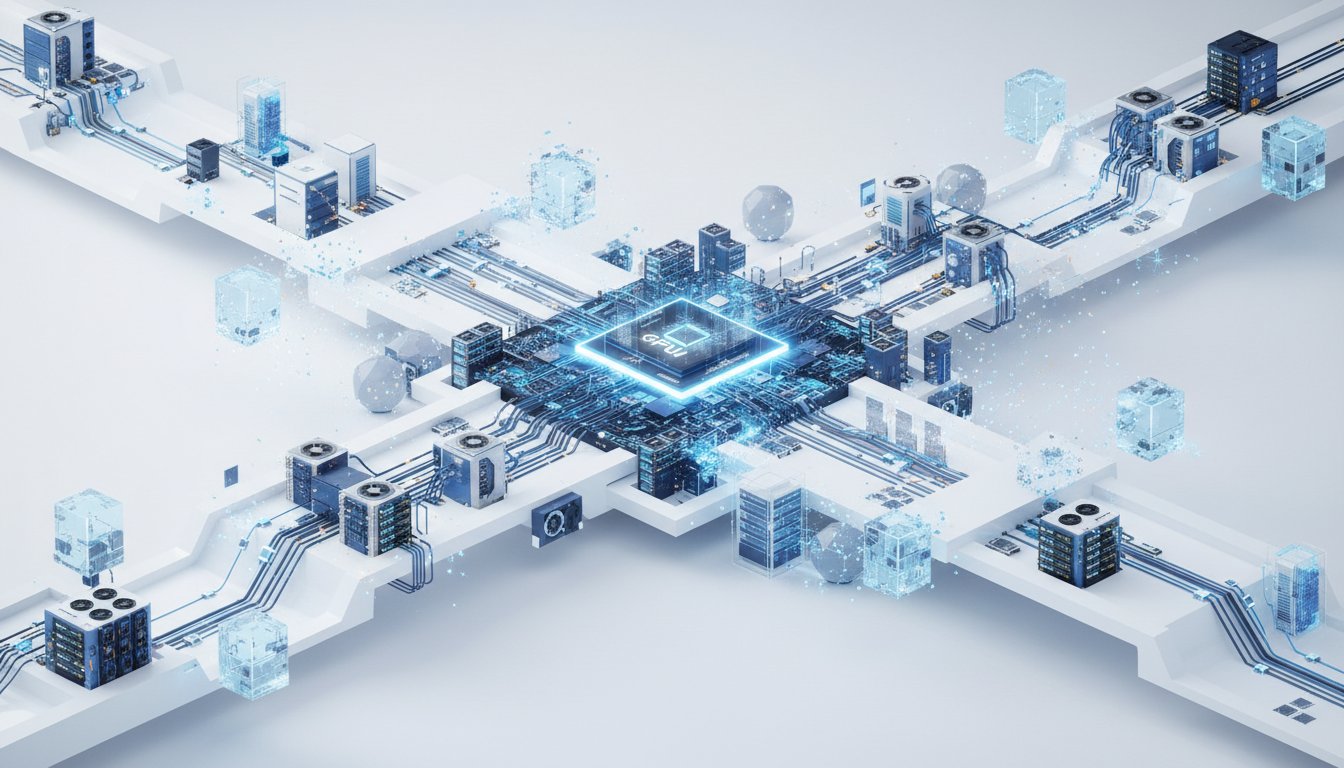

The Architecture of an AI Factory: Beyond the Chip

NVIDIA's journey from a GPU manufacturer to the architect of AI factories is a masterclass in systems thinking. The shift from optimizing individual chips to co-designing entire racks and data centers is driven by a fundamental realization: AI problems, at scale, simply do not fit within a single computer. As Jensen Huang explains, the goal is a speedup that far exceeds what adding more computers alone would deliver. Amdahl's Law shows why this is hard: the portion of a workload that cannot be parallelized caps the overall speedup, so algorithms must be broken down, pipelines sharded, and networking and data distribution treated as first-class problems. This requires a holistic approach in which every component (CPU, GPU, memory, networking, power, and cooling) is designed in concert.
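To make the Amdahl's Law point concrete, here is a minimal Python sketch of the standard formula, speedup = 1 / ((1 - p) + p / n), where p is the fraction of work that parallelizes and n is the number of workers. The function name and the sample numbers are illustrative assumptions, not figures from the interview.

```python
def amdahl_speedup(parallel_fraction: float, workers: int) -> float:
    """Ideal speedup under Amdahl's Law.

    parallel_fraction: share of the work (0.0 to 1.0) that scales across workers.
    workers: number of identical workers (for example, GPUs). Hypothetical input.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / workers)


# Illustrative only: even with 95% of the work parallelized,
# 1000 workers give less than a 20x speedup, because the serial 5% dominates.
print(round(amdahl_speedup(0.95, 1000), 2))
```

Under these assumed numbers the ceiling is 1 / 0.05 = 20x no matter how many workers are added, which is why shrinking the serial and communication-bound portions matters more than adding machines.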

"When you're designing a computer, you have to have an operating system of computers. When you're designing a company, you should first think about what is it that you want the company to produce."

This quote encapsulates Huang’s philosophy: the company's structure must mirror the complexity of its product. His direct staff of 60, made up of experts across various domains, exemplifies this. Instead of holding traditional one-on-ones, the group attacks problems collaboratively, so that every design decision is scrutinized through multiple lenses. This "extreme co-design" isn't limited to hardware; it extends to software, algorithms, and applications, creating a feedback loop in which the entire stack is optimized simultaneously. This approach allows NVIDIA to anticipate the evolving needs of AI research and development, a critical advantage in a field where model architectures can change rapidly.

The Strategic Bet on Install Base: CUDA's Enduring Legacy

Huang’s decision to put CUDA on GeForce GPUs, despite significant financial strain, stands as a pivotal moment. The rationale was not merely technological superiority but a deep understanding of what makes an architecture succeed: install base.

"The architecture could attract enormous amounts of criticism. For example, no architecture has ever attracted more criticism than the x86... But yet, it is the defining architecture of today. It gives you an example that, in fact, so many RISC architectures, which were beautifully architected, incredibly well designed... largely failed. And so I've given you two examples where one is, you know, one's elegant, the other one's barely aesthetic. And so yet x86 survived. And the reason is install base."

By making CUDA ubiquitous on millions of consumer GPUs, NVIDIA cultivated a massive developer ecosystem. This created a powerful network effect: developers invested in CUDA, knowing their software would reach a vast audience and benefit from continuous improvement. This strategic foresight, prioritizing reach and developer adoption over short-term profits, laid the foundation for NVIDIA's dominance in accelerated computing. It highlights how a long-term vision, even when met with skepticism, can yield profound competitive advantages.

The Evolution of AI Scaling: From Data to Compute to Agents

Huang outlines four key scaling laws that chart the trajectory of AI advancement. Pre-training was initially limited by data; with the rise of synthetic data, compute has become the primary bottleneck. The next critical phase is test-time scaling, meaning compute spent at inference, which Huang argues is computationally intensive, contrary to the early belief that inference would be "easy."

The most profound insight is the emergence of the "agentic scaling law." As AI systems become more capable of using tools, querying databases, and spawning sub-agents, the complexity and scale of computation required will skyrocket. This concept of AI multiplying AI, creating large teams of intelligent agents, fundamentally reshapes the demand for compute. It underscores that the future of AI is not just about larger models but about sophisticated, interconnected AI systems operating at an unprecedented scale. This foresight allows NVIDIA to design hardware and software that anticipates these future demands, building a moat based on future-proofing its ecosystem.
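A rough way to see why sub-agents multiply compute demand is to count model calls in a tree of agents. The sketch below is a toy model under stated assumptions: every agent spawns the same number of sub-agents (`fanout`) down to a fixed `depth`, and every call costs the same number of tokens. None of these parameters or numbers come from the interview.

```python
def total_agent_calls(fanout: int, depth: int) -> int:
    """Count agents in a uniform tree: 1 root, then `fanout` children per agent per level."""
    if fanout < 0 or depth < 0:
        raise ValueError("fanout and depth must be non-negative")
    # Level 0 has 1 agent, level 1 has fanout agents, level 2 has fanout**2, and so on.
    return sum(fanout ** level for level in range(depth + 1))


def total_tokens(fanout: int, depth: int, tokens_per_call: int) -> int:
    """Total tokens if every agent makes one call of `tokens_per_call` tokens (an assumption)."""
    return total_agent_calls(fanout, depth) * tokens_per_call


# Hypothetical example: each agent spawns 3 sub-agents, 3 levels deep.
print(total_agent_calls(3, 3))   # 1 + 3 + 9 + 27 = 40 agents
print(total_tokens(3, 3, 2000))  # 40 * 2000 = 80000 tokens
```

In this toy model a single request fans out into 40 model calls, so demand grows geometrically with depth rather than linearly with the number of users.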

Future Blockers and the Power of "Extreme Co-Design"

While power consumption is a significant concern, Huang emphasizes that extreme co-design is NVIDIA’s strategy to mitigate it, aiming for orders-of-magnitude improvements in tokens per second per watt. He also addresses supply chain complexities, not as insurmountable blockers, but as areas requiring deep collaboration and trust with partners like TSMC and ASML. His proactive engagement with CEOs across the IT industry, informing their investment strategies and shaping the supply chain, demonstrates a systems-level approach to problem-solving.
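The tokens-per-second-per-watt metric can be read as tokens per joule, since one watt is one joule per second. A minimal sketch of the arithmetic follows, assuming a fixed facility power budget; the function name and all numbers are hypothetical and chosen only to show the proportionality.

```python
def tokens_per_second(power_budget_watts: float, tokens_per_second_per_watt: float) -> float:
    """Throughput of a power-limited facility: efficiency multiplied by available power."""
    if power_budget_watts < 0 or tokens_per_second_per_watt < 0:
        raise ValueError("inputs must be non-negative")
    return power_budget_watts * tokens_per_second_per_watt


# Hypothetical 1 MW budget. Efficiency values are made up for illustration.
budget_watts = 1_000_000.0
baseline = tokens_per_second(budget_watts, 2.0)   # 2 tokens/s per watt
improved = tokens_per_second(budget_watts, 20.0)  # a 10x efficiency gain

print(baseline)             # 2000000.0
print(improved / baseline)  # 10.0
```

With power held constant, a tenfold efficiency gain yields ten times the tokens from the same facility, which is why the metric matters when power is the limiting resource.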

"Our single most important property as a company is the installed base of our computing platform. Our single most important thing [...] is the installed base of CUDA."

This quote reinforces that NVIDIA's moat is not solely technological but deeply rooted in its ecosystem and developer community, built over decades. The ability to influence and align the entire supply chain, from raw materials to end-user applications, is a testament to NVIDIA’s strategic depth.

Key Action Items

- Embrace Systems Thinking: When evaluating any technological solution, consider its downstream effects across the entire ecosystem, not just immediate benefits.

- Prioritize Install Base: For platform technologies, invest heavily in developer adoption and ecosystem growth, as this creates a durable competitive advantage.

- Anticipate Future Compute Needs: Design for the next generation of AI--agents, complex interactions, and massive data processing--rather than just current workloads.

- Foster Deep Ecosystem Partnerships: Cultivate trust and collaborate closely with suppliers and customers to co-design solutions and shape future market demands.

- Focus on Energy Efficiency: Continuously push for improvements in performance per watt. When power is the limiting resource, tokens per watt determines how much output, and therefore revenue, a given facility can produce.

- Develop "Graceful Degradation" Architectures: Design systems that can adapt to fluctuating resource availability (like power) without catastrophic failure, maintaining essential functions.

- Become an AI Expert: For individuals and businesses, actively learn and integrate AI tools into existing workflows to enhance productivity and adapt to evolving job roles.
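
The "graceful degradation" item above can be sketched as a simple load-shedding policy: when the power budget shrinks, keep workloads in strict priority order and drop the rest. This is an illustrative sketch only; the `Workload` type, the priority scheme, and the numbers are assumptions, not a description of how NVIDIA or any data center operator does it.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Workload:
    name: str
    power_kw: int
    priority: int  # lower number = more essential (hypothetical convention)


def select_workloads(workloads: List[Workload], budget_kw: int) -> List[Workload]:
    """Keep workloads in strict priority order until the next one no longer fits."""
    kept: List[Workload] = []
    remaining = budget_kw
    for workload in sorted(workloads, key=lambda w: w.priority):
        if workload.power_kw > remaining:
            break  # shed this workload and everything less essential
        kept.append(workload)
        remaining -= workload.power_kw
    return kept


# Hypothetical workloads and budgets.
jobs = [
    Workload("batch-eval", 50, priority=2),
    Workload("serving", 40, priority=0),
    Workload("fine-tune", 30, priority=1),
]
print([w.name for w in select_workloads(jobs, 100)])  # ['serving', 'fine-tune']
print([w.name for w in select_workloads(jobs, 45)])   # ['serving']
```

The strict-priority rule is a deliberate simplification: it never runs a less essential workload while a more essential one is shed, at the cost of sometimes leaving power unused.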