Agentic Software Development: Human Attention as the Scarce Resource

The following blog post is an analysis of a podcast transcript featuring Ryan Lopopolo of OpenAI, discussing "Harness Engineering" and the future of AI-driven software development. It applies consequence mapping and systems thinking to highlight non-obvious implications and strategic advantages.

This conversation reveals the often counter-intuitive consequences of optimizing engineering workflows for AI agents rather than for human habits. It suggests a future where the bottleneck in software development shifts from computational resources to human attention, and where complex systems can be built with minimal direct human intervention. The analysis is aimed at engineering leaders, product managers, and AI practitioners who want to understand the emerging paradigm of agentic software development and adopt these shifts early.

The Ghost in the Machine: How AI Agents Are Rewriting Software Development

The conventional wisdom in software development often centers on human efficiency, developer experience, and optimizing for human comprehension. But what if the most significant gains are found by optimizing for the machine? In a recent conversation on the Latent Space podcast, Ryan Lopopolo of OpenAI detailed his team's "Harness Engineering" experiment, a radical approach that built an internal product over five months with zero human-written code and no human-reviewed code before merge. This endeavor, resulting in over a million lines of code and thousands of pull requests, offers a stark glimpse into a future where AI agents are not just co-pilots, but autonomous teammates. The implications extend far beyond mere code generation, fundamentally altering how we conceive, build, and deploy software, and revealing hidden consequences that challenge established engineering dogma.

The Unseen Costs of Human-Centric Design

The core of Lopopolo's experiment was a deliberate constraint: he refused to write any code himself. This forced the AI agent to perform the entire job end-to-end, revealing critical insights into the limitations of current models and the scaffolding needed to enable their autonomy. Early iterations were painfully slow, a common experience with nascent AI coding tools. However, the team's response to these failures was not to "try harder" with prompts, but to ask: "What capability, context, or structure is missing?" This systems-thinking mindset led to the creation of smaller, reusable building blocks and a relentless focus on shortening the "inner loop" of development, meaning the cycle an agent runs through to complete a task.

The obsession with a sub-minute build time, for instance, wasn't just about speed; it was about maintaining a fast feedback cycle for the agent. As Lopopolo explained, when build times crept up, it signaled a need to decompose the build graph further. This discipline, while seemingly tedious, created an invariant that kept agents productive.

"We want the inner loop to be as fast as possible. One minute was just a nice round number and we were able to hit it."

-- Ryan Lopopolo
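The build-time invariant described above can be pictured as a simple budget check. The following is a minimal sketch, not the team's actual tooling: the `BuildTarget` type, the 25% share threshold, and the assumption that target times simply add up are all hypothetical choices made for illustration. Only the one-minute figure comes from the conversation.

```python
from dataclasses import dataclass

# The one-minute target is from the conversation; everything else is assumed.
BUILD_BUDGET_SECONDS = 60.0


@dataclass(frozen=True)
class BuildTarget:
    name: str
    seconds: float  # measured wall-clock build time for this target


def decomposition_candidates(targets, budget=BUILD_BUDGET_SECONDS, share=0.25):
    """List targets worth splitting up, slowest first.

    Returns an empty list while the total build time is within budget.
    Once the budget is exceeded, returns every target that alone uses
    more than `share` of the budget (a hypothetical threshold).
    """
    total = sum(t.seconds for t in targets)
    if total <= budget:
        return []
    limit = budget * share
    slow = [t for t in targets if t.seconds > limit]
    return sorted(slow, key=lambda t: t.seconds, reverse=True)


targets = [
    BuildTarget("core", 40.0),
    BuildTarget("ui", 18.0),
    BuildTarget("docs", 5.0),
]
candidate_names = [t.name for t in decomposition_candidates(targets)]
```

Run on every change, a check like this turns "the build feels slow" into a concrete signal that names which part of the build graph to decompose next.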

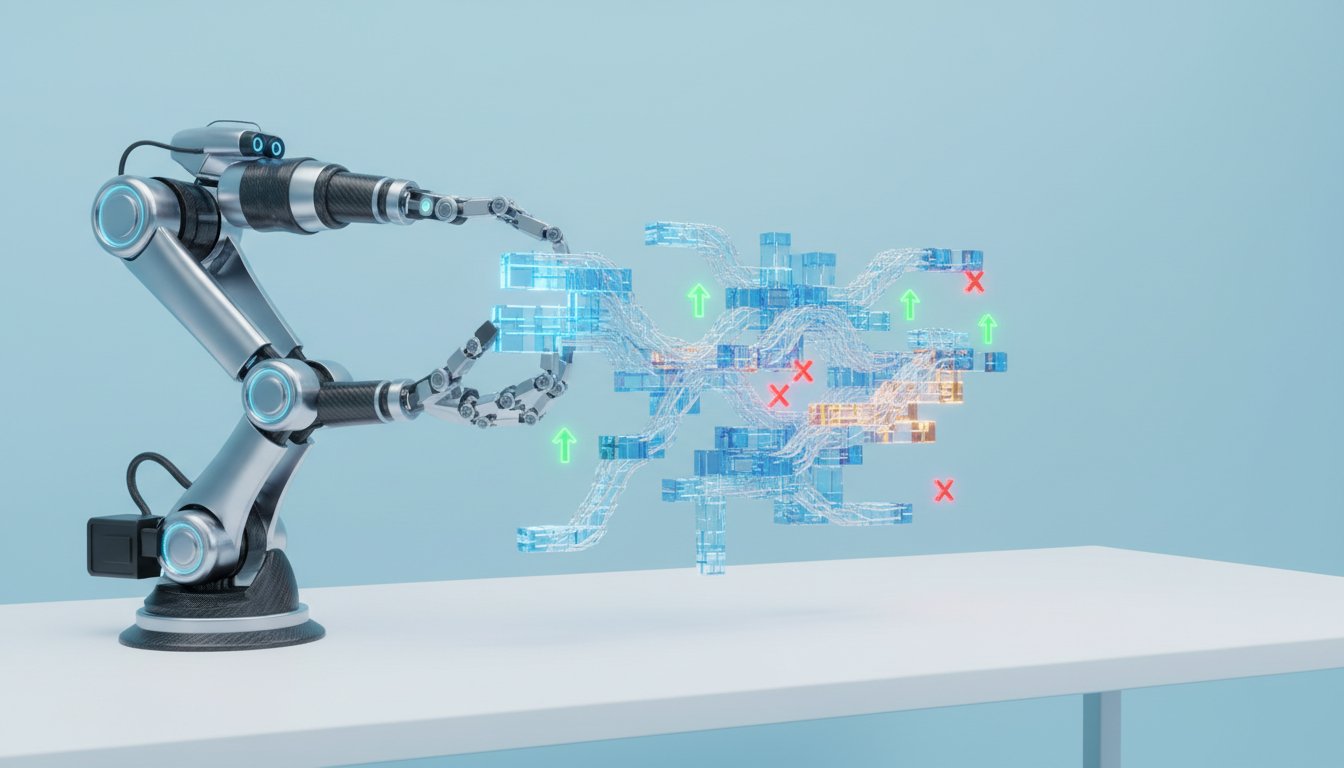

This relentless optimization for agent efficiency led to a key realization: humans, not tokens, had become the bottleneck. The scarce resource was no longer computational power but synchronous human attention, which made direct human code review on every change impractical. Instead, the team invested heavily in systems, observability, and context that allowed agents to review, fix, and merge work autonomously. This is where the non-obvious consequences emerge. By deferring human review until after merge, the team shifted its focus from the minutiae of code to the higher-level architecture and the "engineering taste" encoded into the system.

"The only fundamentally scarce thing is the synchronous human attention of my team. There's only so many hours in the day. We have to eat lunch. I would like to sleep."

-- Ryan Lopopolo

This shift created a feedback loop where the agent's mistakes--deviations from unwritten non-functional requirements--became signals for improvement. By encoding these requirements into documentation, tests, and review agents, the team could durably embed engineering knowledge, making the software more "agent-legible." This contrasts sharply with traditional development, where such knowledge is often tacit, tribal, and difficult to scale. The implication is that software increasingly needs to be written for the model as much as for the engineer, a paradigm shift that can create significant competitive advantage for those who embrace it.
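One way to picture encoding an unwritten requirement is an automated check that fails with a message an agent can act on. The sketch below is an illustration under assumptions, not something described in the conversation: the layering rule, the layer names `ui` and `storage`, and the suggested remediation are all invented for the example.

```python
import ast

# Hypothetical rule: code in the "ui" layer must not import "storage" directly.
FORBIDDEN_IMPORTS = {"ui": {"storage"}}


def imported_modules(source: str) -> set[str]:
    """Collect the top-level package names imported by some Python source."""
    names = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Absolute "from x.y import z" imports; relative imports are skipped.
            names.add(node.module.split(".")[0])
    return names


def check_layering(layer: str, source: str) -> list[str]:
    """Return one violation message per forbidden import found in `source`."""
    banned = FORBIDDEN_IMPORTS.get(layer, set())
    return [
        f"{layer} must not import {name}; go through the service layer instead"
        for name in sorted(imported_modules(source) & banned)
    ]


violations = check_layering("ui", "import storage.db\nfrom os import path\n")
```

The point of the design is that the failure message carries the rule and the fix together, so a mistake made once becomes a constraint every later agent run can read.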

The "Ghost Library" and the Future of Software Distribution

The experiment culminated in Symphony, an internal Elixir-based orchestration layer for managing multiple coding agents. This system, coupled with the concept of "ghost libraries," represents a radical departure in how software can be built and distributed. A ghost library is essentially a high-fidelity specification from which a coding agent can reproduce a complex system. The process involves agents generating a spec, implementing it, reviewing it against upstream, and updating the spec--a recursive loop until fidelity is achieved. This approach decouples the software from specific human implementation choices, making it more adaptable and distributable.

"This is like a such a cool name. It does mean it becomes much cheaper to share software with the world, right? You define a spec, how you could build your own specifying as much as is required for a coding agent to reassemble it locally."

-- Ryan Lopopolo
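The spec, implement, review, and update cycle behind a ghost library can be sketched as a loop that stops once a review finds no remaining gaps. This is a minimal sketch under assumptions: the function names, the round limit, and the toy stand-ins for the agent steps are hypothetical, and in practice each step would be performed by a coding agent rather than a few lines of Python.

```python
from typing import Callable

# Toy stand-in for the upstream system's observable behaviors (hypothetical).
UPSTREAM_BEHAVIORS = {"parse", "render", "cache"}


def refine_spec(
    spec: str,
    implement: Callable[[str], set],
    review: Callable[[set], list],
    revise: Callable[[str, list], str],
    max_rounds: int = 5,
) -> tuple:
    """Repeat implement -> review -> revise until the review finds no gaps.

    Returns (spec, converged), where converged is False if the round
    limit was reached with gaps still outstanding.
    """
    for _ in range(max_rounds):
        implementation = implement(spec)
        gaps = review(implementation)
        if not gaps:
            return spec, True
        spec = revise(spec, gaps)
    return spec, False


def toy_implement(spec: str) -> set:
    # Pretend the agent builds exactly the behaviors the spec names.
    return set(spec.split())


def toy_review(implementation: set) -> list:
    # Compare against upstream and report whatever is missing.
    return sorted(UPSTREAM_BEHAVIORS - implementation)


def toy_revise(spec: str, gaps: list) -> str:
    # Fold the missing behaviors back into the spec.
    return " ".join([spec, *gaps])


final_spec, converged = refine_spec("parse", toy_implement, toy_review, toy_revise)
```

The round limit matters in a sketch like this: without it, a spec that can never reach fidelity would keep an agent looping indefinitely.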

This methodology highlights a critical downstream effect: code becomes increasingly disposable. When agents can resolve merge conflicts and manage the PR lifecycle autonomously, the human attachment to specific code authorship diminishes. The focus shifts to the process and the specification, allowing for rapid iteration and adaptation. The competitive advantage here lies in the ability to delegate the most time-consuming aspects of development, freeing human engineers to tackle the truly novel problems: the "pure white space" and the deepest refactorings that require human ingenuity. The payoff is delayed, because it depends on significant upfront investment in systems and tooling, but that same investment is what makes the advantage hard to copy.

Actionable Takeaways for the Agentic Era

The insights from Lopopolo's experiment offer concrete steps for organizations looking to navigate this evolving landscape. The key is to view these changes not as incremental improvements, but as a fundamental redefinition of the software development lifecycle.

- Embrace Agent-Legible Design: Prioritize building systems and writing code that AI agents can easily understand and manipulate. This means clear, modular structures and well-defined interfaces.

- Optimize for Agent Inner Loops: Relentlessly focus on minimizing the time it takes for AI agents to complete tasks. This requires investing in fast build systems, efficient testing frameworks, and streamlined workflows.

- Shift from Code Review to Process Governance: Move human oversight from line-by-line code review to ensuring the integrity of the systems, observability, and context that guide AI agents.

- Encode Non-Functional Requirements: Systematically capture engineering taste, reliability standards, and architectural principles, and encode them into agent prompts, documentation, tests, and review agents. This is where durable competitive advantage is built.

- Invest in Observability and Feedback Loops: Ensure agents have comprehensive visibility into their own execution and the system's behavior. Crucially, feed this data back to refine agent capabilities and the overall development process.

- Experiment with "Ghost Libraries" and Spec-Driven Development: Explore distributing software as high-fidelity specifications that agents can implement, fostering greater adaptability and reducing reliance on human-authored code.

- Prepare for Human Attention as the Scarce Resource: Anticipate a future where human engineers focus on higher-level problem-solving, strategic direction, and novel challenges, while agents handle the bulk of implementation and iteration. This requires a significant cultural and organizational shift.