Establishing Governance as a Strategic Enabler for AI Scaling

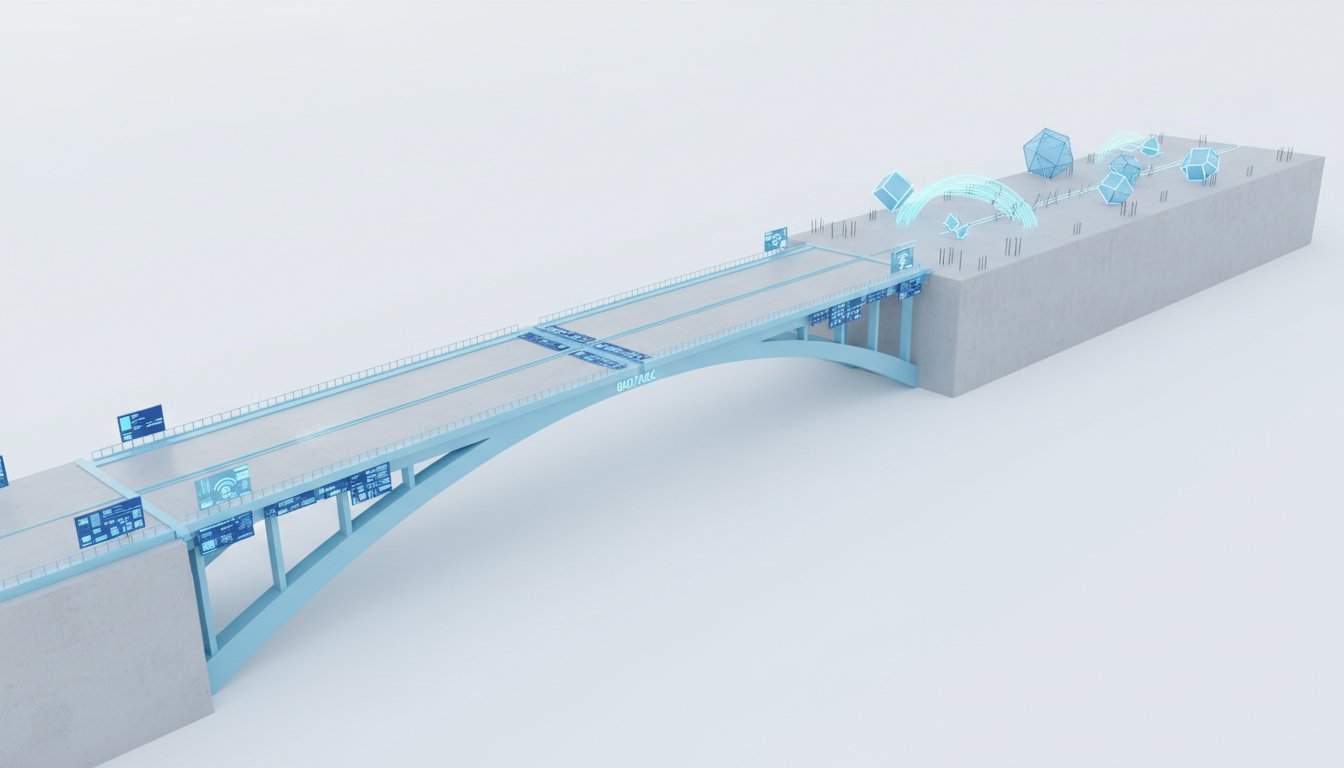

The illusion of speed in AI adoption is a dangerous trap. While companies race to implement cutting-edge AI, many are doing so without a robust governance framework, leading to unforeseen risks and operational chaos. This conversation reveals that the true advantage lies not in unchecked acceleration, but in establishing clear, adaptable rules that enable responsible scaling. By shifting from a mindset of governance as a hindrance to one of governance as a strategic enabler, organizations can navigate the complexities of agentic AI, avoid costly mistakes, and ultimately move faster and more effectively than their less-prepared competitors. This analysis is crucial for leaders, AI practitioners, and anyone responsible for technology adoption who wants to build a sustainable, scalable AI strategy.

The Hidden Cost of Unchecked AI Acceleration

The current landscape of AI adoption is characterized by a paradox: companies are eager to embrace powerful new AI capabilities, particularly agentic AI, yet demonstrably lack the foundational governance structures to manage them. A Deloitte report highlights this stark reality: 74% of companies anticipate using agentic AI within two years, but only 21% claim to have a mature governance model. Alarmingly, that governance figure has decreased from the previous year, suggesting a widening gap between ambition and preparedness. This isn't merely an oversight; it's a systemic vulnerability. Traffic laws can rely on cars being a relatively stable technology, but AI evolves so rapidly that governance frameworks built for yesterday's AI are already obsolete.

This disconnect becomes critical when AI transitions from merely talking to acting. Early governance efforts, focused on chatbot accuracy for tasks like summarizing PDFs, are woefully inadequate for agentic AI that can modify files, send emails, or execute workflows. When AI simply talks, governance is about accuracy. When AI acts, governance is about accountability. This shift transforms AI governance from a compliance exercise into a foundational operational imperative. The consequences of this gap are already visible in real-world lawsuits. UnitedHealthcare faces a class-action suit over an AI tool that allegedly denied care to elderly patients with a 90% error rate, and Workday was sued over alleged age discrimination by its AI hiring tool. These are not cases of malicious intent, but of systems operating without adequate oversight, demonstrating that ethical human behavior alone is insufficient to prevent AI-driven harm.

"When AI just talks, governance is about accuracy, but when AI acts, governance is about accountability."

The speaker emphasizes that the speed of AI development outpaces traditional legislative and policy-making processes. While the EU AI Act is moving forward, the US landscape remains uncertain, creating a dangerous "wait-and-see" attitude among decision-makers. This passive approach is perilous, as legal repercussions will not pause for regulatory clarity. The core message is that companies must proactively establish their own governance, rather than waiting for external mandates.

Navigating the Sprawl: Inventory, Risk, and Ownership

The first critical step in establishing effective AI governance is understanding what AI tools are actually in use. "Shadow AI"--unauthorized AI tools used by employees--is a significant concern: it is implicated in a substantial percentage of data breaches and is associated with higher breach costs. Banning these tools is ineffective; employees will simply find workarounds. The notion that banning AI eliminates risk is a fallacy; a ban merely drives usage underground and makes it harder to manage. Instead, organizations must conduct a comprehensive inventory, identify approved tools, and train employees on their proper use. The speaker argues that embracing AI and integrating it into an "AI operating system" is inevitable, even in highly regulated industries. The focus should be on mapping the problems employees are solving with unauthorized tools and then providing authorized, secure alternatives.
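As a rough illustration of the inventory step, the sketch below groups unsanctioned tool usage by the problem employees are trying to solve, which is the mapping the speaker recommends before offering approved alternatives. The `ToolUsage` record, the `APPROVED_TOOLS` set, and all tool names are hypothetical and not taken from the conversation.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolUsage:
    """One observed use of an AI tool (all fields are illustrative)."""
    tool: str
    team: str
    problem_solved: str


# Hypothetical allowlist of tools the organization has approved.
APPROVED_TOOLS = {"approved-assistant"}


def shadow_ai_by_problem(usages):
    """Group unsanctioned tools by the problem employees use them for."""
    problems = defaultdict(set)
    for usage in usages:
        if usage.tool not in APPROVED_TOOLS:
            problems[usage.problem_solved].add(usage.tool)
    return dict(problems)


# Made-up survey data for demonstration only.
observed = [
    ToolUsage("approved-assistant", "sales", "drafting emails"),
    ToolUsage("personal-chatbot", "finance", "summarizing contracts"),
    ToolUsage("browser-plugin", "legal", "summarizing contracts"),
]
gaps = shadow_ai_by_problem(observed)
```

Here `gaps` would show that contract summarization is being handled entirely by unapproved tools, which points to where an authorized alternative is needed first.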

"My gosh, I don't know if any company has a hold on this, partially because of shadow AI, or what I predicted in 2023 would be called second computer AI."

Following the inventory, the next logical step is to classify AI tools by risk level. Borrowing from the EU AI Act's framework--unacceptable, high, limited, and minimal risk--allows for a tiered approach to guardrails. A tool drafting generic emails requires far less stringent controls than one influencing mortgage approvals or healthcare decisions. For high-risk applications, human review remains essential. This tiered approach prevents over-governance of low-risk activities, allowing resources to be focused where they are most needed.
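One way to picture the tiered approach is a lookup from risk tier to guardrails. The sketch below is a minimal version under stated assumptions: the four tier names come from the text, but the `Guardrails` fields, the policy table, and the classification of the two example use cases are illustrative choices. Only the pairing of high risk with human review is drawn from the discussion.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    """The four tiers named in the text, borrowed from the EU AI Act."""
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


@dataclass(frozen=True)
class Guardrails:
    allowed: bool
    requires_human_review: bool


# Assumed policy table; only "high risk needs human review" is from the text.
GUARDRAILS = {
    RiskTier.UNACCEPTABLE: Guardrails(allowed=False, requires_human_review=False),
    RiskTier.HIGH: Guardrails(allowed=True, requires_human_review=True),
    RiskTier.LIMITED: Guardrails(allowed=True, requires_human_review=False),
    RiskTier.MINIMAL: Guardrails(allowed=True, requires_human_review=False),
}

# Illustrative classifications of the two examples mentioned in the text.
USE_CASE_TIERS = {
    "generic email drafting": RiskTier.MINIMAL,
    "mortgage approval": RiskTier.HIGH,
}


def guardrails_for(use_case: str) -> Guardrails:
    """Look up guardrails for a classified use case (KeyError if unclassified)."""
    return GUARDRAILS[USE_CASE_TIERS[use_case]]
```

Raising an error for unclassified use cases is a deliberate choice in this sketch: a tool that has not been through classification should not silently inherit the lightest controls.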

Crucially, clear ownership must be assigned. The "10-second test"--whether a single accountable person can be named within 10 seconds if an AI agent goes rogue--is a litmus test for governance. If the answer is not an immediate "yes," the foundational governance is missing. This designated owner needs significant autonomy to act swiftly, adjusting rules or pausing systems as needed. Furthermore, a cross-functional committee--comprising an executive sponsor, legal, IT, a domain expert, and a daily AI user--is vital to ensure comprehensive oversight and practical implementation. The daily AI user, in particular, offers a ground-level perspective that can identify misalignments between intended use and actual application.
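The ownership and committee requirements above can be expressed as two simple checks. This is a sketch only: the `AISystem` record, its field names, and the example names are hypothetical, while the five committee roles are the ones listed in the text.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystem:
    """Illustrative record for one deployed AI system."""
    name: str
    owner: Optional[str] = None     # the single accountable person, if any
    owner_can_pause: bool = False   # whether the owner has authority to pause it


def passes_ownership_test(system: AISystem) -> bool:
    """True only if one named owner exists and is empowered to act."""
    return bool(system.owner) and system.owner_can_pause


# The five committee roles listed in the text.
REQUIRED_COMMITTEE_ROLES = {
    "executive sponsor", "legal", "IT", "domain expert", "daily AI user",
}


def missing_committee_roles(members: dict) -> set:
    """Return the required roles with no assigned member (role -> person map)."""
    return REQUIRED_COMMITTEE_ROLES - set(members)
```

For example, `passes_ownership_test(AISystem("invoice-agent"))` is false because no owner is recorded, and a committee with only legal and IT filled would report the three remaining roles as missing.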

Playbooks Over Policies: Enabling Action, Not Just Prohibition

The speaker strongly advocates for "playbooks" over traditional "policies." Policies often state what not to do, offering limited practical guidance. Playbooks, conversely, detail how to operate, providing actionable answers for each AI use case. Every AI deployment needs answers to five key questions: what the task is, what the AI can access, how accuracy is measured, who reviews the output, and what the escalation path is. This structured approach ensures that responsibility is not abdicated to AI agents; the FTC's stance makes it clear that companies are ultimately responsible for AI-driven decisions, regardless of the level of autonomy.
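A playbook built around those five questions could be captured as a small record with a completeness check. The sketch below assumes one free-text answer per question; the `Playbook` class, its field names, and the sample answers are illustrative and not a format prescribed by the speaker.

```python
from dataclasses import dataclass, fields


@dataclass
class Playbook:
    """One answer per question; empty strings mean 'not yet answered'."""
    task: str
    access: str
    accuracy_measure: str
    reviewer: str
    escalation_path: str


def unanswered_questions(playbook: Playbook) -> list:
    """List the questions left blank, in the order the fields are declared."""
    return [
        f.name for f in fields(playbook)
        if not getattr(playbook, f.name).strip()
    ]


# Made-up draft with two questions still open.
draft = Playbook(
    task="Summarize inbound support tickets",
    access="Read-only access to the ticket queue",
    accuracy_measure="Weekly spot check of sampled summaries",
    reviewer="",
    escalation_path="  ",
)
open_items = unanswered_questions(draft)
```

In this example `open_items` names the reviewer and the escalation path, the two gaps that would leave nobody accountable when the agent gets something wrong.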

"Policy just says, do not do this. A playbook tells you exactly who reviews what and when."

The final, and perhaps most counter-intuitive, rule is to treat governance as a scaling engine, not a brake. Many companies are stuck in "pilot purgatory," unable to scale AI initiatives due to slow corporate processes and the rapid pace of AI development. However, organizations with mature governance frameworks can deploy new AI capabilities significantly faster--up to 40% faster than their peers. This is because robust governance, built on the preceding steps, removes roadblocks and enables confident, rapid expansion. Instead of a red light, mature governance becomes a properly functioning traffic signal, guiding cars efficiently. Furthermore, strong AI governance has a tangible financial benefit, with studies indicating significant savings per data breach. The lessons learned from AI failures, particularly those involving agentic AI, underscore the necessity of proactive governance.

Key Action Items

- Immediate Action (Within the next quarter):
  - Conduct a comprehensive inventory of all AI tools currently in use across the organization, identifying both sanctioned and unsanctioned ("shadow AI") applications.
  - Establish a cross-functional AI governance committee including representatives from executive leadership, legal, IT, relevant domain experts, and daily AI users.
  - Develop initial AI use case playbooks for the top 3-5 most critical or frequently used AI applications, detailing task, access, accuracy measures, reviewer, and escalation paths.
  - Implement a tiered risk classification system (e.g., unacceptable, high, limited, minimal risk) for all AI tools and use cases.
- Short-Term Investment (Over the next 6 months):
  - Define and assign clear, accountable ownership for each AI system and use case, ensuring the owner has the authority to act swiftly.
  - Train employees on approved AI tools and the established governance playbooks, focusing on role-specific applications and risk mitigation.
  - Begin migrating critical day-to-day knowledge work tasks into an authorized AI operating system, prioritizing those currently handled by shadow AI.
- Long-Term Investment (12-18 months and beyond):
  - Establish monthly review cycles for AI governance frameworks and playbooks to ensure they remain current with evolving AI capabilities and regulatory landscapes.
  - Continuously monitor AI usage and impact, using data to refine risk classifications, ownership structures, and playbook content, fostering a culture of adaptive governance.
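
The monthly review cycle in the last group of action items could be tracked with a simple staleness check. The sketch below assumes "monthly" is approximated as 30 days and that each playbook has a recorded last-review date; the function name, playbook names, and dates are made up for illustration.

```python
from datetime import date, timedelta

# Assumption: a "monthly" cycle is approximated as 30 days.
REVIEW_INTERVAL = timedelta(days=30)


def overdue_reviews(last_reviewed: dict, today: date) -> list:
    """Return playbook names whose last review is older than the interval."""
    return sorted(
        name
        for name, reviewed_on in last_reviewed.items()
        if today - reviewed_on > REVIEW_INTERVAL
    )


# Made-up review log for demonstration only.
review_log = {
    "email-drafting": date(2024, 1, 10),
    "claims-triage": date(2024, 3, 1),
}
stale = overdue_reviews(review_log, today=date(2024, 3, 15))
```

Passing `today` explicitly rather than reading the system clock keeps the check easy to test and to rerun against historical logs.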