Claude's Computer Use: Sophisticated Party Trick, Not Workforce Multiplier

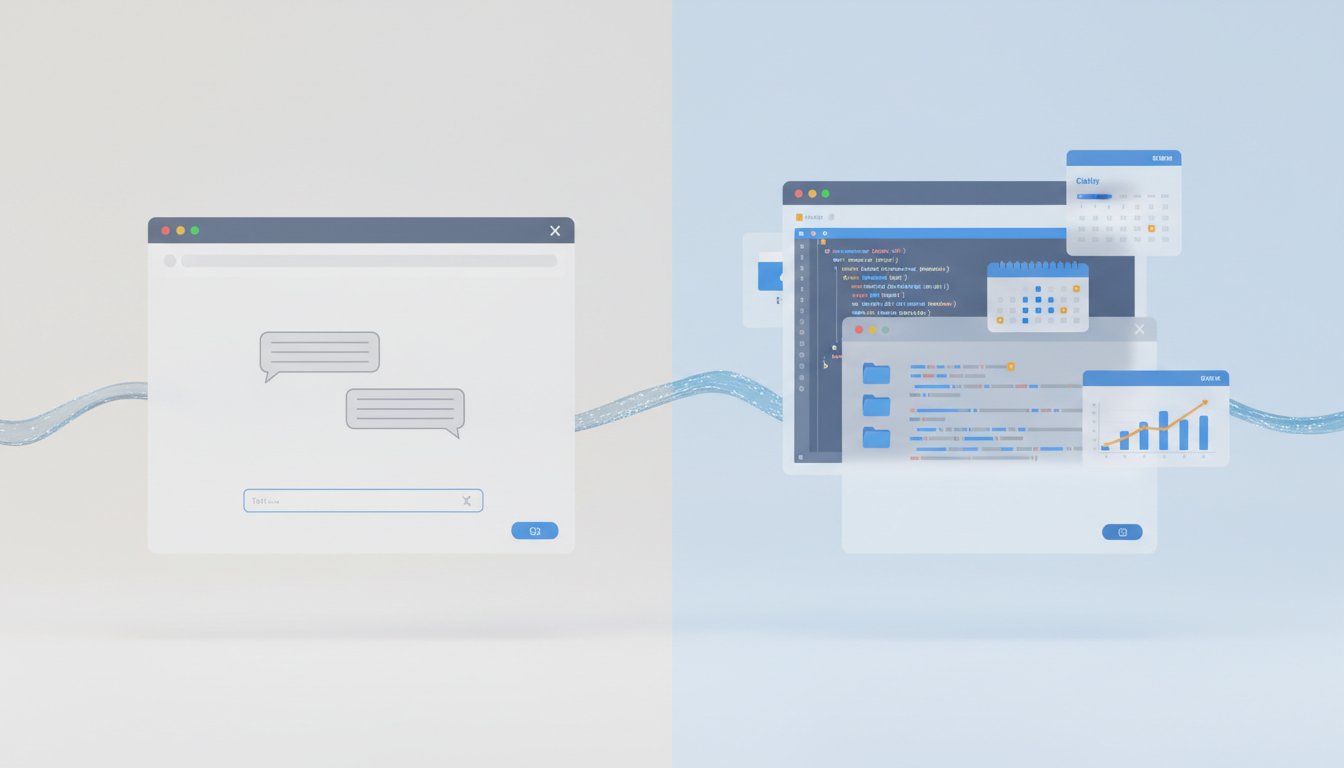

The viral attention around Anthropic's Claude "Computer Use" feature offers a tantalizing glimpse into the future of AI agents, but the current iteration is more of a sophisticated party trick than a ready-to-deploy workforce multiplier. Letting an AI interact directly with a computer's interface (opening apps, clicking buttons, and navigating files) is a significant technical leap. Its immediate practical utility, however, is hampered by slowness, finickiness, and one fundamental limitation: it hijacks the screen, so you cannot work alongside it. The conversation summarized here suggests that the real value lies not in today's execution but in the underlying architecture, which points to AI agents working inside existing software before dedicated APIs become widespread. Professionals in tech, product management, and AI development should pay close attention, because understanding this nascent interface now offers a strategic advantage in anticipating the next wave of human-AI collaboration.

The Illusion of Control: Why Claude's Computer Use is a Glimpse, Not the Destination

The recent explosion of interest around Anthropic's Claude "Computer Use" feature is understandable. An AI that mimics human interaction with a computer by opening applications, clicking buttons, and navigating interfaces feels like a profound step toward true AI agents. A closer look, as this conversation shows, reveals that while the technology is impressive, its current implementation is riddled with limitations that relegate it to "party trick" status for now. The real insight lies not in what it can do today, but in what its existence signifies for how we will interact with software.

The core problem with Claude's Computer Use, as the speaker emphasizes, is that it hijacks the screen. Agentic browsers can operate in a separate window or on a different monitor, but Claude takes direct control of the mouse and keyboard, which largely sidelines you, the human user. This creates a fundamental conflict: the AI needs full control of your interface, so you cannot perform your own tasks concurrently. This isn't a collaborative environment; it's a handover of control.

"This might not be an AI agent that sits down with you and works alongside with you like you might want it to be."
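A toy model makes the conflict concrete: treat the mouse and keyboard as a single resource guarded by a lock, so whoever holds it drives the screen and anyone else is turned away. This is a hypothetical sketch, not how Claude or macOS actually arbitrates input; the `InputDevices` class and its methods are invented for illustration.

```python
import threading
from typing import Optional


class InputDevices:
    """Hypothetical model of one mouse/keyboard pair shared by human and agent."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self.owner: Optional[str] = None

    def take_control(self, who: str) -> bool:
        # Non-blocking acquire: fail at once if someone else is driving.
        if self._lock.acquire(blocking=False):
            self.owner = who
            return True
        return False

    def release(self, who: str) -> None:
        if self.owner != who:
            raise RuntimeError(f"{who} does not hold the input devices")
        self.owner = None
        self._lock.release()


devices = InputDevices()
agent_ok = devices.take_control("agent")  # a Computer Use run begins
human_ok = devices.take_control("human")  # the user tries to keep working
print(agent_ok, human_ok)                 # True False
devices.release("agent")                  # run finishes, control is returned
print(devices.take_control("human"))      # True
```

An agentic browser working in its own window avoids this contention by giving the agent a separate surface rather than the shared one.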

This limitation is critical because, as the speaker notes, the "overwhelming majority of apps or where we work" are inside existing software like Salesforce, Slack, or Microsoft Word. While Claude's Computer Use can theoretically interact with these, it does so inefficiently. For applications with existing APIs or direct Claude integrations, using the Computer Use feature is "overkill" and "token-inefficient," consuming resources without offering a significant advantage over direct integrations. The AI's reliance on visual cues--taking screenshots and interpreting them--inherently makes it slower and more prone to errors than a direct programmatic interaction.
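To see why the screenshot-driven loop is token-hungry, here is a back-of-the-envelope estimate. It assumes Anthropic's published rule of thumb for images that are not resized (tokens ≈ width × height / 750) and one screenshot per action step; the helper names and the 12-step, 1024×768 task are hypothetical.

```python
import math


def screenshot_tokens(width_px: int, height_px: int) -> int:
    """Approximate image tokens for one screenshot (width * height / 750)."""
    return math.ceil(width_px * height_px / 750)


def computer_use_image_tokens(steps: int, width_px: int, height_px: int) -> int:
    # Assumption: one fresh screenshot per action step. Real runs may take
    # more (verification shots) or fewer, and they also spend text tokens.
    return steps * screenshot_tokens(width_px, height_px)


# Hypothetical task: 12 GUI steps on a 1024x768 display.
print(screenshot_tokens(1024, 768))              # 1049
print(computer_use_image_tokens(12, 1024, 768))  # 12588
```

In a multi-turn agent loop, earlier screenshots are typically resent with each request unless they are pruned, so the real input total can grow faster than this linear estimate.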

The speaker points out that even when instructed to use a specific agentic browser, such as Perplexity's Comet or OpenAI's ChatGPT Atlas, Claude may fall back on its Chrome extension instead, bypassing the intended Computer Use functionality. This suggests that the system favors existing, more direct integrations over cumbersome visual interface control, even when the user explicitly requests a different route.

"So, even when I tell it, 'Hey, go open ChatGPT Atlas and do this task,' it's like, it'll open ChatGPT Atlas, but then it's going to decide to do the task elsewhere and not use computer use really at all."
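The behavior the speaker describes can be modeled as a router that always prefers the most direct pathway available, overriding the user's stated choice unless told otherwise. This is a hypothetical sketch of the observed behavior, not Anthropic's routing logic; the pathway names and their ranking are assumptions.

```python
from typing import Optional

# Hypothetical ranking: lower number means a more direct pathway.
PATHWAY_RANK = {
    "api_integration": 0,
    "chrome_extension": 1,
    "computer_use": 2,
}


def choose_pathway(available: set[str],
                   requested: Optional[str] = None,
                   honor_request: bool = False) -> str:
    """Return the pathway a preference-driven router would use."""
    if not available:
        raise ValueError("no pathway available")
    if honor_request and requested in available:
        return requested
    # Otherwise fall back to the most direct pathway on offer.
    return min(available, key=PATHWAY_RANK.__getitem__)


options = {"chrome_extension", "computer_use"}
print(choose_pathway(options, requested="computer_use"))                      # chrome_extension
print(choose_pathway(options, requested="computer_use", honor_request=True))  # computer_use
```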

The setup process itself, which requires extensive Accessibility and Screen Recording permissions, underscores the significant security implications. Anthropic warns about potential misuse and about actions that cannot be undone, but granting an AI full control over a computer demands extreme caution regardless. This is not a feature to deploy lightly, especially in enterprise environments; it requires careful sandboxing and attention to sensitive data. Permission prompts may also reappear for each new run, which hinders scheduled, proactive automation.
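The scheduling problem can be framed as a preflight check: a job is safe to run unattended only if every permission the setup requires (Accessibility and Screen Recording, per the setup described here) is granted and will not prompt again. This sketch does not query real macOS privacy settings; `PermissionState`, `can_run_unattended`, and the choice of which permission re-prompts are hypothetical.

```python
from dataclasses import dataclass, field

# Permissions the Computer Use setup asks for, as described in this article.
REQUIRED = frozenset({"accessibility", "screen_recording"})


@dataclass
class PermissionState:
    # Hypothetical bookkeeping; a real check would query the OS, which
    # this sketch does not attempt.
    granted: set[str] = field(default_factory=set)
    prompts_each_run: set[str] = field(default_factory=set)


def can_run_unattended(state: PermissionState) -> tuple[bool, list[str]]:
    """Return (ok, reasons) for scheduling a run with nobody at the keyboard."""
    reasons = []
    for perm in sorted(REQUIRED):
        if perm not in state.granted:
            reasons.append(f"{perm}: not granted")
        elif perm in state.prompts_each_run:
            reasons.append(f"{perm}: re-prompts every run")
    return (not reasons, reasons)


state = PermissionState(
    granted={"accessibility", "screen_recording"},
    prompts_each_run={"screen_recording"},  # hypothetical re-prompting permission
)
print(can_run_unattended(state))
# (False, ['screen_recording: re-prompts every run'])
```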

The Dispatch Integration: A Strategic Pivot

The true potential, or at least a more immediately useful application, emerges when Computer Use is paired with Anthropic's Dispatch feature. Dispatch allows mobile interaction with the Claude Mac app, enabling users to send files or initiate tasks remotely. This combination addresses one of the key limitations: the inability to actively use your computer while the AI is in control. If you're away from your machine, using Dispatch to direct Computer Use for a specific task becomes a viable workflow.
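The away-from-the-desk workflow can be sketched as a queue that accepts tasks remotely but only drains them once the machine has been idle for a while, so a Computer Use session never collides with a person at the keyboard. This is not how Dispatch is implemented; the class, the idle threshold, and the example task are hypothetical.

```python
from collections import deque
from typing import Callable


class RemoteTaskQueue:
    """Hypothetical phone-to-desktop hand-off that waits for an idle machine."""

    def __init__(self, idle_threshold_s: float = 300.0) -> None:
        self.idle_threshold_s = idle_threshold_s
        self.pending: deque = deque()

    def submit(self, task: str) -> None:
        # Called when a task arrives from the phone.
        self.pending.append(task)

    def drain(self, seconds_since_last_input: float,
              run: Callable[[str], None]) -> int:
        """Run queued tasks if the user appears to be away; return count run."""
        if seconds_since_last_input < self.idle_threshold_s:
            return 0  # someone is at the keyboard; don't take over the screen
        count = 0
        while self.pending:
            run(self.pending.popleft())
            count += 1
        return count


done = []
q = RemoteTaskQueue(idle_threshold_s=300)
q.submit("find Q3 deck and send it to me")
print(q.drain(seconds_since_last_input=20, run=done.append))   # 0
print(q.drain(seconds_since_last_input=900, run=done.append))  # 1
print(done)  # ['find Q3 deck and send it to me']
```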

However, even here, the underlying slowness and potential for errors persist. The speaker's live demo, while successfully demonstrating Claude opening an application and interacting with its UI, also highlighted the need for re-authorization and the inherent visual nature of the process. The faint orange highlight indicating Claude's control serves as a constant reminder of its presence and the user's temporary displacement.

The critical insight here is that this visual, mouse-and-keyboard-driven interaction is a temporary bridge. As the internet and software applications adopt standardized agentic protocols (such as A2A, MCP, and ACPs), the need for this "AI duct tape" will diminish. Until then, for tasks that cannot easily be integrated via APIs, this layer of agentic computer use is likely to serve as the primary interface for AI automation.
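The gap between duct tape and a protocol shows up in the shape of the requests. Under MCP, invoking a tool is a single JSON-RPC 2.0 request with method `tools/call` and `name`/`arguments` parameters; the screen-driven route expresses the same intent as a chain of low-level actions. The tool name, its arguments, and the GUI action list below are hypothetical, and the action dictionaries are illustrative rather than Anthropic's computer-use tool schema.

```python
import json

# One structured call under MCP (JSON-RPC 2.0, method "tools/call").
# The tool name and arguments are hypothetical examples.
mcp_request = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {
        "name": "crm_update_record",
        "arguments": {"record_id": "0061234", "stage": "Closed Won"},
    },
}

# The same intent as screen-level steps (hypothetical sequence; labels
# stand in for the screen coordinates a real agent would have to find).
gui_actions = [
    {"action": "screenshot"},
    {"action": "left_click", "target": "search box"},
    {"action": "type", "text": "0061234"},
    {"action": "key", "text": "Return"},
    {"action": "screenshot"},
    {"action": "left_click", "target": "Stage dropdown"},
    {"action": "left_click", "target": "Closed Won"},
    {"action": "left_click", "target": "Save"},
    {"action": "screenshot"},  # verify the change landed
]

print(json.dumps(mcp_request, indent=2))
print(len(gui_actions), "GUI steps vs 1 protocol call")  # 9 GUI steps vs 1 protocol call
```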

The Future Layer: AI as the New Human Duct Tape

The viral nature of this feature, despite its current limitations, points to a significant shift in how we will interact with technology. The speaker posits that this is not just a temporary novelty but a preview of the "next layer of knowledge work." This layer exists precisely because many applications and websites do not yet have robust APIs or direct integrations that allow AI to perform complex tasks.

"So, even though it's buggy, yes, it's a research preview right now, it's a party trick, but I also firmly believe that this is the future of work."

This perspective frames the current iteration as a necessary, albeit imperfect, stepping stone. It acknowledges that while AI agents can automate many tasks, most enterprises still rely on disparate software, local files, and systems spread across different cloud providers. The manual copy-pasting and data transfer between AI systems that many knowledge workers currently perform is precisely the gap that agentic computer use aims to fill.

The long-term advantage, therefore, comes from understanding and experimenting with these nascent tools now. While the immediate payoff is minimal and the process is fraught with errors, investing time in learning how these systems operate, their limitations, and their potential for future integration offers a strategic edge. Companies and individuals who grapple with these tools early will be better positioned to adapt and leverage them as they mature, creating a competitive moat built on early adoption and understanding of the evolving AI interface. The discomfort of dealing with buggy, slow, and permission-heavy AI today is precisely what will yield dividends in the form of operational efficiency and competitive advantage tomorrow.

Key Action Items

Immediate Action (Within 1-2 Weeks):

- Experiment with Claude's Computer Use: For individuals and teams, sign up for a paid Claude plan for a month to test the Computer Use feature on non-critical, personal tasks.

- Audit Existing AI Integrations: Review current workflows to identify applications that already have direct API or Claude integrations, prioritizing their use over the Computer Use feature for efficiency.

- Document Limitations: For any attempted use of Computer Use, meticulously document the specific limitations encountered (e.g., slowness, permission re-prompts, screen hijacking issues) to inform future strategy.
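A lightweight structured log makes the limitation-documenting step easier to act on later. This is a minimal sketch with hypothetical names; the categories mirror the limitations discussed in this article, and the sample entries are invented placeholders rather than real observations.

```python
from collections import Counter
from dataclasses import dataclass

# Categories mirror the limitations discussed in this article.
CATEGORIES = {"slowness", "permission_reprompt", "screen_hijack",
              "wrong_pathway", "ui_error"}


@dataclass(frozen=True)
class LimitationNote:
    task: str
    category: str
    detail: str

    def __post_init__(self) -> None:
        # Reject typos so the summary counts stay meaningful.
        if self.category not in CATEGORIES:
            raise ValueError(f"unknown category: {self.category}")


def summarize(notes: list[LimitationNote]) -> Counter:
    """Count observations per category to see which limits recur most."""
    return Counter(n.category for n in notes)


# Hypothetical placeholder entries, not real measurements.
log = [
    LimitationNote("rename downloads", "slowness", "long pauses between clicks"),
    LimitationNote("scheduled export", "permission_reprompt", "stalled on a prompt"),
    LimitationNote("draft reply", "slowness", "took longer than doing it by hand"),
]
print(summarize(log).most_common(1))  # [('slowness', 2)]
```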

Short-Term Investment (1-3 Months):

- Sandbox Enterprise Use: For decision-makers, conduct controlled sandboxing of Computer Use on a dedicated, isolated machine to assess security risks and potential benefits for specific, low-risk workflows.

- Monitor Competitor AI Integrations: Actively track developments from Microsoft, OpenAI, and Google regarding their own agentic computer interaction capabilities and integrations.

- Explore Dispatch Workflows: Identify specific remote tasks (e.g., file retrieval, simple data entry) that could be effectively managed using the Dispatch feature in conjunction with Computer Use, particularly for users who are often away from their primary workstation.

Long-Term Investment (6-18 Months):

- Develop Internal Automation Playbooks: Based on early experimentation, begin to formulate internal guidelines and best practices for leveraging AI agentic capabilities, anticipating future, more robust versions of Computer Use.

- Advocate for Agentic Protocols: For product managers and developers, consider how existing or future products can better support agentic protocols (A2A, MCP, ACPs) to reduce reliance on screen-scraping methods.

- Invest in AI Literacy Training: Plan and begin implementing training programs for employees to build foundational understanding and practical skills in interacting with AI agents, preparing them for a future where this becomes a standard interface.