Data Modeling Solves AI Hallucinations and Accelerates Delivery

The central implication of this conversation is that the acceleration AI and modern tooling appear to bring to data initiatives is often an illusion, one that masks a compounding deficit in fundamental meaning and structure. The speakers argue that while tools can build pipelines and even generate models, they cannot give data clarity or establish a shared understanding of its purpose. Without that semantic backbone, delivery does not just slow down; ambiguity is amplified, leading to unreliable analytics, flawed AI outputs, and an erosion of trust in data itself. Data leaders, engineers, and architects who recognize this hidden cost can gain an advantage by prioritizing enterprise data modeling, moving their organizations from reactive data wrangling to deliberate design of reliable information. This is relevant reading for anyone leading a data team, scaling analytics capabilities, or grappling with the growing complexity and demands of AI-driven insights.

The Unseen Cost of Speed: How "Semantic Drift" Undermines Data Initiatives

In the relentless pursuit of faster insights and more agile data delivery, many organizations inadvertently accelerate their own downfall. The prevailing narrative often champions new tools and techniques as shortcuts to success, bypassing the foundational work of defining what data truly means. Jamie Knowles and Ryan Hirsch, in their discussion on ER/Studio, meticulously dissect this fallacy, revealing how the absence of a robust enterprise data model creates a cascade of downstream failures that undermine even the most sophisticated data engineering and AI efforts. The core of their argument is that technical challenges in data are frequently a symptom of a more fundamental problem: a lack of shared meaning, or "semantic drift."

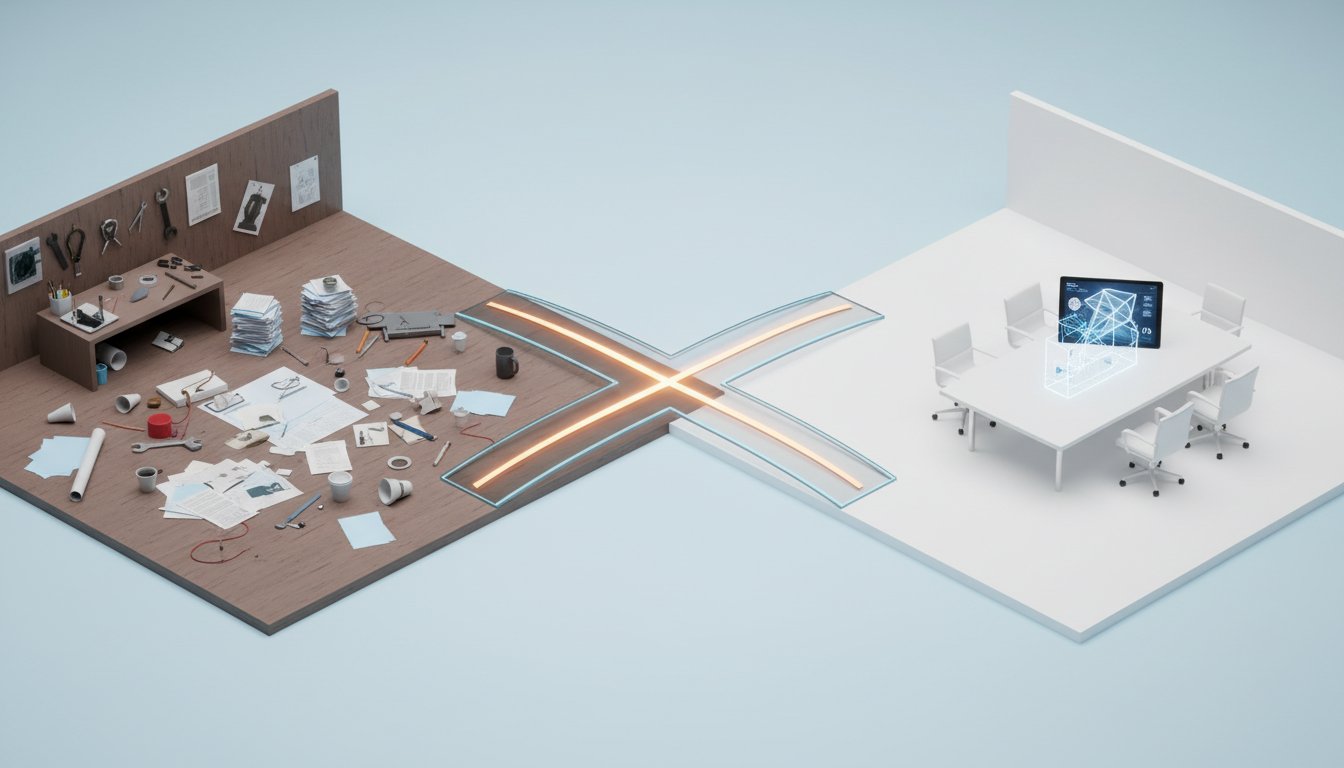

The immediate impulse for many data teams is to build pipelines and deliver dashboards as quickly as possible. This often means skipping the deliberate, albeit slower, process of logical data modeling. Knowles explains that this is where the trouble begins: "engineers forced to do it in SQL under deadline pressure, you get semantic drift, and that's a huge danger." This isn't a failure of individual effort; it's a systemic consequence of prioritizing execution over definition. The architect's role, as Hirsch elaborates, is to translate business intent into coherent designs, a task that requires a different skillset than the engineer's focus on implementation. When this division of labor breaks down, and engineers are left to define critical business meanings on the fly, the stage is set for ambiguity to proliferate.
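
To make the idea of semantic drift concrete, here is a minimal sketch in Python. It is not taken from the conversation: the order records, the two team functions, and both definitions of "active customer" are hypothetical, chosen only to show how two reasonable on-the-fly definitions can diverge.

```python
from datetime import date

# Hypothetical order records; all names and values are illustrative only.
orders = [
    {"customer_id": 1, "order_date": date(2024, 1, 10), "status": "paid"},
    {"customer_id": 2, "order_date": date(2024, 3, 5), "status": "cancelled"},
    {"customer_id": 3, "order_date": date(2023, 6, 1), "status": "paid"},
]


def active_customers_team_a(rows, today):
    """Team A's assumption: 'active' means any order in the last 90 days."""
    return {r["customer_id"] for r in rows if (today - r["order_date"]).days <= 90}


def active_customers_team_b(rows, today):
    """Team B's assumption: 'active' means at least one paid order, ever."""
    return {r["customer_id"] for r in rows if r["status"] == "paid"}


today = date(2024, 3, 31)
team_a = active_customers_team_a(orders, today)  # {1, 2}
team_b = active_customers_team_b(orders, today)  # {1, 3}

# Customers that the two definitions disagree about.
disputed = team_a ^ team_b  # {2, 3}
print(sorted(team_a), sorted(team_b), sorted(disputed))
```

Both teams report two active customers, so a dashboard total would not reveal the disagreement, yet the definitions agree on only one of the three customers. A shared, documented definition is what removes this kind of silent divergence.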

This ambiguity, they argue, is particularly dangerous in the age of AI. The common misconception is that AI will somehow magically resolve unclear definitions. Instead, the reality is far more perilous. "AI does not fix semantic drift, it amplifies it," Knowles states emphatically. Unlike humans, who can often infer context or ask clarifying questions, AI systems lack this intuitive understanding. When fed ambiguous data, AI will "confidently kind of spit out incorrect results, which we all know is AI hallucinations." This means that instead of unlocking new value, AI initiatives can become engines of misinformation, amplifying existing inconsistencies at machine speed. The consequence is not just flawed insights, but a complete breakdown in trust, a situation that is incredibly difficult and costly to recover from.

"AI does not fix semantic drift, it amplifies it. As I said earlier, humans can compensate for the, for fuzzy definitions, AI cannot. So if you connect an AI to ambiguous data, it's going to scale that ambiguity at sort of super high machine speed."

-- Jamie Knowles

The solution, according to Knowles and Hirsch, lies in establishing a clear, technology-independent logical data model, which they term a "knowledge model." This model serves as the single source of truth for business definitions, entities, attributes, and their relationships. It acts as an intermediary between business intent and technical implementation. With a shared semantic framework in place, organizations can keep definitions consistent across all data layers, from raw sources to curated analytics and AI models. This deliberate architectural work is slower at first, but its benefits compound: it reduces rework, simplifies governance, and makes engineering efforts more productive and reliable over time. The key takeaway is that the perceived slowness of upfront modeling is an investment that pays back in speed and accuracy later.

The Architecture of Trust: Building a Semantic Backbone for Data Reliability

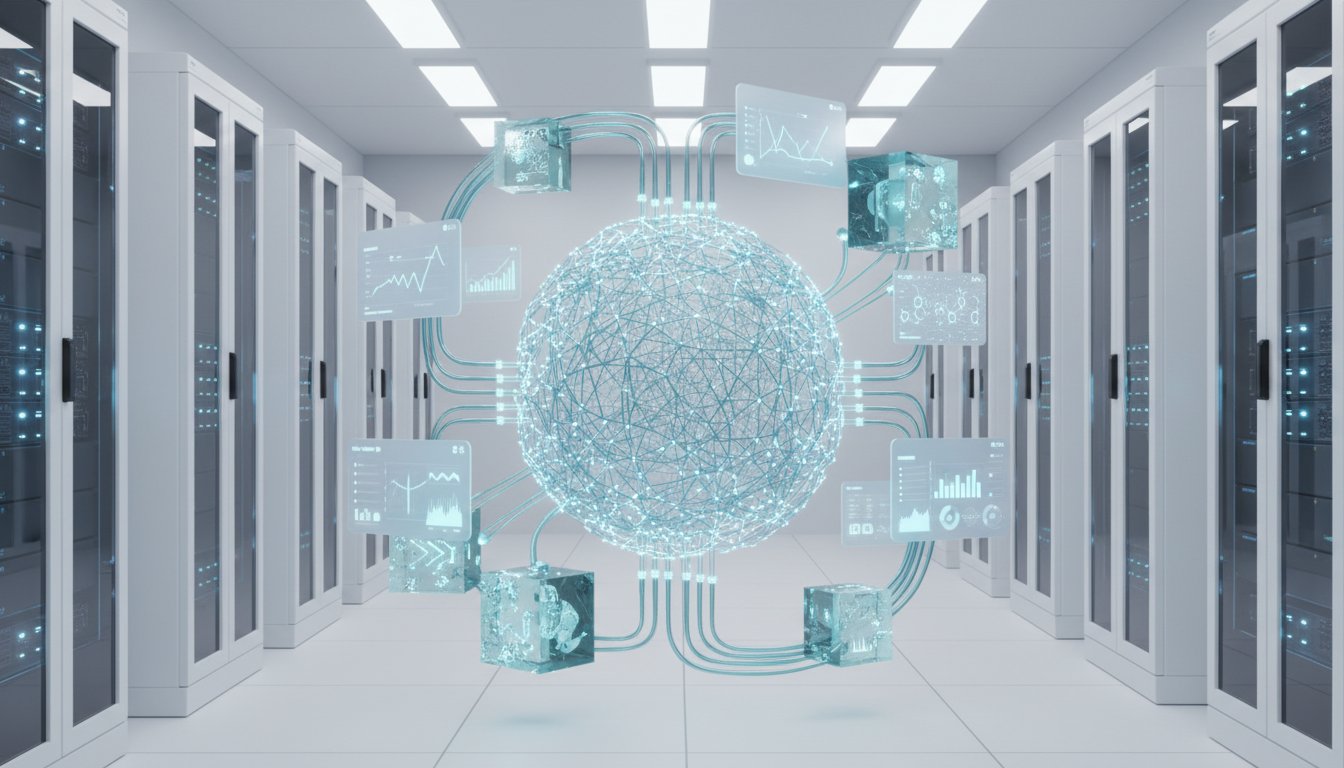

The conversation highlights a critical disconnect: organizations are investing heavily in data infrastructure and advanced analytics, yet often neglect the foundational architecture that underpins data's meaning. This neglect creates a "semantic entropy" that erodes trust and hinders progress. ER/Studio, as described by Knowles and Hirsch, aims to combat this by providing a central repository for enterprise data models, ensuring that the "meaning is explicit" and that the structure is robust enough to support both human and machine consumption.

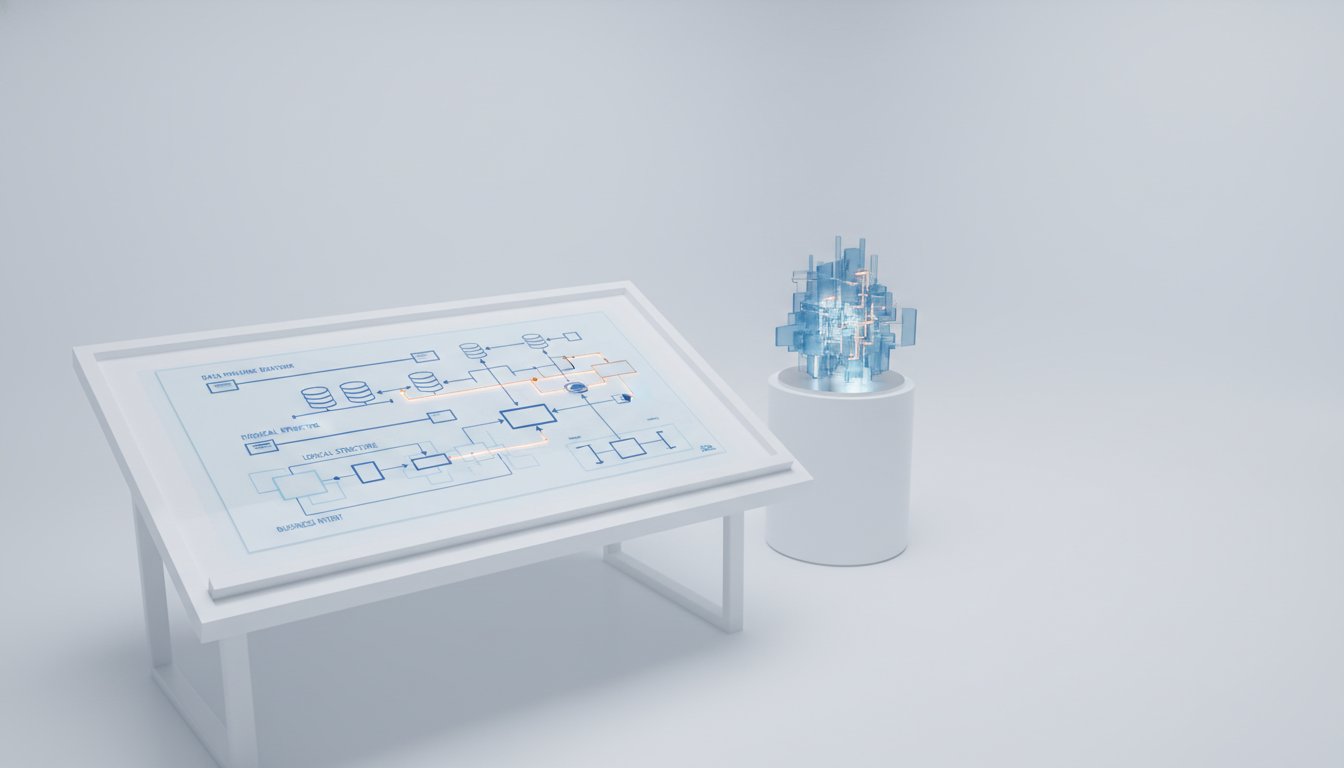

The distinction between logical and physical data models is central to this argument. While physical models dictate how data is stored in specific databases, logical models describe the business itself, independent of technology. Knowles emphasizes this: "A logical data model describes the business. So it's technology independent, nothing to do with the databases and the underlying code." This logical layer acts as the "overarching semantic model for everything." By generating physical models and code from this single, authoritative logical model, ER/Studio enables traceability and consistency across the entire data landscape, from source systems to the gold layer of a data warehouse. This approach directly addresses the problem of "semantic drift," where definitions diverge and meaning erodes as data moves through various pipelines and transformations.
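
The logical-to-physical split can be sketched in a few lines of Python. This is an illustration under stated assumptions, not ER/Studio's API or output: the `Attribute` and `Entity` classes, the `TYPE_MAP` table, the `to_ddl` function, and the sample `Customer` entity are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Attribute:
    name: str
    logical_type: str  # technology-independent type, e.g. "identifier"
    definition: str    # the business meaning, stated once
    required: bool = True


@dataclass(frozen=True)
class Entity:
    name: str
    definition: str
    attributes: tuple


# Hypothetical mapping from logical types to platform-specific column types.
TYPE_MAP = {
    "postgres": {"identifier": "BIGINT", "text": "TEXT", "money": "NUMERIC(18,2)"},
    "sqlserver": {"identifier": "BIGINT", "text": "NVARCHAR(255)", "money": "DECIMAL(18,2)"},
}


def to_ddl(entity, platform):
    """Render one logical entity as a CREATE TABLE statement for a platform."""
    types = TYPE_MAP[platform]
    columns = []
    for attr in entity.attributes:
        nullability = " NOT NULL" if attr.required else ""
        columns.append(f"  {attr.name} {types[attr.logical_type]}{nullability}")
    return f"CREATE TABLE {entity.name.lower()} (\n" + ",\n".join(columns) + "\n);"


customer = Entity(
    name="Customer",
    definition="A party that has placed at least one order.",
    attributes=(
        Attribute("customer_id", "identifier", "Unique identifier of the customer."),
        Attribute("full_name", "text", "Legal name of the customer."),
        Attribute("credit_limit", "money", "Maximum outstanding balance.", required=False),
    ),
)

print(to_ddl(customer, "postgres"))
print(to_ddl(customer, "sqlserver"))
```

The point of the design is that the business definition lives in one place, while each physical rendering is derived from it, so a change to the logical entity can be traced to every generated table.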

The integration of ER/Studio with data governance tools further solidifies this semantic backbone. By linking logical models to business glossaries and assigning policies and classifications, organizations can ensure that data is not only structured correctly but also governed appropriately. This holistic approach is seen as vital for building trust. Hirsch notes that governance tools and modeling tools solve complementary problems: "governance tools like Purview and Collibra, they're excellent with the stewardship, you know, policy, data lineage, and compliance. You know, where we as ER/Studio, we're excellent in designing meaning and structure." When these are integrated, metadata from the models feeds into governance platforms, keeping them aligned as architecture evolves. This prevents the creation of "two versions of that same truth" and avoids vendor lock-in. The ultimate goal is to create an ecosystem where data architects, engineers, and stewards collaborate effectively, all working from a shared understanding of data's meaning. This collaborative framework, supported by tools like ER/Studio, is essential for building reliable data products and ensuring that insights derived from data, whether by humans or AI, are accurate and trustworthy.

The Delayed Payoff: Competitive Advantage Through Upfront Modeling

The conversation consistently circles back to a counter-intuitive truth: the path to faster, more reliable data delivery lies not in cutting corners, but in embracing deliberate, upfront architectural work. Knowles and Hirsch argue that this approach, while potentially slower on day one, creates significant long-term advantages, acting as a "moat" against common data challenges. The hesitation to invest in enterprise data modeling often stems from a fear that it will slow down development. However, their experience shows the opposite is true.

"It will be slower on day one, but it's dramatically faster on day 100," Knowles asserts, encapsulating the core benefit of this strategy. The immediate pain of defining terms like "customer" or "revenue" and mapping their relationships is precisely what prevents the much larger, more disruptive pain of late-stage changes and bug fixes. When data engineers are forced to reverse-engineer business logic from code or make assumptions under deadline pressure, the result is often "semantic drift" and rework. ER/Studio aims to eliminate this by providing a central blueprint that engineers can trust. This blueprint clarifies what data means, how it fits into the broader organizational context, and what rules and policies apply.

"I think those pictures sort of really help and sort of connect everybody together. And ER/Studio does a lot of the heavy lifting of converting those pictures into into more technical layers, the technical blueprints, and then eventually the code."

-- Ryan Hirsch

The figures Hirsch shared illustrate this delayed payoff. According to him, companies using ER/Studio have reported an 85% reduction in compliance reporting time, an 80% reduction in cataloging time, and half as many errors, along with productivity gains of 25% and a fivefold increase in scalability. He attributes these results to establishing a clear, well-defined semantic layer upfront. This investment in architecture allows organizations to "scale by embedding ER/Studio," so that as complexity grows, their ability to manage and use data keeps pace. The insight here is that the hard work of defining meaning and structure early creates a durable competitive advantage: teams move faster and with greater confidence in the long run because they are not spending their time fixing the consequences of ambiguity.

Key Action Items

- Establish a dedicated enterprise data modeling practice: Invest in tools and processes that support the creation and maintenance of logical data models, treating them as core architectural assets, not optional documentation. (Immediate Action)

- Define core business entities and relationships: Prioritize the identification and documented definition of key business concepts (e.g., customer, product, revenue) and their interdependencies, independent of specific technologies. (Over the next quarter)

- Integrate logical models with data governance: Link your logical data models to your business glossary, data catalog, and governance policies to ensure meaning, structure, and compliance are aligned. (Over the next 2-3 quarters)

- Train data architects and engineers on modeling best practices: Foster a shared understanding of the distinct but complementary roles of architecture (design and intent) and engineering (execution), emphasizing the value of upfront modeling. (Ongoing Investment)

- Leverage AI for model generation and understanding: Explore AI-assisted capabilities within modeling tools to expedite the creation of logical models and to help understand existing data structures, while always ensuring human oversight for meaning. (This pays off in 6-12 months)

- Develop a "data product" mindset: Use logical models to define the scope and meaning of your data products, ensuring stakeholders can confirm alignment before development begins. (Over the next 6 months)

- Prioritize semantic clarity for AI initiatives: Before connecting AI to any data source, rigorously validate that the structure and meaning of that data are absolutely clear and well-documented. (Immediate Action for new AI projects)
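
As one way to act on the last item, a team could gate AI access on documented definitions. The following is a minimal sketch in Python, assuming a glossary held as a simple dictionary; the function `undocumented_columns`, the column names, and the glossary entries are hypothetical and do not come from any specific tool.

```python
def undocumented_columns(table_columns, glossary):
    """Return, in sorted order, columns with no non-empty glossary definition."""
    return sorted(c for c in table_columns if not glossary.get(c, "").strip())


# Hypothetical glossary: column name -> agreed business definition.
glossary = {
    "customer_id": "Unique identifier for a party that has placed at least one order.",
    "revenue": "Recognized revenue in USD, net of refunds.",
    "status": "",  # an entry exists, but the definition was never written
}

# Hypothetical columns of a table someone wants to expose to an AI system.
columns = ["customer_id", "revenue", "status", "seg_cd"]

missing = undocumented_columns(columns, glossary)
if missing:
    print("Not ready for AI use; undefined columns:", ", ".join(missing))
else:
    print("All columns have documented definitions.")
```

A check like this only confirms that a definition exists, not that it is correct, so human review of the meaning remains necessary.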