Cloudflare Builds Deep Moat Through Reinforcing Network Effects

Cloudflare's Quiet Dominance: Beyond the Speed and Security Headlines

Cloudflare has quietly become an indispensable layer of the internet, handling over 20% of global web traffic and absorbing millions of cyberattacks per second. This episode, featuring investor Sam Ewen, argues that Cloudflare's true genius lies not just in its scale but in a meticulously constructed reinforcing loop that combines network effects, product-led growth, and a unique approach to infrastructure. The non-obvious implication is that, by embracing complexity and delayed payoffs, Cloudflare has built a moat deep enough to fundamentally alter competitive dynamics. This analysis is useful for anyone seeking to understand the foundational infrastructure of the modern internet, and it helps in identifying businesses that build enduring defensibility through systems-level thinking rather than immediate feature deployment. Investors and operators will gain insight into how to spot companies that intentionally create barriers to entry through strategic reinvestment and a relentless focus on compounding advantages.

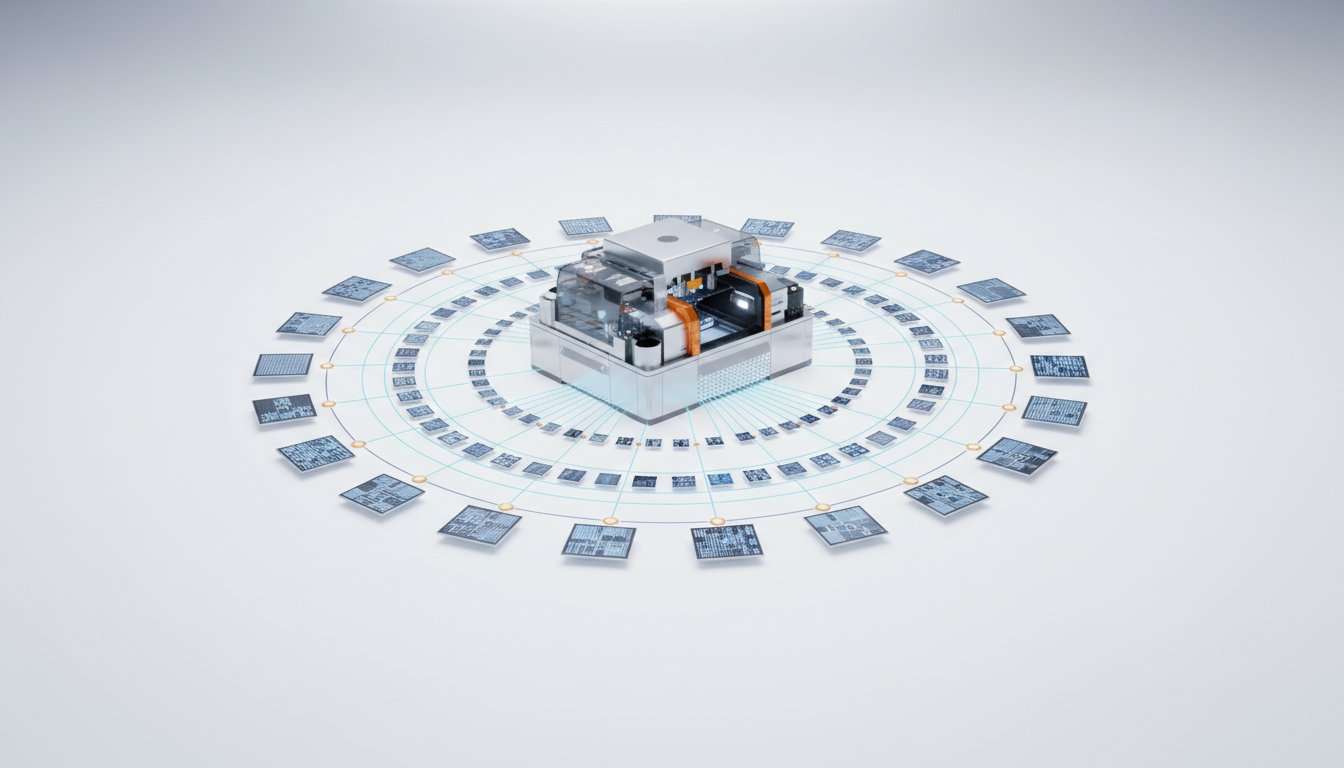

The Reinforcing Loop: How Scale Begets More Scale, and Why It's So Hard to Replicate

Cloudflare's ascent from a startup to a foundational internet infrastructure provider is a masterclass in building a defensible business through a powerful reinforcing loop. The core of this loop, as detailed by Sam Ewen, begins with a product-led growth strategy and a generous freemium model. This approach, particularly for their early web security and performance products, attracted a vast number of users, from small hobbyist websites to nonprofits. This seemingly simple act of offering free services had profound downstream consequences.

First, it allowed Cloudflare to intercept an enormous volume of internet traffic. This scale, in turn, provided invaluable data. By observing millions of requests and attacks, Cloudflare's systems became steadily better at identifying malicious actors, optimizing network speed, and refining their service offerings. This continuous improvement, driven by data from a massive user base, created a superior product.

"This creates a reinforcing loop. So I'll try and help you visualize it. That at the top of the cycle, we talked about their low bandwidth and low hardware costs and their easy-to-use products that enables this product-led growth motion. So they can serve that long tail of customers. What that enables is more traffic goes through their servers, so they collect more signals, they collect more data, and then they get better and better at blocking malicious actors."

This superior product then attracted more paying customers, including large enterprises, further increasing traffic and data collection and thus strengthening the loop. Critically, this scale also gave Cloudflare significant negotiating power with Internet Service Providers (ISPs). By establishing direct peering relationships, Cloudflare could reduce bandwidth costs and improve network performance for both itself and the ISPs, turning a potential cost center into a competitive advantage. This win-win arrangement with ISPs is a crucial, often overlooked element that underpins Cloudflare's cost structure and network efficiency. Competitors, particularly legacy players, were often constrained by business models that prioritized revenue from large enterprises. They left the long tail of the internet underserved and so could not generate the same network effects. Cloudflare's willingness to serve this long tail, even with free offerings, was a strategic choice that built a moat over time, making it very difficult for others to replicate its scale and efficiency.
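The loop described above can be sketched as a toy simulation. This is a minimal illustration, not a model of Cloudflare's actual business: the function names, the saturation constant, the growth coefficient, and the traffic units are all invented for this example. It only shows the qualitative point that a steady inflow of free-tier traffic compounds through better detection quality.

```typescript
// Toy model of the reinforcing loop: traffic -> signals -> quality -> more traffic.
// Every number here is a hypothetical placeholder, not Cloudflare data.

interface LoopState {
  traffic: number; // arbitrary units of requests flowing through the network
  quality: number; // 0..1 proxy for how well threats are detected
}

const SATURATION = 100; // hypothetical: traffic level at which quality reaches 0.5
const PULL = 0.5; // hypothetical: how strongly quality attracts new traffic

function step(state: LoopState, freeTierInflow: number): LoopState {
  // More observed traffic yields more signals; quality rises but saturates below 1.
  const quality = state.traffic / (state.traffic + SATURATION);
  // Better quality attracts more customers, on top of free-tier sign-ups.
  const traffic = state.traffic * (1 + PULL * quality) + freeTierInflow;
  return { traffic, quality };
}

function simulate(initialTraffic: number, freeTierInflow: number, rounds: number): LoopState {
  let state: LoopState = { traffic: initialTraffic, quality: 0 };
  for (let i = 0; i < rounds; i++) {
    state = step(state, freeTierInflow);
  }
  return state;
}

const withFreeTier = simulate(10, 20, 10);
const withoutFreeTier = simulate(10, 0, 10);
console.log(withFreeTier.traffic > withoutFreeTier.traffic); // true
```

The saturating quality term is a deliberate simplification: it keeps the advantage from growing without bound while still letting extra traffic feed back into growth.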

From Web Guardian to Corporate Sentinel: The Internal Cybersecurity Play

Cloudflare's evolution from protecting external websites to securing internal corporate networks is a testament to their ability to leverage existing infrastructure for new markets. The transition from "reverse proxy" (intercepting traffic to a website) to "forward proxy" (inspecting traffic from employees to the internet) was a natural, albeit complex, expansion. This allowed them to tap into the burgeoning "zero trust" security market, where the traditional network perimeter has dissolved due to remote work and cloud adoption.
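The difference between the two proxy directions can be sketched in a few lines. This is a simplified illustration under stated assumptions: the hostnames, the origin address, the blocklist, and both function names are hypothetical, and real proxies do far more (TLS termination, caching, identity checks). The point is only who chooses the destination in each case.

```typescript
// Reverse proxy: the site operator decides where traffic goes.
// Visitors address the public hostname; the proxy maps it to a hidden origin.
// All hostnames and addresses below are made up for illustration.
const originByHost = new Map<string, string>([
  ["shop.example.com", "10.0.0.5:8080"],
]);

function reverseProxyTarget(publicHost: string): string | null {
  // Unknown hostnames have no configured origin, so nothing is forwarded.
  return originByHost.get(publicHost) ?? null;
}

// Forward proxy: the employee decides where traffic goes.
// The proxy sits on the way out and applies company policy to each destination.
const blockedDomains = new Set<string>(["malware.example.net"]);

function forwardProxyDecision(destinationHost: string): "allow" | "block" {
  return blockedDomains.has(destinationHost) ? "block" : "allow";
}

console.log(reverseProxyTarget("shop.example.com")); // "10.0.0.5:8080"
console.log(forwardProxyDecision("malware.example.net")); // "block"
```

Both functions are simple lookups that could run on the same machines, which is the intuition behind reusing one network for both external and internal security.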

The brilliance here lies in using the same commodity hardware and global network for both external and internal security. This means that the investment in their infrastructure yields benefits across multiple product lines, enhancing the return on capital. The challenge, however, is that this market is more competitive. Unlike their foundational web services where they were pioneers, in internal cybersecurity, they are often a second mover, competing with established players like Zscaler.

"The way they did that was building a lot of the software themselves to be able to provide these services at a global scale. They often had to build a lot of their own software. They couldn't rely on AWS, for example. No one else could handle their scale, and they wanted to have really, really strong security."

This highlights a key dynamic: while Cloudflare's network provides a unique advantage in terms of latency and integration, they must execute flawlessly to displace incumbents who have deep-seated trust and established relationships within large enterprises. The "pool of funds" bundling strategy, allowing large customers to draw from a committed budget across any of Cloudflare's offerings, is a direct attempt to overcome this friction and encourage adoption of their newer internal security and developer products. This strategy, by reducing the perceived risk of trying new services, directly addresses the challenge of breaking into established enterprise security budgets.

The Developer Playground and the AI Frontier: Building the Future Infrastructure

Cloudflare's third major product evolution, the developer platform, represents a strategic bet on the future of application development and, crucially, artificial intelligence. By offering serverless functions (Workers), cloud storage, and lightweight databases, they are enabling developers to build sophisticated applications directly on their global network. This is more than just a new revenue stream; it's about embedding Cloudflare into the fabric of how future applications are built.
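For readers unfamiliar with serverless functions, here is a minimal sketch of what such a function might look like. The routing logic, the `handle` name, and the response strings are hypothetical. The commented-out wiring at the bottom follows the module-style `fetch` handler that Cloudflare documents for Workers, and it is left as a comment so the example stays runnable outside that runtime.

```typescript
// Pure routing logic, kept separate from the runtime so it can be exercised anywhere.
interface SimpleResponse {
  status: number;
  body: string;
}

function handle(method: string, pathname: string): SimpleResponse {
  if (method !== "GET") {
    return { status: 405, body: "Method Not Allowed" };
  }
  if (pathname === "/") {
    return { status: 200, body: "Hello from the edge" };
  }
  return { status: 404, body: "Not Found" };
}

// In a Worker module, the logic would be wired to the runtime roughly like this:
//
// export default {
//   async fetch(request: Request): Promise<Response> {
//     const { status, body } = handle(request.method, new URL(request.url).pathname);
//     return new Response(body, { status });
//   },
// };

console.log(handle("GET", "/").status); // 200
```

There is no server to provision in this sketch: the developer writes only the handler, and the platform decides where it runs.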

The significant free tier for these developer products is a direct echo of their early strategy, aiming to attract a massive developer community and foster adoption. This community, in turn, can become a powerful engine for enterprise sales as these developers move into larger organizations.

The AI frontier is where this strategy becomes particularly potent. Cloudflare's network is inherently suited to handle the massive data flows and low-latency requirements of AI inference. By retrofitting their existing hardware with GPUs and offering Workers AI, they are positioning themselves as a critical infrastructure provider for the AI revolution. This is a departure from their historical model of leveraging existing hardware for all services; AI inference requires specialized hardware, introducing a new layer of capital expenditure and ROI calculation.

The risk here is that this is a newer, more concentrated bet. While their other services benefit from the diversified ROI of their global network, the GPU investment's return is more directly tied to the success of AI inference. However, the potential payoff is immense, as they aim to become the default infrastructure for AI applications, leveraging their existing network and developer ecosystem to accelerate adoption.

Key Action Items

- Immediate Actions (Next 1-3 Months):

  - Analyze Cloudflare's Network Advantage: For businesses relying on web presence or internal security, evaluate how Cloudflare's global network could improve latency and security compared to current solutions.

  - Explore Developer Platform Free Tiers: Developers should experiment with Cloudflare Workers and other developer tools to understand their capabilities for new projects or internal tools.

  - Review AI Strategy Alignment: For companies integrating AI, assess if Cloudflare's edge inference capabilities align with performance and cost objectives, especially for applications requiring low latency.

- Short-Term Investments (Next 3-9 Months):

  - Pilot "Pool of Funds" for Enterprise Adoption: Large organizations should consider piloting Cloudflare's "pool of funds" to encourage cross-product adoption and experimentation with newer services like internal security or developer tools.

  - Evaluate Partner Ecosystem Integration: Businesses working with channel partners for cybersecurity should assess if those partners are integrated with Cloudflare to leverage their growing partner network.

- Longer-Term Investments (9-18+ Months):

  - Strategic Reinvestment in Infrastructure: Companies with significant internet infrastructure needs should consider Cloudflare's model of reinvesting in a unified, global network as a core strategy for defensibility and cost efficiency. This pays off in 12-18 months through reduced operational costs and enhanced performance.

  - Develop "Second Mover" Advantage Strategies: For businesses operating in competitive markets, adopt Cloudflare's approach of leveraging existing strengths to enter new, more competitive arenas, focusing on product simplicity and network integration to gain traction. This requires patience but creates lasting competitive moats.

  - Integrate AI Inference into Core Workflows: Plan for the integration of AI inference capabilities at the edge, anticipating that Cloudflare and similar providers will offer increasingly cost-effective and performant solutions, a strategy that will pay off significantly in the next 18-24 months as AI adoption accelerates.